Get Complete Project Material File(s) Now! »

A short introduction to 1-D snow avalanche dy-namic modeling

Avalanche numerical models provide a simpli cation of avalanche ow on the basis of physical conservation laws. To situate avalanche models in human history, one of the rst avalanche models was probably proposed in the 1930s [Salm, 2004]. This rst model had a dry friction coe cient (Coulomb friction) [Salm, 2004]. Then, Voellmy [1964] pro-posed a based on hydrodynamics model, that had two friction coe cients: a turbulent friction coe cient and a Coulomb-like dry coe cient . Since then, di erent analytical and numerical models have been proposed based in Coulomb or Voellmy friction laws [e.g., Perla et al., 1980, Salm et al., 1990]. Analytical (e.g., sliding block models) and numerical models (e.g., Saint-Venant proposed by Naaim et al. [2004]) have advantages and disadvantages as for example their accuracy in test site experiments, computational cost, number of parameters, etc. Also, some of them may be only capable to output velocities and runout distances1 while others can also compute ow depths. Nevertheless, avalanche modeling is still challenging, due to lack of measurements and due to avalanche complexity (e.g., ow regime transitions, snow mass balance) [Harbitz et al., 1998]. How-ever, depending on the application, analytical or numerical models (or both) might be used. A more detailed review of these models can be found in e.g., Harbitz et al. [1998], Salm [2004] and Jamieson et al. [2008].

For illustration purpose, Figure 2.1 shows a graphical simpli cation of a sliding block model. These kind of models repre- sent an avalanche as a sliding rigid body experimenting frictional forces. The model is based in the motion equation: du = g sin F ; (2.1) dt m where u = jj~ujj is the velocity norm, g is Figure 2.1: Avalanche block model. the gravity constant, is the local slope, h is the mean ow depth, m the mass and ~ F = jjF jj the friction force. For example, mg 2 Coulomb friction law F = mg cos or Voellmy friction law F = mg cos + h u could be considered in Equation (2.1).

Model input parameters

All of the aforementioned models, independently of their complexity, depend on unknown parameters that cannot be measured. More speci cally, friction parameters cannot be measured from experiments. To guide practitioners, some authors have created parame-ter guidelines. For example, Salm et al. [1990] proposed reference values for according to the avalanche characteristics (e.g., wet snow, large avalanche) and for according to the avalanche path characteristics (e.g., path local slope). Author guideline is called the Swiss guideline. For example, Christen et al. [2010b] applied the guideline recommenda-tions for back calculation of the In den Arlen2 avalanche using the 2-D RAMMS model. However, parameter in situ calibration is still necessary for a better comprehension of avalanche models. Deterministic inversion, which consists in writing friction coe cients as a function of observed quantities, has been employed to calibrate parameters from in situ experiments. In the work of Dent et al. [1998], friction coe cients were computed by measuring normal forces. Ancey et al. [2003] proposed a deterministic inversion framework to retrieve friction parameters from runout distances and the sum of snow fall amount during the three days previous to the avalanche event. Indeed, the snow fall during the days preceding the avalanche event is a key meteorological parameter in avalanche phe-nomena [Ancey et al., 2003]. In Ancey and Meunier [2004] a back analysis was performed to infer friction coe cients from velocities. Naaim et al. [2004] performed a back analysis to model validation, also the authors found an empirical relationship between the dry fric-tion coe cient and runout distances. Recently, Oda et al. [2020] performed small scale 2Avalanche occurred in Switzerland the 27 January 1968. The event destroyed a farmhouse and killed four people.

A short introduction to 1-D snow avalanche dynamic modeling

experiments using a rotating drum device to measure ow velocities and ow depths in order to perform a deterministic inversion to retrieve friction parameters. Nevertheless, parameter point estimation does not take into account parameter uncertainty which is important for uncertainty propagation.

One of the principal di culties of parameter calibration in avalanche problems is the low number of observations recorded. Therefore, more complex schemes of calibration based on Bayesian inference have been proposed. For example, Ancey [2005], Straub and Gr^et-Regamey [2006] and Eckert et al. [2010] used runout distances to model calibration. Gauer et al. [2009] used the velocity at the lower avalanche track and runout distances to model calibration. Also, procedures based on optimization have been proposed. For example, Naaim et al. [2013] performed the calibration of friction parameters using runout distances from historical avalanche events. Regarding 3-D avalanche model calibration, in Fischer et al. [2014], the 3-D SamosAT (Snow Avalanche Modeling and Simulation Advanced technology) model is calibrated using ow depths and velocities deduced from Doppler radar measurements. Fischer et al. [2015] proposed a multivariate parameter optimization to calibrate SamosAT.

In the last two decades, the interest in Bayesian approaches is increasing in the avalanche community. To demonstrate this assertion, we cite some examples: the al-ready described works of Ancey [2005], Straub and Gr^et-Regamey [2006], Gauer et al. [2009], Eckert et al. [2010], Heredia et al. [2020] and other recent works, Schlappy et al. [2014] reconstructed runout distances from dendropomorphic historical data, then authors applied a Bayesian approach based on Eckert et al. [2010] to model calibration and to estimate return periods. Recently, Fischer et al. [2020] provided a comprehensive tool for snow avalanche simulation using Bayesian inference which is implemented in the open source software r.avaflow. The software r.avaflow was developed by Mergili et al. [2017].

Sensitivity analyses for avalanche models

As mentioned before, avalanche models depend on poorly known parameters (e.g., friction parameters and initial conditions corresponding to the avalanche released). Authors have studied the parameter sensitivity, for example, Barbolini and Savi [2001] used a Monte Carlo approach to analyze the sensitivity for the runout distances and impact pressures outputs of the VARA model. Authors found two interesting results: (i) for relatively frequent events, friction parameters and release depth and length are important for runout distances and, (ii) for extreme rare events, the friction coe cient has a higher importance than the two other parameters. Borstad and McClung [2009] developed a sensitivity analysis of an avalanche model with Coulomb friction law. Their results demonstrated that the friction coe cient is important for the runout positions. Buhler et al. [2011] studied the in uence of the digital elevation model resolution (DEM) on the outputs of the RAMMS model. They found that DEM resolution and quality is critical for modeling and a spatial resolution of around 25m is su cient for large-scale modeling. Fischer et al. [2014] performed a sensitivity analysis for RAMMS model by estimating the Spearman rank correlation between parameters and outputs. Buhler et al. [2018] made a sensitivity analysis of runout distance, volume and avalanche velocity in the runout zone with respect to the initial volume in the RAMMS model. They found that the released volume has a large e ect in runout distance and avalanche velocity. However, even if all the previous works provide useful information about avalanche models, none of them has used variance-based global sensitivity measures to quantify input parameter importance.

Few words about land-use planning

Finally, why avalanche input parameters and thus, model outputs are important? The reason is that model outputs (e.g., avalanche speed and runout distance) might be used in land use planning which typically consists into dividing a region in zones according to their potential risk [Salm, 2004]. In some countries (e.g., Austria, France, Switzerland), this assignation is done by colors: green (or white in France [Eckert et al., 2018b]), blue and red. The colors assignation are sometimes determined by runout return periods. A runout return period is the mean time in which a given runout distance is reached or exceeded at a given path’s position [Eckert et al., 2007, 2008b, Schlappy et al., 2014]. For example in Switzerland, red zones have return periods lower than 300 years and avalanche pressures can exceed 30kNm 2 [WSL]. Constructions are forbidden in red zone [WSL]. Therefore, parameter estimation and associated uncertainty might be useful for computing more accurate avalanche simulations and as a consequence, for a better land use planning. The importance of avalanche model calibration and current issues for hazard mapping are nicely described in Jamieson et al. [2008].

To summarize, the two main axes of this thesis are model calibration using Bayesian inference and global sensitivity analysis applied to avalanche models. These two subjects are explained in Section 2.2 and Section 2.3.

Model calibration using Bayesian inference

Model calibration consists in nding the most likely combination(s) of parameters given some observations. We are not only interested in determining an optimal single combi-nation of parameters, but also in the uncertainty associated to it [Kuczera and Parent, 1998]. Bayesian calibration o ers a solution to this problem, because it allows to assess parameter uncertainty by deriving the posterior distribution of parameters from observa-tions [Kennedy and O’Hagan, 2001]. Moreover, advantages of Bayesian calibration are twofold: (i) if model is used for prediction, all the sources of uncertainty are included, and (ii) since no model is perfect, the approach cope for any inadequacy between observations and model [Kennedy and O’Hagan, 2001].

Let us denote f a prediction model for a given phenomenon (e.g., an avalanche model which predicts the avalanche runout distance), f depends on parameters 2 Rp and some forcing variables x 2 Rd. Let us denote by yobs a vector of n noisy observations: yobsi = f( ; x) + i; 8i 2 f1; : : : ; ng (2.2).

where models observation errors. For example, yobs could be a set of observed runout distances to calibrate an avalanche model. Note that within this formulation, we do not make distinction between the observation errors and the model inadequacy. The distinction has been studied in, e.g., Kennedy and O’Hagan [2001], Carmassi et al. [2018]. In a statistical framework to solve the inverse problem of retrieving the model parameters , assumptions of the distribution of the residuals or errors might be re-quired. For example, they could be assumed i.i.d. centered Gaussian random variables i N (0; 2) 8i 2 f1; : : : ; ng. Let us denote the residual parameters. In our example, = f 2g. An advantage of Bayesian inference is that practitioner experience or beliefs could be plugged into the inference by the prior distribution denoted ( ; ). Then, the posterior distribution is: j obs L(yobsj ; ; x) ( ; )d d ( ; y ; x) = R L(yobsj ; ; x) ( ; ) (2.3).

where L(yobsj ; ; x) is the likelihood of the observations which can be obtained from the error distribution. It is usually di cult to compute L(yobsj ; ; x) ( ; )d d , ex-posterior distribution, which is achieved R cept when there is an analytical expression of the by using conjugate priors. When there is no explicit posterior formulation, Monte Carlo Markov chain (MCMC) methods can be used to sample from the posterior distribution. MCMC methods consist into constructing a stationary and ergodic Markov chain that converges, under mild conditions, to the posterior distribution ( ; jyobs; x).

Among the MCMC methods, there is the Metropolis-Hasting algorithm [Metropolis et al., 1953, Hastings, 1970] which constructs sequentially a Markov chain by applying acceptance-rejection rules de ned by a proposal distribution q. The convergence of the MH algorithm depends on q [Robert, 2015] and q is usually assumed as multinormal distribution.

If there is an analytical expression of all the parameter conditional distributions ( ‘jyobs; x; 1; : : : ‘ 1; ‘+1; : : : d), Gibbs sampling, a particular instance of MH algo-rithm, could be applied. The Hamiltonian Monte Carlo [Duane et al., 1987] algorithm, also known as hybrid Monte Carlo, has shown good results in applications. Nevertheless, the gradient of the distribution functions are required and their computations might be expensive. When observations are time series, errors could be autocorrelated or/and su er from heteroscedasticity. In the calibration of hydrological models, authors have studied the importance of considering the correlations and/or heteroscedasticity in residuals [e.g., Kuczera, 1983, Kuczera and Parent, 1998, Evin et al., 2014]. Indeed, they found that if autocorrelation is not included parameter estimation could be biased. In Chapter 3, given an avalanche velocity measured time series, we analyze the impact of considering or not the error autocorrelation in a Bayesian inference calibration framework. A block model based on the Voellmy friction law is considered.

Global sensitivity analysis

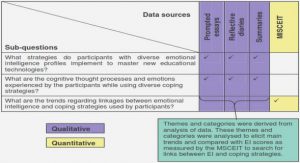

Saltelli et al. [2004] de ned precisely the sensitivity analysis (SA) as \the study of how uncertainty in the output of a model (numerical or otherwise) can be apportioned to di erent sources of uncertainty in the model input »3. Under a SA framework, we consider a deterministic model f (computational code, black box model, etc) that depends on d inputs X = (X1; : : : ; Xd). First for simplicity, we consider the output Y as scalar Y = f(X). The inputs are modeled by random variables on a probability space ( ; F; P). Thus, the output Y is also a random variable ( ; F; P) because it depends on the random vector X. Let us denote (x; y) a random sample from (X; Y ). Figure 2.2 illustrates the 3 This de nition is, in general, widely accepted in the SA community.

SA framework. The following exposition is inspired by the works of Iooss and Lema^tre [2015] and Iooss and Saltelli [2017].

SA is divided in two classes: local and global methods. Local methods consist in study-ing the impact of small input perturbations around certain nominal values on the output.

An example of local methods are partial derivatives, they consist into calculating @f at @xi a given point x0 = (x01; : : : ; x0d) in the domain of X. Local methods are sometimes used when model computational cost or number of parameters is high. For example, Castaings et al. [2009] proposed the adjoint state method to compute e ciently the derivatives of the output of a distributed hydrological model with respect to parameter inputs to perform local SA. Castillo et al. [2004] proposed a local SA method based on the duality property of mathematical programming to compute partial derivatives. In contrast to local meth-ods, global methods explore all the input domain and not only variations around nominal values.

Global methods provide a more convincing tool for highly nonlinear models often en-countered in environmental sciences. Indeed, global methods provide an exploration of the whole input space [Saltelli et al., 2008, Iooss and Lema^tre, 2015]. Nonetheless, there is a price to pay to use global sensitivity methods in terms of number of model simula-tions. But there are some ways to reduce computational costs which will be explained in Subsection 2.3.5. Tang et al. [2006] compared local and global methods applied to a watershed model and they concluded that global methods yield robust results. [Saltelli et al., 2008, page 11] recommended strongly to use global methods rather than local ones. Hereafter, we focus on global sensitivity analysis methods.

Global sensitivity analysis (GSA) consists into identifying the input parameters that contribute the most (in a sense to be precised) to a given quantity of interest de ned from the output of the model [Iooss and Lema^tre, 2015]. The objectives of GSA are numerous: they are to provide a better comprehension of the numerical model, to iden-tify non in uential parameters to set them to nominal values, among others [Iooss and Lema^tre, 2015]. To guide practitioners, Saltelli et al. [2004] proposed four settings of GSA objectives: factor prioritization, factor xing, variance cutting and factor mapping. Before performing a GSA, it is important to have clear the objectives of the study [Iooss and Lema^tre, 2015] and also, to select the adequate GSA method to avoid misinterpre-tations [Saltelli et al., 2019]. Then, parameter inputs might be ordered according to their importance or contributions by computing sensitivity measures.

GSA has been widely applied in many elds as for example, in environmental sciences and engineering. We cite some recent works in di erent application domains, Sarrazin et al. [2016] proposed a guide to apply GSA in environmental models, more particularly applied to hydrological models. Mavromatidis et al. [2018] developed a GSA for dis-tributed energy systems. These models are important for an e cient and sustainable energy future. Prieur et al. [2019] performed a GSA for a marine biogeochemical model with a high number of inputs (74 input parameters).

The GSA measures may be divided into: screening approaches, variance-based mea-sures, moment free measures and, other measures. In the manuscript, we only focus on variance based measures which are explained in Subsection 2.3.2. Even if we do not use the other three GSA measures, they are brie y explained in Subsections 2.3.1, 2.3.3 and, 2.3.4 to provide a general overview of GSA measures.

Screening approaches

Screening approaches consist in the discretization of inputs X in levels [Iooss and Lema^tre, 2015]. If the model has a large number of inputs d 1, these sensitivity measures could help the user to identify non in uential input parameters. Therefore, screening methods could be a preliminary step before computing other, in general more expensive, GSA measures when input dimension is high. One of the most popular screening methods is the OAT (one at a time) Morris method [Morris, 1991].

Here we describe brie y the Morris method. The d random inputs X are supposed independent and are modeled with uniform distributions on [0; 1], i.e. Xi U[0; 1] for all i 2 f1; : : : ; dg. The assumption of uniformity on [0; 1] is not constraining as for Xi with cumulative distribution function (cdf) F , we can apply the inverse transform sampling to come back to that setting.

The rectangular domain [0; 1] of each input is divided in a p-level grid, then each xi may take values from f0; 1=(p 1); 2=(p 1); : : : ; 1g. Let = f0; 1=(p 1); 2=(p 1); : : : ; 1gd.

Let be a predetermined multiple of 1=(p 1). For a given value x 2 !, the elementary e ect of the input Xi is de ned according to: di(x) = f(x1; x2; : : : ; xi 1; xi + ; xi+1; : : : ; xd) f(x1; : : : ; xd).

Table of contents :

1 Introduction

1.1 Context

1.2 Objectives

1.3 Organization of the manuscript

2 Avalanche models, Bayesian inference and global sensitivity analysis

2.1 A short introduction to 1-D snow avalanche dynamic modeling

2.1.1 Model input parameters

2.1.2 Sensitivity analyses for avalanche models

2.1.3 Few words about land-use planning

2.2 Model calibration using Bayesian inference

2.3 Global sensitivity analysis

2.3.1 Screening approaches

2.3.2 Variance-based measures

2.3.3 Moment-free measures

2.3.4 Other measures

2.3.5 A little bit about metamodels

2.3.6 Multivariate and/or functional model outputs

2.4 Dimension reduction techniques for functional outputs

Part I Model calibration using Bayesian inference

3 Bayesian calibration of an avalanche model

3.1 Introduction

3.2 Avalanche model calibration principle

3.2.1 Sliding block propagation model

3.2.2 Statistical model formulation

3.2.3 Bayesian framework

3.2.4 Metropolis-Hastings algorithm

3.2.5 Model selection using Bayes Factor

3.3 Application data

3.3.1 Avalanche data from the Lautaret test-site

3.3.2 Synthetic data generation

3.4 Application, results and discussion

3.4.1 The avalanche

3.4.2 Synthetic data

3.5 Conclusion and outlook

3.6 Appendix A: Likelihood of an AR(1) process

3.7 Appendix B: Numerical evaluation of the Bayes Factor

3.8 Appendix C: Prior sensitivity analysis

3.9 Appendix D: Images used in the photogrammetric process

Part II Global sensitivity analysis

4 Nonparametric estimation of aggregated Sobol’ indices

4.1 Introduction

4.2 Aggregated Sobol’ indices

4.2.1 Nonparametric estimation procedure

4.2.2 Bias correction and bandwidth selection

4.2.3 Dimension reduction based on principal component analysis

4.3 Test cases

4.3.1 Toy functions

4.3.2 A functional example: the mass-spring model

4.4 Application: the avalanche model

4.4.1 Sobol’ indices

4.4.2 Scalar Sobol’ indices

4.4.3 Aggregated Sobol’ indices

4.5 Conclusions and perspectives

4.6 Appendix

5 Aggregated Shapley eects

5.1 Introduction

5.2 Aggregated Shapley eects

5.2.1 Denition

5.2.2 Properties

5.3 Estimation procedure for scalar and aggregated Shapley eects

5.3.1 Given data estimator of scalar Shapley eects proposed by [Broto et al., 2020]

5.3.2 Estimator of the aggregated Shapley eects

5.3.3 Dimension reduction: functional principal component analysis

5.4 Bootstrap condence intervals with percentile bias correction

5.5 Test cases

5.5.1 Multivariate linear Gaussian model in dimension d = 2

5.5.2 Mass-spring model

5.6 Snow avalanche modeling

5.6.1 Model

5.6.2 Scenario 1

5.6.3 Scenario 2

5.7 Conclusions and perspectives

5.8 Comparison between the uniform allocation Nu = Ntot=(2d 2) , and the one introduced in [Broto et al., 2020] N u = j Ntot d juj 1 (d 1)1 k , ; u ( f1; : : : ; dg

5.9 Comparison between dierent values of NI

5.10 Estimation of Shapley eects using one or two samples

5.11 Functional principal components and Shapley eects for scenario 2

6 Conclusions and perspectives

6.1 Conclusions

6.2 Perspectives

6.2.1 For calibration using Bayesian inference

6.2.2 For the Global sensitivity analysis

Appendices

A Given data estimation methods for Sobol’ indices

A.1 Given data method

A.2 The spectral approach EASI

A.3 Test cases

A.3.1 Ishigami function

A.3.2 Bartley et al. function

B Correlations between Shapley eects and local slope

Bibliography