
Hydrograph separation methods

Hydrograph separation is an age-old topic in hydrology (Hall, 1968). It decomposes streamflow into two or more components (Mei and Anagnostou, 2015). The most commonly used scheme is the two-component one, in which streamflow consists of direct flow (i.e. quick surface or subsurface flow) and baseflow (i.e. flow that comes from groundwater storage or another delayed source) (Hall, 1968; Tallaksen, 1995). As shown in Figure 10, classical hydrograph separation is defined in terms of the delay or lag times of the components, without implying anything about their origin (Hall, 1968).

Methods that are not based on tracers rely mainly on the study of recession curves. The flood recession curve extends from the peak flow rate onward (Figure 10). The end of stormflow (i.e. quickflow or direct runoff) and the return to groundwater-derived flow (baseflow) is often taken as the point of inflection of the recession limb (Figure 10). The recession limb represents the withdrawal of water from the storage built up in the basin during the earlier phases of the hydrograph. Following Boussinesq (1877), Maillet (1905) and Horton (1933), the groundwater-derived flow (baseflow) can be expressed by the equation of a single linear reservoir as follows:

$Q(t) = Q_0 \, e^{-\alpha t}$ Eq. (9)

Here, $Q(t)$ is the streamflow at time $t$, $Q_0$ is the initial discharge, and $\alpha$ is a constant. The term $e^{-\alpha}$ is normally replaced by $K$, called the recession constant, which gives:

$Q(t) = Q_0 \, K^{t}$ Eq. (10)
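As a minimal sketch, the snippet below evaluates the single linear reservoir recession in both forms, with the exponential coefficient and with the recession constant K. The function names and the numerical values (initial discharge, coefficient, time steps) are illustrative assumptions and are not taken from the thesis.

```python
import math

def recession_exp(q0, alpha, t):
    """Baseflow recession Q(t) = Q0 * exp(-alpha * t), as in Eq. (9)."""
    return q0 * math.exp(-alpha * t)

def recession_k(q0, k, t):
    """Same recession written with the recession constant K: Q(t) = Q0 * K**t, Eq. (10)."""
    return q0 * k ** t

# Illustrative values only (assumed, not from the source)
q0 = 10.0             # initial discharge, e.g. in m3/s
alpha = 0.05          # recession coefficient, e.g. in 1/day
k = math.exp(-alpha)  # recession constant K = exp(-alpha)

for t in (0, 1, 5, 10):
    # Both formulations should give the same discharge at every time step
    print(t, recession_exp(q0, alpha, t), recession_k(q0, k, t))
```

Both functions describe the same curve; the second form is convenient when K is estimated directly from successive discharge values one time step apart.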

Eigenvector analysis

Eigenvector analysis calculates temporal correlations from a time series of chemical concentrations. The eigenvectors of this correlation matrix are determined, and a subset is rotated to maximize or minimize the correlation of each factor with each measured species. The factors are interpreted as end-member profiles by comparing the factor loadings with end-member measurements. Several normalization and rotation schemes have been used, but their physical significance has not been established (Pierret et al., 2018). The most widely used methods are Principal Component Analysis (PCA) and Factor Analysis (FA), the latter called Positive Matrix Factorization (PMF) by Paatero and Tapper (1994).

Principal Component Analysis (PCA)

Principal Component Analysis (PCA) extracts the eigenvalues and eigenvectors from the covariance matrix of the original variables. The principal components (PCs) are uncorrelated (orthogonal) variables obtained by multiplying the original correlated variables by the eigenvectors, which are lists of coefficients (loadings or weightings). The PCs are thus weighted linear combinations of the original variables. PCA identifies the most meaningful parameters describing the whole data set, which allows data reduction with minimum loss of the original information (Singh et al., 2004; Vega et al., 1998). It is a powerful pattern-recognition technique that explains the variance of a large set of inter-correlated variables by transforming them into a smaller set of independent (uncorrelated) variables, the principal components (Singh et al., 2004).
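The steps described above can be sketched with NumPy: centre the variables, build their covariance matrix, extract eigenvalues and eigenvectors, and project the data onto the eigenvectors. The function name `pca` and the synthetic data are assumptions made for illustration; this is not the code used in the thesis.

```python
import numpy as np

def pca(X):
    """Return eigenvalues, eigenvectors (loadings) and PC scores of X (samples x variables)."""
    Xc = X - X.mean(axis=0)                 # centre each variable
    cov = np.cov(Xc, rowvar=False)          # covariance matrix of the variables
    eigvals, eigvecs = np.linalg.eigh(cov)  # eigh: symmetric input, ascending eigenvalues
    order = np.argsort(eigvals)[::-1]       # reorder by decreasing explained variance
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    scores = Xc @ eigvecs                   # PCs: weighted linear combinations of the variables
    return eigvals, eigvecs, scores

# Synthetic example (assumed data): 4 correlated "concentrations" driven by 2 hidden factors
rng = np.random.default_rng(0)
latent = rng.normal(size=(200, 2))
X = latent @ rng.normal(size=(2, 4)) + 0.05 * rng.normal(size=(200, 4))

eigvals, loadings, scores = pca(X)
explained = eigvals / eigvals.sum()         # fraction of variance carried by each PC
print(explained)
```

In this synthetic case almost all of the variance is carried by the first two components, which is the data-reduction property the text refers to.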

However, applying the method to practical environmental data requires the error estimates for the data to be chosen judiciously, so that they reflect the quality and reliability of the individual data points. Albek (1999) discusses this in detail for a typical environmental problem with outliers and other problematic data points. Moreover, PCA can produce negative values for almost all factors, although a sample cannot, for example, contain a negative amount of a basic constituent. Even after transforming (‘rotating’) the factors to eliminate the negative entries, some negative values usually remain (Paatero and Tapper, 1994).

Factor analysis (FA) or positive matrix factorization (PMF)

The term ‘factor analysis’ (FA) is ambiguous. It is often used to mean principal component analysis (PCA): singular value decomposition (SVD), selection of the dimension, and rotations. Statisticians often remark that this is not FA at all according to their definition, in which FA means the investigation of correlations of random variables. This leads to a non-linear computation that cannot be done with SVD. To avoid the ambiguous term ‘FA’, Paatero and Tapper (1994) introduced the name ‘positive matrix factorization’ (PMF).

Although widely used in the air pollution field for end-member apportionment of particulate matter (see the detailed review of existing methods in Popoola et al., 2018), the PMF method has only recently been applied in hydrochemistry (Capozzi et al., 2018; Haji Gholizadeh et al., 2016; Zanotti et al., 2019). PMF uses statistical techniques to reduce the data to meaningful terms in order to identify the chemical end-members and estimate their contributions.

The form of the PMF model most widely used to analyze chemical network data is the bilinear model: two matrices, G and F, reproduce the variability of the dataset as a linear combination of a set of constant factor profiles and their contributions to each sample (Reff et al., 2007).

The main advantages of this method over PCA are that (i) it takes into account the analytical uncertainties often associated with measurements of environmental samples and (ii) it forces all values in the solution profiles and contributions to be positive, which can lead to a more realistic representation (Reff et al., 2007; Zanotti et al., 2019).
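The two properties listed above (uncertainty weighting and non-negativity) can be illustrated with a small uncertainty-weighted non-negative matrix factorization. Note that this is a stand-in technique using multiplicative updates, not the solver of Paatero and Tapper (1994); the function name `weighted_nmf`, the synthetic data and the uncertainty model are all assumptions made for this sketch.

```python
import numpy as np

def weighted_nmf(X, sigma, n_factors, n_iter=500, seed=0):
    """Factorise X (samples x species) as G @ F with G, F >= 0, reducing
    the weighted misfit Q = sum(((X - G @ F) / sigma) ** 2)."""
    rng = np.random.default_rng(seed)
    n, m = X.shape
    G = rng.uniform(0.1, 1.0, size=(n, n_factors))  # contributions of each factor per sample
    F = rng.uniform(0.1, 1.0, size=(n_factors, m))  # factor (end-member) profiles
    W = 1.0 / sigma ** 2                            # weights derived from the uncertainties
    eps = 1e-12                                     # guards against division by zero
    for _ in range(n_iter):
        # Multiplicative updates keep G and F non-negative when X >= 0
        G *= ((W * X) @ F.T) / ((W * (G @ F)) @ F.T + eps)
        F *= (G.T @ (W * X)) / (G.T @ (W * (G @ F)) + eps)
    q = np.sum(((X - G @ F) / sigma) ** 2)
    return G, F, q

# Synthetic data (assumed): 50 samples, 5 species, 2 underlying factors
rng = np.random.default_rng(1)
G_true = rng.uniform(0.0, 1.0, size=(50, 2))
F_true = rng.uniform(0.0, 10.0, size=(2, 5))
X = G_true @ F_true
sigma = 0.05 * X + 0.01            # assumed analytical uncertainty of each measurement

G, F, q = weighted_nmf(X, sigma, n_factors=2)
print(q, G.min(), F.min())
```

Measurements with a large uncertainty receive a small weight in Q, so they constrain the factor profiles less than well-measured values; PCA has no equivalent mechanism.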

End member mixing analysis (EMMA)

End member mixing analysis (EMMA) was developed from the work of Christophersen et al. (1990), Christophersen and Hooper (1992) and Hooper (2003), and was modified by Liu et al. (2004; 2008) and Barthold et al. (2011). It is a hydrological mixing model whose principal aim is to identify the minimum number of end-members required to explain the variability of measured concentrations and their co-linearity (Tubau et al., 2014).

EMMA is based on an analytical approach that solves an over-determined set of equations on the basis of a least squares procedure (Barthold et al., 2011). According to Barthold et al. (2011), EMMA uses the following assumptions:

(i) the stream water is a mixture of source solutions with a fixed composition.

(ii) the mixing process is linear and relies completely on hydrodynamic mixing.

(iii) the solutes used as tracers are conservative.

(iv) the source solutions have extreme concentrations.

EMMA reduces the dimensionality of the analysis, because visualizing and interpreting a large number of chemical analyses of many species is difficult. The problem is much easier to handle if, instead of studying a dataset with as many dimensions as there are species, it suffices to analyze its projection onto a space of much smaller dimension (say 2 or 3) (Pelizardi et al., 2017). EMMA aims to identify the minimum number of end-members required to explain the variability of measured concentrations in time or space. The explained variance and the contribution of each species to the mixture are obtained from the analysis of the eigenvalues (Tubau et al., 2014).
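The least-squares step behind a mixing analysis can be sketched as follows, under the assumptions listed above (fixed end-member compositions, linear mixing, conservative tracers). This simplified example works directly in concentration space and skips the projection onto a reduced eigenvector space; the function name, the end-member labels and all concentrations are hypothetical.

```python
import numpy as np

def mixing_fractions(sample, end_members):
    """Least-squares mixing fractions for one stream sample.
    sample: (n_tracers,), end_members: (n_end_members, n_tracers)."""
    # One equation per tracer, plus the mass-balance equation sum(f) = 1.
    # The constraint is added as an extra row, so it is honoured in the
    # least-squares sense rather than enforced exactly.
    A = np.vstack([end_members.T, np.ones(end_members.shape[0])])
    b = np.append(sample, 1.0)
    f, *_ = np.linalg.lstsq(A, b, rcond=None)
    return f

# Hypothetical end-member concentrations (rows) for 4 conservative tracers (columns)
end_members = np.array([
    [10.0, 2.0, 30.0, 5.0],   # hypothetical end-member A (e.g. groundwater)
    [ 1.0, 8.0,  5.0, 1.0],   # hypothetical end-member B (e.g. rainfall)
    [ 4.0, 4.0, 60.0, 9.0],   # hypothetical end-member C (e.g. soil water)
])
true_f = np.array([0.5, 0.3, 0.2])
sample = true_f @ end_members   # synthetic stream sample built by exact linear mixing

print(mixing_fractions(sample, end_members))
```

With four tracers and three end-members the system is over-determined (five equations, three unknowns). With real data the fractions are not guaranteed to lie between 0 and 1, which is one reason the choice of end-members matters.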

Study site – The Oracle-Orgeval observatory

The Orgeval catchment is a small sub-catchment of the Oracle-Orgeval observatory, located 70 km east of Paris, France (Figure 16). Located in the department of Seine-et-Marne (77), the Orgeval catchment is a sub-basin of the Grand Morin watershed, a main tributary of the Marne River. The Grand Morin watershed has a significant quantitative and qualitative impact on the Seine River and the Parisian conurbation. To understand and monitor the hydrological and chemical processes that lead to floods and eutrophication of the Seine River, Irstea set up an observatory on the Orgeval catchment in 1962. The relatively small extent of the Oracle-Orgeval observatory (104 km²) gives it homogeneous natural conditions, so that it can be considered representative at the regional scale.

Table of contents:

General introduction

1 Context

2 Scientific questions of this thesis

3 Structure of the thesis

Part I A brief review of concentration-discharge (C-Q) relationships

1 Introduction

2 Non-univocal concentration-discharge (C-Q) relationship (hysteresis)

3 Concentration-discharge (C-Q) relationship models

3.1 One-component models

3.1.1 Power-law model

3.1.2 Hyperbolic model

3.2 N-component models

3.2.1 Mixing models

4 Hydrograph separation methods

4.1 Non tracers-based method

4.1.1 Recession curves analysis methods

4.1.2 Filtering methods

4.2 Tracers-based methods

4.2.1 Isotopic tracers

4.2.2 Geochemical tracers

5 Quantification of the end-members

5.1 Eigenvector analysis

5.1.1 Principal Component Analysis (PCA)

5.1.2 Factor analysis (FA) or positive matrix factorization (PMF)

5.2 Classification analysis

5.2.1 Cluster analysis (CA)

5.2.2 Discriminant analysis (DA)

5.3 Mixed analysis

5.3.1 End member mixing analysis (EMMA)

5.3.2 MIX method

6 Study site – The Oracle-Orgeval observatory

6.1 Characteristics of Oracle-Orgeval observatory

6.1.1 Location and brief history

6.1.2 Topography and Climate

6.1.3 Geology and hydrogeology

6.1.4 Pedology

6.1.5 Land use and hydro-agricultural infrastructures

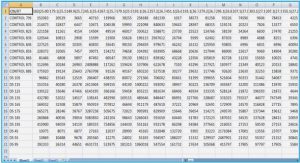

6.2 Hydrological measurements of Oracle-Orgeval observatory

6.3 Chemical measurements of Oracle-Orgeval observatory

6.3.1 Long-term monitoring

6.3.2 River Lab. and high-frequency measurements

6.3.3 Typology of high-frequency measurements of ions concentrations during flow events

6.4 Hydrological and chemical behavior of the catchment

7 References

Part II Revisiting the concentration-discharge (C-Q) relationships

Chapter 1: Revisiting the one-component models – power law model

Technical Note: A two-sided affine power scaling relationship to represent the concentration–discharge relationship

1 Introduction

2 Tested dataset

3 Mathematical formulations

3.1 Classic one-sided power scaling relationship (power law)

3.2 Limits of the power law

3.3 A two-sided affine power scaling relationship as a progressive alternative to the power law

3.4 Choosing an appropriate transformation for different ion species (calibration mode)

4 Numerical identification of the parameters for the 2S-APS relationship

5 Results

5.1 Results in calibration mode

5.2 Results in validation mode

6 Conclusion

7 Appendix 1 – Description of the River Lab

8 Appendix 2 – Graphical representation of the numerical identification of parameters

9 References

Chapter 2: Revisiting the n-component models – mixing model

Hydrograph separation issue using high-frequency chemical measurements

1 Introduction

1.1 Hydrograph separation: an age-old issue

1.2 A variety of solutions proposed for hydrograph separation

1.3 Joining the strengths of hydrological and chemical approaches for hydrograph separation

1.4 Using high-frequency chemical measurements to revisit the hydrograph separation issue

2 Methodology

2.1 Study site and data set

2.2 Hydrograph separation methods

2.2.1 Lyne – Hollick method (LH)

2.2.2 Eckhardt method (ECK)

2.2.3 Hydrological recession time constant of the catchment

2.2.4 MB method

2.3 Comparison of the two RDF methods through a hydrological and a chemical calibration

2.3.1 The hydrological recession time constant (τ) from hydrological MRC approach

2.3.2 Sensitivity of the RDF methods to the hydrological recession time constant of the catchment (τ)

2.3.3 The hydrological recession time constant of the catchment (τ) from MB approach

2.4 Comparison between the components 𝑪𝒃𝒋 and 𝑪𝒒𝒋 obtained from the optimal hydrograph separation and the field chemical dataset.

3 Results and Discussion

3.1 Hydrological approach to calculate the hydrological recession time constant of the catchment (τ)

3.2 Baseflow exploration from RDF methods with hydrological recession time constant (τ) values

3.3 MB approach to find the optimal hydrological recession time constant

3.4 Comparison between the components 𝑪𝒃𝒋 and 𝑪𝒒𝒋 obtained from the optimal hydrograph separation and the field chemical dataset.

4 Conclusions

5 References

Chapter 3: Combining the one- and n-component models

Combining concentration-discharge relationships with mixing models

1 Introduction

2 Procedure for combining mixing models and C-Q relationships

2.1 Case 1: chemostatic components (bb = bq = 0)

2.2 Case 2: single 2S-APS relationship (ab = aq = a and bb = bq = b)

2.3 Case 3: General case (a and b are different)

3 Application of the combining model

3.1 Study site and datasets

3.2 Methodology

4 Results and discussion

4.1 Identification of the parameters and overall performance of the models

4.2 Performances for selected storm events

5 Conclusions

6 References

Chapter 4: Identification and quantification of the end-members

Identification of potential end members and their apportionment from downstream high-frequency chemical data

1 Introduction

1.1 Formulation of the problem and main resolution techniques

1.2 Identification and contribution of end members methods

1.2.1 Tracer mass balance methods (TMB)

1.2.2 Multivariate statistical methods (MS)

1.2.3 Principal Component Analysis (PCA) and End member mixing analysis (EMMA)

1.2.4 Positive Matrix Factorization method (PMF)

1.2.5 MIX method

1.3 Scope of this paper

2 Material and method

2.1 Study site

2.2 Data set processing

2.3 Methodology

2.3.1 Optimization function

2.3.2 Sensitivity analysis of end members

3 Results and discussion

3.1 Relation between the criterion VR and A/B ratio

3.2 Average monthly concentrations of the potential end-members and their respective apportionment

3.3 Sensitivity analysis of end members

3.4 Potential end-members to identify observed evolution in a synthetic manner

3.5 Potential end-members versus pre-identified possible end-members

4 Conclusions

5 Appendix

5.1 Cluster analysis for evolution of end-members using a variable VR (0.02<VR<0.10)

5.2 Appendix-2: Summary for the month of November 2015 of: a) the initial position of the concentration with respect to the triangle of end-members. b) Position of the concentrations after their projection in the triangle. c) End-members apportionment. d) End-members apportionment multiplied by flow.

6 References

Part III Conclusions and Perspectives

1 Conclusions

1.1 Main achievements of the thesis

1.1.1 The affine power scaling relationship

1.1.2 Calibration of the Hydrograph separation

1.1.3 Combining of affine relationship and mixing model

1.1.4 Identification and quantification of potential end-members

1.2 The development of a parsimonious model

2 Perspectives