Get Complete Project Material File(s) Now! »

Graph representation of brain connectivity networks

To build a brain network, the first step consists in the identification of nodes and edges. The graph nodes are typically defined according to the specific neuro-imaging technique used to extract the brain data. For voxel-based techniques, like fMRI and PET, nodes classically correspond to regions of interests (ROIs), identified using anatomical atlas [42, 162]. For sensor-based techniques, such as EEG and MEG, nodes usually correspond to recording sensors [100]. In this latter case, brain nodes lies at the scalp level, but source-reconstruction techniques can be applied to re-define nodes on the cortex [55, 90].

Neuroimaging techniques measure in specific modalities brain regional activi-ties, without having access to their mutual relationships. Consequently, the edges’ weights have to be inferred from signals through statistical procedures. Functional connectivity (FC) estimators, described in the previous section, are typically used to quantify links in a graph setting.

Considered together, nodes and links give rise to a new type of networked data, which cannot be analysed with standard techniques but requires appropriate tools coming from network science, a research domain merging graph theory, sta-tistical mechanics and inferential modelling [2]. Network science provides a novel perspective to analyse interacting data at several level [21, 181]. The networks of traffic movements, social relationships and molecular or protein interactions are only some examples [8, 78, 151, 196] . In this scenario, a complex network is mod-elled as a graph [126]. A graph G = fV, E, Ag is defined as a set of nodes (or vertices) V with jVj = N, a set of links (or edges) E and an adjacency matrix A [126]. The Ai,j element differs from zero if there is a link e = (i, j) between the nodes i and j. At this stage, the graph is fully connected and weighted.

Widely used techniques in network domain involve thresholding strategies to reduce the number of links or to obtain a binary adjacency matrix. Reducing graph links provides advantages in improving the interpretabilty and mitigating false connections [52, 174]. The most intuitive way to proceed consists in defin-ing a threshold on the number of edges or on the functional connectivity values. This simple approach is parametric and the same analysis has to be often per-formed several times before obtaining stable results. Another possibility is to use nonparametric methods, based on the statistical or topological properties of the network [52, 189]. After thresholding, networks can be weighted or unweighted, depending on whether the links’ weights are binarized. In Fig. 2.2, we report a graphical representation of the main steps needed to estimate graph connectivity networks from time series.

Once the graph is completely defined through its nodes and edges, it is possi-ble to extract some metrics, which concisely capture graph topological properties [68]. Network properties are defined at several scales. At a local scale, which refers to the node level, we typically have measures quantifying the node impor-tance in the network. One example is the node strength which is computed as the weighted sum of the links including a node. At a meso-scale, which is de-noted as the node group level, measures of nodes’ tendency to group are defined. One example is the study of modules and communities. At the global-level, we study properties related to the information transfer in the graph, such as global-or local-efficiency [59].

Figure 2.2: Main steps to estimate graph connectivity networks from time series. The first step is definition of graph nodes (i.e. the yellow circles on the left panel). From each node, brain signals are recorded. After choosing an appropriate FC estimator, functional connectivity is computed for each pair of nodes in order to obtain an adjacency matrix. Nodes and edges, corresponding to the elements in the adjacency matrix, constitute the final brain network.

The Graph Signal Processing Framework

Even basic and intuitive concepts in classical signal processing become chal-lenging where signals are defined over a graph. One example is the translation operation, which does not have a direct meaning in the graph setting because the shift invariant property is not defined on irregular domains [163]. Also the downsampling procedure, consisting on eliminating some samples in the classical signal processing, here is tricky because of the vertex dimension [132, 182].

Another fundamental tool in signal processing is the Fourier Transform, which has been redefined as Graph Fourier transform (GFT) [173] in GSP. For the pre-sentation of this transformation, a step back is needed. After defining the edges’ weights obtaining the adjacency matrix A, we can compute the graph Laplacian, as follows:

where D is the degree matrix, which is a diagonal matrix collecting in the i-diagonal element the sum of the edges including the node i.

We identify the set of orthonormal eigenvectors fuigi=0,1,…,N 1 , where N is the number of graph vertices. fuig relates to increasingly ordered eigenvalues 0 = l0 l1 l2… lN 1 = lmax.

Laplacian eigenvectors fui g play a fundamental role in GSP. In fact they are used as signals on graphs (s) and they represent a basis for the Graph Fourier Transform. The GFT for SoG s is computed as the projection of the SoG on the Laplacian eigenvectors:

Graph Laplacian eigenvalues defined in the graph setting, have the similar no-tion of frequencies in the classical Fourier transform. Indeed, small eigenvalues correspond to eigenvectors which have small variation on connected vertices. Con-versely, eigenvectors associated to large eigenvalues rapidly vary over connected nodes [132, 141, 173].

These considerations on graph eigenvectors will be used in Chapter 4 to define the denoising algorithm.

Among the other applications, GSP has been recently leveraged in brain net-work scenarios [80]. Indeed, GSP can be applied to neuroimaging data and it pro-vides tools to simultaneously investigate brain structure, contained in the graph, and the brain functioning, contained in the SoGs [118]. Medaglia et al. in [113] applied the GSP metrics of alignment and liberality to deepen the problem of at-tention switching with fMRI data. Findings show that subjects characterized by signals aligned with white matter structure can switch attention more rapidly. The same metrics (i.e. alignement and liberality, deriving from spectral graph filtering) have been applied by Bolton et al. in [22] to predict behavioural variables, such as cognitive features, task skills and motor abilities. Specifically, authors studied the presence of temporal dynamics in the alignment and liberality. Results from the temporal investigation, have demonstrated the possibility of detecting network transitions through changes in GSP metrics.

Graph signal processing has recently been applied for brain-computer inter-face applications. Petrantonakis et al. [140] have used GSP tools to extract novel features to improve the classification performances in NIRS-based BCIs systems.

Taken together, these first results show potential in the application of GSP to brain data analysis. In fact, GSP provides complete and flexible tools to analyse interacting systems, which find application in brain behaviour investigation at several levels and in a large set of applications, such as BCIs.

Representation of Time-Varying Networked Data

Nowadays networked data are studied by different perspectives and with several innovative tools. One intrinsic property characterizing almost all the data is the time-varying evolution. Social, biological, traffic networks change their descriptors in time and investigation tools must be able to capture this dynamic behaviour [94, 152]. Concerning the brain investigation, many studies limit the analysis to static functional connectivity networks, assuming that the interactions between brain signals are stationary. Today we know the brain coupling mechanisms are time-varying processes [23, 35, 167] with a dynamic alternation of synchronizations and desynchronizations that constitutes the basis for many neuro-physiological tasks, as perception and memory [61, 81].

In order to investigate the time-varying brain functioning, the simplest strategy is to reduce the length of the time interval, considering sliding windows with possible overlap. While reducing the time windows assures the signal (quasi) stationarity, the reliability of the FC estimates can rapidly decrease. This situation becomes even more critical when multivariate or non-linear FC estimators are adopted, since they need even longer time signals to compute reliable estimates [123, 138].

To overcome this limitation, a possible strategy for time-varying FC estimation consists in using techniques specifically designed for non-stationary signals, such as detrended fluctuation analysis [67] and wavelet transformation [38]. Among the other FC measures [68], some have been specifically developed for time-varying scenarios, such as wavelet coherence [184] and adaptive partial directed coherence [164].

This is a first-level analysis that deals only with short-time FC estimation pro-cedures. Another possibility is to go further and model graph temporal evolution. Fig. 2.4 represents an example of network changing its edges over time windows.

One strategy is presented in [134], where Ozdemir et al. performed a tensor decomposition of FC networks. The proposed algorithm enables to track time-varying connectivity networks, extracted from brain EEG data. This method firstly performs a decomposition of the graph Laplacian into a low rank + a sparse structure. Then, a tracking algorithm gradually updates subspaces at each new time interval. The hypothesis behind this decomposition is that FC slowly changes across time. As a consequence, the FC is modelled as a low rank matrix while the sparse component models noise and outliers. Shen et al. developed in [171] an alternative algorithm to track 3 D tensor representing time-varying graphs. In this study, authors proposed an original structural equation model (SEM), which requires a tensor decomposition, obtained by means of the well-known parallel factor decomposition [95].

State-of-the-art graph learning methods use either simplified graph models for SoGs or models that are more expensive in terms of computational cost. In the case of time-varying graphs, these aspects are even exacerbated. Ghoroghchian et al. [65] proposed a graph learning method based on representation learning on graphs. Specifically, the representation learning algorithm produces embeddings for graph vertices, by considering neighbouring nodes. This procedure was tested on intra-cranial electroencephalographic (iEEG) data recorded from epileptic pa-tients.

In the context of graph learning, many recent studies address the problem of time-varying graph characterization by assuming the smoothness on the tempo-ral domain [65, 122]. In this direction, Kalofolias et al. [91] and Yamada et al.

[207] proposed advanced optimization algorithms. Specifically, in [207], authors introduced a method to combine several regularizations for graph learning appli-cations even when few observations are available. Jiang et al. [86] developed a model to characterize graph temporal evolution in human connectome. Authors mathematically describe the problem as a quadratic objective function on graph vertices across short-time intervals. A regularization procedure is applied to re-flect the smoothness and other properties, such as the graph Laplacian evolution.

Another strategy consists in modelling the spatio-temporal structure of a 3 D graph. To this aim, Romero et al. [157] developed a kernel-based decomposition. The advantage of this method consists in simultaneously estimating the graph structure on time and space domain, with a reduction of the number of vertices to be analysed. Liu et al. [104] proposed an alternative way to estimate 3 D graph, by introducing a different algorithm based on smoothness prior to learn the graph and simultaneously identify the time-correlation pattern.

Ortiz et al. [133] presented a different strategy based on the structure of prod-uct graphs, that, whenever obtained by applying Kronecker and Cartesian prod-ucts, can be used for sampling and reconstruction applications. This method has broad applicability since product graphs can be used to model sensors’ values acquired at different time points.

In [82], Isufi et al. developed a sampling procedure for time-varying SoGs, to observe and track signals described by a linear state-space model. A mathematical study to identify the role of graph, graph signals and sample location is provided.

In conclusion, novel tools coming from different research domains pave the way for the study of brain organizational processes and time varying dynamics from an original perspective, constituted by merging signal processing, network theory and graph signal processing.

Functional connectivity (FC) is widely explored for its ability to capture functional interactions between brain signals. However, the role of FC in the context of brain-computer interface applications is still poorly understood. To address this gap in knowledge, we consider a group of 20 healthy sub-jects performing a motor imagery (MI) task. We study two state-of-the-art FC estimators, i.e. spectral- and imaginary-coherence, and we describe how they change during MI tasks. We characterize the resulting FC networks by computing the node strength of each EEG sensor and we compare its dis-criminant ability with respect to standard univariate features. At the group level, we show that while spectral-coherence based features increase in the sensorimotor areas, those based on imaginary-coherence significantly de-crease. We demonstrate that this opposite, but complementary, behaviour is caused by the increase in amplitude and phase synchronization between the brain signals during MI task. At the individual level, we demonstrate the potential of network connectivity features in an off-line classification frame-work. Taken together, our findings offer new insights into the oscillatory mechanisms underlying brain network during MI task and open new per-spectives to improve BCI performance.

Based on the detection of cognitive states from brain signals, brain-computer in-terfaces (BCIs) are explored for control, communication and rehabilitation, via the ability of subjects to voluntary modulate their brain activity through mental im-agery [202, 204, 205]. The ability to correctly identify the user’s intent is therefore a key point to design BCI systems[4, 20, 28, 56, 186, 194].

In this direction, investigators have explored different approaches based on various theoretical and experimental perspectives. One possibility is to look for the best mental strategy to detect the user response or to identify the adapted feedback to convey the most relevant information to the user [1, 120, 195].

Another possibility is to develop advanced signal processing methods and so-phisticated classification algorithms to improve the signal-to-noise ratio and to correctly identify the user’s intent [105, 106]. Although these methods provide performance increments, they are intrinsically blind to the neural mechanisms that enable to identify the user’s cognitive state and may not have a direct physical or physiological interpretation[190]. However, this is crucial especially in clinical ap-plications where brain functioning can be compromised and other solutions must be identified.

A different strategy consists in looking for alternative – potentially more infor-mative – features reflecting the human brain organization processes. To this end, functional connectivity (FC) can be adopted to estimate the interaction between spatially distributed brain areas by quantifying the dependences between the re-gional activities [51]. In contrast to univariate features such as frequency band power, FC appears more appropriate to grasp the oscillatory network processes involved in brain (re)organization during cognitive tasks [49]. Recent studies have demonstrated the potential of FC features in BCI, albeit the results are variable and difficult to compare because of the different FC estimators, tasks and limited number of subjects [24, 76, 176, 200]. More importantly, the neurophysiological interpretation of FC features is still poorly understood in motor imagery tasks but this is crucial to eventually design alternative FC-based BCIs.

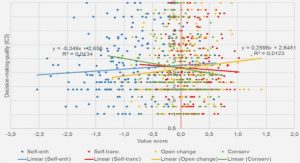

In order to investigate the actual possibility of applying FC features in BCI context, we consider two state-of-the-art FC estimators, i.e. the spectral coher-ence and imaginary coherence [29, 128]. From a theoretical perspective, these estimators bring complementary information because the first quantifies the syn-chronization between the signal amplitudes while the latter also depends on their phase difference [128, 158]. Our hypothesis is that the integration of these comple-mentary features will allow a better characterization of the BCI-related cognitive states and that including them in the feature extraction block will improve the BCI accuracy as compared to standard approaches solely based on univariate features. To verify our predictions, we explore brain FC networks extracted from EEG data recorded in a group of 20 healthy subjects performing the motor imagery (MI) of the right hand. In order to compare our approach with power spectrum features (P), we compute for each sensor the node strength, an intuitive graph metric which describes for each node its overall connectivity within the network. At the group level, we statistically compare the spatial patterns obtained from graph-related features associated to motor imagery and resting state. At the individual level, we evaluate the discriminant potential of network metrics by means of an off-line classification simulation.

To facilitate the reader, we list in In Table 3.1 the main notation used in this chapter.

Material and methods

Experimental protocol and preprocessing

Twenty healthy subjects (aged 27.60 4.01* years, 8 women), all right-handed, participated in the study. The subjects were recruited for a BCI training protocol and they did not have any medical or psychological disorder. The study was approved by the ethical committee CPP-IDF-VI of Paris and each subject signed a written informed consent. Each participant received financial compensation for the participation.

The BCI experiment consisted in a standard 1D, two-target box task [205]. The subject was in front of a screen with a distance of 90 cm. When the target was up, the subject was instructed to imagine moving his/her the right hand (i.e. grasping); when the target was down, the subject had to remain at rest. EEG data were recorded with a 74-channel system, with Ag/AgCl sensors (Easycap, Germany) in a 10-10 standard configuration. The reference for the EEG signals were mastoid signals and the ground electrode was set on the left scalpula. Data were recorded in a shielded room. Impedances were lower than 20 kOhms, the sampling frequency was 1 kHz, then downsampled to 250 Hz. All the subjects were naive BCI users and participated in a training protocol. For each subject we collected 64 trials of motor imagery and 64 trials of resting state. In each trial, the first second corresponded to the inter-stimulus interval (ISI), when a black screen was presented to the subject. During the following 5s, the target appeared on the screen and during this period subjects had to imagine a sustained grasping of their right dominant hand. During the experiments, hand muscular activity was recorded with EMG (electromyogram) to check the presence of involuntary movements during the motor imagery tasks. On-line, the experimenter ensured that subjects were not generating muscular artefacts during the task. Off-line, all the recorded signals have been checked to exclude the presence of evident muscular artefacts. We remind the reader to [45] for a detailed description of the experiments.

A pre-preprocessing step preceded the analysis/ Specifically, we performed on the entire dataset an independent component analysis (ICA) to eliminate ocular and cardiac artefacts, via the Infomax algorithm [18] available in the Fieldtrip toolbox [130]. The ICA was operated by the visual inspection of both time signals and their associated topographies. We removed no more than two independent components in average. In Appendix A we report one example of preprocessing on the first subject.

Functional connectivity and brain network features

We consider two state-of-the-art functional connectivity estimators [16], i.e. spec-tral coherence (C) [29] and imaginary coherence (IC) [128].

While other FC estimators, directed and undirected, have been already applied in the BCI context [71, 74, 76, 97, 146], here we explore C and IC because of their relatively simplicity and intuitiveness.

At group level, we average for each subject the associated connectivity matrices across trials and within frequency bands, namely: theta(q) = 4 7Hz, al pha(a) = 8 13Hz, beta(b) = 14 29Hz and gamma(g) = 30 40Hz. Node strength fea-tures are computed from each of these resulting networks. The same procedure is performed for power spectrum-based features.

We statistically compared connectivity and node strength values between the two mental states of MI and resting conditions. More specifically, for each condi-tion we consider the distributions of the connectivity-based features obtained from the entire population of 20 subjects. We used non parametric permutation statisti-cal tests (2000 permutations) with a statistical threshold of 0.05 [210] corrected for multiple comparisons with false discovery rate [19]. .

At individual level, we did not average the features across trials or within fre-quency bands. We let the classification procedure automatically select the set of best discriminant features for MI and resting for each subject. We only impose some constraints to limit the research complexity. First, we consider frequency bins from 4 to 40 Hz, because state-of-the-art results prove their involvement in similar motor tasks [125]. Second, we limit the research among a subset of elec-trodes spatially covering the sensorimotor areas [143].

In order to investigate the contribution of the three different types of features (SC,SIC and P) to the classification we considered all their possible combinations, i.e. seven in total. To normalize the values in each combination, we apply a z-score transformation to original features, i.e. channels frequency bins. Then, we perform a 100 repeated ten-fold cross-validation classification with linear dis-criminant analysis (LDA) [105, 106]. In addition, we perform a sequential feature selection procedure [57] within a nested cross-validation framework to automati-cally identify the best discriminant features for each subject.

Table of contents :

1 Introduction

2 From brain signals to functional connectivity and graph analysis

2.1 How to estimate brain connectivity from time series

2.2 Graph representation of brain connectivity networks

2.3 The Graph Signal Processing Framework

2.4 Representation of Time-Varying Networked Data

3 Graph connectivity estimation to detect mental states

3.1 Introduction

3.2 Material and methods

3.2.1 Experimental protocol and preprocessing

3.2.2 Functional connectivity and brain network features

3.2.3 Statistical Analysis and Classification

3.3 Results

3.3.1 EEG network connectivity changes during motor imagery

3.3.2 Modulation of amplitude and phase synchronization between brain signals

3.3.3 Mental state detection in single individuals

3.4 Discussion

3.4.1 Methodological considerations

3.5 Conclusions

4 Improving graph connectivity estimation with graph signal processing

4.1 Introduction

4.2 Related Work

4.3 Signal Model

4.4 Graph Connectivity Denoising

4.5 Jensen divergence of connectivity states

4.5.1 J-Divergence based Laplacian coefficients scoring

4.6 Results on synthetic data

4.6.1 Signal on Graph generation and connectivity estimation

4.6.2 Subspace robustness on synthetic data

4.6.3 J-divergence computation on synthetic data

4.7 Real BCI measurements

4.7.1 Experimental Protocol and Preprocessing

4.7.2 J-divergence of brain connectivity states

4.7.3 Scoring of Laplacian coefficients in beta band

4.7.4 Short-time estimation of Laplacian coefficients in b band

4.8 Conclusion and further work

5 Framework for short-time graph connectivity estimation

5.1 Introduction

5.2 Deep L1-PCA computational framework

5.3 Application to graph synthetic data

5.3.1 Connectivity matrices simulation

5.3.2 Robustness analysis via MSE

5.3.3 Classification framework

5.4 Results on real BCI data

5.4.1 Experimental Protocol and Preprocessing

5.4.2 Functional connectivity estimation procedure

5.4.3 Classification analysis on real EEG data

5.5 Deep L1-PCA applicability in BCI systems

5.6 Conclusions

6 Conclusions and future perspectives