Get Complete Project Material File(s) Now! »

Preliminaries

This chapter presents the background knowledge and material necessary to understand all the con-cepts presented in this report. Section 2.1 introduces the data graphs that are the subject of this research. Section 2.2 recalls the quotient summarization framework. The following three sections (2.3, 2.4, 2.5) provide detailed description of the process of summarization gradually adding new features enabling us to target the most general type of graphs, namely RDF graphs.

Data graphs

Our work is targeted to directed graphs, with labeled nodes and edges. This includes those described in RDF [39], the W3C standard for representing Web data. However, RDF graphs attach special inter-pretation to certain kinds of edges: (i) type edges may be used to attach type information to a data node; (ii) ontology edges may describe application-domain knowledge as relationships that hold between edge labels and/or node types.

Below, we introduce the useful terminology for RDF graphs since they are the most general class of graphs for which our summarization methods apply. We also isolate significant subsets of such graphs, which will be handled differently during summarization.

An RDF graph is a set of triples of the form s p o. A triple states that its subject s has the property p, and the value of that property is the object o. We consider only well-formed triples, as per the RDF specification [39], using uniform resource identifiers (URIs), typed or untyped literals (constants) and blank nodes (unknown URIs or literals). Blank nodes are essential features of RDF allowing to support unknown URI/literal tokens. These are conceptually similar to the labeled nulls or variables used in incomplete relational databases [1], as shown in [18].

Notations. We use s, p, and o as placeholders for subjects, properties and objects, respectively.

Figure 2.1 (top) shows how to use triples to describe resources, that is, to express class (unary relation) and property (binary relation) assertions. The RDF standard [39] has a set of built-in classes and properties, as part of the rdf and rdfs pre-defined namespaces. We use these namespaces exactly for these classes and properties, e.g., rdf type specifies the class(es) to which a resource belongs.

As our running example, Figure 2.2 shows a sample RDF graph. Black and violet edges encode data and type respectively, e.g., node n1 has property a whose object (value) is a1, n1 is of class (or has type) C1, etc.

RDF Schema (RDFS). RDFS allows enhancing the descriptions in RDF graphs by declaring ontological constraints between the classes and the properties they use. Figure 2.1 (bottom) shows the four kinds of RDFS constraints, and how to express them through triples. Here, “domain” denotes the first, and “range” the second attribute of every property. In Figure 2.2, the blue edges connecting boxed nodes are RDFS constraints: they state that C1 and C2 are subclasses of C, and that the domain of d is C2.

RDFS constraints are interpreted under the open-world assumption (OWA) [1], i.e., as deductive constraints. RDF entailment is the mechanism through which, based on a set of explicit triples and some entailment rules, implicit RDF triples are derived. For instance, in Figure 2.2, node n1 is of type C1, which is a subclass of C. Through RDF entailment with the subclass constraint in Figure 2.1, we obtain the implicit (entailed) triple n1 type C stating that n1 is of class C. Similarly, n2 and n4 have property d whose domain is C2 (thanks to the domain constraint in Figure 2.1), thus n2 and n4 are also of class C2. Further, because C2 is a subclass of C, they are also of class C.

In general, a triple s p o is entailed by a graph G, denoted G ⊢RDF s p o, if and only if there is a sequence of applications of entailment rules that leads from G to s p o (where at each step, triples previously entailed are also taken into account).

RDF graph saturation. Given a set of entailment rules, the saturation (a.k.a. closure) of an RDF graph G, is defined as the fixpoint obtained by repeatedly adding to G the triples derived using the entailment rules; we denote it G∞. For the four constraints shown in Figure 2.1, which we consider throughout this work, the saturation of G, is finite, unique (up to blank node renaming), and does not contain implicit triples (they have all been made explicit by saturation). An obvious connection holds between the triples entailed by a graph G and its saturation: G entails (leads to, has as logical consequence) the triple s p o if and only if s p o ∈ G∞. It is important to note that the semantics of an RDF graph is its saturation [39]. In particular, when querying an RDF graph, the answer to the query should be computed both from its explicit and its implicit triples.

For presentation purposes, we may use a triple-based or a graph-based representation of an RDF graph:

1. Triple-based representation of an RDF graph. We see G as a union of three edge-disjoint subgraphs G = DG; SG; TG , where: (i) SG, the schema component, is the set of all G triples whose properties are subclass, subproperty, domain or range; we depict such triples with blue edges; (ii) TG, the type component, is the set of type triples from G; we show them in violet; (iii) DG, the data component, holds all the remaining triples of G; we display them in black. Note that each union of the DG, SG, and TG components is an RDF graph by itself.

Further, we call data property any property p occurring in DG, and data triple any triple in DG.

2. The graph-based representation of an RDF graph. As per the RDF specification [39], the set of nodes of an RDF graph is the set of subjects and objects of triples in the graph, while its edges correspond to its triples. We define three categories of RDF graph nodes: (i) a class node is any node whose URI appears as subject or object of a subclass triple, or object of a domain or range or type triple; we show them in blue boxes; (ii) a property node is any node whose URI appears as subject or object of a subproperty triple, or subject of a domain or range triple, or property of a triple1; in Figure 2.2, d is a property node. We also show them in blue boxes; (iii) a data node is any node that is neither a class nor a property node. We show them in black. Note that the sets of class nodes and of property nodes may intersect (indeed, nothing in the RDF specification forbids it). However, data nodes are disjoint from both class and property nodes.

Size and cardinality notations. We denote by SGSn the number of nodes in a graph G, and by SGS its number of edges. Further, for a given attribute x ∈ {s; p; o} and graph G, we note SGS0x the number of distinct values of the attribute x within G. For instance, SDGS0p is the number of distinct properties in the data component of G.

We will rely on the graph, respectively, the triple-based representation when each is most natural for the presentation; accordingly, we may use triple or edge interchangeably to denote a graph edge.

Summarization framework

We recall the classical notion of graph quotient, on which many graph summaries, including ours, are based. In particular, we recall quotients based on bisimilarity, and show that their very nature makes them ill-suited to summarize heterogeneous graphs.

Graph quotients. Given a data graph G and an equivalence relation2over the node of G, the quotientof G by , denoted G i node for each equivalence class of (thus, for each set ≡ ~≡ , is the graph having ( ) a a ≡ a ≡ of 1 n 2 in G, an edge m 1 m 2 1 ; m 2 are theequivalent G nodes); and (ii) for each edge n , where m quotient nodes corresponding to the equivalence classes of n ; n respectively. Ð→ 1 2 Ð→

Many known graph summaries, e.g., [32, 25, 12, 37, 28, 14, 17] are quotient-based; they differ in their equivalence relations ≡. Quotient summaries have several desirable properties:

Size guarantees By definition, G~≡ is guaranteed to have at most as many nodes and edges as G. Some non-quotient summaries, e.g., Dataguides [19], cannot guarantee this.

Property completeness denotes the fact that every property (edge label) from G is present on summary edges. This is helpful to users approaching a graph dataset for the first time3; it is for this scenario precisely that our summaries are used in LODAtlas [34].

Structural representativeness is the following property: for any query q that has answers on G, its structure-only version q′, which copies all the graph patterns of q but erases its possible selections on nodes as well as counting predicates, is guaranteed to have answers on G~≡.

For instance, if q1 is “find all nodes that are target of an f edge and source of a b and a d edge” on the graph in Figure 2.2, then q1′ is the same as q1. If the query q2 is “find all nodes whose labels contain “Alice”, having exactly one (not more) incoming f edge and exactly one outgoing b edge”, the query q2′ asks for “all nodes having incoming f and outgoing b edges”. Thanks to representativeness, quotient summaries can be used to prune empty-answer queries: if q′(G~≡) has no answers, then q has no answers on G. Since the summary is often much smaller than G, pruning is very fast and saves useless query evaluation effort on G.

To enjoy the above advantages, in this work, a summary of G is its quotient through some equivalence relation.

Two extreme quotient summaries help to see the trade-offs in this context. First, let ⊺ denote the equivalence relation for which all nodes are equivalent: then, G~⊺ has a single node with a loop edge to itself for each distinct property in G. This summary collapses (and loses) most of the graph structure. Now, let – denote the equivalence relation for which each node is only equivalent to itself. Then, G~– is isomorphic to G for any graph G; it preserves all the structure but achieves no summarization.

Bisimulation-based summaries. Many known structural quotient summaries, e.g., [32, 12, 26, 15] are based on bisimilarity [23]. Two nodes n1; n2 are forward and/or backward bisimilar (denoted fw, bw and fb) iff for every G edge n a m 1, G also comprises an edge n a m 2, such that m m are 1 2 1 and≡ 2 ≡ forward and/or backward bisimilar, respectively. The fw and relations only take into account also ≡ Ð→ ≡ ≡ bwÐ→ symmetry fb the paths outgoing from (resp. only the paths incoming of G is an to) the nodes. The advantage, as it makes it more resistent to minor modeling differences in the data. For instance,~ let t′ be the triple a hasAuthored p and t′′ be p hasAuthor a, which essentially represent the same information. Triple t′ would impact the nodes to which a is ≡~fw, while it would not impact the nodes to which a is ≡~bw; symetrically, t′′ would impact the ≡~bw class of p but not its ≡~fw class. In contrast, ≡~fb reflects this information whether it is modeled in one direction or in the other.

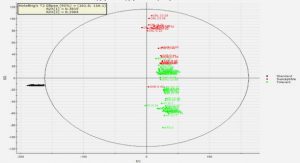

We denote the bisimulation based summaries G~fw (forward), G~bw (backward) and G~fb (forward and backward), respectively. They tend to be large because bisimilarity is rare in heterogeneous data graphs. For instance, each node of the graph in Figure 2.2 is only ≡~fb to itself, thus ≡~fb is useless for summarizing it; our experiments in Section 5.1 confirm this on many graphs. To mediate this problem, k-bisimilarity has been introduced [27], whereas nodes are k-forward (and/or backward) bisimilar iff their adjacent paths of length at most k are identical; the smaller k is, the more permisive the equivalence relation.

One drawback of k-bisimilarity is that it requires users to guess the k value leading to the best com-promise between compactness (which favors low k; note that k = 0 leads exactly to G~⊺) and structural information in the summary (high k). Further, even 1-bisimilarity is hard to achieve in heterogeneous graphs. For instance, Figure 2.3 shows the 1fb summary of the sample graph in Figure 2.24. Nodes in the bottom row of G, which only have incoming a, b, respectively d edges, and have no outgoing edges, are summarized together. However, none of n1, n2, n3 and n4 are equivalent, because of the presence/absence of a and d edges, and of their possible incoming edges.

Any structural equivalence relation cuts a trade-off between compactness and structure preservation. Below, we introduce two relations leading to compact summaries that in addition cope well with the data heterogeneity frequently encountered in RDF (and other) graphs.

Other interesting properties of such relations are: the complexity of building the corresponding summary, and of updating it to reflect graph updates. Further, the presence of type and schema (ontology) triples has not been formally studied in quotient graph summarization.

Data graph summarization

We first consider graphs made of data triples only, thus of the form G = DG; ∅; ∅ . We define the novel notion of property cliques in Section 2.3.1; building on them, we devise new graph node equiva-lence relations and corresponding graph summaries in Section 2.3.2. Summarization will be generalized to handle also type triples in Section 2.4, and type and schema triples in Section 2.5.

Data property cliques

We are interested in defining equivalence relations between two data nodes, that can cope with the heterogeneity present in many real-life data graphs. For example, up to 14 data properties (such as title, author, year, but also note, etc.) are used to describe conference papers in a graph version of the DBLP bibliographic database. Each paper has a certain subset of these 14 properties, and has some of them, e.g., authors, with multiple values; we counted more than 130 such distinct property subsets in a small (8MB) fragment of DBLP. To avoid the “noise” introduced by such structural heterogeneity, we need node equivalence relations that look beyond it, and consider that all the nodes corresponding to conference publications are equivalent.

To do that, we first focus on the way data properties (edge labels) are organized in the graph. The simplest relation that may exist between two properties is co-occurrence, when a node is the source (or target) of two edges carrying the two labels. However, in heterogeneous RDF graphs such as DBLP, two properties, say author and title, may co-occur on a node n, while another node n′ has title, year, and howpublished: we may consider all these properties (author, title, year and howpublished) related, as they (directly or transitively) co-occur on some nodes. Formally:

Definition 1. (PROPERTY RELATIONS AND CLIQUES) Let p1, p2 be two data properties in G: 1. p1; p2 ∈ G are source-related iff either: (i) a data node in G is the subject of both p1 and p2, or (ii) G holds a data node that is the subject of p1 and a data property p3, with p3 and p2 being source-related.

2. p1; p2 ∈ G are target-related iff either: (i) a data node in G is the object of both p1 and p2, or (ii) G holds a data node that is the object of p1 and a data property p3, with p3 and p2 being target-related.

A maximal set of data properties in G which are pairwise source-related (respectively, target-related) is called a source (respectively, target) property clique.

In the graph in Figure 2.2, properties a and b are source-related due to n1 (condition 1. in the definition). Similarly, b and d are source-related due to n2; consequently, a and d are source-related (condition 2.). Thus, a source clique of this graph is SC1 = {a; b; d}. Table 2.1 shows the target and source cliques of all data nodes from Figure 2.2.

It is easy to see that the set of non-empty source (or target) property cliques is a partition over the data properties of G. Further, if a node n ∈ G is source of some data properties, they are all in the same source clique; similarly, all the properties of which n is a target are in the same target clique. This allows us to refer to the source (or target) clique of n, denoted SC(n) and T C(n).

Strong and weak node equivalences

Building on property cliques, we define two main node equivalence relations among the data nodes of a graph G:

Definition 2. (STRONG EQUIVALENCE) Two data nodes of G are strongly equivalent, denoted n1 ≡S n2, iff they have the same source and target cliques.

Strongly equivalent nodes have the same structure of incoming and outgoing edges. In Figure 2.2, nodes n1; n2 are strongly equivalent to each other, and so are e.g., a1; a2, b1, b2 and b3 etc.

A second, weaker notion of node equivalence could request only that equivalent nodes share the same incoming or outgoing structure, i.e., they share the same source clique or the same target clique. Figure 2.4 illustrates this. Nodes x1; x2 have the same source clique because they both have outgoing y edges. Further, x2 and x3 have the same target clique because both have incoming w edges. Since equivalence must be transitive, it follows that x1 and x3 must also be considered weakly equivalent, since they “follow the same pattern” of having at least one incoming w edge, or at least one outgoing y or z edge, and no other kinds of edges. Formally:

Definition 3. (WEAK EQUIVALENCE) Two data nodes are weakly equivalent, denoted n1 ≡W n2, iff: (i) they have the same non-empty source or non-empty target clique, or (ii) they both have empty source and empty target cliques, or (iii) they are both weakly equivalent to another node of G.

It is easy to see that ≡W and ≡S are equivalence relations and that strong equivalence implies weak equivalence.

In Figure 2.2, n1; : : : ; n4 are weakly equivalent to each other due to their common source clique SC1; a1; a2 are weakly equivalent due to their common target clique etc.

Weak and strong summarization

Notation: representation function. We say the summary node of G~≡ corresponding to the equivalence class of a G node n represents n, and denote it f≡(n) or simply f (n) when this does not cause confusion. We call f≡ the representation function of the equivalence relation ≡ over G.

Weak summarization. The first summary we define is based on weak equivalence:

Definition 4. (WEAK SUMMARY) The weak summary of a data graph G, denoted G~W, is its quotient graph w.r.t. the weak equivalence relation ≡W.

The the graph in Figure 2.2 is depicted by the black nodes and edges in Figure 2.55; summary nodes are shown as unlabeled circles, to denote that they are anonymous (new) nodes, each of which represents one or more G nodes. The central one represents n1, n2, n3 and n4. Its outgoing edges go towards nodes representing, respectively, a1 and a2; b1, b2 and b3; finally, d1 and d2. Its incoming edges come from the representative of n5 (which was a source of an f edge in G), respectively from the representative of n6 (source of g).

The weak summary has the following important property:

Proposition 1. (UNIQUE DATA PROPERTIES) Each G data property appears exactly once in G~W.

Proof. First, note that any two weak summary nodes n1; n2 cannot be targets of the same data property. Indeed, if such a data property p existed, let T C be the target clique it belongs to. By the definition of the weak summary, n1 corresponds to a set of (disjoint) target cliques ST C1, which includes T C, and a set of disjoint source cliques SSC1. Similarly, n2 corresponds to a set of (disjoint) target cliques ST C2, which includes T C, and a set of disjoint source cliques SSC2. The presence of T C in ST C1 and ST C2 contradicts the fact that different equivalence classes of G nodes correspond to disjoint sets of target cliques. The same holds for the sets of properties of which weak summary nodes are sources. Thus, any data property has at most one source and at most one target in G~W. Further, by the definition of the summary as a quotient, every data property present in G also appears in the summary. Thus, there is exactly one p-labeled edge in G~W for every data property in G.

Importantly, the above Proposition 1 warrants that SG~W S, the number of edges in G~W, is exactly the number of distinct data properties in G. This observation is used in our weak summarization algorithms (Section 4.1). By definition of a quotient summary (Section 2.2), this is the smallest number of edges a summary may have (since it has at least one edge per each distinct property in G). Thus, G~W is a minimal-size quotient summary (like G~⊺ from Section 2.2, but much more informative than it). As our experiments show, SG~WS is typically 3 to 6 orders of magnitude smaller than SGS.

Strong summarization. Next, we introduce:

Definition 5. (STRONG SUMMARY) The strong summary of the graph G, denoted G~S, is its quotient graph w.r.t. the strong equivalence relation ≡S.

The strong summary of the graph of Figure 2.2 is shown by the black edges and nodes in Fig-ure 2.6. Similarly to the weak summary (Figure 2.5), the strong one features a single node source of a, respectively, b, d, f and g edges. However, differently from G~W, the strong summary splits the data nodes whose source clique is {a; b; d} in three equivalence classes: n1 and n2 have the empty target clique, while that of n2 is {f} and that of n3 is {g}. Thus, two data nodes represented by the same strong summary node have similar structure both in their inputs and outputs; in contrast, a weak sum-mary (recall Figure 2.4) represents together nodes having similar structure in their inputs or outputs, or which are both equivalent to another common node. As we can see, strong summarization leads to finer-granularity summaries. An effect of this finer granularity is that in G~S, several edges may have the same label, e.g., there are three edges labeled b in Figure 2.5 (whereas for G~W, as stated in Proposition 1, this is not possible). Our experiments (Section 5.1) show that while G~S is often somehow larger than G~W, it still remains many orders of magnitude smaller than the original graph.

By definition of ≡S, equivalent nodes have the same source clique and the same target clique. This leads to:

Proposition 2. (STRONG SUMMARY NODES AND G CLIQUES) G~S has exactly one node for each source clique and target clique of a same node n ∈ DG.

Proposition 2 is exploited by the implementations of our strong summarization algorithms (Sec-tion 4.1).

Typed data graph summarization

We generalize our approach to summarize graphs with data and type triples, thus of the form G = DG; TG; ∅ .

Starting from an equivalence relation ≡ defined over data nodes, in order to summarize DG ∪ TG, two questions must be answered: (i) how should ≡ be extended on class nodes (such as C1 in Figure 2.2)? and (ii) how should the type edge(s) of a node n be taken into account when determining to whom n is equivalent? Below, we answer these questions for any equivalence relation ≡, then instantiate our answer to the weak and strong relations we defined.

To study the first question, consider the sample graph above, and a possible summary of this graph at its right. Assume that the types A and B are considered equivalent. Quotient summarization represents them both by the summary node at the top right, which (like all summary nodes) is a “new” node, i.e., it is neither A nor B. Observe that this summary compromises representativeness for queries over both the data and the type triples: for instance, the query asking for “nodes of type A having property r” is empty on the summary (as type A has been conflated with type B) while it is non empty on the graph.

To avoid this, we argue that when moving from data to typed data graphs, any equivalence relation

≡ between data nodes should be extended to class nodes as follows: 1. any class node is only equivalent to itself and 2. any class node is only represented by itself, hence a graph has the same class nodes as its summary.

We now consider the second question: how should ≡ be extended to exploit not only the data but also the type triples? Note that two nodes may have similar incoming/outgoing data edges but different type edges, or vice-versa, the same types but very different data edges. We introduce two main alternatives below, then decline them for weak and strong summarization.

Data-then-type summarization

This approach consists of using an equivalence ≡ defined based on data properties in order to deter-mine which data nodes are equivalent and thus to build summary nodes, and data edges between them. Afterward, for each triple n type C in G, add to G≡ a triple f≡(n) type C, where we recall that f≡ (n) is the representative of n in G≡. This approach is interesting, e.g., when only some of the nodes have types (often the case in RDF graphs). In such cases, it makes sense to first group nodes according to their data edges, while still preserving the (partial) type information they have. We extend the W, respectively S summaries to type triples, by stating that they (i) represent each class node by itself; and (ii) follow a data-then-type approach, as described above.

In Figure 2.5, the black and violet edges (including the C1 node) depict the weak summary of the black and violet graph triples Figure 2.2. The type edge reads as: at least one of the nodes represented by its source, was declared of type C1 in the input graph. Similarly, the black and violet edges in Figure 2.6 show the strong summary of the same subset of our sample graph.

To recap, in data-then-type summarization using ≡, two data nodes are represented together iff they are ≡ based on their incoming and outgoing data edges, while a class node is only equivalent to itself (and always represented by itself).

One more point needs to be settled. Some TG nodes may have types, but no incoming or outgoing data properties. Strong summarization represents all such nodes together, based on their (∅; ∅) pair of source and target cliques. For completeness, we extend weak summaries to also represent such nodes together, by a single special node denoted N∅.

Type-then-data summarization

This approach takes the opposite view that node types are more important when deciding whether nodes are equivalent. Observe that our framework (just like RDF) does not prevent a node from having several types. At the same time, representing a node by each of its types separately would violate the quotient summarization framework, because a quotient, by definition, represents each node exactly once. Thus, in type-then-data summarization, we extend a given equivalence relation ≡ (based on data properties alone) as follows.

Definition 6. (TYPED EQUIVALENCE) Typed equivalence, denoted ≡T, is an equivalence relation over DG ∪ TG defined as follows: two data nodes n1 and n2 are type-equivalent, noted n1 ≡T n2, iff they have exactly the same set of types in G, which is non-empty; any class node is only equivalent to itself.

Intuitively, typed equivalence performs a first-cut data node classification, according to their sets of types. In particular, all untyped nodes are equivalent to themselves. This enables the definition of type-then-data summaries as double quotients: first, quotient G by ≡T; then, quotient the resulting graph by some data node equivalence only on untyped nodes (each left alone in an equivalence class of ≡T), to group them according to their data edges.

RDF graph summarization

We consider now the summarization of general RDF graphs which may also have schema triples, i.e., of the form G = DG; TG; SG .

Extending summarization

First, how to extend an equivalence relation ≡ defined on data nodes (and extended as we discussed in Section 2.4 to class nodes, each of which is only equivalent to itself), to also cover property nodes, such as the boxed d node in Figure 2.2? Such property nodes provide important schema (ontology) information, which describes how the properties and types present in the data relate to each other, and leads to implicit triples (recall Section 2.1). For the summary to preserve the semantics of the input graph, by an argument similar to the one at the beginning of Section 2.4, we impose that ≡ be extended so that any property node should (also) be equivalent only to itself, and propose that in any summary, any property node should be represented by itself. As a consequence, since any class or schema node is equivalent only to itself and represented only by itself:

Proposition 3. (SCHEMA PRESERVATION THROUGH SUMMARIZATION) For any equivalent relation ≡ defined on data nodes and extended as specified above to class and property nodes, and any graph G = DG; TG; SG , it holds that: SG≡ = SG, that is: the summary of G through ≡ has exactly the schema triples of G.

This decision allows us to simply copy schema triples from the input graph to each of its summaries. Figures 2.5, 2.6, 2.7 and 2.8, considered in their entirety, show respectively full G~W, G~S, G~TW and G~TS summaries of the sample RDF graph in Figure 2.2.

Table of contents :

1 Introduction

1.1 Organizational aspects

1.2 Scientific outline

1.3 Report structure

2 Preliminaries

2.1 Data graphs

2.2 Summarization framework

2.3 Data graph summarization

2.3.1 Data property cliques

2.3.2 Strong and weak node equivalences

2.3.3 Weak and strong summarization

2.4 Typed data graph summarization

2.4.1 Data-then-type summarization

2.4.2 Type-then-data summarization

2.5 RDF graph summarization

2.5.1 Extending summarization

2.5.2 Summarization versus saturation

3 Summarization aware of type hierarchies

3.1 Novel type-based RDF equivalence

3.2 RDF summary based on type hierarchy equivalence

4 Graph summarization algorithms

4.1 Centralized summarization algorithms

4.1.1 Data graph summarization

4.1.2 Typed graph summarization

4.2 Type-hierarchy-based summarization algorithms

4.2.1 Constructing the weak type-hierarchy summary

4.2.2 Applicability

4.3 Distributed algorithms

4.3.1 Parallel computation of the strong summary

4.3.2 Parallel computation of the weak summary

4.3.3 Parallel computation of the typed strong and typed weak summaries

4.3.4 Apache Spark implementation specifics

5 Experiments

5.1 Centralized algorithms experiments

5.2 Distributed algorithms experiments

5.2.1 Cluster setup

5.2.2 Configuration

5.2.3 Speed up thanks to the increase of the degree of parallelism

6 RelatedWork

7 Conclusion