Get Complete Project Material File(s) Now! »

Recursive Systematic Convolutional codes

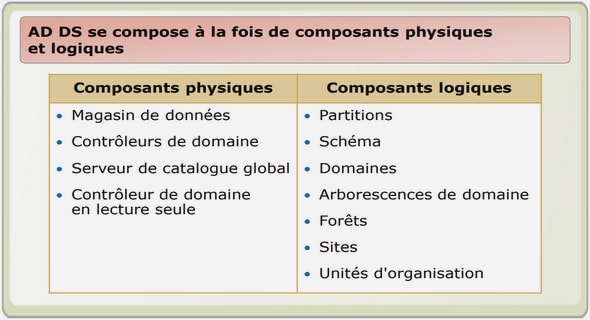

Convolutional codes have been widely used in applications such as space and satellite communications, cellular mobile, digital broadcasting, etc. Their popularity is due to their simple structure and easily implementable maximum likelihood soft decision decoding methods. A convolutional code of rate r = k=n is a linear function which, at every instant i, transforms an input symbol di of k bits into an output coded symbol ci of n bits (k > n). A code is called Systematic if a part of the output is made up of systematic bits si = di and the rest of the bits (n k) are made up of parity bits. The output bits are the linear combination of the current and the previous input bits. The linear function is usually given by generator polynomials. There are three main types of convolutional codes namely Non-Systematic (NSC), Systematic (SC) and Recursive Systematic (RSC) convolutional codes. The only difference in the encoding of the SC and NSC is that, in NSC the output does not contain input systematic bits as shown in Figure 1.5a. This figure illustrates too how a convolutional encoder can be described using shift registers and modulo two adders. The generator polynomials of the convolutional codes examples of Figure 1.5 are given as:

Si = di.

P1i = g1(x) = 1 + x1 + x2.

P2i = g2(x) = 1 + x2.

Ci (Si; P1i; P2i).

These generator polynomials represent the connections between the outputs of the shift register and the modulo two adders. They are generally represented by their coefficients in binary: (111) for P1i and (101) for P2i. If the encoder shift register has feedback and includes input data as part of the output then it is called Recursive systematic encoder (RSC) (Figure 1.6a). The encoder shown Figure 1.6a is a single binary Recursive Systematic Convolutional (RSC) code (from LTE standard [4]) which encodes one bit at a time. The parity bits are generated by the polynomial given by.

Metric level parallelism

The metric level parallelism concerns the processing of all metrics involved in the decoding of each received symbol inside a MAP SISO decoder. It exploits the inherent parallelism of the trellis structure and the parallelism of the MAP computations [16, 17].

Parallelism of trellis transitions: Trellis-transitions parallelism refers to the trellis structure as the same operations related to the computation of , , and the extrinsic information ( ext) should be repeated for all the trellis transitions. Thus, the first metric ( ) calculations can be done in parallel to the maximum degree of parallelism bounded by the number of transitions in the trellis. The degree of parallelism associated with the computation of the branch metric is bounded by the number of possible binary combinations of input and parity bits. For example, in WiMAX DBTC case, which has 2 bits for systematic and parity, there can be 22 22 = 16 different branch metric combinations. The other metrics , and extrinsic computation can be parallelized with a bound of total number of transitions (2M 2(bits per symbol)) in a trellis. This parallelism implies low area overhead as only the computational units have to be duplicated. In particular, no additional memories are required since all the parallelized operations are executed on the same trellis section, and in consequence on the same data.

Parallelism of MAP computations: The structure of the MAP algorithm permits parallel execution of the three MAP computations (, and extrinsic computation ext) [18]. Two scheduling strategies are followed, namely:

1. Forward-Backward schedule: Parallel execution of backward recursion and extrinsic computations was proposed with the original forward-backward schedule as depicted in Figure 1.9. First the Forward recursion () is calculated (parallelism degree =1), followed by backward recursion () and extrinsic computation computed in parallel (parallelism degree =2).

SISO decoder level parallelism

This level of parallelism exploits the use of multiple SISO decoders, each executing the MAP algorithm and processing a sub-block of the same frame in natural or interleaved orders. At this level, parallelism can be applied either on sub-blocks and/or on component decoders.

Frame Sub-blocking: In sub-block parallelism, each frame is divided into Nsubblocks sub-blocks and then each sub-block is processed by a MAP-SISO decoder using adequate initializations. Besides duplication of the SISO decoders, this parallelism imposes two other constraints:

The interleaving has to be parallelized in order to scale proportionally to the number of SISO decoders added. Because of the scramble property of interleaving, this parallelism can induce communication conflicts in accessing memories that store channel/extrinsic values. The interleavers of emerging standards are conflict-free for certain parallelism degrees (as explained in Section 1.2.2). In case of conflicts, an appropriate communication structure, e.g. Network On Chip (NoC), should be implemented for conflict management [20].

The MAP-SISO decoders have to be initialized adequately; this is done either by acquisition or by message passing.

1. Acquisition method: The acquisition method involves estimating sub-block boundary recursion metrics using an overlapping region, called acquisition window or prologue, as illustrated in Figure 1.12. The state metrics of the SISO decoders are initialized to zero and backward/forward recursion is carried out in order to find the more reliable state metric at the sub-block boundary. This has two implications on the implementation.

First of all, extra memory is required to store the overlapping windows. Secondly, extra time will be required for performing the acquisition related computations, which impacts the throughput performance of the decoder.

Table of contents :

Acknowledgements

Introduction

1 Background: Channel Codes and Decoding Algorithms

1.1 Communication system overview

1.2 Turbo codes

1.2.1 Recursive Systematic Convolutional codes

1.2.2 Turbo Code Interleaver

1.2.2.1 Almost regular permutation (ARP)

1.2.2.2 Quadratic polynomial permutation (QPP)

1.3 Turbo decoding

1.3.1 Maximum Aposteriori Probability (MAP) algorithm

1.3.2 Max-Log-MAP approximation

1.3.3 Max-Log-MAP for Turbo decoding

1.3.4 Parallelism in Turbo decoding

1.3.4.1 Metric level parallelism

1.3.4.2 SISO decoder level parallelism

1.3.4.3 Turbo decoder level parallelism

1.4 Low Density Parity Check codes

1.4.1 Linear block codes

1.4.2 QC-LDPC codes

1.4.3 LDPC in WiFi and WiMAX standard

1.5 Low Density Parity Check decoding

1.5.1 LDPC decoding algorithm: Normalized Min-Sum (NMS)

1.5.2 Scheduling

1.5.3 Modified NMS formulation for implementation

1.6 Summary

2 ASIP Design Methodology and State of the Art in Channel Decoder Design

2.1 Customizable embedded processors

2.1.1 Application-Specific Instruction-set Processors

2.1.2 ADL-based design tool: Processor designer

2.1.3 Classical ASIP design flow

2.2 State of the art in channel decoder design

2.2.1 Turbo decoding architectures

2.2.2 LDPC decoding architectures

2.2.3 Multi-code channel decoding architectures

2.3 Initial ASIP architecture for flexible Turbo decoding

2.3.1 Overview of the TurbASIP architecture

2.3.2 TurbASIP pipeline

2.3.3 Max and modulo operators

2.3.4 TurbASIP: sample assembly code

2.3.5 Memory partitions

2.3.6 ASIC synthesis results

2.4 Summary

3 DecASIP: Flexible Turbo/LDPC Decoder

3.1 Design motivations

3.1.1 Architecture Efficiency

3.1.2 Quantization analysis

3.2 DecASIPv1

3.2.1 System architecture

3.2.2 Turbo mode

3.2.2.1 Memory architecture

3.2.2.2 Processing schedule

3.2.2.3 Pipeline architecture

3.2.2.4 Interleave/deinterleave address generation

3.2.2.5 NoC messages

3.2.2.6 Assembly code

3.2.3 LDPC mode

3.2.3.1 Proposed scheduling illustrated with simple example using 2- DecASIPv1 architecture

3.2.3.2 Proposed scheduling with 8-DecASIPv1 architecture .

3.2.3.3 Memory architecture

3.2.3.4 NoC messages

3.2.3.5 Pipeline architecture and assembly Code

3.2.4 ASIC synthesis results

3.3 DecASIPv2

3.3.1 System architecture

3.3.2 Turbo mode

3.3.3 LDPC mode

3.3.3.1 NoC messages and NoC schedule

3.3.3.2 LDPC assembly code

3.3.4 Configuration memory

3.3.5 ASIC synthesis results

3.3.6 Discussions and analysis of recent related implementations

3.4 Summary

4 FPGA and ASIC Prototyping of DecASIP

4.1 Overview of the proposed FPGA 4-DecASIP system prototype

4.2 Flexible channel encoder

4.2.1 Flexible Turbo encoder

4.2.2 Flexible LDPC encoder

4.3 Flexible Turbo/LDPC decoder

4.4 Other blocks of the system prototype

4.4.1 Pseudo random generator

4.4.2 Flexible channel model

4.4.3 Global input interface

4.4.4 Error counter

4.4.5 Configuration module

4.4.6 Global system controller

4.4.7 Graphical User Interface (GUI)

4.4.8 USB interface

4.5 Results of the FPGA prototype

4.5.1 FPGA synthesis results

4.5.2 Speed of reconfiguration between different decoding modes

4.5.3 Scalability and throughput

4.5.4 Performance results

4.6 ASIC integration of DecASIP

4.6.1 MAG3D chip from CEA-LETI

4.6.2 Integration constraints

4.6.3 ASIC integration results

4.7 Summary

5 TDecASIP: Parameterized Turbo Decoder

5.1 Proposed design flow for parameterized cores

5.2 Design choices and TDecASIP decoder architecture

5.2.1 Design choices

5.2.2 TDecASIP decoder architecture

5.2.2.1 Pipeline control finite state machine

5.2.2.2 Pipeline architecture

5.2.3 Memory organization

5.3 FPGA prototype and synthesis results

5.4 ASIC synthesis results

5.5 Summary

6 LDecASIP: LDPC Decoder

6.1 Design motivations and LDecASIP decoder architecture

6.2 Prototype and incremental feature addition

6.3 FPGA and ASIC synthesis results

6.4 Summary

Conclusions and Perspectives

R´esum´e en Franc¸ais

Glossary

Notations

Bibliography