Get Complete Project Material File(s) Now! »

Message Acknowledgment

When communicating with nodes across the network, it is difficult to know whether a sent message has been received. Let us imagine a system such that for every received message, it replies with an acknowledgment to inform the sender that the message has been received. In this system if both messages are received, then both sender and receiver know the message. However if the receiver’s message, the acknowledgment, is lost then the sender might keep resending the original, and unless the receiver manages the duplicates it could lead into unexpected behaviors. This type of acknowledgment is a variation of the one bit sliding window algorithm and is used in the three-way handshake of TCP, as seen in Figure 2.1. Here we see two connections with a SYN and an acknowledgment message, both start a connection with a SYN and expect the ACK message. When the network is unreliable it might be preferable to use this mechanism. With reliable networks, messages are not frequently lost, it is more efficient to send negative acknowledgment, or NACK. These messages are issued by the receiver when it realizes that a message that should have been received is missing thereby requesting the sender to resend.

Another mechanism to acknowledge messages is selective acknowledgment [52], or SACK. This mech-anism does not acknowledge a specific message but instead, a group of send bytes. In TCP this group is called a window. Making the window larger makes it more efficient, as the ratio of acknowledg-ment bytes by useful diminishes. Errors make the connection less responsive, as it takes longer to realize of the error and recover. Jacobson [37, 51] proposed the algorithm for dynamically changing the window’s size that follows an AIMD, additive increase/multiplicative decrease. TCP uses the same mechanism to acknowledge the stream of sent bytes. Figure 2.2 shows this communication, the exchange of data and acknowledgments.

In addition to message acknowledgment, another factor influencing the reliability of inter-nodal communication is the question of how long a sender should wait prior to resending an unacknowledged message. TCP uses a timeout value called Retransmission TimeOut, or RTO. The initial value of RTO, before Round-Trip Time (RTT) values have been computed, is one second [60], and it some conditions it can revert to the old three seconds value [59]. After the first RTT has been acquired the RTO value will change according to Equation 2.5. Then when more RTT have been recorded, Equation 2.9 will be used to update the RTO value. A maximum value bound can be placed on RTO, as long as it is at least 60 seconds, and a minimum RTO value of one second is recommended [8] in order to get a conservative timeout that avoids unnecessary retransmissions. Jacobson et al, [37] suggested is 0:125 and is 0:25 in Equation 2.9. RTO0 = 3 (2.1).

Strong Consistency

Strong consistency represents the monolithic view of consistency, in which all clients observe the same version of data, and changes are rolled at the same time across all nodes. The system achieves strong consistency when operations are linearizable. Furthermore, in strongly consistent systems, there exists a total ordering of events, and all nodes execute the operations in the same order.

Models such as these, are very costly in (1) synchronization, as all nodes need to communicate to take decisions and inform them, having to stop and wait for the missing ones. (2) Scalability, due to synchronization will be less effective the more nodes are involved in the total ordering. The two strong consistency models are strict consistency and sequential consistency. Given that it is difficult to obtain a global clock in distributed systems it is difficult to implement.

Any other consistency less than sequential is usually regarded as a weak consistency model.

Weak Consistency

In weakly consistent systems, there is a trade-off between the guarantees imposed on the data and the latency and throughput of the system. With the lowest level of weak consistency, the user is not guaranteed to be able to read his own writes. This can cause expected behavior for the user, with the generation of duplicated operations. At the same time given the small number of constraints the system is more efficient at managing these operations and achieves a higher throughput. In this section we will explain several weak consistency models (causal and eventual) and we will introduce the shift to client-centric consistencies with session-guarantees.

Eventual Consistency

Without any other guarantee, eventual consistency [71, 73] ensures that messages will eventually be seen by the all nodes, as long as there are no unrecoverable crashes, or new messages, and that enough time is provided. This definition of eventual consistency does not ensure much except that a message will be in the other nodes at some point in the future. Bailis et al, [11] and Bermbach et al. [15] both propose probabilistic bounds in which this “eventually” could be determined. These studies, while not providing any guarantees, inform us of a systems behavior under eventual consistency, with some probabilistic metrics. In recent years eventual consistency has become very popular due to its lack of scalability limitations.

However the lack of guarantees makes it difficult for developers to build robust applications based upon eventual consistency, as in some cases [22] unexpected behaviors occur.

Session Guarantees

One of the issues with previous consistency models, is that they focus guarantees on the consistency of one object across the whole system. With in strong consistency this makes the system go too slow and on eventual consistency, while quick there are no ordering constraints which can cause missing events from the perspective of a user. Terry [70] shifted this paradigm and to focus on the user’s expectations. He proposed a stronger consistency model than eventual consistency in which there are session guarantees. A session is an abstraction of the list of operations that the user is running within the application. The objective of a session is not to behave as an atomic transaction but to provide the user with a consistent view of his operations during that session. Session guarantees can be summarized as:

Read Your Writes: read operations reflect on your writes.

Monotonic Reads: Successive reads reflect a non-decreasing set of writes.

Writes Follow Reads: Writes are propagated after reads on which they depend.

Monotonic Writes: writes are propagated after writes that logically precede them.

Note that if the user ends their session, the system does not guarantee that they will see their operations if they reconnect.

2PC

2PC stands for two-phase commit protocol [56], an atomic commitment protocol. As the name indi-cates, the protocol has two phases. A commit request phase or voting phase, and a commit phase or completion phase. During the voting phase, the coordinator sends a message to all the replicas that also have to commit the transaction, and wait for all the responses. Meanwhile, the replicas that receive the message, execute the transaction up to the commit point while logging the operations in a log. At this point every replica that gets to the commit point replies to the coordinator with a success, if all operations in the transaction were properly executed, or an abort if even one of them could not be executed. At this point the first phase is over and the completion phase begins. If all replicas replied a success the coordinator will send the commit message and all replicas will commit the previous operations, sending back an acknowledgment once the commit was successful. Once the coordinator receives all the acknowledgments, then and only then will new transactions be consid-ered. If only one of the replicas replies with an abort message from the first phase (or some timeout expires) then the coordinator will send the abort/rollback message instead. A rollback message will force all the replicas to go back to the state they were before the transaction began. After all the replicas rollback, an acknowledgment is sent to the coordinator that upon receiving all of them will abandon or retry the transaction.

The main disadvantage of this protocol is that if the coordinator fails after some of the replicas have sent their success/abort message from the voting phase, then as they never receive a reply from the coordinator they will be blocked.

Latency Model

Latency models, represent the different latency values that the messages can experience when sent from one node to another. P (X t) gives the probability for a given latency model that the sent message has been received before time t.

The first latency model that we use for our system is an exponential distribution in which the 1 represents the mean time of communication, as seen in Equation 3.1. Other latency models could be used like Equation 3.2 which we will be using in Section 3.6 to model a production-like latency scenario, in which the Pareto distribution models the bulk latency time and the exponential models the tail. P (X t) = 1 e t (3.1).

P (X t) = p(1 e t ) + (1 p)( m ) (3.2).

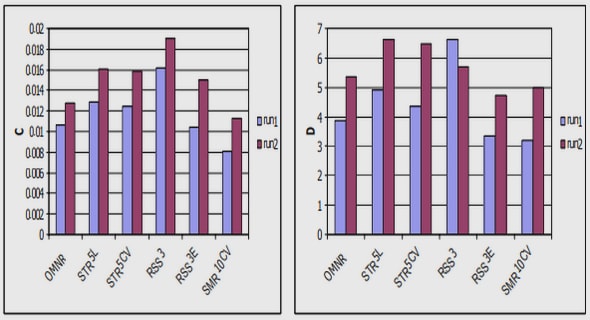

Equation 3.2 is a mixed distribution between the Pareto distribution; being the shape, and xm being the scale, which marks the lower bound in the distribution’s range; and the exponential distribution, with 1 being the mean value, while p is the proportion of exponential distribution. From Figure 3.1, the parameters that generate the CDF2 for both the exponential and Pareto distribution are such that they have the same mean value, and a relatively close median value. Nonetheless, they are two different distributions with different profiles.

Table of contents :

1 Introduction

2 Background

2.1 Reliability

2.1.1 Failure Classification

2.1.2 Message Acknowledgment

2.1.3 Multicast Messaging

2.2 Consistency Models

2.2.1 Strong Consistency

2.2.2 Weak Consistency

2.3 Consistency Management Algorithms

2.3.1 Consensus

2.3.2 2PC

2.3.3 Paxos

2.3.4 Quorum

2.3.5 CRDT

2.3.6 Bounding divergence

3 Theoretical Models

3.1 Notation

3.2 System Model

3.2.1 Latency Model

3.2.2 Visibility

3.3 Equations

3.3.1 Sending to 1 or P2P

3.3.2 Sending to n-1

3.3.3 Reception from n-1 (for n = 3)

3.3.4 Reception from n-1

3.4 Partial Orders

3.4.1 FIFO

3.4.2 Causal

3.5 Generalization

3.5.1 Window of events

3.5.2 Rate op for Delta

3.5.3 FIFO

3.5.4 Causal

3.6 Reactive Error Recovery

3.6.1 Mechanism description

3.6.2 Probability of False Positives

4 Simulations

4.1 Structure

4.1.1 Input parameters

4.1.2 Data Generation

4.1.3 Partial Order enforcement

4.1.4 Result analysis

4.2 Equation Experiments

4.2.1 Statistical Error between Equations and Simulations

4.2.2 Impact of partial order enforcement

4.3 RER Experiments

5 Experimental Validation

5.1 Motivations

5.2 Experimental Setup

5.2.1 AWS

5.2.2 Parameters

5.2.3 Data Recovered

5.3 Results

5.3.1 Data Processing

5.3.2 Single Datacenter

5.3.3 Multiple Datacenters

5.3.4 Fitting

5.4 Limitations

5.4.1 Global Clock

5.4.2 Performance

5.4.3 Not a real case-study

6 Conclusions

6.1 Future Works

Bibliography