Methodology

Methodology Overview

The methodology overview and research design presented in this chapter are the general overview of the overall study (i.e., Study 1, Study 2, and Study 3); detailed methods, data-analysis plans, relevant data-analysis procedures, and results are within the contents of the following chapters:

see Chapter 4 Study 1 for mixed methods: software evaluation e-portfolios, ANOVA, and qualitative iterative data analysis

see Chapter 5 Study 2 for CFA and SEM techniques

see Chapter 6 Study 3 for qualitative iterative data analysis

Therefore, this chapter presents the methods overview for achieving the study’s research aim and answering its research questions. The research methodology of the study includes principles of research design and procedures for data collection, data analysis, and interpretation of data collected through a case study (Creswell & Plano Clark, 2011). This thesis comprised three studies in a multiphase design, combining the sequential analysis of quantitative and qualitative data sets over multiple phases to form a constructed overview of e-portfolio usage in two faculties, EDSW and FMHS, of the University of Auckland, New Zealand.

This thesis takes a realist view of physical and psychological phenomena and attempts to examine e-portfolios from multiple perspectives in order to understand their real-world existence and functions. This goal requires using multiple methods, each selected to maximize understanding of different aspects of both physical and social uses of e-portfolios. To describe the status quo, interviews, surveys, and observations were conducted. To explore the inter-relationships among a variety of e-portfolio facets and outcomes, sophisticated causal-correlational statistical techniques were used. To test hypotheses generated by statistical analyses, interview data were subjected to thematic analyses using both deductive and inductive methods. Interpretation across studies and methods has led to an integrated understanding of the status quo and of the aspects of e-portfolios that need revision to achieve intended purposes and goals.

The multiphase design, by definition, is the concurrent and/or sequential collection of quantitative and qualitative data sets over multiple phases of a program of study (Creswell & Plano Clark, 2011). Fundamentally, it offers a design structure that combines the differing strengths and generalizability of quantitative methods (large sample size and trends) with the specific and detailed scheme of qualitative methods (small N and in depth; Creswell & Plano Clark, 2007; Patton, 1990). Working with quantitative and qualitative approaches in tandem provides a more comprehensive and extensive procedural archetype of successful implementation of e-portfolios and of the usage factors that may lead to quality engagement with the system. This design is employed when the researcher wants to compare and contrast quantitative statistical results with qualitative findings for corroboration and validation purposes (Creswell & Plano Clark, 2007). Moreover, compared to a single-method approach, this methodological approach can be less vulnerable to errors and biases and enables a robust schematic analysis to best address the research questions and investigate the goals of the research undertaking (Creswell, 2014).

Research Design and Research Methods

The multiphase and sequential-analysis design structure consists of a series of quantitative measures of one-way analysis of variance (ANOVA), CFA, and SEM techniques, and a qualitative iterative data-analysis scheme to validate factors of e-portfolios in a cost-reward analysis framework. The multiphase design was employed due to the flexibility, intuitive sense, and effectiveness of having each type of data collected and analyzed independently (Creswell, 2014), and then compared and contrasted in the results section of the study and merged with one another to obtain an overall understanding of the research questions and goals (Creswell & Plano Clark, 2011). Figure 4 visually layers the three levels of the study and the processes of data collection, analysis, results, and the overall interpretation of the data.

Design purpose.

The design of the study is a multiphase structure, which positions it to address the research questions by taking an evaluative approach to technology systems (i.e., e-portfolios) and developing a framework to identify the cost-reward factors of e-portfolios. The mixing of methods is an inquiry that combines both qualitative and quantitative data, integrates the forms of data, and uses distinct designs that may involve philosophical assumptions and a theoretical framework (Creswell, 2014). The design purpose of the study is to distinguish observable components of e-portfolios that would lead users merely to comply from facilitating factors that would improve engagement with the system.

Therefore, Study 1 data collection begins with an evaluation of the technology and software features of prevailing e-portfolios (as identified by the associate course directors of the ECE, primary, and secondary GradDip teaching programs). Next, a standardized self-reported survey questionnaire, evaluating student satisfaction with, and the usability of, e-portfolios such as MyPortfolio (Mahara) and Google Sites, was completed by pre-service student teachers in the GradDip ECE and secondary teaching programs. This is then followed by qualitative interviews within the framework of the cost-reward analysis (i.e., likes, dislikes, and recommendations). Study 2 follows up by empirically evaluating the factors identified in Study 1 and the cost-reward analysis. It uses the multigroup evaluative processes of CFA and SEM techniques to (1) validate survey measurement items of student perspectives such as perceived benefits, and importance and usefulness, and (2) identify factors of e-portfolios such as training and support, assessment practices, and long-term benefits, and their relationships to compliance and quality engagement with the system.

Study 3 interviews nursing students and centers around the cost-reward analysis and on the framework of student ownership of their learning with e-portfolios (Milner-Bolotin, 2001; Shroff et al., 2014). Study 3, using the qualitative iterative approach, examines students’ sense of personal value, feeling in control, and taking responsibility (Shroff et al., 2014) when using e-portfolios to satisfy nursing competencies.

The level of interaction between the phases of the study is organized sequentially with the results of each individual level of the study influencing the next level of the study. Ultimately, the design purpose of the study is to identify specific observable components of e-portfolios that would lead to compliance versus learning. The design purpose is also structured to create an evaluative analysis of the relationship of technology systems and how systems impact the educational goal of learning and the administrative goal of ensuring professional external standards. Table 5 shows the mixed-methods sequential research design of the study.

According to Johnson and Onwuegbuzie (2004), the explanatory sequential design collects either quantitative or qualitative data first and uses the data and results to inform the subsequent sequences of the research undertaking. The mixed-methods sequential design of the study was initiated and informed by the software evaluation of the prevailing e-portfolios. This was followed by a quantitative ANOVA comparing user satisfaction with, and the usability of, the MyPortfolio (Mahara) and Google Sites e-portfolios, along with interviews of pre-service student teachers, analyzed using a qualitative iterative data-analysis approach, to identify factors of e-portfolios that will increase student engagement with the system. These factors were then analyzed using quantitative CFA and SEM techniques. The final method was a set of interviews with pre-service Bachelor of Nursing (BNurs) students, again analyzed using a qualitative iterative data-analysis approach, who likewise used an e-portfolio to satisfy a professional external standard.

This thesis reports a Phase-1 Software Evaluation, QUAN (ANOVA comparative analysis) + QUAL (student interview); Phase-2 QUAN (Factor Analysis); and Phase-3 QUAL (student interview). This sequential explanatory approach was structured to generate a synthetic view of a cost-reward analysis of e-portfolio usage in a single academic institution, within two faculties, and to determine factors that increase students’ quality engagement with the system.

Sampling strategy.

A convenience sampling design was used to enlist participants for the research study. Participants were recruited with the assistance of the associate dean, associate director, director of the Learning Technology Unit, and course coordinators. Participants were also identified based on their required use of e-portfolios to satisfy New Zealand teacher standards and New Zealand nursing competencies. Therefore, convenience sampling was adopted based on convenience and availability (Creswell, 2014). On the other hand, this sampling strategy can be a major disadvantage, as it is prone to producing an inaccurate and unrepresentative sample of the population (Creswell, 2014; Keyton, 2014). Further, this method also exposes potential biases due to the availability of volunteers who may differ in motivation, interest, and inclination compared to those who decline to participate. The Human Participants Ethics Committee (HPEC) does allow a convenience sampling strategy, as this design is sometimes a necessary option, or the only option, for researchers, as long as ethical constraints are addressed and participants are protected, with confidentiality and anonymity and the right to withdraw their data within an agreed timeframe (see Appendices A, B, and C for HPEC approved items).

In light of the purpose of the study and to provide an initial understanding of e-portfolio software platforms adopted at the University of Auckland, a convenience sampling design was deemed appropriate for the research undertaking. To adhere to HPEC requirements and to protect the study’s participants, the researcher made the surveys for Study 1 and Study 2 anonymous, which means participants’ names were not requested anywhere on the survey questionnaire. For the interview protocol, participants were identified using their email, but names and other pieces of information were removed from the interview data sheet. Without exception, all items were considered in light of the voluntary response rate, the total sample size, and the limitations of a sampling strategy based on a case study at a single site.

Quantitative Analysis Overview

Two quantitative data procedures were adopted for Study 1 and Study 2. Study 1 employed a one-way ANOVA, which was performed to compare the MyPortfolio (Mahara) and Google Sites e-portfolio software platforms in terms of user information satisfaction (UIS) and usability evaluation method (UEM) of these e-portfolios.
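As a rough illustration of the kind of one-way ANOVA comparison described above, the following sketch computes the F statistic for two groups of ratings. The ratings are invented for illustration only and are not data from the study; the function name `one_way_anova` and the 1-5 rating scale are likewise assumptions.

```python
from statistics import mean


def one_way_anova(groups):
    """Return (F, df_between, df_within) for a list of score lists."""
    all_scores = [x for g in groups for x in g]
    grand_mean = mean(all_scores)
    k = len(groups)        # number of groups (platforms)
    n = len(all_scores)    # total number of ratings

    # Variation of the group means around the grand mean.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Variation of individual ratings around their own group mean.
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)

    df_between = k - 1
    df_within = n - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Hypothetical satisfaction ratings (1-5 scale), invented for illustration.
myportfolio = [3, 4, 2, 3, 3]
google_sites = [4, 5, 4, 3, 4]

f_stat, df_b, df_w = one_way_anova([myportfolio, google_sites])
print(f"F({df_b}, {df_w}) = {f_stat:.2f}")  # prints "F(1, 8) = 5.00"
```

With only two groups, as in this comparison of two platforms, the F statistic equals the square of the pooled-variance independent-samples t statistic, so either test leads to the same conclusion.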

Study 2 utilized CFA and SEM techniques and used student perspectives on perceived benefits and importance and usefulness. The primary focus of the Study 2 data-analysis plan was to confirm the structure of the latent variables used, by evaluating the reliability of the measurement models prior to proceeding with exploration of the relations among the variables. Next, SEM was used to incorporate the concept of observed or manifest variables and latent variables. This is particularly useful in this study, as the key constructs or factors are understood as latent or implicit causal variables that cannot be directly measured but can be inferred through observable indicator items (Byrne, 2016). The aim of Study 2 revolves around confirming factors arising from Study 1 and the facilitating factors that will improve student engagement with e-portfolios (see Chapter 5 for the detailed description and procedures of the quantitative data techniques and results of Study 2).
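The distinction between observed indicators and latent variables can be written compactly in the conventional notation for such models. The symbols below are assumed for illustration and are not taken from the thesis: $x$ is the vector of observed indicator items, $\xi$ and $\eta$ are exogenous and endogenous latent variables, $\Lambda_x$ holds the factor loadings, $B$ and $\Gamma$ hold the structural paths, and $\delta$ and $\zeta$ are error terms.

```latex
% Measurement model (CFA): observed indicators load on latent factors
x = \Lambda_x \xi + \delta

% Structural model (SEM): relations among the latent variables
\eta = B\eta + \Gamma\xi + \zeta
```

In this form, CFA assesses how well the first equation fits the survey items, and SEM then estimates the paths in the second equation.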

Qualitative Data-Analysis Framework Overview

A qualitative iterative data-analysis approach has been adopted (Srivastava & Hopwood, 2009; see Chapter 6 for the detailed description and procedures of the qualitative data techniques and results of Study 3). Study 3 has also utilized the framework of student ownership of learning with e-portfolios to explore the cost-reward analysis of the system in the BNurs program (Milner-Bolotin, 2001; Shroff et al., 2014).

Iterative data analysis is the process in which patterns and themes emerge from the data, but not on their own (Srivastava & Hopwood, 2009). The analysis is driven by a set of triangulated reflexive inquiries: (1) self-reflexivity, for example what the researcher knows and wants to know; (2) reflexivity about those studied, for example how those studied know what they know; and (3) reflexivity about the audience, for example how those who receive my findings make sense of what I give them (Patton, 2002; Srivastava & Hopwood, 2009).

Data Collection Procedures

The teachers were asked to arrange venues and times for the administration of the paper-based survey questionnaire. All participants were briefed and allowed to ask questions after the researcher went over the participant information sheet (PIS). The survey was anonymous and a consent form (CF) was deemed not necessary, as per the instruction of HPEC (reference number 011928). Participants were also informed of their rights and ethical considerations (see Appendices A, B, C, F, H, I, and J for all approved CF, PIS, and Amendments).

Ethical Considerations

The study adhered strictly to ethical standards during the planning and execution of both the quantitative and qualitative components of the research. The ethical issues and considerations were submitted to the University of Auckland HPEC for approval. The ethical issues involved in this study are informed consent and participants’ privacy, confidentiality, and anonymity. The researcher and the research team also guaranteed that students’ decision to participate, not participate, or withdraw at any time without giving any reason would not impact their evaluations and grades.

All participants had the right to withdraw at any time and have their data excluded, but a particular deadline was stipulated due to data analysis being conducted by the researcher normally within 4 weeks of completion of data gathering. All participants were also given a PIS informing them of the survey and interview process. Lastly, a CF was not necessary for the survey portion of the study, as it was anonymous; CFs were employed for the interview portion of the study but participants’ names were replaced with their email address and an alpha-numeric code only known to the researcher.

Data storage/retention/destruction/future use.

The paper data (hard copy) were stored and secured in a locked cabinet at the University of Auckland, EDSW office facility. Only the researcher and the research team had access to the office and storage facility. All electronic data were stored on a password-protected desktop computer. The data are to be destroyed after a period of 6 years. The paper data (hard copy) will be disposed of via shredding, which will be conducted by the school’s facilities coordinator. The electronic data will be expunged by the school’s IT support and technician.

Rights to withdraw.

Participants were informed that they had the right to withdraw and have their data removed from the analysis up to 4 weeks after they had completed either the self-reported survey and/or the retrospective interview of their e-portfolio use. The 4-week period was given to all participants to have their data removed from the analysis, as the researcher needed a deadline so that the results of the study could be published or presented at a conference.

Control over participants’ data and publishing options.

In research, it is important that all participants are informed, made aware of their options, and have their privacy maintained. Considering the potential threat to students’ information and privacy, it was imperative that the researcher protected the participants by the following provisions:

Alpha-numeric codes were given to survey questionnaires, interview recordings, and interview transcriptions to protect the privacy of participants.

All participant names were deleted from any paper or electronic document. It was not possible to match survey serial numbers to interview recordings or transcriptions.

No names were used in any reports arising from the study.

No personal information was collected or stored.

All participants were also informed that all collected data would only be used for the completion of the researcher’s Doctor of Philosophy in Education thesis requirements, academic publications, and conference proceedings. All participants were also informed that they could obtain a copy of the published work by emailing the researcher.

Table of Contents

Abstract

Acknowledgements

List of Tables

List of Figures

Introduction

1.1 Electronic Portfolio (E-Portfolio) Systems

1.2 E-Portfolios at The University of Auckland: A Tale of Two Faculties

1.3 The Cost-Reward Analysis of E-Portfolios

1.4 Research Significance

1.5 Structure of Thesis

Literature Review

2.1 E-Portfolios and the Social Exchange Framework

2.2 The Classification of E-Portfolios and the Stages of E-Portfolio Maturation

2.3 The Essential Technology Features of E-Portfolios

2.4 The Affordances and Benefits From E-Portfolios

2.5 The Student Ownership of Learning with E-Portfolios

2.6 E-Portfolios and Assessment Practices

2.7 The Cost-Reward Analysis of E-Portfolios: The Challenges with E-Portfolios in Practice

2.8 Research Questions

Methodology

3.1 Methodology Overview

3.2 Research Design and Research Methods

3.3 Quantitative Analysis Overview

3.4 Qualitative Data-Analysis Framework Overview

3.5 Data Collection Procedures

3.6 Ethical Considerations

Evaluating, Comparing, and Best Practice in Electronic Portfolio System Use

4.1 Abstract

4.3 Evaluating, Comparing, and Best Practice in Electronic Portfolio System Use

4.4 Essential Technology Features of E-portfolios

4.5 The Usability Evaluation of E-portfolios

4.6 Aim and Objectives

4.7 Methodology

4.8 Results

4.9 Discussion and Best Practice in E-portfolio Use

4.10 Limitation and Conclusion

4.11 The Cost-Reward Analysis of E-Portfolios: Study 1

Study 2

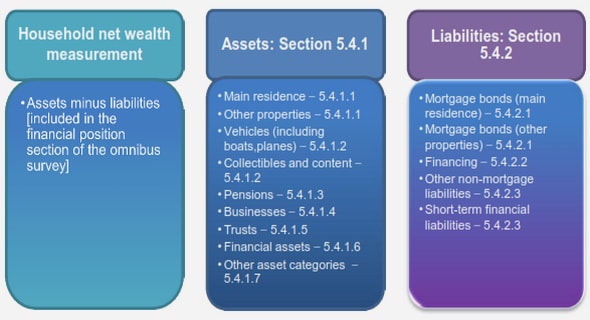

5.1 Study 2: Conceptual Model

5.2 Data-Analysis Plan

5.3 The GradDip in Teaching

5.4 Participants

5.5 Instruments

5.6 Descriptive Statistics: Training and Support Workshops Attended and Personalization of E-Portfolios

5.7 Validating the Perceived Benefits, and Importance and Usefulness of E-Portfolios

5.8 Factor Analysis and Invariance Testing

5.9 Summary

Study 3

6.1 Qualitative Data-Analysis Plan

6.2 Bachelor of Nursing (BNurs) Program and the Chalk & Wire System

6.3 Participants

6.4 Data Collection Procedures

6.5 Study 3 Interview Results

6.6 Cost-Reward Analysis and Recommendations

6.7 Summary

Discussion & Conclusion

7.1 Summary of Findings

7.2 Theoretical and Practical Implications

7.3 Limitations

7.4 Future Research Direction and Considerations

7.5 Conclusion

References

The Cost-Reward Analysis of Electronic Portfolios: Towards Best Practice and Improved Student Engagement