
The greek sensitivities

The Greek sensitivities are used in approach 3 and are what give the model the ability to estimate the option price for different shifts in the two risk factors. This is explained further in Section 2.4.2, where the theory behind Taylor polynomials is presented. The following section describes how these Greek sensitivities are estimated.

The sensitivities used in this thesis are delta, gamma and vega. Delta estimates how much the price of the option changes for a 1 SEK change in the price of the underlying asset. A delta of 0.65, for instance, means that the option price increases by approximately 0.65 SEK when the underlying asset price increases by 1 SEK. Depending on the payoff function of the option, delta can be either positive or negative. Delta is determined by how the payoff function is structured and by where the asset price is relative to the strike price. Gamma describes the change in delta for a one-point move in the underlying asset price and thereby defines the convexity of the option value relative to the underlying asset. Gamma is the second derivative of the value of an option with respect to the price of the underlying asset (Daníelsson 2011, 116-117).

Vega is, like delta, a first derivative, but with respect to volatility instead of the price of the underlying asset. Vega describes the change in the price of an option for a one percentage point change in implied volatility (Investopedia, 2018b).

There are two broad categories of methods for estimating the sensitivities: methods that involve at least two values of the parameter of differentiation and methods that do not. The first category is called finite-difference approximation. It is in theory easier to implement, which is why it is used in this thesis. The drawback of this method is that it produces biased estimates and therefore requires balancing bias against variance. The other category, which includes the pathwise method and the likelihood ratio method, can produce unbiased estimates when it is applicable, since it differentiates either each simulated outcome (pathwise) or the probability density (likelihood ratio) instead of taking differences.

Finite-Difference Approximation

Assume a model with a parameter $\theta$ ranging over some interval of the real line. Further, suppose that for each value of $\theta$ there is a process for generating a stochastic variable $Y(\theta)$, which is the output of the model at parameter $\theta$. Let $\alpha(\theta) = E[Y(\theta)]$.

The problem of estimating the derivative comes down to finding a way to estimate $\alpha'(\theta)$. In the case of option pricing, $Y(\theta)$ is the discounted payoff of the option, $\alpha(\theta)$ is the option's price, and $\theta$ is any underlying risk factor that could have an impact on the option's price. When $\theta$ is the initial price of an underlying asset, $\alpha'(\theta)$ is the option's delta. The gamma is obtained in the same way, but using the second order derivative of the option's price with respect to the initial price of the underlying asset, that is, $\alpha''(\theta)$. When the underlying risk factor is volatility, $\alpha'(\theta)$ is called vega.

The central-difference estimator is more computationally demanding in the sense that two additional points, $\theta + h$ and $\theta - h$, need to be simulated in order to estimate both $\alpha(\theta)$ and $\alpha'(\theta)$. The forward-difference estimator only requires one additional point, $\theta + h$.
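For reference, the two estimators compared above can be written out in their standard textbook form. This is a sketch under the assumption that $\theta$ is the parameter, $h > 0$ is the step size and $\bar{Y}_n(\theta)$ denotes the average of $n$ simulated replications of $Y(\theta)$; the symbols $\bar{Y}_n$, $\hat{\Delta}_F$ and $\hat{\Delta}_C$ are introduced here for illustration and do not come from the original text.

```latex
\hat{\Delta}_F = \frac{\bar{Y}_n(\theta + h) - \bar{Y}_n(\theta)}{h},
\qquad
\hat{\Delta}_C = \frac{\bar{Y}_n(\theta + h) - \bar{Y}_n(\theta - h)}{2h}.
```

If the expected value $E[Y(\theta)]$ is sufficiently smooth in $\theta$, a Taylor expansion shows that the bias of $\hat{\Delta}_F$ is of order $h$ while the bias of $\hat{\Delta}_C$ is of order $h^2$, which is why the extra simulation at $\theta - h$ can be worthwhile.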

Figure 2 illustrates the forward-difference estimator compared with the central-difference estimator in a simple example. Assume a Black-Scholes option with the following attributes: volatility 0.3, interest rate 5 percent, strike price 100 and 0.04 years (about two weeks) until expiration. The comparison is made against the tangent line at an underlying asset price of 95, using a forward difference from the prices at 95 and 100 and a central difference from the prices at 90 and 100. One can see that the slope of the central difference is clearly closer to the slope of the tangent line at 95 than the slope of the forward difference is.
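The comparison in this example can be reproduced numerically. The following is a minimal sketch, assuming that the option is a European call (the option type is not stated in the text) and using the closed-form Black-Scholes price instead of a simulation; the function names are hypothetical.

```python
import math


def norm_cdf(x):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call(spot, strike=100.0, rate=0.05, vol=0.3, maturity=0.04):
    """Black-Scholes price of a European call (call is an assumption)."""
    sd = vol * math.sqrt(maturity)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * maturity) / sd
    d2 = d1 - sd
    return spot * norm_cdf(d1) - strike * math.exp(-rate * maturity) * norm_cdf(d2)


def bs_call_delta(spot, strike=100.0, rate=0.05, vol=0.3, maturity=0.04):
    """Analytic delta, i.e. the slope of the tangent line at `spot`."""
    sd = vol * math.sqrt(maturity)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * maturity) / sd
    return norm_cdf(d1)


spot, h = 95.0, 5.0

# Forward difference uses the prices at 95 and 100.
forward_delta = (bs_call(spot + h) - bs_call(spot)) / h
# Central difference uses the prices at 90 and 100.
central_delta = (bs_call(spot + h) - bs_call(spot - h)) / (2.0 * h)
tangent_delta = bs_call_delta(spot)

print(f"tangent slope : {tangent_delta:.4f}")
print(f"forward diff  : {forward_delta:.4f}")
print(f"central diff  : {central_delta:.4f}")
```

With these inputs the central difference lands much closer to the tangent slope than the forward difference, in line with the figure.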

In deterministic finite-difference schemes the step size $h$ can be chosen very small, and round-off error is then the main limitation. In applications of Monte Carlo, the variability of the estimates prevents the user from choosing a very small $h$, since the variance of the difference quotient can grow as $h$ shrinks. The user should therefore choose a "sufficiently" large value of $h$ to keep the variance under control, while keeping $h$ small enough to limit the bias. When implementing the method it is advisable to be aware of possible round-off errors, but they are rarely the main obstacle to accurately estimating derivatives by simulation.

Taylor polynomials

When the Greek sensitivities delta, gamma and vega have been estimated, they can be used in a Taylor approximation to estimate the option price for shifts in the different risk factors. Delta and gamma are the first and second order derivatives of the option price with respect to the value of the underlying asset, and vega is the first order derivative with respect to volatility. In words, the new option price can be estimated with the following equation:

New option price = old option price + delta × (new stock price at time t+1 − starting stock price at time t) + 0.5 × gamma × (new stock price at time t+1 − starting stock price at time t)² + vega × (new volatility at time t+1 − starting volatility at time t).

The more theoretical explanation is that a Taylor polynomial is used to best describe the behaviour of a function around a specific point. For a function $f(x)$ about $x = a$, the first order Taylor polynomial is

$$P_1(x) = f(a) + f'(a)(x - a) \tag{21}$$

and this polynomial describes the behaviour of $f$ near $a$ better than any other polynomial of degree 1, since $P_1$ and $f$ have the same value and the same derivative at $a$: $P_1(a) = f(a)$ and $P_1'(a) = f'(a)$. Since it is a first order Taylor polynomial, the approximation is linear.

To better approximate $f(x)$, higher degree polynomials can be used as long as $f$ is differentiable to that degree. For a function $f$ that is twice differentiable near $a$, the polynomial

$$P_2(x) = f(a) + f'(a)(x - a) + \frac{f''(a)}{2}(x - a)^2 \tag{22}$$

satisfies $P_2(a) = f(a)$, $P_2'(a) = f'(a)$ and $P_2''(a) = f''(a)$, and approximates the behaviour of $f$ around $a$ better than any other polynomial of degree at most 2. For the general case where $f^{(n)}(x)$ exists, the polynomial is

$$P_n(x) = f(a) + f'(a)(x - a) + \frac{f''(a)}{2!}(x - a)^2 + \cdots + \frac{f^{(n)}(a)}{n!}(x - a)^n$$

and is the best approximation of $f$ around $x = a$. $P_n$ is the $n$th order Taylor polynomial for $f$ (Adams and Essex 2013, 271).
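The Taylor approximation of the option price described in this section can be sketched in a few lines. The example below is an illustration only: it assumes a European call priced with the closed-form Black-Scholes formula as a stand-in for the simulated fair value, estimates the sensitivities with central finite differences, and uses hypothetical function names and input values.

```python
import math


def norm_cdf(x):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call(spot, vol, strike=100.0, rate=0.05, maturity=1.0):
    """Black-Scholes call price, used here as a stand-in pricing function."""
    sd = vol * math.sqrt(maturity)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * maturity) / sd
    return spot * norm_cdf(d1) - strike * math.exp(-rate * maturity) * norm_cdf(d1 - sd)


def taylor_price(price0, delta, gamma, vega, d_spot, d_vol):
    """Second order in the asset price, first order in the volatility."""
    return price0 + delta * d_spot + 0.5 * gamma * d_spot ** 2 + vega * d_vol


spot0, vol0 = 100.0, 0.30   # starting point (assumed values)
h_s, h_v = 1.0, 0.01        # finite-difference step sizes (assumed values)

price0 = bs_call(spot0, vol0)
delta = (bs_call(spot0 + h_s, vol0) - bs_call(spot0 - h_s, vol0)) / (2 * h_s)
gamma = (bs_call(spot0 + h_s, vol0) - 2 * price0 + bs_call(spot0 - h_s, vol0)) / h_s ** 2
# Vega here is per unit of volatility (1.0 = 100 percentage points).
vega = (bs_call(spot0, vol0 + h_v) - bs_call(spot0, vol0 - h_v)) / (2 * h_v)

spot1, vol1 = 103.0, 0.31   # shifted risk factors
estimate = taylor_price(price0, delta, gamma, vega, spot1 - spot0, vol1 - vol0)
delta_only = price0 + delta * (spot1 - spot0)
exact = bs_call(spot1, vol1)

print(f"full reprice      : {exact:.4f}")
print(f"delta only        : {delta_only:.4f}")
print(f"delta-gamma-vega  : {estimate:.4f}")
```

For a shift of this size the delta-gamma-vega estimate stays close to the full reprice, while the delta-only estimate misses the contribution from convexity and volatility.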

Interpolation techniques

A few interpolation techniques will be tested. Interpolation is needed because it is expected to give a better approximation in the different approaches.

Suppose the value of a quantity $y$ is uniquely determined by the value of some other quantity $x$. Since the exact dependence $y = f(x)$ is unknown, there is an interest in finding it. To do so, the variables are measured in different situations. These measurements give the value $y = f(x)$ of the unknown function for several values $x_1, \ldots, x_n$. Based on these, a prediction of $f(x)$ is desired for all other values $x$. When $x$ lies between the lowest and the highest of the values $x_i$, $i = 1, \ldots, n$, this is called interpolation. When $x$ is lower than the lowest value or higher than the highest value, it is called extrapolation (Pownuk and Kreinovich, 2017).

Linear interpolation

One of the most common interpolation techniques is linear interpolation, which is based on the assumption that the function $f(x)$ is linear on the interval $[x_1, x_2]$. This leads to the following formula for $f(x)$:

$$f(x) = \frac{x - x_1}{x_2 - x_1} f(x_2) + \frac{x_2 - x}{x_2 - x_1} f(x_1). \tag{24}$$

The main argument for using this type of interpolation is its simplicity, and in many practical situations linear interpolation works quite well. Linear functions are also the easiest functions to compute. In computational science, not to mention in finance, however, very complex computations are often necessary, and the functions involved are then not linear (Pownuk and Kreinovich, 2017).
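The linear interpolation formula of this section translates directly into code. The sketch below is illustrative; the function name and the sample values are hypothetical.

```python
def linear_interpolate(x, x1, x2, f1, f2):
    """Linear interpolation between (x1, f1) and (x2, f2), with x1 != x2."""
    w2 = (x - x1) / (x2 - x1)   # weight on f(x2)
    w1 = (x2 - x) / (x2 - x1)   # weight on f(x1)
    return w2 * f2 + w1 * f1


# Midway between two known points the result is their average.
midpoint_value = linear_interpolate(1.5, 1.0, 2.0, 10.0, 20.0)
print(midpoint_value)
```

The two weights always sum to one, so the interpolated value reproduces the known values at $x_1$ and $x_2$.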

Piecewise Cubic Hermite interpolation

Many of the most effective interpolation techniques are based on piecewise cubic polynomials. Hermite interpolation uses the values of the function and of its first derivative at the nodes, that is, at the points being interpolated. The interpolating functions are local cubics, hence the name Piecewise Cubic Hermite interpolation.

Let $P(x)$ be the interpolant, with $P(x_k) = y_k$ for $k = 1, \ldots, n$.

Let $h_k := x_{k+1} - x_k$ be the length of the $k$th subinterval.

Then the slope over the $k$th subinterval is

$$\delta_k = \frac{y_{k+1} - y_k}{h_k}.$$

Let $d_k := P'(x_k)$.

Note: If the interpolant $P(x)$ is piecewise linear, then $d_k$ is not defined, since the slope is $\delta_{k-1}$ to the left of $x_k$ but $\delta_k$ to the right of $x_k$, and in general $\delta_{k-1} \neq \delta_k$.

For higher-order interpolants, cubics for instance, it is possible to force the interpolant to be smooth at the points $x_k$, which are sometimes referred to as breakpoints. Smoothness is obtained by forcing the derivative at the end of one cubic piece to match the derivative at the start of the next.
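To make the construction concrete, the sketch below evaluates a single cubic Hermite piece on one subinterval from the two function values and the two slopes at its end points. It is an illustration under stated assumptions: the function name is hypothetical, and the rule for choosing the slopes at the breakpoints is deliberately left out, so the slopes are simply passed in.

```python
def hermite_cubic(x, x_k, x_k1, y_k, y_k1, d_k, d_k1):
    """Cubic on [x_k, x_k1] matching values y_k, y_k1 and slopes d_k, d_k1."""
    h = x_k1 - x_k          # length of the subinterval
    s = x - x_k             # local coordinate inside the subinterval
    return (
        (3 * h * s ** 2 - 2 * s ** 3) / h ** 3 * y_k1
        + (h ** 3 - 3 * h * s ** 2 + 2 * s ** 3) / h ** 3 * y_k
        + s ** 2 * (s - h) / h ** 2 * d_k1
        + s * (s - h) ** 2 / h ** 2 * d_k
    )


# Check on f(x) = x^3 over [1, 2]: values 1 and 8, slopes 3 and 12.
# A cubic is reproduced exactly when the exact slopes are supplied.
value = hermite_cubic(1.5, 1.0, 2.0, 1.0, 8.0, 3.0, 12.0)
print(value)  # 1.5 ** 3 = 3.375
```

Because neighbouring pieces share the same slope at a common breakpoint, the pieces join with a continuous first derivative, which is the smoothness property described above.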

The Black-Scholes model

This famous model is described briefly to support the theory behind the estimation of volatility discussed in Section 2.7.

As a special case, consider a financial market consisting of only two assets. The first asset is a stock with price process $S$ and the second is a so-called risk free asset with price process $B$. Assume that the market has the following dynamics:

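$$\begin{aligned} dB(t) &= rB(t)\,dt, \\ dS(t) &= S(t)\,\alpha(t, S(t))\,dt + S(t)\,\sigma(t, S(t))\,dW(t), \end{aligned} \tag{26}$$

where $r$ is a deterministic constant, $\alpha$ and $\sigma$ are given deterministic functions and $W$ is a Wiener process.

Specializing the dynamics above to the case where $\alpha$ and $\sigma$ are also deterministic constants gives the Black-Scholes model,

$$\begin{aligned} dB(t) &= rB(t)\,dt, \\ dS(t) &= \alpha S(t)\,dt + \sigma S(t)\,dW(t). \end{aligned} \tag{27}$$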

The reader is referred to Björk (1998, 76-90) for all details and a deeper understanding.
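As an illustration of the stock dynamics in the Black-Scholes model, the sketch below draws terminal stock prices using the well-known closed-form solution $S(T) = S(0)\exp\big((\alpha - \sigma^2/2)T + \sigma W(T)\big)$ for constant $\alpha$ and $\sigma$. The function name, the parameter values and the number of paths are assumptions chosen for the example.

```python
import math
import random


def simulate_terminal_prices(s0, alpha, sigma, horizon, n_paths, seed=0):
    """Sample S(T) for constant drift alpha and volatility sigma."""
    rng = random.Random(seed)                       # fixed seed for reproducibility
    drift = (alpha - 0.5 * sigma ** 2) * horizon
    diffusion = sigma * math.sqrt(horizon)          # W(T) ~ N(0, T)
    return [
        s0 * math.exp(drift + diffusion * rng.gauss(0.0, 1.0))
        for _ in range(n_paths)
    ]


prices = simulate_terminal_prices(s0=100.0, alpha=0.05, sigma=0.3,
                                  horizon=1.0, n_paths=100_000)
sample_mean = sum(prices) / len(prices)
theoretical_mean = 100.0 * math.exp(0.05 * 1.0)     # E[S(T)] = S(0) e^{alpha T}

print(f"sample mean      : {sample_mean:.2f}")
print(f"theoretical mean : {theoretical_mean:.2f}")
```

The sample mean should approach $S(0)e^{\alpha T}$ as the number of paths grows, which gives a quick sanity check of the simulation.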

Historical volatility

Volatility is one of the most significant parameters when pricing an option, but also one of the hardest to estimate. The historical volatility is commonly used as an indication of what the volatility of the assets is. Volatility is described as a statistical measure of the dispersion of returns for an asset or market index. It can be measured by using either the standard deviation or the variance of the returns from that asset or market index. Riskier assets are generally associated with higher volatility. In the stock market, volatility is often associated with big swings in either direction; the market is called volatile if it rises and falls by more than one percent over a sustained period of time. The Volatility Index (VIX) expresses the market volatility. VIX was created to measure the 30-day expected volatility of the U.S. stock market, derived from prices of S&P 500 call and put options. What many investors do not know when buying options is that they pay more for the option if the implied volatility is higher (Investopedia, 2018c).

As an example, assume that a quantitative investor wants to value a plain vanilla European call with one year to expiration. The volatility is not constant over time and the future volatility is unknown, so an approximation is needed. By using historical volatility, for instance from the last two years, the investor can begin to value the option. It is common that the lifetime of the option and the calibration length are the same; in this case the calibration length of the volatility is two years of historical asset prices.
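A common way to compute the historical volatility described above is the annualized standard deviation of log returns. The sketch below assumes daily closing prices and 252 trading days per year; the function name, the annualization factor and the price series are hypothetical and only serve the illustration.

```python
import math
import statistics


def historical_volatility(prices, periods_per_year=252):
    """Annualized sample standard deviation of log returns."""
    log_returns = [math.log(p1 / p0) for p0, p1 in zip(prices, prices[1:])]
    return statistics.stdev(log_returns) * math.sqrt(periods_per_year)


# Made-up closing prices, not market data.
closing_prices = [100.0, 110.0, 100.0, 110.0, 100.0]
annual_vol = historical_volatility(closing_prices)
print(f"annualized volatility: {annual_vol:.4f}")
```

The length of the price series passed to the function plays the role of the calibration length discussed above.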

Risk free interest rate

The risk free interest rate, also called the risk free rate of return, is the return on an investment with no risk. No risk means that there is zero chance of default and that the investor is guaranteed to get their money back plus a small return, the risk free return. Since no asset is truly free of risk, the risk free interest rate does not exist in practice. It can only be approximated, and to do that the return on investments with very low risk is used as a benchmark. The three-month U.S. Treasury bill, for example, is often used by US-based investors because the probability that the US will default is practically zero.

This return or interest rate is then, in theory, the absolute minimum return an investor expects from an investment. If the expected return were lower, the investor would be accepting a higher risk for the same or a lower expected return, which no rational investor would be willing to do. This rate can be used to discount cash flows in risk neutral environments. It is also used in models like the Black-Scholes model for pricing European options (Investopedia, 2018d).

Method and model implementation

Methods

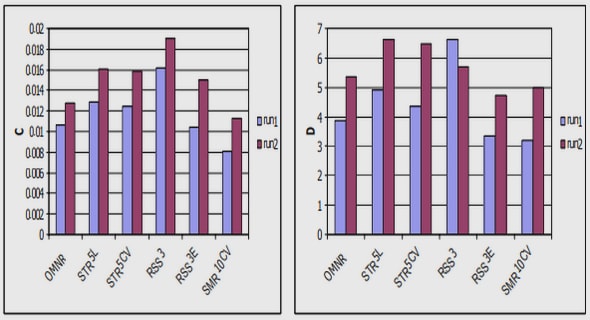

To reduce the computing time, approaches 1-4 discussed in this chapter simulate the stocks in the basket as an index. This means that instead of creating an individual path or future estimated value for each asset, the assets are grouped together. By estimating the parameters needed for a Monte Carlo simulation for the entire group as one, the future index value can be predicted. Approach 5 (Swedbank's own method) instead estimates a future path or value with individual Monte Carlo paths. By simulating individually we mean that each individual index component (stock) has its own path, with an assumed correlation between the components in the Monte Carlo setup. Simulating our methods as an index greatly reduces computing time while still generating reliable results. The potential side effects of this will be discussed later in this thesis. Approaches 3 and 4 also benefit from a grid based solution with multiple pre-evaluated points scattered over the estimation interval. For approach 4 these points only include a fair option price estimated with the Monte Carlo method described in Section 2.3. The points in approach 3, on the other hand, include both a fair value option price and the Greek sensitivities described in Section 2.4. Approach 5, in contrast, has only one point with a pre-evaluated fair value option price and the Greek sensitivities, meaning that approach 5 has a setup similar to approach 3 but without a "grid effect".

Figure 3: A simple overview of the key aspects of each approach. Swedbank's own model is referred to as approach 5 in this thesis.

To evaluate the results from the different methods, a fair value grid will be created using Monte Carlo simulations, and its points will be referred to as reference points. The grid is used as an answer key: its values are considered the true option values, which the methods try to estimate.

In the following sections, where we discuss the methods, points where we have estimated a fair value option price will be referred to as price points. Points where we have both a fair value option price and estimated sensitivities will be referred to as simulated values.

The underlying assets are ten American stocks. The portfolio is equally weighted and the return depends on the share development, the exchange rate between the US dollar and SEK, and the participation rate, which determines the leverage. Leverage means that the final payoff is calculated using Equation (5) and multiplied by the participation rate. The underlying assets of the basket are listed below.

1. Kellogg Co (K UN Equity)

2. Kimberly-Clark Corp (KMB UN Equity)

3. Coca-Cola Co/The (KO UN Equity)

4. McDonald's Corp (MCD UN Equity)

5. Pepsico Inc (PEP US Equity)

6. Procter & Gamble Co/The (PG UN Equity)

7. Southern Co/The (SO UN Equity)

8. AT&T Inc (T UN Equity)

9. United Parcel Service-Cl B (UPS UN Equity)

10. Verizon Communications Inc (VZ UN Equity)

Approach 1-3

Approach 1 uses the Greek sensitivity delta and Equation (21), the first order Taylor expansion, to estimate a new option price when the value of the underlying assets changes. A delta grid is implemented in approach 1b by simulating the option price and delta for different shifts in the value of the underlying assets. These simulated values are then used to estimate the new price when there is a shift in the value of the underlying assets, by interpolating between the simulated values from the two closest points. The method is illustrated in Figure 4, where the two straight lines are the delta approximations (approach 1) from the two simulated values and the third, curved line is the fair value of the option for different values of the underlying assets. Without a grid the estimated price would be the value at the red point, but because the grid solution weights the two simulated values, we obtain the value at the green point.
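The grid idea of approach 1b can be sketched as follows. The text does not specify how the two simulated values are weighted, so the example assumes linear weights based on the distance to each grid point; the function names and the numbers are hypothetical and chosen only to make the arithmetic easy to follow.

```python
def delta_estimate(x, x_grid, price, delta):
    """First order Taylor estimate from one simulated value (approach 1)."""
    return price + delta * (x - x_grid)


def grid_delta_estimate(x, left, right):
    """Blend the delta estimates from the two closest grid points.

    `left` and `right` are (x_grid, price, delta) tuples with
    left[0] <= x <= right[0]. Linear distance weights are an assumption.
    """
    x_left, x_right = left[0], right[0]
    w_right = (x - x_left) / (x_right - x_left)
    w_left = 1.0 - w_right
    return w_left * delta_estimate(x, *left) + w_right * delta_estimate(x, *right)


# Two simulated values: (asset value, option price, delta), made-up numbers.
left_point = (1.0, 1.0, 2.0)
right_point = (2.0, 4.0, 4.0)

blended = grid_delta_estimate(1.25, left_point, right_point)
print(blended)  # 0.75 * 1.5 + 0.25 * 1.0 = 1.375
```

At a grid point the full weight falls on that point, so the blended estimate reproduces the simulated option price there.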

Table of contents :

1 Introduction

1.1 Description of problem

1.2 Swedbank

1.3 Background of problem

1.4 Goal

1.5 Purpose

1.6 Limitations

1.7 Approach and Outline

2 Theory

2.1 Options

2.2 Brownian Motion

2.2.1 One dimension

2.2.2 Random Walk Construction

2.2.3 Geometric Brownian motion

2.3 Monte Carlo Simulation

2.3.1 Law of large numbers

2.4 The greek sensitivities

2.4.1 Finite-Difference Approximation

2.4.2 Taylor polynomials

2.5 Interpolation techniques

2.5.1 Linear interpolation

2.5.2 Piecewise Cubic Hermite interpolation

2.6 The Black-Scholes model

2.7 Historical volatility

2.8 Risk free interest rate

3 Method and model implementation

3.1 Methods

3.1.1 Approach 1-3

3.1.2 Approach 4

3.1.3 Approach 5 – Swedbank’s internal estimation model

3.2 Model implementations

3.2.1 Evaluation method

3.2.2 Simulating the Greek sensitivities

3.2.3 Delta-Gamma-Vega Grid – Approach 3

3.2.4 Price Interpolation Grid – Approach 4

3.2.5 Reference points

4 Results

4.1 Approach 3

4.2 Approach 4

4.3 Approach 5

4.4 Computational complexity

5 Conclusion

6 Discussion

6.1 Further development

7 References

7.1 Books

7.2 Articles

7.3 Web pages

8 Appendices

8.1 Reference points

8.2 Relative shifts used in Approach 3

8.3 Relative shifts used in Approach 4

8.4 Relative shifts used in Approach 5

8.5 Historical volatility

8.6 Evaluated option

8.7 Approaches versus reference point