Table of contents

1 Introduction

1.1 Motivations

1.2 Objectives

1.3 Overview of the Thesis

2 State of the Art

2.1 Feature extraction

2.1.1 Short-term features

2.1.2 Dynamic features

2.1.3 Prosodic features

2.2 Modeling

2.2.1 Gaussian Mixture Models (GMM)

2.2.2 Hidden Markov Models (HMM)

2.2.3 Neural networks

2.2.3.1 Multilayer Perceptron (MLP)

2.2.3.2 Convolutional Neural Network (CNN)

2.2.3.3 Recurrent Neural Network (RNN)

2.2.3.4 Encoder-decoder

2.2.3.5 Loss function and optimization

2.2.4 Speaker Modeling

2.2.4.1 Probabilistic speaker model

2.2.4.2 Neural network based speaker model

2.3 Voice Activity Detection (VAD)

2.3.1 Rule-based approaches

2.3.2 Model-based approaches

2.4 Speaker change detection (SCD)

2.5 Clustering

2.5.1 Offline clustering

2.5.1.1 Hierarchical clustering

2.5.1.2 K-means

2.5.1.3 Spectral clustering

2.5.1.4 Affinity Propagation (AP)

2.5.2 Online clustering

2.6 Re-segmentation

2.7 Datasets

2.7.1 REPERE & ETAPE

2.7.2 CALLHOME

2.8 Evaluation metrics

2.8.1 VAD

2.8.2 SCD

2.8.2.1 Recall and precision

2.8.2.2 Coverage and purity

2.8.3 Clustering

2.8.3.1 Confusion

2.8.3.2 Coverage and purity

2.8.4 Diarization error rate (DER)

3 Neural Segmentation

3.1 Introduction

3.2 Definition

3.3 Voice activity detection (VAD)

3.3.1 Training on sub-sequence

3.3.2 Prediction

3.3.3 Implementation details

3.3.4 Results and discussion

3.4 Speaker change detection (SCD)

3.4.1 Class imbalance

3.4.2 Prediction

3.4.3 Implementation details

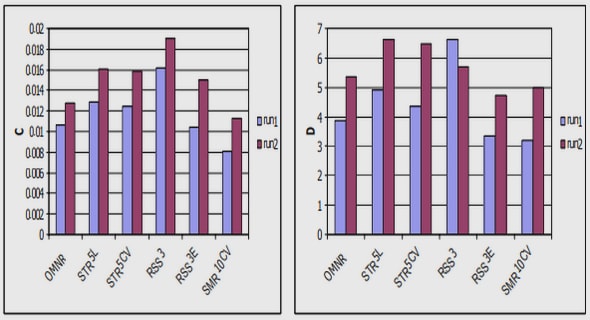

3.4.4 Experimental results

3.4.5 Discussion

3.4.5.1 Do we need to detect all speaker change points?

3.4.5.2 Fixing class imbalance

3.4.5.3 "The Unreasonable Effectiveness of LSTMs"

3.5 Re-segmentation

3.5.1 Implementation details

3.5.2 Results

3.6 Conclusion

4 Clustering Speaker Embeddings

4.1 Introduction

4.2 Speaker embedding

4.2.1 Speaker embedding systems

4.2.2 Embeddings for fixed-length segments

4.2.3 Embedding system with speaker change detection

4.2.4 Embedding system for experiments

4.3 Clustering by affinity propagation

4.3.1 Implementation details

4.3.2 Results and discussions

4.3.3 Discussions

4.4 Improved similarity matrix

4.4.1 Bi-LSTM similarity measurement

4.4.2 Implementation details

4.4.2.1 Initial segmentation

4.4.2.2 Embedding systems

4.4.2.3 Network architecture

4.4.2.4 Spectral clustering

4.4.2.5 Baseline

4.4.2.6 Dataset

4.4.3 Evaluation metrics

4.4.4 Training and testing process

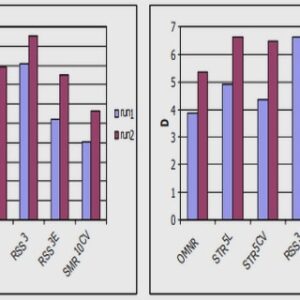

4.4.5 Results

4.4.6 Discussions

4.5 Conclusion

5 End-to-End Sequential Clustering

5.1 Introduction

5.2 Hyper-parameters optimization

5.2.1 Hyper-parameters

5.2.2 Separate vs. joint optimization

5.2.3 Results

5.2.4 Analysis

5.3 Neural sequential clustering

5.3.1 Motivations

5.3.2 Principle

5.3.3 Loss function

5.3.4 Model architectures

5.3.4.1 Stacked RNNs

5.3.4.2 Encoder-decoder

5.3.5 Simulated data

5.3.5.1 Label generation (y)

5.3.5.2 Embedding generation (x)

5.3.6 Baselines

5.3.7 Implementation details

5.3.7.1 Data

5.3.7.2 Stacked RNNs

5.3.7.3 Encoder-decoder architecture

5.3.7.4 Training and testing

5.3.7.5 Hyper-parameters tuning for baselines

5.3.8 Results

5.3.9 Discussions

5.3.9.1 What does the encoder do?

5.3.9.2 Neural sequential clustering on long sequences

5.3.9.3 Sequential clustering with stacked unidirectional RNNs

5.4 Conclusion

6 Conclusions and Perspectives

6.1 Conclusions

6.2 Perspectives

6.2.1 Sequential clustering in real diarization scenarios

6.2.2 Overlapped speech detection

6.2.3 Online diarization system

6.2.4 End-to-end diarization system

References