
Chapter 2 Literature review

2.1 Introduction

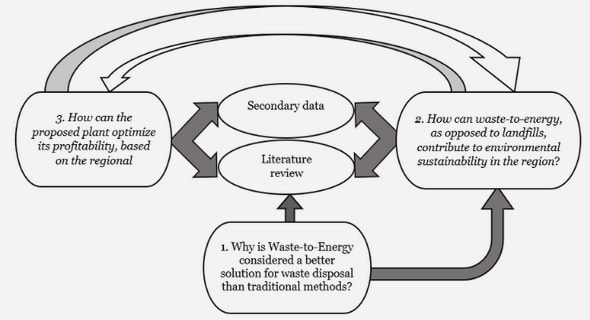

This study attempts to find out what kinds of MCQs are difficult for Linguistics students at the University of South Africa (Unisa), focusing on how the language of test questions affects readability, difficulty and student performance for L2 English students in comparison to L1 students. Much, if not all, of the literature reviewed in this chapter is underpinned by an interest in the difficulty of test questions, particularly in cases where students are linguistically diverse. The literature that relates to the notion of MCQ difficulty is interdisciplinary, coming amongst others from education and specific disciplines within education, from applied linguistics, document design, psychology and psychometrics. By way of contextualisation, section 2.2 offers a discussion of academic discourse and reading in a second language, with a focus on identified lexical and grammatical features that contribute to text difficulty. The issue of whether lexical and grammatical aspects of text can be used to predict text difficulty informs the literature reviewed in section 2.3 on readability formulas. Section 2.4 focuses more narrowly on the language of multiple-choice questions. This section includes a discussion of empirical research on item-writing guidelines, trick questions and Grice’s cooperative principle in relation to MCQ item-writing, as all of these offer principles that can be followed to help minimise the linguistic difficulty of MCQs. Section 2.5 provides a discussion of relevant literature on the statistical analysis of MCQ results, including the difficulty of items for students and subgroups of students. Section 2.6 addresses the issue of MCQ difficulty from the point of view of research on the cognitive levels that can be tested using MCQs.

2.2 Academic texts and L2 readers

In order to provide some background context to the linguistic characteristics of MCQs, a brief description is given here of some research findings relating to the language of academic discourse and of how L2 students cope with reading and making sense of MCQs. Particular attention will be paid to vocabulary and syntactic constructions that have been shown by other research to be ‘difficult’ and which may therefore contribute to the difficulty of questions in my own study.

2.2.1 Linguistic characteristics of academic text

Analysis of the linguistic characteristics of academic text shows that there are particular constellations of lexical and grammatical features that typify academic language, whether it takes the form of a research article, a student essay or the earliest preschool efforts to ‘show-and-tell’ (Schleppegrell 2001:432). The prevalence of these linguistic features, Schleppegrell argues, is due to the overarching purpose of academic discourse, which is to define, describe, explain and argue in an explicit and efficient way, presenting oneself as a knowledgeable expert providing objective information, justification, concrete evidence and examples (Schleppegrell 2001:441). Biber (1992) agrees that groups of features that co-occur frequently in texts of a certain type can be assumed to reflect a shared discourse function.

At the lexical level, this discourse purpose of academic writing is achieved by using a diverse, precise and sophisticated vocabulary. This includes the subject-specific terminology that characterises different disciplines as well as general academic terms like analyse, approach, contrast, method and phenomenon, which may not be particularly common in everyday language (Xue & Nation 1984). The diversity and precision of academic vocabulary is reflected in relatively longer words and a higher type-token ratio than for other text types (Biber 1992). (Type-token ratio reflects the number of different words in a text relative to its total length: a 100-word text has 100 tokens, but many of these words will be repeated, so there may be only, say, 40 different words (‘types’) in the text. The ratio of types to tokens in this example would then be 40%.)
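The type-token calculation described above can be illustrated with a minimal Python sketch. The function name `type_token_ratio`, the lower-casing and the simple regular-expression tokeniser are assumptions made for illustration only; the studies cited here may tokenise and normalise text differently.

```python
import re


def type_token_ratio(text: str) -> float:
    """Return the number of distinct word forms (types) divided by
    the number of running words (tokens) in the text."""
    # Assumption: a token is any run of letters, compared case-insensitively.
    tokens = re.findall(r"[a-z]+", text.lower())
    if not tokens:
        return 0.0
    return len(set(tokens)) / len(tokens)


# Hypothetical sample sentence: 13 tokens, 8 of which are distinct types.
sample = "the cat sat on the mat and the dog sat on the log"
print(f"{type_token_ratio(sample):.0%}")
```

Because repetition tends to increase as a text gets longer, ratios computed this way are only comparable between texts of similar length.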

Academic text is also often lexically dense compared to spoken language, with a high ratio of lexical items to grammatical items (Halliday 1989, Harrison & Bakker 1998). This implies that it has a high level of information content for a given number of words (Biber 1988, Martin 1989, Swales 1990, Halliday 1994). Academic language also tends to use nouns, compound nouns and long subject phrases (like analysis of the linguistic characteristics of academic text) rather than subject pronouns (Schleppegrell 2001:441). Nominalisations (nouns derived from verbs and other parts of speech) are common in academic discourse, e.g. interpretation from interpret or terminology from term. These allow writers to encapsulate already presented information in a compact form (Martin 1991, Terblanche 2009). For example, the phrase This interpretation can be used to refer to an entire paragraph of preceding explanation.

Coxhead (2000) analysed a 3,5 million-word corpus of written academic texts in arts, commerce, law and science in an attempt to identify the core academic vocabulary that appeared in all four of these disciplines. After excluding the 2000 most common word families on the General Service List (West 1953), which made up about three-quarters of the academic corpus, Coxhead (2000) identified terms that occurred at least 100 times in the corpus as a whole. This enabled her to compile a 570-item Academic Word List (AWL) listing recurring academic lexemes (‘word families’) that are essential to comprehension at university level (Xue & Nation 1984, Cooper 1995, Hubbard 1996). For example, the AWL includes the word legislation and the rest of its family: legislated, legislates, legislating, legislative, legislator, legislators and legislature (Coxhead 2000:218). This division of the list into word families is supported by evidence suggesting that word families are an important unit in the mental lexicon (Nagy et al. 1989) and that comprehending regularly inflected or derived members of a word family does not require much more effort by learners if they know the base word and if they have control of basic word-building processes (Bauer & Nation 1993:253). The words in the AWL are divided into ten sublists according to frequency, ranging from the most frequent academic words (e.g. area, environment, research and vary) in Sublist 1, to less frequent words (e.g. adjacent, notwithstanding, forthcoming and integrity) in Sublist 10. Each level includes all the previous levels.

The AWL has been used primarily as a vocabulary teaching tool, with students (particularly students learning English as a foreign language) being encouraged to consciously learn these terms to improve their understanding and use of essential academic vocabulary (e.g. Schmitt & Schmitt 2005, Wells 2007). Researchers have also used the AWL to count the percentage of AWL words in various discipline-specific corpora, for example in corpora of medical (Chen & Ge 2007), engineering (Mudraya 2006) or applied linguistics texts (Vongpumivitch, Huang & Chang 2009). These studies show that AWL words tend to make up approximately 10% of running words in academic text regardless of the discipline. Other high frequency non-everyday words in discipline-specific corpora can then be identified as being specific to the discipline, rather than as general academic words. However, there is some debate (see e.g. Hyland & Tse 2007) as to whether it is useful for L2 students to spend time familiarising themselves with the entire AWL, given that some of these words are restricted to particular disciplines and unlikely to be encountered in others, and may be used in completely different ways in different disciplines (Hyland & Tse 2007:236). For example, the word analysis is used in very discipline-specific ways in chemistry and English literature (Hyland & Tse 2007).
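The kind of coverage count reported in these corpus studies (the percentage of running words that belong to AWL word families) can be sketched in Python as follows. This is an illustrative sketch only, not the procedure of any study cited above: the dictionary `AWL_FAMILIES` is a hypothetical, drastically shortened stand-in for the 570-family list, the function name `awl_coverage` is invented for the example, and the tokeniser is a simplifying assumption. Only the legislation family is taken from the text (Coxhead 2000:218).

```python
import re

# Hypothetical stand-in for the AWL, mapping each headword to the members
# of its word family. The "legislation" family follows Coxhead (2000:218);
# the other two entries are deliberately incomplete placeholders.
AWL_FAMILIES = {
    "legislation": {
        "legislation", "legislated", "legislates", "legislating",
        "legislative", "legislator", "legislators", "legislature",
    },
    "research": {"research"},
    "analysis": {"analysis"},
}


def awl_coverage(text: str, families: dict[str, set[str]]) -> float:
    """Return the percentage of running words that belong to any listed family."""
    # Flatten all family members into one lookup set of word forms.
    awl_forms = set().union(*families.values())
    # Assumption: a token is any run of letters, compared case-insensitively.
    tokens = re.findall(r"[a-z]+", text.lower())
    if not tokens:
        return 0.0
    hits = sum(1 for token in tokens if token in awl_forms)
    return 100.0 * hits / len(tokens)


# Hypothetical sample: 10 running words, 4 of them in the listed families.
sample = "The legislature passed new legislation after research by two legislators"
print(f"{awl_coverage(sample, AWL_FAMILIES):.1f}% of running words are AWL words")
```

Matching on whole word families rather than on headwords alone means that inflected and derived forms such as legislators are counted together with legislation.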

List of Tables

List of Figures

Chapter 1 Multiple-choice assessment for first-language and second-language students

1.1 Introduction

1.2 The focus of enquiry

1.2.1 Assessment fairness and validity in the South African university context

1.2.1.1 What makes a test ‘fair’?

1.2.2 Multiple-choice assessment

1.2.2.1 Setting MCQs

1.2.2.2 What makes an MCQ difficult?

1.2.2.3 Multiple-choice assessment and L2 students

1.3 Aims of the study

1.4 Overview of methodological framework

1.4.1 Quantitative aspects of the research design

1.4.2 Qualitative aspects of the research design

1.4.3 Participants

1.4.4 Ethical considerations

1.5 Structure of the thesis

Chapter 2 Literature review

2.1 Introduction

2.2 Academic texts and L2 readers

2.2.1 Linguistic characteristics of academic text

2.2.2 L2 students’ comprehension of academic text

2.3 Readability and readability formulas

2.3.1 Readability formulas

2.3.1.1 The new Dale-Chall readability formula (1995)

2.3.1.2 Harrison and Bakker’s readability formula (1998)

2.3.2 Criticisms of readability formulas

2.4 The language of multiple-choice questions

2.4.1 Empirical research on item-writing guidelines

2.4.2 Trick questions

2.4.3 MCQs and L2 students

2.4.4 MCQ guidelines and Grice’s cooperative principle

2.4.5 Readability formulas for MCQs

2.5 Post-test statistical analysis of MCQs

2.5.1.1 Facility (p-value)

2.5.1.2 Discrimination

2.5.1.3 Statistical measures of test fairness

2.6 MCQs and levels of cognitive difficulty

2.7 Conclusion

Chapter 3 Research design and research methods

3.1 Introduction

3.2 The research aims

3.3 The research design

3.3.1 Quantitative aspects of the research design

3.3.2 Qualitative aspects of the research design

3.3.3 Internal and external validity

3.4 The theoretical framework

3.5 Research data

3.5.1 The course

3.5.2 The MCQs

3.5.3 The students

3.6 The choice of research methods

3.6.1 Quantitative research methods

3.6.1.1 Item analysis

3.6.1.2 MCQ writing guidelines

3.6.1.3 Readability and vocabulary load

3.6.1.4 Cognitive complexity

3.6.2 Qualitative research procedures: Interviews

3.6.2.1 Think-aloud methodology

3.6.2.2 Think-aloud procedures used in the study

3.7 Conclusion

Chapter 4 Quantitative results

4.1 Introduction

4.2 Item classification

4.3 Item quality statistics

4.3.1 Discrimination

4.3.2 Facility

4.3.3 L1 – L2 difficulty differential

4.3.4 Summary

4.4 Readability

4.4.1 Sentence length

4.4.2 Unfamiliar words

4.4.3 Academic words

4.4.4 Readability scores

4.5 Item quality statistics relating to MCQ guidelines

4.5.1 Questions versus incomplete statement stems

4.5.2 Long items

4.5.3 Negative items

4.5.4 Similar answer choices

4.5.5 AOTA

4.5.6 NOTA

4.5.7 Grammatically non-parallel options

4.5.8 Context-dependent text-comprehension items

4.5.9 Summary

4.6 Cognitive measures of predicted difficulty

4.6.1 Inter-rater reliability

4.6.2 Distribution of the various Bloom levels

4.6.3 Bloom levels and item quality statistics

4.6.4 Combined effects of Bloom levels and readability

4.7 Linguistic characteristics of the most difficult questions

4.7.1 Low facility questions

4.7.2 High difficulty differential questions

4.8 Conclusion

Chapter 5 Qualitative results

5.1 Introduction

5.2 Method

5.2.1 Compilation of the sample

5.3 Student profiles

5.3.1 English L1 students interviewed

5.3.2 L2 students interviewed

5.3.3 Assessment records

5.4 Student opinions of MCQ assessment

5.5 MCQ-answering strategies

5.6 Observed versus reported difficulties in the MCQs

5.7 Difficulties relating to readability

5.7.1 Misread questions

5.7.2 Unfamiliar words

5.7.3 Lack of clarity

5.7.4 Slow answering times

5.7.5 Interest

5.8 Difficulties related to other MCQ-guideline violations

5.8.1 Long items

5.8.2 Negative stems

5.8.3 Similar answer choices

5.8.4 AOTA

5.9 Other issues contributing to difficulty of questions

5.10 Conclusion

Chapter 6 Conclusions and recommendations

6.1 Introduction

6.2 Revisiting the context and aims of the study

6.3 Contributions of the study

6.4 Limitations of the study and suggestions for further research

6.5 Revisiting the research questions, triangulated findings and conclusions

6.5.1 Which kinds of multiple-choice questions (MCQs) are ‘difficult’?

6.5.2 What kinds of MCQ items present particular problems for L2 speakers?

6.5.3 What contribution do linguistic factors make to these difficulties?

6.6 Applied implications of the study for MCQ design and assessment

6.7 In conclusion

References

Appendices

Appendix A LIN103Y MCQ examination 2006

Appendix B LIN103Y MCQ examination 2007

Appendix C Consent form for think-aloud interview

Appendix D Think-aloud questionnaire


MULTIPLE-CHOICE QUESTIONS: A LINGUISTIC INVESTIGATION OF DIFFICULTY FOR FIRST-LANGUAGE AND SECOND-LANGUAGE STUDENTS