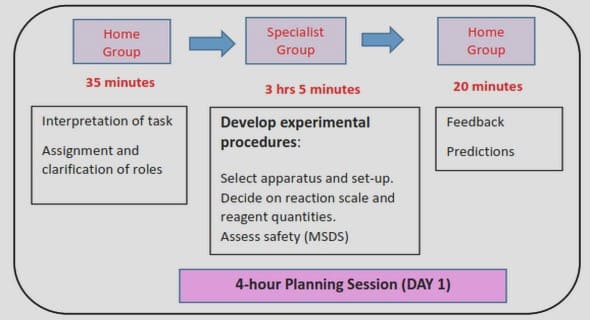

A modular architecture

Automatic Speech Recognition

The ASR module processes the speech signal and produces interpretation hypotheses: the most probable sentences the user could have said. Each hypothesis is associated with a score that we call the Speech Recognition Score (SRS). As recalled in the introduction, state-of-the-art ASR includes neural network solutions such as Connectionist Temporal Classification (S. Kim et al. 2018), RNNs (Graves et al. 2014) and LSTMs (J. Kim et al. 2017). They can also be combined with language modelling (Chorowski et al. 2017; Lee et al. 2018).
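As an illustration only, the output of the ASR module can be pictured as a list of scored hypotheses. The sketch below assumes the SRS is a number in [0, 1] and that the downstream module simply keeps the best-scored hypothesis; the class name, the function name and the scores are hypothetical and do not come from the thesis.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AsrHypothesis:
    text: str   # candidate transcription of the user utterance
    srs: float  # Speech Recognition Score (assumed here to lie in [0, 1])


def best_hypothesis(n_best):
    """Return the hypothesis with the highest SRS."""
    return max(n_best, key=lambda h: h.srs)


# Made-up n-best list for a single user utterance.
n_best = [
    AsrHypothesis("i want to go to paris", 0.82),
    AsrHypothesis("i want to go to paras", 0.11),
    AsrHypothesis("i won't go to paris", 0.07),
]
top = best_hypothesis(n_best)
```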

Natural Language Understanding

Given those hypotheses, the NLU module extracts meaning and builds a semantic frame corresponding to the last user utterance, sometimes paired with a confidence score. Classic approaches parse the user utterance into predefined handcrafted semantic slots (H. Chen et al. 2017). Recent solutions fall into two families. The first uses NNs (Deng et al. 2012; Tür et al. 2012; Yann et al. 2014) or even Convolutional Neural Networks (Fukushima 1980; Weng et al. 1993; LeCun et al. 1999) to directly classify the user sentence into one of a set of pre-defined intents (Hashemi 2016; Huang et al. 2013; Shen et al. 2014). The second puts a label on each word of the user's sentence. Deep Belief Networks (Deng et al. 2012) have been applied (Sarikaya et al. 2011; Deoras et al. 2013). RNNs have also been used for slot-filling NLU (Mesnil et al. 2013; Yao, Zweig, et al. 2013), as have LSTMs (Yao, Peng, et al. 2014). Some approaches handle user intent and slot-filling jointly (X. Zhang et al. 2016).
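To make the two families concrete, the sketch below assumes that an intent classifier and a word-level tagger have already run, and only shows how their outputs could be assembled into a semantic frame. The IOB tag scheme (B-, I-, O), the slot names and the intent label are assumptions chosen for illustration, not the representation used in the cited work.

```python
def frame_from_tags(tokens, tags, intent):
    """Group IOB-tagged tokens into slot values and attach the intent."""
    slots = {}
    current = None  # slot currently being extended, if any
    for token, tag in zip(tokens, tags):
        if tag.startswith("B-"):
            # Beginning of a new slot value.
            current = tag[2:]
            slots[current] = token
        elif tag.startswith("I-") and current == tag[2:]:
            # Continuation of the slot value opened by the last B- tag.
            slots[current] += " " + token
        else:
            # "O" tag (or an inconsistent I- tag): outside any slot.
            current = None
    return {"intent": intent, "slots": slots}


tokens = ["book", "a", "train", "from", "new", "york", "to", "paris"]
tags = ["O", "O", "O", "O", "B-departure", "I-departure", "O", "B-arrival"]
frame = frame_from_tags(tokens, tags, intent="book_train")
```

Here the intent comes from the sentence-level classifier (first family) and the slots from the per-word labels (second family); joint models produce both at once.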

Dialogue Manager

Drawing on the output of the other modules, the DM decides the best thing to say given the current state of the dialogue. It is divided into two sub-modules: Dialogue State Tracking (DST) and the Dialogue Policy (DP).

Dialogue State Tracking

The DST keeps track of the history of the dialogue (what the user has already asked, which slots are already filled, etc.) and compiles it into a dialogue state (also called a belief state or a dialogue context, depending on the framework). It usually takes the form of confidence probabilities for each slot. Traditional approaches are rule-based (Goddeau et al. 1996; Sadek et al. 1997). Statistical methods use Bayesian networks, as recalled in (Thomson 2013; Henderson 2015). A recent approach merges NLU and DST into a single RNN. The dialogue state is then the hidden layer of an RNN (or LSTM) trained to infer the next word in the dialogue (T. H. Wen et al. 2017; Barlier et al. 2018a).

Dialogue Policy

The next step involves the Dialogue Policy (DP), extensively described in Section 3.3, which chooses a dialogue act (Austin 1962; Searle 1969) according to the dialogue context. The simplest form of dialogue act is a parametric object used by the NLG module to reconstruct a proper sentence. For example, if the application domain is restaurant reservation, the DM may ask for the area of the restaurant using the following dialogue act: request(slot=area). In some recent work, the DP directly outputs words (J. Li et al. 2016; Vries et al. 2017). This seems a natural way to proceed, but it means the DM must learn the semantics of the language. Without proper metrics, it may lead to DMs that optimise the task with incoherent or ill-formed sentences.
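A dialogue act seen as a parametric object can be sketched as a name plus a set of named parameters. The class and helper below are hypothetical; only the printed form, request(slot=area), follows the notation used in the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DialogueAct:
    name: str           # e.g. "request" or "inform"
    params: tuple = ()  # (key, value) pairs, kept sorted by key

    def __str__(self):
        args = ", ".join(f"{key}={value}" for key, value in self.params)
        return f"{self.name}({args})"


def act(name, **params):
    """Build a dialogue act from keyword parameters (hypothetical helper)."""
    return DialogueAct(name, tuple(sorted(params.items())))


request_area = act("request", slot="area")
inform_area = act("inform", slot="area", value="north")
```

Because the act is a small structured object rather than free text, the policy only has to choose among a finite set of acts and never has to produce well-formed language itself.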

It is worth noting that, in the literature, DST and DP are not necessarily presented as two distinct modules. For example, S. Young et al. (2009) cast the DST and the DP as a single POMDP, whose embedded Bayesian network acts as the DST.

Natural Language Generation

The NLG module would render the dialogue act request(slot=area) as « Where do you want to eat? ». Recently, LSTMs have been used for NLG in the SDS context (T.-H. Wen et al. 2015). Finally, the TTS module transforms the sentence returned by the NLG module into a speech signal. State-of-the-art TTS approaches use generative models (Van Den Oord et al. 2016; Y. Wang et al. 2017; Oord et al. 2018).
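The mapping from a dialogue act to a sentence can be illustrated with a plain template lookup. This is a deliberately simple stand-in, not the LSTM-based generation cited above; the template table, the second template and the fallback wording are assumptions.

```python
# Hypothetical templates, keyed by (act name, slot).
TEMPLATES = {
    ("request", "area"): "Where do you want to eat?",
    ("request", "food"): "What kind of food would you like?",
}


def generate(act_name, slot):
    """Return the template for the act, or a generic question if none exists."""
    return TEMPLATES.get((act_name, slot), f"Could you tell me the {slot}?")


sentence = generate("request", "area")
```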

On the slot-filling problem

In this thesis, we specifically address slot-filling dialogue problems. For instance, consider an online form to book train tickets. The form contains several slots to fill, for example birthdate, name, and address. The usual approach consists in filling each slot manually and then sending the HTML form to a server. This method has existed for decades and has been used extensively on websites. Its advantage is that it is exact, since forms include checkboxes and radio buttons, and all other inputs are filled according to their labels (name, address, etc.). Its drawback is that the filling procedure may feel cumbersome to the user. Interacting with the form directly through voice or chat, instead of filling each slot by hand, may ease the process, and this is the approach we consider. It involves a DS that asks the user for the value of each slot in order to fill the form, then returns the result of the form to the user, partially or entirely, depending on the user request. The user experience is enhanced because the user interacts with the machine in a natural fashion. It can also speed up the process, as the user can provide several slot values in a single utterance.

Settings

To describe the slot-filling problem, we use a taxonomy similar to that of the Dialogue State Tracking Challenge (DSTC) (Jason D. Williams et al. 2013). The problem, for the system, is to fill a set of slots (or goals). A slot is said to be informable if the user can provide a value for it, to be used as a constraint on their search. A slot is said to be requestable if the user can ask the system for its value. All informable slots are also requestable. In the train ticket booking example, the departure city is an informable slot whose number of possible values equals the number of cities served by the transport. A slot that is requestable but not informable would be, for example, the identification number of the train.
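The distinction between informable and requestable slots can be written down as a small ontology. The sketch below is an assumption about how one might encode it; the slot names and city list are made up, and only the rule that every informable slot is also requestable comes from the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Slot:
    name: str
    informable: bool          # the user can give a value as a search constraint
    requestable: bool = True  # the user can ask the system for the value
    values: tuple = ()        # possible values, only relevant when informable

    def __post_init__(self):
        # Rule from the taxonomy: all informable slots are also requestable.
        if self.informable and not self.requestable:
            raise ValueError("informable slots must also be requestable")


ONTOLOGY = [
    Slot("departure_city", informable=True, values=("Paris", "Lille", "Lyon")),
    Slot("train_id", informable=False),  # requestable only
]

informable = [slot.name for slot in ONTOLOGY if slot.informable]
requestable = [slot.name for slot in ONTOLOGY if slot.requestable]
```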

To conduct the dialogue, the user and the system are given a set of user acts and a set of system acts, respectively. Some acts are common to every dialogue, such as hello, repeat or bye. Other acts depend on the ontology of the domain, as they are direct instances of the requestable and informable slots. Both actors can request the value of a slot using the generic act request(slot="a slot") and inform the value of a slot using inform(slot="a slot", value="its value"). The drawback of the DS slot-filling procedure is that an utterance may be misunderstood by the system. As the system is given an ASR or NLU score, it can judge whether it is worth asking the user to repeat an utterance. The system can ask the user to repeat or confirm with several system acts:

repeat: the user may repeat the last utterance. For instance « I don't understand what you said, please repeat. ».

expl-conf(slot="a slot", value="its value"): the system asks the user to explicitly acknowledge a slot value. For example « You want to book a train departing from Paris, is that correct? ».

impl-conf(slot="a slot", value="its value"): the system reports a slot value without explicitly asking the user to confirm it. If the user thinks the value is wrong, they can decide to fix the mistake. For example « You want to book a train departing from Paris. Where do you want to go? ».

Note that, in Part II and Part III, experiments will be conducted on slot-filling problems with a similar taxonomy, but the acts may differ.
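One simple handcrafted way to choose among these three acts is to threshold the recognition or understanding score. The sketch below is only an illustration of that idea: the function name and the two thresholds (0.3 and 0.8) are arbitrary assumptions, and the thesis does not prescribe this rule.

```python
def confirmation_act(slot, value, score, low=0.3, high=0.8):
    """Pick a system act from the confidence score of the last utterance.

    `low` and `high` are hypothetical thresholds chosen for illustration.
    """
    if score < low:
        # Too uncertain to trust anything: ask the user to repeat.
        return "repeat"
    if score < high:
        # Plausible but uncertain: ask for an explicit acknowledgement.
        return f'expl-conf(slot="{slot}", value="{value}")'
    # Confident: report the value and move on.
    return f'impl-conf(slot="{slot}", value="{value}")'


acts = [confirmation_act("departure", "Paris", score) for score in (0.1, 0.5, 0.9)]
```

The trade-off is between dialogue length and risk: repeating and confirming explicitly cost extra turns, while implicit confirmation is faster but relies on the user to notice an error.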

The Dialogue Manager

The particularity of the DM, as opposed to the other SDS modules, is that it is stateful, in the sense that it needs to keep track of the dialogue state to operate optimally. For example, we do not want to ask the same question twice if we have already received a clear answer. The DST is stateful by definition, but most of the time the DP is stateless (it does not keep track of a state). This thesis proposes solutions to optimise the DM. Since the DM is the only module involved, we abstract all the remaining modules into a single object called the environment. Figure 3.2 describes the simplified workflow: we assume the DM receives an object o called the observation. It contains the last user dialogue act a_usr (the semantic frame) and the SRS. We assume the DST computes the next dialogue state s′ from the current state s, the last system act a_sys and the last observation o. In this thesis, the DST outputs a simple concatenation of the previous observations and system acts. Also, we restrict the system acts to dialogue acts only (and not words).
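The concatenation-based DST described above can be sketched as a function that appends the pair (a_sys, o) to the current state s to obtain s′. Representing the state as a tuple of pairs and the acts as strings is an assumption made for this example, as are the names Observation and dst_update.

```python
from typing import NamedTuple


class Observation(NamedTuple):
    user_act: str  # last user dialogue act a_usr (the semantic frame)
    srs: float     # Speech Recognition Score attached to it


def dst_update(state, system_act, observation):
    """Return the next state s' as s extended with the pair (a_sys, o)."""
    return state + ((system_act, observation),)


s0 = ()  # empty dialogue history
s1 = dst_update(s0, "hello", Observation('inform(slot="departure", value="Paris")', 0.9))
s2 = dst_update(s1, 'request(slot="arrival")', Observation('inform(slot="arrival", value="Lille")', 0.6))
```

Since tuples are immutable, each call returns a new state and leaves the previous one untouched, which keeps the update a pure function of (s, a_sys, o).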

Traditionally, the DP has been designed by hand: a set of handcrafted rules maps the dialogue state to a dialogue act. This approach has been shown to be unreliable in unknown situations, and it requires expert knowledge of the domain. Statistical methods may solve these issues. Generative models have been considered to predict the next dialogue act given the current state. This is typically how chit-chat bots work (Serban, Sordoni, et al. 2016; R. Yan 2018; Gao et al. 2019). State-of-the-art statistical approaches for task-oriented DSs involve RL.
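A handcrafted policy of the kind mentioned above can be as small as a single rule. The sketch below assumes the dialogue state has been reduced to a dictionary of already-filled slots and that the rule is "request the first missing slot, otherwise end the dialogue"; both the state encoding and the rule are hypothetical.

```python
def handcrafted_policy(filled, slots_to_fill):
    """Map a (simplified) dialogue state to a system act with fixed rules."""
    for slot in slots_to_fill:
        if slot not in filled:
            # Rule 1: ask for the first slot that has no value yet.
            return f"request(slot={slot})"
    # Rule 2: every slot is filled, so close the dialogue.
    return "bye"


slots_to_fill = ["departure", "arrival"]
first_act = handcrafted_policy({}, slots_to_fill)
second_act = handcrafted_policy({"departure": "Paris"}, slots_to_fill)
last_act = handcrafted_policy({"departure": "Paris", "arrival": "Lille"}, slots_to_fill)
```

Such rules are easy to read but cover only the situations their designer anticipated, which is the limitation that motivates the statistical approaches.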

Table of contents:

1 Symbols

Acronyms

2 Introduction

2.1 History of dialogue systems

2.2 A challenge for modern applications

2.3 Contributions

2.4 Publications

2.5 Outline

I Task-oriented Dialogue Systems

3 The pipeline

3.1 A modular architecture

3.2 On the slot-filling problem

3.2.1 Settings

3.3 The Dialogue Manager

4 Training the Dialogue Manager with RL

4.1 Assuming a given dialogue corpus

4.2 Online interactions with the user

4.3 To go beyond

5 User adaptation and Transfer Learning

5.1 The problem of Transfer Learning

5.2 State-of-the-art of Transfer Learning for Dialogue Systems

5.2.1 Cross domain adaptation

5.2.2 User adaptation

5.2.3 An aside on dialogue evaluation

II Scaling up Transfer Learning

6 A complete pipeline for user adaptation

6.1 Motivation

6.2 Adaptation process

6.2.1 The knowledge-transfer phase

6.2.2 The learning phase

6.3 Source representatives

6.4 Experiments

6.4.1 Users design

6.4.2 Systems design

6.4.3 Cross comparisons

6.4.4 Adaptation results

6.5 Related work

6.6 Conclusion

6.7 Discussion

III Safe Transfer Learning

7 The Dialogue Manager as a safe policy

7.1 Motivation

7.1.1 A remark on deterministic policies

7.2 Budgeted Dialogue Policies

7.3 Budgeted Reinforcement Learning

7.3.1 Budgeted Fitted-Q

7.3.2 Risk-sensitive exploration

7.4 A scalable Implementation

7.4.1 How to compute the greedy policy?

7.4.2 Function approximation

7.4.3 Parallel computing

7.5 Experiments

7.5.1 Environments

7.5.2 Results

7.5.3 Budgeted Fitted-Q policy executions

7.6 Discussion

7.7 Conclusion

8 Transferring safe policies

8.1 Motivation

8.2 « -safe

8.3 Experiment

IV Closing