Get Complete Project Material File(s) Now! »

Uniqueness issue

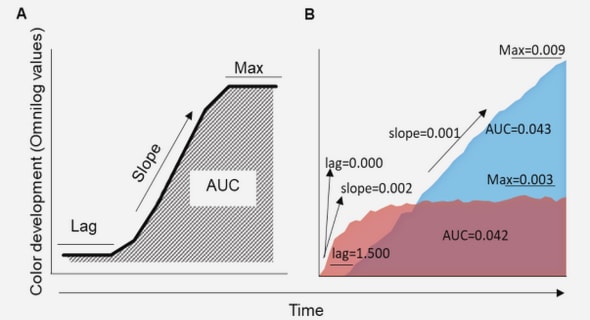

Figure 4.1 shows two critical situations wherein Cosine similarity’s weaknesses may produce inaccurate similarity results toward the vectors’ magnitudes. Based on Cosine similarity metric, the similarity between document A and document B, document A and document C and finally between document A and document D are equal. Despite the identical angles between them, there is a huge difference between the vectors’ magnitudes. As A and B have the higher proportion of the terms in their texts, it seems A and B are more more about the terms from searched query than C and D. Hence A has higher similarity to B, less similarity to C and least similarity to D. That is why Cosine produces many similarity values with the same value rather than producing unique similarity values when there are difference among similarities (uniqueness issue).

Manhattan Distance Drawbacks

Although Manhattan distance is not popular and seldom used in literature, we briefly explain why it is not a proper measure for measuring similarity level. As show in Figure 4.3, many vectors with the same magnitude like vector T and vector S can be drawn with different distances to a vector like R. If the purpose is to measure the similarity levels in order to identify whether S is more similar to R or T is more similar to R, the obvious answer would be S. Because from magnitude point of view S and T are equal, while from angular point of view S is closer to R. Based on experiments from the literature, Cosine is the most robust measure as it takes the angular differences into similarity measure (Salton & Buckley 1988) while, Manhattan distance does not do so. Likewise ED, Manhattan distance is not able to convey this concept. In this toy example, Manhattan(R, S)=Manhattan(R, T)=5, which means S and T are in the same level fo similarity to R. In some work (Mihalcea et al. 2006) Manhattan distance is considers identical to ED and it gives the same result as ED in terms of similarity measure.

TS-SS Method

TS and SS complete each other and that is the reason we combine them by multiplying them together. The range of TS-SS measure is from 0 to ∞. The reason for choosing multiplication but not summation to combine TS and SS is that in some cases the value of TS and SS are disproportionate where one is extremely larger then the other one due to their quadratic calculations with respect to the length of the vector. For example, in Figure 4.1, TS similarity is too big (TS(A,B)=5.91) while SS similarity is too small (SS(A,B)=0.047). If we use summation, they can not effect on each other significantly to give the realistic similarity value and we get TS(A,B)+SS(A,B)=5.95 > TS(A,D)+SS(A,D)=2.96 which is a false result because A is more similar to B as discussed earlier. But if we use multiplication we get TS(A,B)· SS(A,B)=0.27 < TS(A,D)·SS(A,D)=1.71 which is a true result. Using TS-SS method, similarity of 0 happens only when ED=MD=0 and it shows two vectors are absolutely identical in term of direction and magnitude which indicates the maximum similarity between two documents.

Experiments

In this section first we briefly describe the five datasets used in this study. Then we will explain the evaluation models and finally we compare the three metrics based on the results of evaluations. We assume that the end user enters three keywords in search engine and is interested in finding the clusters of similar documents where each document contains at least one of searched query’s keyword in a selected dataset. Based on this assumption, at first we apply the data integration and text preprocessing mentioned in Section 3.1 on all texts in each dataset and create a list of top 600 keywords which have the highest TF-IDF score (as mentioned earlier, the higher informative a keyword is, the higher TF-IDF score it has). Some of the selected keywords from the databases are listed in Table 6.1. Words are stemmed to their roots. Then we create a number of different search queries, each consisting of three keywords picked from the list of top keywords randomly and passed to our search engine.

Purity Results

Table 7.3 represents the most significant results in comparing the three metrics. As the table shows, ED is the weakest model for clustering. In our biggest dataset, 20NewsGroup, TS-SS outperforms Cosine with a significant difference, while in other datasets TS-SS outperforms Cosine slightly. In fact in the small datasets, there are few types of documents and the chance that documents of the same type get clustered together is higher than the condition where there are several types like 20 types of documents in 20News dataset. Therefore, the significant better result of TS-SS in 20News dataset justifies the robustness and reliability of the model for big data and real world data where the variety of documents/texts are high.

Time Complexity

For n documents, there are n 2 relationships. For each relationship the similarity can be measured using α (Cosine, ED and TS-SS). Let Q = {t1, . . . , tk} be the set of k terms (keywords) where the searched query is a subset. Measuring similarity for each measure can be done in O(k) and need to process O(n 2 ) combinations of n documents, so for all relationships the similarity can be computed in O(n 2 k) running time. For measuring the uniqueness and number of booleans, we take the entries above the main diagonal only. For n documents there are n 2−n 2 elements above the diagonal. Hence counting the unique values and booleans each can be carried out in O(n 2 ). The K-Means algorithm clusters n documents in O(i c n k) where i is the number of iteration, c is the number of clusters (number of clusters is equal to number of categories in each similarity matrix) and k is the vector dimension (number of terms). In computing purity values, group the documents of the same cluster is done in O(n) in worse case and finding the dominant category in all clusters is done in O(c log n) if we have more than one cluster, and O(n) if we have only one cluster. Finally calculating purity is done in constant time of O(c). Overall, computing the purity is done in O(n).

Contents :

- Table of Contents

- List of Tables

- List of Figures

- 1 Introduction

- 2 Related Work

- 3 Background

- 3.1 Text Mining

- 3.1.1 Data Selection

- 3.1.2 Text Pre-processing

- 3.1.3 Feature Selection

- 3.1.4 Bag of Words

- 3.2 Vector Space Model

- 3.3 Geometric Measures

- 3.3.1 Cosine Similarity

- 3.3.2 Euclidean Distance

- 3.3.3 Other Geometric Measures

- 3.4 Non-Geometric Measures

- 3.5 Clustering

- 3.5.1 K-Means

- 3.5.2 WEKA

- 3.6 Clustering Evaluation

- 3.6.1 F-Measure

- 3.6.2 Purity

- 3.6.3 Entropy

- 4 Motivation

- 4.1 Cosine Drawbacks

- 4.1.1 Uniqueness issue

- 4.1.2 Boolean values

- 4.2 Euclidean Distance Drawbacks

- 4.3 Manhattan Distance Drawbacks

- 5 TS-SS: Novel Geometric Similarity Measure Method

- 5.1 Triangle’s Area Similarity (TS)

- 5.1.1 Calculation and Strength of TS

- 5.1.2 Weakness of TS

- 5.2 Sector’s Area Similarity (SS)

- 5.3 TS-SS Method

- 6 Experiments

- 6.1 Datasets

- 6.1.1 20 News Group

- 6.1.2 7 sectors

- 6.1.3 WebKB

- 6.1.4 Classic

- 6.1.5 Twitter

- 6.2 Evaluations

GET THE COMPLETE PROJECT

A Hybrid Geometric Approach For Document Clustering And Measuring Similarity Level Among Documents