
Data verification tools for minimising management costs of dense air-quality monitoring networks

Abstract

To minimise the costs of an air quality network, both of expenditure and maintenance, we present a simple statistical framework for automated verification of data reliability that does not need extensive training datasets. The data are hourly-averaged results from a network of both low-cost and regulatory instruments measuring ground-level ozone (O3). O3 is an ambient pollutant that has a relatively regular spatiotemporal profile over an urban area, although there can be significant small-scale variations. We take advantage of these characteristics to detect calibration drift in low-cost sensors within the network by using running tests and a suitably selected comparison dataset, or ‘proxy’. We define the required characteristics of the proxy by starting with a definition of the network purpose and measurement specification. Here, the purpose was to extend the regulatory network with low-cost sensors that can deliver reliable indicative data. For O3 sensor data, we consider data indicative when the framework tests show a slope within 1 ± 30% and an offset within 0 ± 5 PPB relative to the proxy. Using results from a deployment of sensors around the Lower Fraser Valley, we show that land-use similarity between the two locations is a suitable criterion for selecting proxies. The network experienced widespread drift due to forest fires depositing dirt into the sensor inlets. Simple statistical tests in an automated framework identified drifting devices, and therefore those needing attention, within one week. Results indicate that a minimal set of regulatory instruments can be used to verify the reliability of data from a more extensive network of low-cost devices.

Introduction

This chapter addresses how to set up a framework that confirms whether a network of low-cost air quality measurement devices is delivering reliable local pollutant concentrations. It also presents ideas on the trade-offs of using a hybrid measurement network to deliver reliable measurements of local-scale spatiotemporal processes. Previously, financial and logistical constraints have meant that air quality scientists and managers have had to choose between accurate, high temporal resolution measurements made at a limited number of sites and low temporal resolution measurements with low accuracy made at a larger number of sites [237]. This dichotomy in network design options made it difficult to accurately capture complex spatiotemporal patterns in urban air quality, thus limiting the ability of scientists and managers to identify, understand, predict and mitigate air pollution episodes [121].

The recent development of low-cost sensors, which are often portable and have minimal power and housing requirements, along with advances in data management and communication systems, has made it possible to run spatiotemporally dense networks [124, 174, 198]. Such networks have the potential to capture these complex spatiotemporal patterns of concentrations in urban centres in near-real time [106, 143, 167] and would make it possible to answer new questions about local-scale processes. Possible questions include strengthening the links between air quality and adverse human health or environmental impacts, identifying potential air pollution “hot spots”, or quantifying the impacts of different mitigation strategies [124, 223]. Sensor development has been hailed as a new ‘paradigm’ in air quality monitoring, and welcomed as a way forward by air quality managers and scientists alike [198].

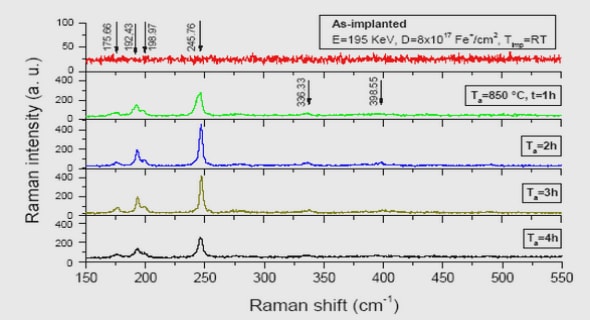

However, the ability to increase spatiotemporal network resolution has made it tempting to create low-cost networks before their reliability has been thoroughly assessed [124]. Popular gas sensor options include metal oxide and electrochemical sensors, which are known to encounter issues when monitoring in the field [56, 131, 153, 174, 178, 238]. Issues can arise from cross-sensitivity of the sensor to other pollutants, from variations in temperature and humidity that are not accounted for in the sensor calibration, and from poor long-term stability. The surrounding environment can also affect sensor readings, with evidence of dirt in the inlet of a sensor causing a shift in measured concentrations over time [235]. The accuracy and reliability of low-cost devices in the environment both need addressing, ideally with a management framework that can run at minimal cost [27, 152]. Those using data from any instrument, including low-cost devices, need to ensure that the data are sufficiently accurate and precise to meet the stated network purpose. Limitations of the instruments also need to be clearly stated [180]. To date, there have been few published studies describing large-scale deployments of low-cost devices that also provide information or methodologies for assessing the reliability of measurements [27, 152, 158].

Conventionally, confidence in the data from an instrument is ensured through a regular program of co-location calibrations that are traceable to reference standards [59, 90, 98]. However, as the number of devices increases, the associated costs of calibration and maintenance can become significant. For low-cost networks, where a large number of devices may be common, it is therefore useful to introduce a new metric, that of device ‘reliability’, to describe performance [164, 219, 223]. This metric is less restrictive than compliance, but it does require a clear definition so that users can have confidence in the data within known and defined constraints. It is often referred to as indicative monitoring [219].

Device reliability can be determined using either temporary or permanent co-location with one or more devices. Other studies using mobile low-cost devices exploit random co-location of devices, which allows spot-checking of one against another [88]. Yet when a monitor is not co-located, it is difficult to tell whether unusual trends arise from natural local-scale environmental processes or from device error. In these instances, reliability assessment can use computational techniques to detect and compensate for unexpected patterns [137], or specifically defined device conditions [207, 213]. These methods generally use a (knowledge-driven or data-driven) model for the phenomenon sensed, or a model for the behaviour of the sensor within the device. Multivariate time-series, principal component analysis or ‘soft sensor’ models that we [11] and others [59, 87, 88, 107, 244] have used before on air quality datasets have required long data histories to establish the model from which drifts can be detected. This requirement is rarely met for low-cost sensor networks, as long data histories are unlikely to exist.

Here, we develop a simple management framework to address the aforementioned issues. The data are from a three-month network installation of low-cost devices measuring ground-level ozone (O3) around the Lower Fraser Valley (LFV) area (Figure 3-1). The network experienced widespread drift when large forest fires in Siberia affected air quality across the LFV [54]. Dirt was deposited into many of the low-cost sensor inlets, which caused a drift signal similar to the patterns observed in [235].

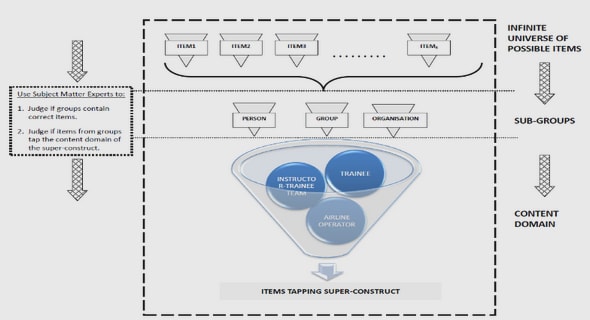

The framework is set up to make use of general knowledge about both the spatiotemporal behaviour of the pollutant and the performance characteristics of the measurement device. To do so, we reflect on the stated purpose of the low-cost network. Following Snyder et al. [198], we define the network purpose as supplementing the compliant air monitoring network by extending spatial coverage, improving measurement density for local-scale exposure assessment, and enhancing source compliance monitoring.

Here, we argue that device reliability can be assessed using a suitably selected comparison, or proxy, dataset. The proxy is not a prediction of the concentration at a site; rather, it follows a general distribution of the pollution field that is loosely similar to that at the device location. Simple statistical tests can then answer the binary question: does the device seem similar to the proxy, or not? Device ‘reliability’ is thus reduced to a binary decision: the data are either considered reliable based on test results using the proxy, or they are not and require further inspection. Further inspection can determine whether it is the device or its local environment that has changed and whether the device needs recalibration or replacement. If the data are considered reliable, then they become a “ground truth” measurement, including their stated uncertainty bounds (e.g. this measurement is true within X PPB). This approach is therefore different in principle from ideas of ‘blind’ or ‘semi-blind’ calibration, which attempt to remotely correct individual devices to achieve a correct estimate of the variable field [24]. I revisit the ‘semi-blind’ correction approach in later chapters of this thesis. The approach in this chapter is also different from ideas that use a large number of low-accuracy devices to generate an approximate estimate of a variable field in order to detect unusual perturbations [47].
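The binary decision described above can be illustrated with a minimal Python sketch. It is not the framework's actual test suite, which is defined in the Methods section. It assumes that "similar to the proxy" can be approximated by an ordinary least-squares fit of device against proxy within a running window, and it reads the indicative criteria from the abstract as a slope within 0.7 to 1.3 and an offset within ±5 PPB. The names `fit_line`, `is_reliable` and `running_flags`, the 72-hour window, and the synthetic data are all hypothetical.

```python
from statistics import mean

SLOPE_TOL = 0.30   # assumed reading of "1 ± 30% in slope"
OFFSET_TOL = 5.0   # assumed reading of "0 ± 5 PPB in offset"
WINDOW = 72        # hypothetical running-window length, in hours


def fit_line(proxy, device):
    """Ordinary least-squares fit: device = offset + slope * proxy."""
    mx, my = mean(proxy), mean(device)
    sxx = sum((x - mx) ** 2 for x in proxy)
    if sxx == 0:
        raise ValueError("proxy has no variance in this window")
    sxy = sum((x - mx) * (y - my) for x, y in zip(proxy, device))
    slope = sxy / sxx
    return slope, my - slope * mx


def is_reliable(proxy, device):
    """Binary decision: does the device look similar to the proxy?"""
    slope, offset = fit_line(proxy, device)
    return abs(slope - 1.0) <= SLOPE_TOL and abs(offset) <= OFFSET_TOL


def running_flags(proxy, device, window=WINDOW):
    """Apply the binary decision over a sliding window of hourly values."""
    return [
        is_reliable(proxy[i:i + window], device[i:i + window])
        for i in range(len(proxy) - window + 1)
    ]


# Synthetic hourly O3-like values in PPB; not data from the study.
proxy = [20 + 15 * ((h % 24) / 23) for h in range(240)]

# A device with a small, stable gain and offset error.
good = [1.05 * x + 1.0 for x in proxy]

# A device whose gain decays steadily after hour 96, mimicking drift.
drifting = [(1.0 - 0.004 * max(0, h - 96)) * x for h, x in enumerate(proxy)]

good_flags = running_flags(proxy, good)
drift_flags = running_flags(proxy, drifting)
```

In this synthetic example every window of the stable device passes, while the drifting device passes in its first window and fails in its last. A failed window only marks the data as needing further inspection; it does not say whether the device or its local environment has changed.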

1. INTRODUCTION

1.1 CURRENT OPPORTUNITIES IN AIR QUALITY SENSOR NETWORKS

1.2 CURRENT CHALLENGES IN AIR QUALITY SENSOR NETWORKS

1.3 DATA

1.4 THESIS MOTIVATION AND AIMS

1.5 THESIS STRUCTURE

2. BACKGROUND

2.1 AIR QUALITY

2.2 URBAN ATMOSPHERIC CHEMISTRY

2.3 AIR QUALITY IMPACTS

2.4 STANDARDS AND GUIDELINES GLOBALLY AND LOCALLY

2.5 MEASUREMENT TECHNIQUES

2.6 SENSOR NETWORKS

2.7 AIR QUALITY SENSOR NETWORK EXAMPLES

2.8 SUMMARY

3. DATA VERIFICATION TOOLS FOR MINIMISING MANAGEMENT COSTS OF DENSE AIR-QUALITY MONITORING NETWORKS

ABSTRACT

3.1 INTRODUCTION

3.2 METHODS

3.3 RESULTS

3.4 CONCLUSION

3.5 SUPPLEMENTARY INFORMATION

4. LOW-COST SENSORS AND CROWD-SOURCED DATA: OBSERVATIONS OF SITING IMPACTS ON A NETWORK OF AIR QUALITY DEVICES

ABSTRACT

4.1 INTRODUCTION

4.2 METHODS

4.3 RESULTS

4.4 CONCLUSION

4.5 SUPPLEMENTARY INFORMATION

5. SOLUTION TO THE PROBLEM OF CALIBRATION OF LOW-COST AIR QUALITY MEASUREMENT SENSORS IN NETWORKS

ABSTRACT

5.1 INTRODUCTION

5.2 THEORY

5.3 METHOD

5.4 RESULTS

5.5 CONCLUSION

5.6 SUPPLEMENTARY INFORMATION

6. USE OF A HANDHELD LOW-COST SENSOR TO EXPLORE THE EFFECT OF URBAN DESIGN FEATURES ON LOCAL-SCALE SPATIAL AND TEMPORAL AIR QUALITY VARIABILITY

ABSTRACT

6.1 INTRODUCTION

6.2 METHODS

6.3 RESULTS

6.4 CONCLUSION

6.5 SUPPLEMENTARY INFORMATION

7. SUMMARY AND CONCLUSIONS

7.1 MAIN FINDINGS

7.2 FUTURE DIRECTIONS

7.3 CONCLUDING REMARKS

8. REFERENCES


Reliable data from low-cost sensor networks