Get Complete Project Material File(s) Now! »

An introduction to fair learning

Statistical algorithms trained on personal data take pivotal decisions which influence our lives on a daily basis. Recent studies show that a naive use of these algorithms in sensitive domains may lead to unfair and discriminating decisions, often inheriting or even amplifying biases present in data (Barocas and Selbst 2016). Consider an example from a recent survey on the subject (Barocas, Hardt, and Narayanan 2017): “Amazon uses a data-driven system to determine the neighbourhoods in which to offer free same-day delivery. A 2016 study found stark disparities in the demographic make-up of these neighbourhoods: in many U.S. cities, white residents were more than twice as likely as black residents to live in one of the qualifying neighbourhoods.”. This example highlights a worrying trend that data-driven algorithms can lead to unfair decisions in much more sensitive domains such as, for instance, court decisions1, school/university admissions, loan approvals, etc. Therefore, there is a growing need for ensuring that practical algorithms are not contradicting neither moral nor legal grounds while still being useful. This issue sits at the intersection of several domains such as political philosophy, sociology, statistics, computer science, etc. Thus, its full comprehension must ultimately involve interdisciplinary discussions. Following the wisdom of the Latin expression “Sutor, ne ultra crepidam”2, we will primarily focus on aspects of this issue which lie inside our domain of expertise. In particular, we will rely on recent advances in learning theory which allow to address some aspects of this problem within a rigorous statistical framework. We refer the reader to Mehrabi et al. (2019) and Barocas, Hardt, and Narayanan (2019) for a general introduction on algorithmic fairness and to Oneto and Chiappa (2020) and Barrio, Gordaliza, and Loubes (2020) for reviews of the most recent theoretical advances.

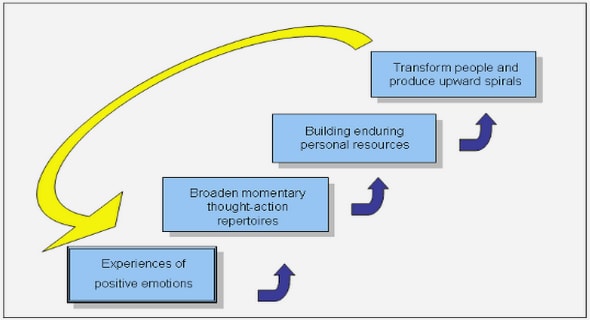

In this chapter of the manuscript we will focus on the problem of fairness-aware learning (Menon and Williamson 2018a). Once again, we do not pretend or aim to derive a general theory of fairness nor to debate about what is fair and what is not. This is a political debate, which should be held on the level of society. Our goal will be much more modest. As presented in the next section, inspired by recognized legal or philosophical concepts, (mainly) computer scientists have proposed several mathematical formalizations of discrimination of decision rules. Our goal as statisticians will be to evaluate, given a measure of performance and fairness criteria, what is the best one can hope to achieve from a learning perspective regarding fairness and performance. In particular, we will see that a natural trade-off appears between satisfying fairness constraints and achieving good (predictive) performance. Our approach to fairness-related issues does not discuss the relevance of a given choice (such as the choice of a fairness criterion and a measure of risk), which is ultimately left to the decision-maker, but yields a better understanding of the consequences of this choice. Such an approach is in phase with what Weber (1992) defined as one of the goals of science in general – not a substitute for human’s judgment but a tool for informed decision-making.

Problem formalization and definitions

In what follows, we place ourselves in the supervised learning setting: a statistician, which is given couples of feature and label variables, aims to express the label as a function of the feature variables in order to predict correctly the label associated to new and unseen feature variables. The fairness-aware (supervised) learning setting slightly differs from the usual setting in that we do not treat all features equally. In particular, we distinguish between two types of features: a set of (nominally) unsensitive features X and a set of sensitive features S. The reader can think of the latter as, for instance, gender, ethnicity, and/or age. Importantly, the set of sensitive features S contains those features against which we want to control potential discriminations. The set S will generally be a finite set in this manuscript. We would like to point out that in the considered framework, the choice of sensitive attributes belongs to the decision-maker, potentially incentivized by legal or ethical motives. It is not the statistician’s task to determine which feature should be regarded as sensitive.

More formally, the statistician observes independent copies of a triplet (X, S, Y ) ∼ P where X is the feature vector, Y the label variable and S is a sensitive feature (e.g. gender, ethnicity or age). Those random variables take their values in the sets X , Y, and S, respectively. For full generality, we will consider predictors of the form f : Z → Y where, following the notation from Donini et al. (2018), the set Z is either the set of unsensitive features X or the whole set of features X × S, depending on whether the statistician is allowed to access the sensitive attribute for prediction. Analogously, we define Z as X or (X, S) depending on the type of predictions at hand. Note that any predictor f induces group-wise distributions of the predicted outcomes Law(f(Z) | S=s) for s ∈ S. The general goal of the statistician is two-fold: maximize prediction performance by minimizing a given risk while satisfying one or several given fairness constraints. Let us now dive into the mathematical definitions of fairness to specify this general goal.

Individual and group fairness. Basically, the mathematical definitions of fairness can be divided into two groups (Dwork et al. 2012): individual fairness and group fairness. The former notion reflects the principle that similar individuals must be treated similarly, which translates into Lipschitz type constraints on possible (often randomized) prediction rules. The latter defines fairness on population level via (conditional) statistical independence of a prediction from sensitive attribute S (e.g., gender, ethnicity). The high-level idea of group fairness notions can be seen as bounding or diminishing an eventual discrepancy between group-wise distributions of the predicted outcomes. In this thesis we will focus on the group fairness. We refer the reader to the seminal paper of Dwork et al. (2012) for an introduction to individual fairness and to Jung et al. (2019), Dwork, Ilvento, and Jagadeesan (2020), and Mukherjee et al. (2020) for examples of recent works in this context.

Disparate Treatment. Before introducing the main group fairness definitions, let us define a first natural (and naive) notion of fairness which essentially restricts the class of predictors to those which do not take as input the sensitive attribute S.

Definition 2.1.1 (Disparate Treatment). Any function f : X → Y that cannot receive the sensitive attribute S in its functional form does not produce Disparate Treatment.

Gajane and Pechenizkiy (2017) refer to this definition as fairness through unawareness, as opposed to fairness through awareness, introduced by Dwork et al. (2012). The latter type of prediction allows one to build separate model for each sensitive attribute, while the former obliges one to fix a single model which is later applied across all groups. This property might be desirable for obvious legal and/or privacy reasons (Primus 2003; Barocas and Selbst 2016; Gajane and Pechenizkiy 2017; Lipton, McAuley, and Chouldechova 2018). For instance, in France it is forbidden to use ethnicity as a sensitive attribute for any statistical treatment3 (hence in particular for prediction).

Remark 2.1.2. For some authors, treatment disparity encompasses a larger phenomenon. For instance, Lipton, McAuley, and Chouldechova (2018) consider that disparate treatment addresses intentional discrimination which includes decisions explicitly based on protected char-acteristics, as in Definition 2.1.1, but also intentional discrimination via proxy variables (e.g literacy tests for voting eligibility). Since the mathematical formalization of such phenomenon is not evident, we prefer to stick to the definition we introduced.

Importantly, the absence of Disparate Treatment does not guarantee the prediction to be statistically independent from the sensitive attribute S because of correlations and, more generally, dependencies between the sensitive attribute S and the feature vector X (Pedreshi, Ruggieri, and Turini 2008). Indeed, consider the Bayes optimal predictor x f∗(x) defined as f∗(x) = E[Y | X = x].

By definition, it does not take as input the sensitive attribute and achieves the lowest possible squared risk among predictions avoiding Disparate Treatment. Yet, the predictor f∗ might still promote disparity between sensitive groups if the distributions of features X differ between groups. An example of such scenario is given in Figure 2.1: even though the predictor does not produce DT (it is the same for both groups), the distribution of the predictions for the first group significantly differ from that of the second group because of the distributions of features.

Moreover, in the classification setting, Lipton, McAuley, and Chouldechova (2018) showed that avoiding Disparate Treatment (DT) is not necessarily desirable, even when combined with other fairness criteria as in Disparate Learning Processes, a class of learning procedures which can access sensitive attribute during training but cannot use it for prediction. They prove that a decision rule that does not produce DT cannot achieve a better accuracy than an optimal rule that uses this information; leading them to the conjecture that any rule which avoids DT yields a suboptimal trade-off between fairness and performance. Furthermore, the authors provide empirical evidence that avoiding DT leads to indirect treatment disparity, via proxy variables, or within-class discrimination. Several questions stem from those insightful observations. Is it possible to provide satisfactory theoretical justifications of their results ? Are those phenomenon limited to the classification setting ? How fair and accurate can one hope to be by allowing to treat subgroups differently ? Chapter 7 and 8 will provide a tentative theoretical answer to those questions in the regression setting.

Disparate Impact. As we have seen, given a predictor f, the prediction distributions (Law(f(Z)|S = s))s S may differ across sensitive subgroups and, importantly, this phenomenon may happen unintentionally, i.e., not because of a pernicious statistician, but as a by-product of the learning process. The decision-maker might deem as undesirable such difference; for instance, if a predictor f is used to determine the salaries of employees in a company, the board might want (or have to) choose a predictor which yields similar salary distributions across subgroups (e.g., men and women). Hence, most fairness notions focus on controlling the discrepancy between the distributions of the predictions across groups. We will now provide some of the most popular fairness definitions regarding the distributions of the predictions. Since most of the literature focuses on the fair classification problem (see Calders, Kamiran, and Pechenizkiy (2009) and previous references), the subsequent fairness definitions were initially given in this framework. For clarity we will provide those definitions in the binary classification with binary-valued sensitive attribute setting. We will explain, when needed, how to extend those definitions to regression problems and to non-binary sensitive attributes. Those extensions will be based on the independence property between random variables. We will use the notation A ⊥⊥ B to express the independence between random variables A and B. First of all, it might be the case that the risk of a predictor is small on average across the whole population but with a high group-wise discrepancy in risk. Such a situation could be considered as discriminatory for groups with high level of risk. To prevent such issues, we introduce a first definition of fairness in binary classification, which asks for equality of group-wise risks.

Definition 2.1.3 (Equality of Group-wise Risks). A classifier f : Z → {0, 1} achieves Equal Group Wise risk with respect to the distribution P of (X, S, Y ) if P(f(Z) ≠ Y | S = 0) = P(f(Z) ≠ Y | S = 1).

Buolamwini and Gebru (2018) argues that it corresponds to the requirement that all group receive good service and Zafar et al. (2017) advocates for this notion to avoid disparate mistreatment. Note that this definition immediately generalizes to any expected risk notion. A relaxed formulation of this fairness notion was considered in the context of regression by Agarwal, Dudík, and Wu (2019).

Next, we introduce the main fairness notion that this thesis will focus on. It was formally introduced by Calders, Kamiran, and Pechenizkiy (2009) and essentially asks for the prediction to be (statistically) independent from the sensitive attribute.

Definition 2.1.4 (Demographic Parity). A classifier f : Z → {0, 1} achieves Demographic Parity with respect to the distribution P of (X, S, Y ) if P(f(Z) = 1 | S = 0) = P(f(Z) = 1 | S = 1).

Simply put, this definition assigns equal chances of positive decision for both groups. It acts as an incentive to promote diversity through affirmative action (Mouzannar, Ohannessian, and Srebro 2019). We can equivalently express Demographic Parity as the (probabilistic) independence between the distribution of the prediction f(Z) and that of the sensitive attribute S, which we denote by f(Z) ⊥⊥ S. (DP)

Such characterization immediately generalizes the initial definition to any supervised learning problem and any sensitive attribute.

As argued by Hardt, Price, and Srebro (2016), if deployed in reality, such a notion may cause more negative effects than positive ones in some cases. An example they give is connected with credit landing, where Y = 1 means that an individual (X, S) is able to pay back the loan and f(Z) = 1 means the bank approves the credit. In case when the paying abilities of two groups are drastically different, providing equal chances of getting a credit without looking at the paying ability Y sets less privileged individuals to the path of default. To circumvent the above issue Hardt, Price, and Srebro (2016) proposed the following two definitions.

Definition 2.1.5 (Equalized Odds). A classifier f : Z → {0, 1} achieves Equalized Odds with respect to the distribution P of (X, S, Y ) if P(f(Z) = 1 | S = 0, Y = y) = P(f(Z) = 1 | S = 1, Y = y), ∀ y ∈ {0, 1}.

This fairness notion asks for the prediction f : Z → {0, 1} to equalize True Positive and True Negative rates across both groups. One immediately sees that this definition can be expressed in a more general form as (f(Z) ⊥⊥ S) | Y. (EOdd)

It means that, given the true label of an individual, knowing the value of the sensitive attribute does not bring any information on the distribution of the prediction. Typically, the equalization of True Negatives is not necessary in practice, since f (Z ) = 1 is interpreted as a positive decision and it can be natural to focus on such decisions. In this way we arrive at the definition of Equal Opportunity.

Definition 2.1.6 (Equal Opportunity). A classifier f : Z → {0, 1} achieves Equal Opportu-nity with respect to the distribution P of (X, S, Y ) if P(f(Z) = 1 | S = 0, Y = 1) = P(f(Z) = 1 | S = 1, Y = 1).

Equal Opportunity is less constraining than Equalized Odds as it just asks for the True Positive rates to be the same across all groups. In the credit landing example, Equal Opportunity means that among the clients who are able to pay back their loan, the proportion of attributed loans across groups should be the same. It is easy to see that this definition is equivalent to the more general conditional independence constraint (f(Z) ⊥⊥ S) | Y = 1. (EOpp)

Note, however, that the extension of this notion to the regression setting is not obvious at all. That is because the definition of Equal Opportunity relies on the fact that Y = 1 can be interpreted as a positive decision (e.g., attribute a loan) and there is no immediate equivalent of a « positive decision » in the regression setting. It is still an open question to find a transposition of the notion of Equal Opportunity outside of the classification setting.

All the fairness definitions introduced up until now are expressed as the conditional probability of a positive prediction f(Z) = 1 given the value sensitive attribute S and eventually the true label Y . Unlike the previous definitions, the next one deals with conditional probabilities w.r.t. to the event of a positive prediction (f(Z) = 1).

Definition 2.1.7 (Test fairness). A classifier f : Z → {0, 1} satisfies Test Fairness with respect to the distribution P of (X, S, Y ) if P(Y = 1 | S = 0, f(Z) = 1) = P(Y = 1 | S = 1, f(Z) = 1).

As for the previous definitions, the last one can naturally be expressed as the conditional independence condition (Y = 1 ⊥⊥ S) | f(Z) = 1. (Test fairness)

Test fairness is also referred to as predictive parity. It imposes equality across sensitive groups of the rates of positive outcomes Y = 1 among those who received a positive prediction f(Z) = 1. It is tightly related to the concept of calibration (Barocas, Hardt, and Narayanan 2017, Chapter 2).

We have given five definitions of fairness which encompass different conceptions of discrimina-tion. Considering those definitions as constraints, we can now clarify the goal of fairness-aware learning: minimize a given risk over the class of predictors which satisfy (a subset of) those definitions. Note that all the constraints are distribution-dependent i.e., they depend on the joint distribution (X, S, Y ). Hence, the resulting constrained optimization problems are quite different from the usual shape-constrained problems in which distribution-independent constraints are imposed on the predictors, such as smoothness constraints.

Let us now briefly expose an illuminating well-known fairness case study to point out some issues one might encounter. COMPAS (which stands for Correctional Offender Management Profiling for Alternative Sanctions) is a risk-assessment software developed by Northpointe Group, a technology and management consulting firm which is used to predict recidivism risk in US courts. A study by ProPublica (Angwin et al. 2016) showed that COMPAS yields a high discrepancy regarding False Positive and False Negative rates across ethnicity groups, hence it does not satisfy Equalized Odds. However, Northpointe’s refutation (Dieterich, Mendoza, and Brennan 2016) claimed that COMPAS satisfies (a generalized notion of) predictive parity. This example highlights that the choice of the fairness criterion is crucial when discussing discriminatory behaviour of a decision rule. Furthermore, it brings the question of whether one should stick to a particular fairness criterion or try to satisfy several criteria simultaneously.

Of course, ideally (and naively), one would like to obtain a predictor which satisfies as many fairness constraints as possible. However this is not necessarily desirable since the class of predictors might be small or even empty. Indeed, Chouldechova (2017) showed an impossibility result which states that unless P(Y = 1|S = s) is the same across groups, no classifier f : Z → {0, 1} can simultaneously satisfy Test fairness and Equalized Odds. What lessons can we take out of this ? First of all, one indeed needs to be careful about chosen fairness criteria for a given task; secondly, it might indicate that the fairness notions we consider are too stiff and it would be worth exploring ways of relaxing those constraints. This will be the subject of the next section.

A word on bias in data and causality. Before going on to the next section, let us discuss an important point to us. An attentive reader might have noticed that all the definitions we gave focus on potential discrimination on the prediction level while nothing has been said on the potential biases in the data. Why did we make such a choice ? In a nutshell, we are interested in the outcome of the algorithms, not in the identification of biases in the data, because the reasons for which a procedure or an algorithm can have discriminatory behaviour are, most of the time, far from obvious and potentially impossible to unveil unless we are ready to make strong assumptions about our data. For example, if features are high-dimensional images, it might be an extremely difficult task to provide or test a causal model for the data because of its complexity (Bühlmann 2013). Nevertheless, we would like to mention a complementary growing sub-branch of algorithmic fairness literature which tries to incorporate causal reasoning into fairness-aware learning (see,e.g., Kilbertus et al. 2017; Kusner et al. 2017; Loftus et al. 2018; Makhlouf, Zhioua, and Palamidessi 2020). They provide new formalizations of fairness notions and new procedures to overcome potential bias and discriminations using the machinery of causal learning. The setting that is of interest to us is one in which providing a causal model for the problem of interest is too difficult, too time consuming or too expensive. Hence we focus on controlling bias in prediction, which is something we can observe and test.

Relaxation and trade-offs.

In the previous section, we have provided some popular ways of formalizing what it means for a predictor to be fair, i.e., to avoid particular discriminations. We have broadly stated the fairness-aware learning problem as that of finding a predictor f which has a low risk R(f) while satisfying one or several fairness criteria, such as the ones we have defined. Following this paradigm, fairness-aware learning problem can be expressed a constrained optimization problem: arg min {R(f) : f is “fair” } , f :Z→Y

where the constraint could be, for instance, imposing that the predictors satisfy one or several of the definition we have introduced such as Definitions 2.1.4 and 2.1.5. Given this general formulation of the fairness-aware learning problem, a natural question arises: what is the impact of introducing the fairness constraint on the risk of the best fair predictors ? In order to form a relevant answer, we will argue why relaxation of the fairness constraints is needed and provide different ways of achieving it. This will allow us to derive a general framework for studying the trade-off between performance and fairness.

Relaxation of fairness constraints. There are four main reasons why relaxation is necessary. First, mathematical fairness definitions are imperfect transposition of qualitative ideas, thus it might not be desirable to aim at exact satisfaction of the resulting constraints. Second, two sources of randomness might blur the satisfaction our definitions: we have introduced fairness definitions for fixed predictors while in practice we will be working with estimators i.e. predictors which depends on a finite number of samples from the joint distribution (X, S, Y ); furthermore, the quantities of interest (such as the False Positive Rate for instance) also have to be estimated from finite samples. Those unavoidable uncertainties and the resulting errors needs to be taken into account in the constraints. Third, a drastic change of a prediction policy can have a negative impact: for instance, if the task consists in setting wages in a company, employees will probably not tolerate an abrupt (negative) change in their salary. We might want to introduce fairness in a continuous manner hence we need a way to interpolate smoothly between fair and unfair situations. Finally, the introduced definitions are too stiff as do not allow to compare the discriminatory behaviour of two predictors which do not satisfy the fairness constraint(s): a predictor is either considered as fair or unfair. As for the risk, we would like to have some kind of order relation on the predictors regarding their fairness.

Hence the need to find a way to relax the initial definitions and provide meaningful notions of unfairness, defined as the violation of the fairness constraints. In what follows, we will focus on relaxations of the Demographic Parity constraint as all works from this thesis are based on this constraint. Recall that DP requires equality of two quantities of interest, P(f(Z) = 1 | S = 0) and P(f(Z) = 1 | S = 1).

A first natural way to relax exact fairness constraints is to ask for the difference of the quantities of interest to be small. In the case of Demographic Parity, this amounts to |P(f(Z) = 1 | S = 0) − P(f(Z) = 1 | S = 1)| ≤ ε,

for some prescribed threshold level ε ≥ 0. One can also consider other types of relaxations; for instance, multiplicative instead of additive. Note that those ideas easily generalize to other notions of fairness we have introduced in the classification with binary-valued sensitive attribute setting, S × Y = {0, 1}2. However, it is not clear how to proceed for other settings such as regression or general sensitive attribute.

Another way of relaxing fairness criteria relates to the alternative (conditional) independence definitions we have provided. As we have seen, three popular fairness constraints can be expressed as (conditional) independence conditions depending on the joint distribution of the triplet (f(Z), Y, S). In order to formalize this idea, any metric/divergence d on the space of probability distributions (such as Kolmogorov-Smirnov distance, total variation distance, Kullback-Leibler divergence, etc.) can be used to measure the discrepancy between group-wise predictions as

d Law(f(Z)|S = s), Law(f(Z)|S = s′) , for any values s, s′ ∈ S of the sensitive attribute. In particular, if d satisfies the “identity of indiscernible” (namely, for any probability distributions P and Q, d(P, Q) = 0 =⇒ P = Q) Demographic Parity is satisfied if and only if d Law(f(Z)|S = s), Law(f(Z)|S = s′) = 0, ∀s, s′ ∈ S.

A natural idea is to define a functional U on the set of predictors which quantifies the violation of the DP constraint using a chosen metric and to declare a prediction approximately fair if this functional does not exceed a user pre-specified threshold. In recent years a large variety of such relaxations has been proposed: correlation based (Baharlouei et al. 2019; Mary, Calauzènes, and El Karoui 2019; Komiyama et al. 2018); Kolmogorov-Smirnov distance (Agarwal, Dudík, and Wu 2019); Mutual information (Steinberg et al. 2020; Steinberg, Reid, and O’Callaghan 2020); Total Variation distance (Oneto, Donini, and Pontil 2019b; Oneto et al. 2019); Equality of means and higher moment matching (Raff, Sylvester, and Mills 2018; Fitzsimons et al. 2019; Calders et al. 2013; Berk et al. 2017; Olfat et al. 2020; Donini et al. 2018); Maximum Mean Discrepancy (Quadrianto and Sharmanska 2017; Madras et al. 2018); Wasserstein distance (Chiappa et al. 2020; Le Gouic, Loubes, and Rigollet 2020; Chzhen et al. 2020c; Gordaliza et al. 2019).

Table of contents :

1 An introduction to statistical learning problems

1.1 A typology of learning problems

1.2 Online learning and anytime deviation bounds

1.3 Generative models

2 An introduction to fair learning

2.1 Problem formalization and definitions

2.2 Relaxation and trade-offs

2.3 Fair regression and optimal transport

2.4 Contributions

3 Une introduction à l’apprentissage équitable

3.1 Formalisation du problème et définitions

3.2 Relaxation et compromis

3.3 Régression équitable et transport optimal

4 A nonasymptotic law of iterated logarithm for general M-estimators

4.1 Introduction

4.2 Uniform LIL for univariate M-estimators

4.3 Uniform LIL for M-estimators of a multivariate parameter

4.4 Application to Bandits

4.5 Numerical experiments

4.6 Conclusion and further work

4.7 Proofs

5 Bounding the expectation of the supremum of empirical processes indexed by Hölder classes

5.1 Introduction

5.2 A primer on Hölder classes and integral probability metrics

5.3 Empirical processes, metric entropy and Dudley’s bounds

5.4 Main result

5.5 Some extensions

5.6 Proofs

6 Statistical guarantees for generative models without domination

6.1 Introduction

6.2 Related work (and contributions)

6.3 Problem statement

6.4 Warming up: guarantees in the noiseless setting for W1

6.5 Main result in the noisy setting for smooth classes

6.6 Conclusion and outlook

6.7 Proofs

7 A minimax framework for quantifying risk-fairness trade-off in regression

7.1 Introduction

7.2 Problem statement and contributions

7.3 Prior and related works

7.4 Oracle α-relative improvement

7.5 Minimax setup

7.6 Application to linear model with systematic bias

7.7 Conclusion

7.8 Reminder

7.9 Proofs for Section 7.4

7.10 Proof of Theorem 7.5.3

7.11 Proofs for Section 7.6

7.12 Relation between UKS and U

8 An example of prediction which complies with Demographic Parity and equalizes group-wise risks in the context of regression

8.1 Introduction

8.2 Setup and general goal

8.3 Description of the family

8.4 Discussion and open questions

8.5 Conclusion

8.6 Extension to non-binary sensitive attribute

8.7 Proofs

9 Classification with abstention but without disparities

9.1 Introduction

9.2 Problem presentation

9.3 Optimal classifier

9.4 Empirical method

9.5 Finite sample guarantees

9.6 LP reduction

9.7 Experiments

9.8 Conclusion

9.9 Proofs

Bibliography