
Models of stimulus-driven populations

A significant part of correlations between sensory neurons can be due to the stimulus. Thus, explicitly adding the influence of the stimulus on neural responses should further improve the description of responses. Furthermore, taking into account neural correlations can significantly improve the description of responses to stimuli, and thus improve decoding precision (Schwartz et al., 2012).

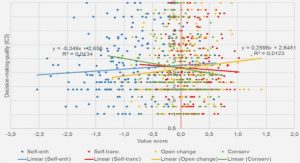

Importantly, the framework presented here can describe correlations with either a stimulus or behaviors (Lawhern et al., 2010). Behaviors correlate with the motor neurons that control them, but they are also known to modulate sensory neurons (Nienborg et al., 2012). In general, the models presented here relate neural responses to some external variable s, without assuming any causality. Thus, although we refer to s as the stimulus for simplicity, it can also stand for behaviors in general, such as arm movements, that influence or are influenced by the neural population.

An extensive part of the neuroscience literature has focused on modeling the influence of a stimulus s on responses, $P(\sigma \mid s)$. A complete survey of such models is beyond the scope of this review. Here, we focus on models of responses in which correlations cannot be explained by the stimulus alone.

Complex noise models

A simple approach is to model the probability of responses to each stimulus s, $P(\sigma \mid s)$, using any of the models described previously. This method can capture complex noise correlations between neurons. If the set of stimuli is discrete, then the number of parameters to estimate grows linearly with the number of stimuli. To limit the number of parameters, one can impose constraints on them, e.g. that some parameters are constant across stimuli.

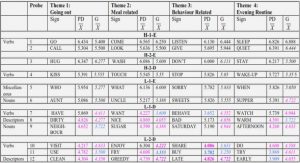

A common example is the stimulus-dependent Ising model, where each distribution $P(\sigma \mid s)$ is modeled by an Ising model (eq. II.7). Schaub and Schultz (2012) applied this model to a population of orientation-selective neurons and showed, using simulations, how decoding could be achieved in this framework. However, this direct approach used 10,000 responses per stimulus, which is challenging to obtain experimentally.
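To make the decoding step concrete, here is a minimal sketch of Bayesian decoding with one Ising model per stimulus. It assumes binary responses in {0, 1}, an energy of the form h·σ + σᵀJσ (the exact convention of eq. II.7 may differ), and a population small enough for exhaustive enumeration. The function names `ising_log_prob` and `decode` and the toy parameters are illustrative, not taken from Schaub and Schultz (2012).

```python
import itertools
import numpy as np

def ising_log_prob(sigma, h, J):
    """Log-probability of pattern sigma under P(sigma) ∝ exp(h·sigma + sigma^T J sigma).

    The normalization is computed by enumerating all 2^N patterns,
    which is only feasible for small N.
    """
    n = len(h)
    patterns = np.array(list(itertools.product([0, 1], repeat=n)), dtype=float)
    scores = patterns @ h + np.einsum("pi,ij,pj->p", patterns, J, patterns)
    log_z = np.logaddexp.reduce(scores)  # log of the partition function
    sigma = np.asarray(sigma, dtype=float)
    return float(sigma @ h + sigma @ J @ sigma - log_z)

def decode(sigma, models, prior=None):
    """Posterior P(s | sigma) given a list of (h, J) pairs, one per stimulus."""
    n_stim = len(models)
    if prior is None:
        prior = np.full(n_stim, 1.0 / n_stim)  # assumed uniform prior
    prior = np.asarray(prior, dtype=float)
    log_post = np.array([ising_log_prob(sigma, h, J) for h, J in models])
    log_post = log_post + np.log(prior)
    return np.exp(log_post - np.logaddexp.reduce(log_post))

# Toy example: two stimuli that drive neurons 0 and 1 in opposite directions.
h0 = np.array([2.0, -2.0, 0.0])
h1 = np.array([-2.0, 2.0, 0.0])
J = np.zeros((3, 3))  # no couplings in this toy case
posterior = decode([1, 0, 0], [(h0, J), (h1, J)])
```

In this toy case the response in which only neuron 0 spikes is attributed mostly to the first stimulus. With fitted couplings J, the same code would take noise correlations into account during decoding.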

A possible approximation, proposed by da Silveira and Berry (2014), is to separate neurons into two pools and to require that, for each stimulus, the fields $h_i$ and couplings $J_{ij}$ depend only on which pool each neuron belongs to. This drastically reduces the number of parameters, but it is unclear how accurately this model describes biological responses. Another possible approximation is to assume that, in eq. II.7, the fields h depend on the stimulus but the couplings J do not. The challenge is then to model how the fields h depend on the stimulus s. Granot-Atedgi et al. (2013) modeled this dependence with a linear-nonlinear model:

$$h_i(s) = f_i(k_i \cdot s), \qquad \text{(II.18)}$$

where $k_i$ is a linear filter and $f_i$ is a nonlinearity. This was made possible by the simplicity of the stimulus and of the neurons studied: retinal ganglion cells stimulated by a uniform, time-varying light intensity. Often, however, there is no simple model to predict how neurons respond to a stimulus, e.g. natural movies (Gollisch and Meister, 2010; McIntosh et al., 2016). A helpful trick is to use time-dependent models instead of stimulus-dependent ones (Tkacik et al., 2010; Granot-Atedgi et al., 2013; Köster et al., 2014; Ganmor et al., 2015). One records multiple responses to repetitions of a stimulus and computes the mean response in each time bin, also called the peristimulus time histogram (PSTH). One then studies the maximum entropy (ME) model that reproduces this mean response in each time bin, together with the correlations between neurons:

$$P(\sigma \mid t) \propto \exp\left(h_t^\top \sigma + \sigma^\top J \sigma\right).$$
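The statistics that such a time-dependent model must reproduce can be computed directly from repeated trials. The sketch below assumes responses stored as a binary array of shape (repeats, time bins, neurons). The function names are illustrative, and the residual covariance averaged over time is only one plausible choice for the "correlations between neurons" constraint.

```python
import numpy as np

def psth(responses):
    """Mean response of each neuron in each time bin, averaged over repeats.

    responses: binary array of shape (n_repeats, n_time_bins, n_neurons).
    Returns an array of shape (n_time_bins, n_neurons).
    """
    return np.asarray(responses, dtype=float).mean(axis=0)

def noise_covariance(responses):
    """Covariance of responses around the PSTH, averaged over repeats and time bins.

    This captures correlations that the time-dependent mean alone does not explain.
    It is the biased (divide-by-n) estimator.
    """
    r = np.asarray(responses, dtype=float)
    residuals = r - r.mean(axis=0, keepdims=True)
    n_repeats, n_bins, _ = r.shape
    return np.einsum("rti,rtj->ij", residuals, residuals) / (n_repeats * n_bins)

# Toy data: 2 repeats, 2 time bins, 2 neurons.
responses = np.array([
    [[1, 0], [0, 1]],
    [[1, 1], [0, 0]],
])
mean_response = psth(responses)
cov = noise_covariance(responses)
```

Here neuron 0 responds identically on every repeat, so all of its variability is explained by the PSTH, whereas neuron 1 retains trial-to-trial variability.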

Transition models with latent dynamics

The transition models presented above can account for some correlations between recorded neurons. However, recorded neurons are often part of a larger population, especially in the cortex, and the dynamics of this larger population can have important effects. Such dynamics have therefore been included in multiple transition models in the form of dynamical latent variables. In practice, these models are built on generalized linear models (GLMs) rather than leaky integrate-and-fire neurons, as GLMs are simpler to learn. This kind of model is sometimes called a generalized linear model augmented with a state-space model (Vidne et al., 2012). They have proven very successful at decoding and at predicting correlations between neurons in the retina (Vidne et al., 2012), in V1 (Archer et al., 2014), and in motor cortex (Lawhern et al., 2010; Macke et al., 2011).

There are multiple ways to account for latent dynamics in the GLM input current (eq. II.30). A simple way is to take a latent variable z following a Gaussian autoregressive process (eq. II.26), and add the influence of the latent variable to the input current I (Kulkarni and Paninski, 2007; Lawhern et al., 2010). Iit = i(st) + Ipop,it + Cizt.
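As an illustration of how a shared latent variable induces correlations, the following sketch simulates a simplified version of such a model. It assumes a first-order autoregressive latent process z[t] = a·z[t−1] + noise (eqs. II.26 and II.30 may use a different form), an exponential nonlinearity, and Poisson spike counts. It also omits the population coupling term. The names `simulate_latent_glm`, `stim_drive`, `a` and `noise_std` are hypothetical.

```python
import numpy as np

def simulate_latent_glm(stim_drive, C, a=0.95, noise_std=0.1, dt=0.02, seed=0):
    """Simulate spike counts from a GLM whose input current includes a shared latent variable.

    stim_drive: array (n_bins, n_neurons), stimulus contribution to the input current.
    C: array (n_neurons,), loading of each neuron on the latent variable z.
    """
    rng = np.random.default_rng(seed)
    stim_drive = np.asarray(stim_drive, dtype=float)
    C = np.asarray(C, dtype=float)
    n_bins, _ = stim_drive.shape

    # Latent variable: Gaussian AR(1) process (assumed form).
    z = np.zeros(n_bins)
    for t in range(1, n_bins):
        z[t] = a * z[t - 1] + noise_std * rng.standard_normal()

    # Input current = stimulus term + latent term (population coupling omitted here).
    current = stim_drive + np.outer(z, C)
    rate = np.exp(current)           # exponential nonlinearity, in spikes per second
    spikes = rng.poisson(rate * dt)  # spike counts in bins of width dt
    return z, spikes

# Constant stimulus drive of 10 spikes/s; neuron 3 is decoupled from the latent variable.
stim_drive = np.full((500, 4), np.log(10.0))
C = np.array([1.0, 1.0, 0.5, 0.0])
z, spikes = simulate_latent_glm(stim_drive, C)
```

Neurons with non-zero loadings in C share fluctuations of z, so their firing rates co-vary even though the stimulus drive is constant.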

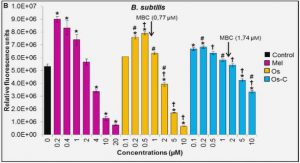

Recordings from retinal ganglion cells

We analyzed previously published ex vivo recordings from retinal ganglion cells of the tiger salamander (Ambystoma tigrinum) (Tkacik et al., 2014). In brief, animals were euthanized according to institutional animal care standards. The retina was extracted from the animal, maintained in an oxygenated Ringer solution, and recorded on the ganglion cell side with a 252-electrode array. Spike sorting was done with custom software (Marre et al., 2012), and N = 160 neurons were selected for the stability of their spike waveforms and firing rates and for the absence of refractory period violations.

Maximum entropy models

We are interested in modeling the probability distribution of population responses in the retina. The responses are first binned into 20-ms time intervals. The response of neuron i in a given interval is represented by a binary variable $\sigma_i$, which takes value 1 if the neuron spikes in this interval, and 0 if it is silent. The population response in this interval is represented by the vector $\sigma = (\sigma_1, \ldots, \sigma_N)$ of all neuron responses (Figure VI.2A). We define the population rate K as the number of neurons spiking in the interval: $K(\sigma) = \sum_{i=1}^{N} \sigma_i$.
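The binning described above can be sketched as follows, assuming spike times are given in milliseconds as one array per neuron. The function name `bin_spikes` and the toy spike times are illustrative.

```python
import numpy as np

def bin_spikes(spike_times_ms, duration_ms, bin_ms=20):
    """Convert spike times into a binary response matrix.

    spike_times_ms: list of arrays, one per neuron, with spike times in ms.
    Returns sigma of shape (n_bins, n_neurons): sigma[t, i] is 1 if neuron i
    spiked at least once in time bin t, and 0 otherwise.
    """
    n_bins = duration_ms // bin_ms
    edges = np.arange(n_bins + 1) * bin_ms
    sigma = np.zeros((n_bins, len(spike_times_ms)), dtype=np.uint8)
    for i, times in enumerate(spike_times_ms):
        counts, _ = np.histogram(times, bins=edges)
        sigma[:, i] = counts > 0  # several spikes in one bin still count as 1
    return sigma

# Toy recording: 3 neurons, 100 ms.
spike_times_ms = [np.array([5.0, 12.0, 47.0]), np.array([25.0]), np.array([])]
sigma = bin_spikes(spike_times_ms, duration_ms=100)
K = sigma.sum(axis=1)  # population rate in each time bin
```

Each row of `sigma` is one population response, and `K` counts the neurons that spiked in the corresponding bin.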

We build three models for the probability of responses, $P(\sigma)$. Each model reproduces a chosen set of statistics, meaning that these statistics have the same value in the model and in the empirical data. The first model reproduces the firing rate of each neuron and the distribution of the population rate. The second model also reproduces the correlation between each neuron and the population rate. The third model reproduces the whole joint probability of each single neuron with the population rate. The models form a hierarchy, because the statistics reproduced by each model are also captured by the next one.

Minimal model. We build a first model that reproduces the firing rate of each neuron, $P(\sigma_i = 1) = \langle \sigma_i \rangle$, and the distribution of the population rate, $P(K)$. We also want the model to have no additional constraints, and thus to be as random as possible. In statistical physics and information theory, the randomness of a distribution P is measured by its entropy S(P):

$$S(P) = -\sum_{\sigma} P(\sigma) \ln P(\sigma).$$
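The entropy defined above can be evaluated numerically for any explicit distribution. The sketch below also shows a naive plug-in estimate from observed binary patterns. This estimate is only reasonable when the number of samples is large compared to the number of distinct patterns, which is rarely the case for large populations. Function names are illustrative.

```python
import numpy as np

def entropy(p):
    """Entropy in nats of a discrete distribution given as an array of probabilities."""
    p = np.asarray(p, dtype=float)
    p = p[p > 0]  # terms with p = 0 contribute nothing to the sum
    return float(-np.sum(p * np.log(p)))

def empirical_pattern_entropy(sigma):
    """Plug-in entropy estimate from observed patterns (rows of sigma)."""
    _, counts = np.unique(np.asarray(sigma), axis=0, return_counts=True)
    return entropy(counts / counts.sum())

# Two neurons whose four joint patterns are each observed once.
patterns = np.array([[0, 0], [0, 1], [1, 0], [1, 1]])
s_uniform = empirical_pattern_entropy(patterns)
```

A uniform distribution over the four patterns of two neurons has the largest possible entropy, ln 4, while a distribution concentrated on a single pattern has zero entropy.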

Tractable maximum entropy model for coupling neuron firing to population activity

The principle of maximum entropy (Jaynes, 1957a,b) provides a powerful tool to explicitly construct probability distributions that reproduce key statistics of the data but are otherwise as random as possible. We introduce a novel family of maximum entropy models of spike patterns that preserve the firing rate of each neuron, the distribution of the population rate, and the correlation between each neuron and the population rate, with no additional assumptions (Figure VI.2A). Under these constraints, the maximum entropy distribution over spike patterns in a fixed 20-ms time window is given by (see Materials and Methods):

$$P(\sigma_1, \ldots, \sigma_N) = \frac{1}{Z} \exp\left(\sum_{i=1}^{N} \alpha_i \sigma_i + \sum_{i=1}^{N} \beta_i K \sigma_i + \gamma_K\right),$$

where $\sigma_i$ equals 1 when neuron i spikes within the time window, and 0 otherwise, $K = \sum_i \sigma_i$ is the population rate, and Z is a normalization constant. The parameters $(\alpha_i)_{i=1,\ldots,N}$, $(\beta_i)_{i=1,\ldots,N}$ and $(\gamma_K)_{K=0,\ldots,N}$ must be fitted to empirical data. We refer to this model as the linear-coupling model, because of the linear term $\beta_i K \sigma_i$ in the exponential.

Unlike maximum entropy models in general, this model is tractable, meaning that its prediction for the statistics of spike patterns has an analytical expression that can be computed efficiently using polynomial algebra. This allows us to infer the model parameters rapidly for large populations on a standard computer, using Newton's method (see Materials and Methods). We learned this model for a population of N = 160 salamander retinal ganglion cells stimulated by a natural movie. It took our algorithm 14 seconds to fit the 3N − 2 model parameters (see Mathematical derivations) so that the maximum discrepancy between the model and the data was smaller than $10^{-6}$ (Figure VI.2B-D).

The linear-coupling model provides a rigorous mathematical formulation of the hypotheses underlying the modeling approach of Okun et al. (2015) applied to cortical populations.

In that work, synthetic spike trains were generated by shuffling spikes from the original data so as to match the three constraints listed above on the single-neuron spike rates, the distribution of population rates, and their linear correlation. Shuffling data, i.e. increasing randomness and hence entropy, while constraining mean statistics, has previously been shown to be equivalent to the principle of maximum entropy in the context of pairwise correlations (Bialek and Ranganathan, 2007). Our formulation provides a fast way to learn the model and to make predictions from it, as we shall see below. In addition, it allows us to calculate the probability of individual spike patterns, Eq. III.10, which a generative procedure such as the one in Okun et al. (2015) cannot.
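One way to see why a model of this kind is tractable is to write it as P(σ) = exp(Σ_i (a_i + b_i K) σ_i + g_K) / Z, with K = Σ_i σ_i. The parameter names a, b and g are chosen here for illustration. For a fixed K, the sum over all patterns with K spikes is the coefficient of x^K in the polynomial Π_i (1 + x·exp(a_i + b_i K)), so Z requires only polynomial multiplications rather than a sum over 2^N patterns. The sketch below follows this idea and checks it against brute-force enumeration. It is not necessarily the authors' algorithm, and for large N a log-domain version would probably be needed to avoid overflow.

```python
import itertools
import numpy as np

def partition_function(a, b, g):
    """Z for P(sigma) ∝ exp(sum_i (a_i + b_i K) sigma_i + g_K), with K = sum_i sigma_i."""
    n = len(a)
    z = 0.0
    for k in range(n + 1):
        weights = np.exp(a + b * k)   # weight of each neuron spiking, given K = k
        coeffs = np.array([1.0])      # the constant polynomial 1
        for w in weights:
            coeffs = np.convolve(coeffs, [1.0, w])  # multiply by (1 + w x)
        z += np.exp(g[k]) * coeffs[k]  # coefficient of x^k sums over patterns with k spikes
    return z

def pattern_log_prob(sigma, a, b, g):
    """Log-probability of a single spike pattern."""
    sigma = np.asarray(sigma, dtype=float)
    k = int(sigma.sum())
    return float(np.dot(a + b * k, sigma) + g[k] - np.log(partition_function(a, b, g)))

def partition_function_brute_force(a, b, g):
    """Reference value obtained by enumerating all 2^N patterns (small N only)."""
    z = 0.0
    for pattern in itertools.product([0, 1], repeat=len(a)):
        sigma = np.array(pattern, dtype=float)
        k = int(sigma.sum())
        z += np.exp(np.dot(a + b * k, sigma) + g[k])
    return z

# Random parameters for a small population of 6 neurons.
rng = np.random.default_rng(0)
n = 6
a = rng.normal(size=n)
b = 0.1 * rng.normal(size=n)
g = rng.normal(size=n + 1)
z_fast = partition_function(a, b, g)
z_slow = partition_function_brute_force(a, b, g)
```

The polynomial route costs on the order of N³ operations in this naive form, whereas the enumeration grows as 2^N. The same expression gives the probability of any individual pattern once Z is known.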

Table of contents :

Introduction

I Sophisticated structure, complex functions: the retina

I.1 Anatomy of the retina

I.2 Stimulus encoding by retinal ganglion cells

II Correlations in neural systems: models of population activity

II.1 Instantaneous correlations

II.2 Temporal correlations

III A tractable method for describing complex couplings between neurons and population rate

III.1 Introduction

III.2 Materials and Methods

III.3 Results

III.4 Discussion

III.5 Mathematical derivations

IV Closed-loop Estimation of Retinal Network Sensitivity by Local Empirical Linearization

IV.1 Introduction

IV.2 Materials and Methods

IV.3 Results

IV.4 Discussion

IV.5 Mathematical derivations

V Accurate discrimination of population responses in the retina using neural metrics learned from activity patterns

V.1 Introduction

V.2 Materials and Methods

V.3 Results

V.4 Discussion

VI Discussion

VI.1 Population correlations

VI.2 Discrimination

Bibliography