
Bridging social networking and cloud computing

The gap between social networking and cloud computing can be bridged by developing remote viewer solutions.

In the widest sense, the thin client paradigm refers to a terminal (desktop, PDA, smartphone, tablet) essentially limited to I/O devices (display, user pointer, keyboard), with all related computing and storage resources located on a remote server farm. This model implicitly assumes the availability of a network connection (be it wired or wireless) between the terminal and the computing resources.

Within the scope of this thesis, the term remote display refers to all the software modules, located at both end points (server and client), that make it possible, in real time, for the graphical content generated by the server to be displayed on the client end point and for subsequent user events to be sent back to the server. When these transmission and display processes take into account some complementary semantic information about the graphical content (such as its type, format, spatio-temporal relations or usage conditions, to mention but a few), the remote display becomes a semantic remote display.

Our study brings to light the potential of multimedia scene-graphs for supporting semantic remote displays. The concept of the scene-graph emerged with the advent of the modern multimedia industry, as an attempt to bridge the realms of structural data representation and multimedia objects. While its definition remains quite fuzzy and application dependent, in the sequel we shall consider that a scene-graph is [BiFS, 2006]: “a hierarchical representation of audio, video and graphical objects, each represented by a […] node abstracting the interface to those objects. This allows manipulation of an object’s properties, independent of the object media.” Current day multimedia technologies also provide the possibility of direct interaction with individual nodes according to user actions; such a scene-graph will be further referred to as an interactive multimedia scene-graph.
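The quoted definition can be illustrated with a minimal sketch. It is not the BiFS node model: the `SceneNode` class, its fields and the node names are hypothetical and chosen only for illustration. It shows a hierarchy of nodes that abstract media objects, and a property change that leaves the encoded media payload untouched.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, Iterator, List


@dataclass
class SceneNode:
    """One node of a toy scene-graph (hypothetical, not the BiFS node set)."""
    name: str
    kind: str                                   # e.g. "group", "text", "image"
    properties: Dict[str, Any] = field(default_factory=dict)
    media: bytes = b""                          # opaque encoded payload, never decoded here
    children: List["SceneNode"] = field(default_factory=list)

    def add(self, child: "SceneNode") -> "SceneNode":
        """Attach a child node and return it, so that calls can be chained."""
        self.children.append(child)
        return child

    def walk(self) -> Iterator["SceneNode"]:
        """Depth-first traversal of the hierarchy, parents before children."""
        yield self
        for child in self.children:
            yield from child.walk()

    def find(self, name: str) -> "SceneNode":
        """Return the first node with the given name."""
        for node in self.walk():
            if node.name == name:
                return node
        raise KeyError(name)


# Build a small hierarchy: root -> window -> (logo, title).
root = SceneNode("root", "group")
window = root.add(SceneNode("window", "group", {"x": 0, "y": 0}))
logo = window.add(SceneNode("logo", "image", {"x": 10, "y": 20}, media=b"\x89PNG..."))
window.add(SceneNode("title", "text", {"x": 60, "y": 20, "string": "Hello"}))

# Move the image by editing a node property; the media bytes are not touched.
payload_before = logo.media
root.find("logo").properties["x"] = 200
```

In this sketch, an interactive scene-graph would additionally attach user-event handlers to individual nodes.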

In order to allow community users to benefit from the cloud computing functionalities, a new generation of thin clients should be designed. They should provide universal access (any device, any network, any user …) to virtual collaborative multimedia environments, through versatile, user-friendly, real-time interfaces based on open standards and open-source software tools.

Objectives

The main objective when developing a mobile thin client framework is to offer the same user experience as when using fixed desktop applications, see Figure 1.11.

Under this framework, the definition of a multimedia remote display for mobile thin clients remains a challenging research topic, requiring at the same time a high performance algorithm for the compression of heterogeneous content (text, graphics, image, video, 3D, …) and versatile, user-friendly real-time interaction support [Schlosser, 2007], [Simoens, 2008]. The underlying technical constraints are connected to the network (arbitrarily changing bandwidth conditions, transport errors, and latency), to the terminal (limitations in CPU, storage, and I/O resources), and to market acceptance (backward compatibility with legacy applications, ISO compliance, terminal independence, and open source support).

The present thesis introduces a semantic multimedia remote viewer for collaborative mobile thin clients, see Table 1.1. The principle consists of representing the graphical content generated by the server as an interactive multimedia scene-graph enriched with novel components for directly handling (at the content level) the user collaboration. In order to cope with the mobility constraints, a semantic scene-graph management framework was designed (patent pending) so as to optimize the multimedia content delivery from the server to the client, under joint bandwidth-latency constraints in time-variant networks. The collaborative messages generated by the users are compressed by a dedicated dynamic lossless compression algorithm (patented solution). This new remote viewer was incrementally evaluated by the ISO community and its novel collaborative elements are currently accepted as extensions to the ISO/IEC JTC1 SC29 WG11 (MPEG-4 BiFS) standard [BiFS, 2012].

Thesis structure

The thesis is divided into three main chapters.

Chapter 2 presents a threefold analysis of the state-of-the-art technologies encompassed by remote viewers. In this respect, Section 2.1 investigates the use of multimedia content when designing a remote viewer. The existing technologies are studied in terms of binary compression, dynamic updating and content streaming. Section 2.2 considers the existing wired or wireless remote viewing solutions and assesses their compatibility with the challenges listed in Table 1.1. Section 2.3 brings to light the way in which the collaborative functionalities are currently offered. This chapter is concluded, in Section 2.4, by identifying the main state-of-the-art bottlenecks in the specification of a semantic multimedia remote viewer for collaborative mobile thin clients.

Chapter 3 addresses these bottlenecks by advancing a novel architectural framework. The principle is presented in Section 3.1, while the details concerning the architectural design are presented in Section 3.2. The main novel blocks are: XGraphic Listener, XParser, Semantic MPEG-4 Converter, Semantic Controller, Pruner, Semantic Adapter, Interaction Enabler, Collaboration Enrichment, Collaboration and Interaction Event Manager, and Collaboration and Interaction Handler.

Chapter 4 is devoted to the evaluation of this solution, which was benchmarked against its state-of-the-art competitors provided by VNC (RFB) and Microsoft (RDP). It was demonstrated that: (1) it features a high level of visual quality, e.g. PSNR values between 30 and 42 dB or SSIM values larger than 0.9999; (2) the down-link bandwidth gain factors range from 2 to 60, while the up-link bandwidth gain factors range from 3 to 10; (3) the network round-trip time is reduced by factors of 4 to 6; (4) the CPU activity is larger than in the Microsoft RDP case but is reduced by a factor of 1.5 with respect to VNC RFB.
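For readers unfamiliar with the PSNR metric quoted in the evaluation summary, the following minimal sketch computes it from its standard definition, PSNR = 10·log10(peak² / MSE). The function name, the flat pixel sequences and the 8-bit peak value of 255 are assumptions made for illustration; the thesis does not specify the measurement code.

```python
import math
from typing import Sequence


def psnr(reference: Sequence[int], received: Sequence[int], peak: int = 255) -> float:
    """Peak signal-to-noise ratio, in dB, between two equally sized pixel sequences."""
    if len(reference) != len(received) or len(reference) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    # Mean squared error over all pixel positions.
    mse = sum((a - b) ** 2 for a, b in zip(reference, received)) / len(reference)
    if mse == 0:
        return math.inf          # identical images: PSNR is unbounded
    return 10.0 * math.log10(peak ** 2 / mse)


# Hypothetical 4-pixel example: the received frame deviates slightly from the reference.
reference_frame = [100, 120, 140, 160]
received_frame = [101, 119, 142, 158]
quality_db = psnr(reference_frame, received_frame)
```

Higher values indicate a closer match between the frame displayed on the client and the one generated on the server.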

Chapter 5 concludes the thesis and opens perspectives for future work.

The references and a list of abbreviations are also presented.

The manuscript contains three appendices. The first two present the detailed descriptions of the underlying patents, while Appendix III gives the conversion dictionary used for converting the XProtocol requests into their MPEG-4 BiFS and LASeR counterparts.

State of the art

This chapter gives a critical overview of the existing solutions for instantiating remote display systems on mobile thin terminals (X, VNC, NX, RDP, …). Confronting the current limitations with the scientific and applicative challenges highlights that: (1) a true collaborative multimedia experience cannot be offered at the terminal level; (2) multimedia content compression is addressed solely from a static image point of view, thus leading to an overconsumption of network resources; (3) there is no general solution that is independent of the software and hardware particularities of the terminal, which hinders the deployment of standard-based solutions.

Consequently, defining a multimedia remote display system for mobile thin terminals remains a major scientific challenge with multiple applicative outcomes. First, a collaborative multimedia experience should be provided at the terminal side. Second, the constraints related to the network (time-varying bandwidth, errors and latency) and to the terminal (reduced computing and memory resources) should be met. Finally, the market acceptance of such a solution depends on its independence from terminal manufacturers and on the support offered by the communities.

Content representation technologies

Introduction

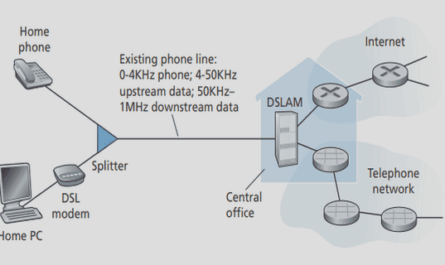

Any thin client solution can be accommodated by a client-server model, where the client is connected to the server through a connection managed by a given protocol. From the functional point of view, the software application (text editing, www browsing, multimedia entertainment, …) runs on the server and generates some semantically structured graphical output (i.e. a collection of structured text, image/video, 2D/3D graphics, …), see Figure 2.1. This graphical content should be, in the ideal case, transmitted and visualized at the client-side.

Consequently, a key issue in designing a thin client solution is the choice of the multimedia representation technology. Moreover, in order to fully reproduce the same experience on the user’s terminal, it is not sufficient to transmit only the raw audio-visual data. Additional information, describing the spatio-temporal relations between these elementary data, should also be available.

This basic observation brings to light the potential of multimedia scenes for serving thin client solutions. A multimedia scene is composed of its elementary components (text, image/video, 2D/3D graphics, …) and of the spatio-temporal relations among them (these relations are further referred to as the scene description). The scene description specifies four aspects of a multimedia presentation:

- how the scene elements (media or graphics) are organized spatially, e.g. the spatial layout of the visual elements;

- how the scene elements (media or graphics) are organized temporally, i.e. if and how they are synchronized, when they start or end;

- how to interact with the elements in the scene (media or graphics), e.g. when a user clicks on an image;

- how the scene changes over time, e.g. when an image changes its coordinates.
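The four aspects listed above can be sketched in a few lines. This is an illustrative toy model, not the BiFS or LASeR scene description format: the `Element` and `SceneDescription` classes, their fields and the element names are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class Element:
    position: Tuple[int, int]    # spatial aspect: where the element is drawn
    start: float                 # temporal aspect: activation time, in seconds
    end: float                   # temporal aspect: deactivation time, in seconds


@dataclass
class SceneDescription:
    elements: Dict[str, Element] = field(default_factory=dict)
    # Interaction aspect: element name -> callback run when the element is clicked.
    on_click: Dict[str, Callable[..., None]] = field(default_factory=dict)

    def active_at(self, t: float) -> List[str]:
        """Names of the elements that are active at time t, sorted alphabetically."""
        return sorted(n for n, e in self.elements.items() if e.start <= t < e.end)

    def click(self, name: str) -> None:
        """Dispatch a user click to the handler registered for the element, if any."""
        handler = self.on_click.get(name)
        if handler is not None:
            handler(self)

    def move(self, name: str, position: Tuple[int, int]) -> None:
        """Update aspect: change the coordinates of an element."""
        self.elements[name].position = position


scene = SceneDescription()
scene.elements["image"] = Element(position=(0, 0), start=0.0, end=10.0)
scene.elements["caption"] = Element(position=(0, 100), start=2.0, end=8.0)
# Clicking the image moves it: interaction triggers a scene update.
scene.on_click["image"] = lambda s: s.move("image", (50, 50))

scene.click("image")
```

Note that none of these operations reads or modifies the media data itself; only the description of the scene changes.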

The action of transforming a multimedia scene from a common representation space to a specific presentation device (i.e. speakers and/or a multimedia player) is called rendering.

Enabling all the multimedia scene elements to be encoded independently facilitates the development of authoring, editing, and interaction tools. It permits the modification of the scene description without having to decode or process in any way the audio-visual media.

Comparison of content representation technologies

Amongst the technologies for heterogeneous content representation existing today, we will consider those most widely used in the mobile thin client environment: BiFS [BiFS, 2005], LASeR [LASeR, 2008], Adobe Flash [Adobe, 2005], Java [Java, 2005], SMIL/SVG [SMIL/SVG, 2011], [TimedText, 2010], [xHTML, 2009].

We benchmarked all the solutions according to their performance in the areas of binary encoding, dynamic updates and streaming.

Binary encoding

Multimedia scene binary encoding is already offered by several market solutions: BiFS, LASeR, Flash, Java, to mention but a few. On the one hand, LASeR is the only technology specifically developed to address the needs of mobile thin devices, which require at the same time strong compression and low decoding complexity. On the other hand, BiFS takes the lead over LASeR through its power of expression and its strong graphics features, including the possibility of describing 3D scenes.

A particular case is represented by the xHTML technology which has no dedicated compression mechanism, but exploits some generic lossless compression algorithms (e.g. gzip) [Liu, 2005], [HTTP, 1999].
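As an illustration of such generic lossless compression, the sketch below compresses a small synthetic markup document with Python's standard `gzip` module and restores it bit-exactly. The markup content is made up for the example, and the ratio obtained on real xHTML pages will differ.

```python
import gzip

# Synthetic, highly repetitive markup standing in for an xHTML page (made-up content).
markup = (
    "<html><body><ul>"
    + "".join(f'<li class="item">Entry {i}</li>' for i in range(200))
    + "</ul></body></html>"
).encode("utf-8")

compressed = gzip.compress(markup)        # generic lossless compression
restored = gzip.decompress(compressed)    # exact reconstruction of the original bytes

# Compression ratio achieved on this particular input.
ratio = len(markup) / len(compressed)
```

Because the algorithm is generic, it exploits only textual redundancy and knows nothing about the scene structure, in contrast to the dedicated binary encodings discussed above.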

Table of contents :

Acknowledgments

Abstract

Table of contents

List of figures

List of tables

List of code

Chapter 1. Introduction

1.1 Context

1.1.1. Online social networking

1.1.3. Bridging social networking and cloud computing

1.2 Objectives

1.3 Thesis structure

Chapter 2. State of the art

2.1 Content representation technologies

2.1.1. Introduction

2.1.2. Comparison of content representation technologies

2.1.3. BiFS and LASeR principles

2.1.4. Conclusion

2.2 Mobile Thin Clients technologies

2.2.1. Overview

2.2.2. X window system

2.2.3. NoMachine NX technology

2.2.4. Virtual Network Computing

2.2.5. Microsoft RDP

2.2.6. Conclusion

2.3 Collaboration technologies

2.4 Conclusion

B. Joveski, Semantic multimedia remote viewer for collaborative mobile thin clients

Chapter 3. Advanced architecture

3.1 Functional description

3.2 Architectural design

3.2.1. Server-side components

3.2.2. Client-side components

3.2.3. Network components

3.3 Conclusion

Chapter 4. Architectural benchmark

4.1 Overview

4.2 Experimental setup and results

4.2.1. Visual quality

4.2.2. Down-link bandwidth consumption

4.2.3. User interaction efficiency

4.2.4. CPU activity

4.3 Discussions

4.3.1. Semantic Controller performance

4.3.2. Pruner performance

Chapter 5. Conclusion and Perspectives

5.1 Conclusion

5.2 Perspectives

List of abbreviations

References

Appendix I

Appendix II

Appendix III

List of publications