Image and Pattern Classification

Chapter 3 exploits the properties of scattering operators for signal classification. Given $K$ signal classes, we observe $L$ training samples $x_{k,l}$, $l = 1, \dots, L$, for each class $k = 1, \dots, K$. These samples are used to estimate a classifier $\hat{k}(x)$, which assigns one of the $K$ classes to each new signal $x$.
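Section 3.4 of the contents lists a generative classifier built from affine models. As a minimal sketch, assuming each class $k$ is approximated by the affine space through the mean of its samples $x_{k,l}$ spanned by their $d$ leading principal directions, $\hat{k}(x)$ can select the class whose affine space is closest to $x$. The function names and the dimension `d` are hypothetical, and in the thesis the inputs would presumably be scattering coefficients rather than raw signals.

```python
import numpy as np

def fit_affine_models(X_by_class, d):
    """For each class k, estimate a mean and the d leading principal directions.

    X_by_class[k] has shape (L, N): one row per training sample x_{k,l}.
    Returns a list of (mean, basis) pairs; basis has shape (N, d) with
    orthonormal columns. Requires d <= min(L, N).
    """
    models = []
    for X in X_by_class:
        mu = X.mean(axis=0)
        # Rows of Vt are the principal directions of the centered samples.
        _, _, Vt = np.linalg.svd(X - mu, full_matrices=False)
        models.append((mu, Vt[:d].T))
    return models

def classify(x, models):
    """Return the class index whose affine space mu_k + span(V_k) is closest to x."""
    dists = []
    for mu, V in models:
        r = x - mu
        residual = r - V @ (V.T @ r)  # component of x - mu orthogonal to span(V)
        dists.append(np.linalg.norm(residual))
    return int(np.argmin(dists))
```

Projecting onto an affine space rather than comparing to the class mean alone lets each class absorb its main directions of variability without penalizing them.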

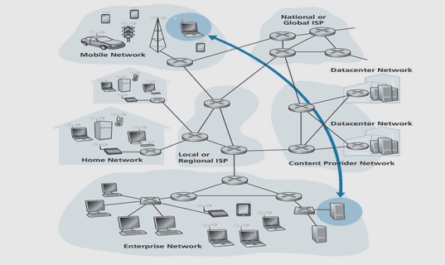

Complex object recognition problems require forms of invariance beyond those modeled by physical transformations. For instance, image datasets such as Caltech or Pascal exhibit large variability in shape, appearance and clutter, as shown in Figure 1.3. Similarly, challenging problems such as speech recognition must take speaker variability into account. However, even these datasets are affected by variability coming from physical transformations, so most object recognition architectures require a feature extraction step that eliminates such variability while building stability to deformations and keeping enough discriminative information. The efficiency of scattering representations in this role is first tested in settings where physical transformations and deformations account for most of the variability.

Texture Discrimination and Reconstruction from Scattering

Chapter 4 studies the efficiency of scattering representations for discriminating and reconstructing image and auditory textures. These problems require a statistical treatment of the observed variability, modeled as realizations of stationary processes. Stationary processes admit a spectral representation: their spectral density is computed from second moments and completely characterizes Gaussian processes. However, second moments are not enough to discriminate between most real-world textures. Texture classification and reconstruction thus require a representation of stationary processes that captures high-order statistics in order to discriminate non-Gaussian processes. One can include high-order moments $\mathbb{E}(|X(t)|^n)$, $n \geq 2$, in the texture representation, but their estimators have a large variance, because the expansive nature of $x^n$ for $n > 1$ amplifies large, rare events. In addition, discrimination requires a representation which is stable to changes in viewpoint or illumination. Julesz [Jul62] conjectured that the perceptual information of a stationary texture $X(t)$ is contained in a collection of statistics $\{\mathbb{E}(g_k(X(t))),\ k \in \mathcal{K}\}$. He originally stated his hypothesis in terms of second-order statistics, measuring pairwise interactions, but later reformulated it in terms of textons, local texture descriptors capturing interactions across different scales and orientations. Textons can be implemented with filter banks, such as oriented Gabor wavelets [LM01], which form the basis of several texture descriptors.
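To make the variance argument concrete, the sketch below computes in closed form the relative standard deviation of the empirical estimate of $\mathbb{E}(|X|^n)$ from $M$ independent samples, assuming $X \sim \mathcal{N}(0, 1)$ and using $\mathbb{E}(|X|^n) = (n-1)!!$ for even $n$. The Gaussian case is only an illustrative assumption; even in this light-tailed case the relative error grows quickly with $n$.

```python
import math

def gaussian_abs_moment(n):
    """E|X|^n for X ~ N(0, 1) and even n, i.e. (n-1)!! = 1 * 3 * ... * (n-1)."""
    if n % 2 != 0:
        raise ValueError("closed form used here only covers even n")
    return math.prod(range(1, n, 2))

def relative_std_of_moment_estimate(n, num_samples):
    """Std of the empirical mean of |X|^n over num_samples draws, divided by E|X|^n.

    Var(|X|^n) = E|X|^{2n} - (E|X|^n)^2, and averaging M i.i.d. samples
    divides the standard deviation by sqrt(M).
    """
    m_n = gaussian_abs_moment(n)
    m_2n = gaussian_abs_moment(2 * n)
    return math.sqrt(m_2n - m_n ** 2) / (m_n * math.sqrt(num_samples))

for n in (2, 4, 6, 8):
    print(n, relative_std_of_moment_estimate(n, 10_000))
```

With the number of samples fixed, moving from $n = 2$ to $n = 8$ increases the relative error by roughly an order of magnitude, which is the practical obstacle to using raw high-order moments as texture descriptors.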

The expected scattering representation is defined for processes with stationary increments. First-order scattering coefficients average wavelet amplitudes and yield information similar to the average spectral density within each wavelet subband. Second-order coefficients depend upon higher-order moments and are able to discriminate between non-Gaussian processes. Because the expected scattering is computed with non-expansive operators, it can be estimated consistently from windowed scattering coefficients. Moreover, thanks to the stability of wavelets to deformations, the resulting texture descriptor is robust to changes in viewpoint that produce small non-rigid deformations.
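The sketch below estimates first- and second-order expected scattering coefficients of a 1D signal by fully averaging wavelet modulus coefficients, $S_1(j_1) = \mathrm{mean}_u\, |x \star \psi_{j_1}(u)|$ and $S_2(j_1, j_2) = \mathrm{mean}_u\, ||x \star \psi_{j_1}| \star \psi_{j_2}(u)|$. It is an illustration under stated assumptions: the analytic Gabor-like filters, their parameters `xi0` and `sigma0`, the periodic boundary conditions and the restriction $j_2 > j_1$ are choices made here, not the filter bank of the thesis (Section 2.6.1).

```python
import numpy as np

def gabor_filter_bank(n, J, xi0=0.4 * np.pi, sigma0=0.15 * np.pi):
    """Hypothetical analytic Gabor-like wavelets in the Fourier domain.

    Filter j has center frequency xi0 * 2^-j and bandwidth sigma0 * 2^-j.
    Negative frequencies and DC are zeroed, so each filter is analytic
    and has zero mean.
    """
    omega = 2 * np.pi * np.fft.fftfreq(n)
    filters = []
    for j in range(J):
        xi, sigma = xi0 * 2.0 ** -j, sigma0 * 2.0 ** -j
        psi_hat = np.exp(-((omega - xi) ** 2) / (2 * sigma ** 2))
        psi_hat[omega <= 0] = 0.0
        filters.append(psi_hat)
    return filters

def wavelet_modulus(x, psi_hat):
    """|x * psi| computed with a circular (FFT-based) convolution."""
    return np.abs(np.fft.ifft(np.fft.fft(x) * psi_hat))

def expected_scattering(x, filters):
    """First- and second-order coefficients, estimated by a full average over u."""
    J = len(filters)
    S1 = np.zeros(J)
    S2 = np.zeros((J, J))  # only entries with j2 > j1 are filled
    for j1, psi1 in enumerate(filters):
        u1 = wavelet_modulus(x, psi1)
        S1[j1] = u1.mean()
        for j2 in range(j1 + 1, J):
            S2[j1, j2] = wavelet_modulus(u1, filters[j2]).mean()
    return S1, S2

rng = np.random.default_rng(1)
x = rng.standard_normal(1024)
S1, S2 = expected_scattering(x, gabor_filter_bank(len(x), J=4))
```

Since each step is a circular convolution, a modulus or a global mean, circularly shifting `x` leaves `S1` and `S2` unchanged up to rounding. Replacing the global mean by a local average gives windowed coefficients, from which the expected scattering is estimated.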

Characterization of Non-linearities

This section characterizes, from a stability point of view, the non-linearities that any invariant signal representation, and in particular a scattering representation, needs in order to produce locally invariant coefficients. Every stable, locally invariant signal representation incorporates a non-linear operator to produce its coefficients. Neural networks, and in particular convolutional networks, apply rectifications and sigmoids to the outputs of their "hidden units", whereas SIFT descriptors compute the norm of the filtered image gradient before pooling it into local histograms. Filter bank outputs are by definition translation covariant, not invariant. Indeed, if $y(u) = x \star h(u)$, then a translation of the input $x_c(u) = x(u - c)$ produces a translation of the output by the same amount: $x_c \star h(u) = y(u - c) = y_c(u)$.
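A minimal numerical check of this argument, assuming periodic boundary conditions and a hypothetical zero-mean Gabor-type filter `h` (its width, frequency and the shift `c` are arbitrary): translating the input translates the filter output, averaging the linear output of a zero-mean filter returns zero for every input, and averaging after a modulus gives a non-zero coefficient that the translation leaves unchanged. The last line checks that the modulus is non-expansive, which is the stability property that makes it a suitable non-linearity.

```python
import numpy as np

def circular_conv(x, h):
    """y = x * h with periodic boundary conditions, computed via the FFT."""
    return np.real(np.fft.ifft(np.fft.fft(x) * np.fft.fft(h)))

rng = np.random.default_rng(0)
n, c = 256, 17
x = rng.standard_normal(n)

# Hypothetical real band-pass filter centered at index 0, then made zero-mean.
t = np.fft.fftfreq(n, d=1.0 / n)          # integer offsets 0, 1, ..., -2, -1
h = np.exp(-t**2 / (2 * 4.0**2)) * np.cos(0.8 * t)
h -= h.mean()

y = circular_conv(x, h)
y_c = circular_conv(np.roll(x, c), h)     # input translated by c

# Covariance: the output of the translated input is the translated output.
covariant = np.allclose(y_c, np.roll(y, c))

# Pooling the linear output alone: invariant, but always 0 for a zero-mean filter.
linear_average = y.mean()

# Pooling after the modulus: a non-trivial, translation-invariant coefficient.
modulus_average, modulus_average_c = np.abs(y).mean(), np.abs(y_c).mean()

# Stability: the modulus is non-expansive, ||a| - |b|| <= |a - b| pointwise.
non_expansive = np.all(np.abs(np.abs(y) - np.abs(y_c)) <= np.abs(y - y_c) + 1e-12)
```

Swapping `np.abs` for a rectification such as `np.maximum(y, 0)` also yields a non-zero, translation-invariant average and is likewise non-expansive, which is consistent with the rectifications used in convolutional networks.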

Contents

List of Notations

1 Introduction

1.2 The Scattering Representation

1.3 Image and Pattern Classification

1.4 Texture Discrimination and Reconstruction from Scattering

1.5 Multifractal Scattering

2 Invariant Scattering Representations

2.1 Introduction

2.2 Signal Representations and Metrics for Recognition

2.2.1 Local translation invariance, Deformation and Additive Stability

2.2.2 Kernel Methods

2.2.3 Deformable Templates

2.2.4 Fourier Modulus, Autocorrelation and Registration Invariants

2.2.5 SIFT and HoG

2.2.6 Convolutional Networks

2.3 Scattering Review

2.3.1 Windowed Scattering transform

2.3.2 Scattering metric and Energy Conservation

2.3.3 Local Translation Invariance and Lipschitz Continuity to Deformations

2.3.4 Integral Scattering transform

2.3.5 Expected Scattering for Processes with stationary increments

2.4 Characterization of Non-linearities

2.5 On the L1 continuity of Integral Scattering

2.6 Scattering Networks for Image Processing

2.6.1 Scattering Wavelets

2.6.2 Scattering Convolution Network

2.6.3 Analysis of Scattering Properties

2.6.4 Fast Scattering Computations

2.6.5 Analysis of stationary textures with scattering

3 Image and Pattern Classification with Scattering

3.1 Introduction

3.2 Support Vector Machines

3.3 Compression with Cosine Scattering

3.4 Generative Classification with Affine models

3.4.1 Linear Generative Classifier

3.4.2 Renormalization

3.4.3 Comparison with Discriminative Classification

3.5 Handwritten Digit Classification

3.6 Towards an Object Recognition Architecture

4 Texture Discrimination and Synthesis with Scattering

4.1 Introduction

4.2 Texture Representations for Recognition

4.2.1 Spectral Representation of Stationary Processes

4.2.2 High Order Spectral Analysis

4.2.3 Markov Random Fields

4.2.4 Wavelet based texture analysis

4.2.5 Maximum Entropy Distributions

4.2.6 Exemplar based texture synthesis

4.2.7 Modulation models for Audio

4.3 Image texture discrimination with Scattering representations

4.4 Auditory texture discrimination

4.5 Texture synthesis with Scattering

4.5.1 Scattering Reconstruction Algorithm

4.5.2 Auditory texture reconstruction

4.6 Scattering of Gaussian Processes

4.7 Stochastic Modulation Models

4.7.1 Stochastic Modulations in Scattering

5 Multifractal Scattering

5.1 Introduction

5.2 Review of Fractal Theory

5.2.1 Fractals and Singularities

5.2.2 Fractal Processes

5.2.3 Multifractal Formalism and Wavelets

5.2.4 Multifractal Processes and Wavelets

5.2.5 Cantor sets and Dirac Measure

5.2.6 Fractional Brownian Motions

5.2.7 α-stable Lévy Processes

5.2.8 Multifractal Random Cascades

5.2.9 Estimation of Fractal Scaling Exponents

5.3 Scattering Transfer

5.3.1 Scattering transfer for Processes with stationary increments

5.3.2 Scattering transfer for non-stationary processes

5.3.3 Estimation of Scattering transfer

5.3.4 Asymptotic Markov Scattering

5.4 Scattering Analysis of Monofractal Processes

5.4.1 Gaussian White Noise

5.4.2 Fractional Brownian Motion and FGN

5.4.3 Lévy Processes

5.5 Scattering of Multifractal Processes

5.5.1 Multifractal Scattering transfer

5.5.2 Energy Markov Property

5.5.3 Intermittency characterization from Scattering transfer

5.5.4 Analysis of Scattering transfer for Multifractals

5.5.5 Intermittency Estimation for Multifractals

5.6 Scattering of Turbulence Energy Dissipation

5.7 Scattering of Deterministic Multifractal Measures

5.7.1 Scattering transfer for Deterministic Fractals

5.7.2 Dirac measure

5.7.3 Cantor Measures

A Wavelet Modulation Operators

A.1 Wavelet and Modulation commutation

A.2 Wavelet Near Diagonalisation Property

A.3 Local Scattering Analysis of Wavelet Modulation Operators

B Proof of Theorem 5.4.1

B.1 Proof of Lemma 5.4.2

B.2 Proof of Lemma 5.4.3

B.3 Proof of Lemma 5.4.4

References