
Supervised learning to predict and understand

In the broadest sense, machine learning can be defined as a set of powerful tools for making sense of data. Through modeling and algorithms, one major goal of the discipline is to extract interesting patterns from the data and to exploit them to make sensible decisions. In this thesis, we focus on supervised learning, where the response to a specific question is known for a given set of observations, called the training set, and the aim is to predict the response for new inputs. When the response is discrete, i.e., encoded as classes, the problem is called classification. Real-valued responses, on the other hand, call for regression models and algorithms. This section first introduces supervised learning in a very general way. We then discuss two important concepts in this field, referred to as the approximation/estimation trade-off and the accuracy/interpretability dilemma. The last part of this section focuses on a particular type of model, called penalized methods.

Supervised machine learning: problem and notations

The input data consists of $n$ observations, each described by a set of $p$ features. In the remainder, we denote the observations by $(x_i)_{i=1 \ldots n}$, where for all $i$, $x_i \in \mathcal{X} \subseteq \mathbb{R}^p$. Concretely, we observe a matrix $X = (x_{i,j})_{i=1 \ldots n,\, j=1 \ldots p}$ consisting of $n$ rows and $p$ columns. The output data, or response, is described by a vector $Y = (y_i)_{i=1 \ldots n}$. In a classification setting, the response takes one of $C$ classes: $Y \in \{1, \ldots, C\}^n$. In the particular case of binary classification, $Y \in \{-1, +1\}^n$. In a regression setting, the response is a continuous variable: $Y \in \mathbb{R}^n$. For instance, predicting the outcome of breast cancer is a binary classification problem, with $y_i$ specifying the outcome ($y_i = +1$ in case of a metastatic event, and $-1$ otherwise). Predicting the expression level of a gene is a regression problem (expression $y_i \in \mathbb{R}$). For the general case, we write $y_i \in \mathcal{Y} \subseteq \mathbb{R}$ for all $i$.

The pairs $(x_i, y_i)_{i=1 \ldots n}$ are realizations of the random variables $(X_i, Y_i)_{i=1 \ldots n}$, assumed to be i.i.d. draws from the same unknown joint distribution $P$ of $(X, Y)$.
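To make the notation concrete, the following minimal sketch builds a toy binary classification dataset with the shapes described above. The function name `make_toy_dataset` and the labeling rule (sign of the first feature) are assumptions made purely for illustration; they are not part of the thesis.

```python
import random

def make_toy_dataset(n, p, seed=0):
    """Return (X, Y): X has n rows and p columns, Y has n labels in {-1, +1}."""
    rng = random.Random(seed)
    # X[i][j] plays the role of x_{i,j}: feature j of observation i.
    X = [[rng.gauss(0.0, 1.0) for _ in range(p)] for _ in range(n)]
    # Hypothetical labeling rule, for illustration only: sign of the first feature.
    Y = [1 if row[0] > 0 else -1 for row in X]
    return X, Y

X, Y = make_toy_dataset(n=6, p=3)
```

Here `X` corresponds to the $n \times p$ matrix of observations and `Y` to the response vector of a binary classification problem.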

Training, validation and test

In order to properly evaluate the performance of a given algorithm, it is crucial to measure its accuracy on samples that have not been used to train it. Although this might be obvious to readers in the machine learning community, we cannot emphasize enough the importance of this procedure, as it is commonly misunderstood or incorrectly performed, particularly in multidisciplinary fields such as bioinformatics. See for instance Simon et al. (2003), Ioannidis (2005a) and Boulesteix (2010) for opinion papers on the matter. In the last of these, an alarming letter, the author warns the community about the possibly terrible consequences of sloppiness in this regard. In particular, she mentions how wrongly assessing the performance of a model can lead to over-optimism. As members of the biomedical research community, we should be particularly careful, since over-optimistic papers can turn into dangerous tools when directly used by practitioners.

The correct procedure is known as training, validation and test (see, e.g., Hastie et al., 2009). In general,

• the training set contains data that are used for the learning part, i.e., to output a model;

• the validation set is used to perform model selection, e.g., to choose the correct parameters (the validation set is therefore not required when only one parameter-free model is considered);

• the test set has performance assessment purposes only.
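The three roles listed above can be sketched as follows. This is only an illustration under stated assumptions: the toy one-dimensional data, the 60/20/20 proportions, the k-nearest-neighbor classifier and the candidate values of `k` are all hypothetical choices, not the setup used in this thesis.

```python
import random

def three_way_split(n, frac_train=0.6, frac_val=0.2, seed=0):
    """Shuffle indices 0..n-1 and cut them into disjoint train/validation/test lists."""
    rng = random.Random(seed)
    idx = list(range(n))
    rng.shuffle(idx)
    n_train = round(frac_train * n)
    n_val = round(frac_val * n)
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]

def knn_predict(train_pts, x, k):
    """Majority vote among the k training points closest to x (labels in {-1, +1})."""
    nearest = sorted(train_pts, key=lambda pt: abs(pt[0] - x))[:k]
    vote = sum(label for _, label in nearest)
    return 1 if vote >= 0 else -1

def accuracy(train_pts, eval_pts, k):
    """Fraction of eval_pts correctly classified by k-NN built on train_pts."""
    hits = sum(knn_predict(train_pts, x, k) == y for x, y in eval_pts)
    return hits / len(eval_pts)

# Hypothetical toy data: one Gaussian feature centered on the label.
rng = random.Random(1)
data = []
for _ in range(200):
    y = rng.choice([-1, 1])
    data.append((rng.gauss(y, 1.0), y))

train_idx, val_idx, test_idx = three_way_split(len(data))
train = [data[i] for i in train_idx]
val = [data[i] for i in val_idx]
test = [data[i] for i in test_idx]

# Model selection: the parameter k is chosen on the validation set only.
candidates = [1, 3, 5, 9, 15]
best_k = max(candidates, key=lambda k: accuracy(train, val, k))

# Performance assessment: the test set is used once, after k has been fixed.
test_accuracy = accuracy(train, test, best_k)
```

The key point is that `test` never influences the choice of `best_k`, so `test_accuracy` is not biased by the selection step.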

However, we now face a new problem: given one dataset, how should we divide it into these three sets? As a first step to remove some bias, we argue for balancing these sets in the classification setting, i.e., for making sure that they exhibit about the same proportion of positive and negative examples. Even so, we might still face variability: what if we happened to choose specific sets that do not represent the entire data well? One way to remove most of this variability is to repeatedly train and test: instead of choosing a single training/test division, it makes sense to repeat the performance estimation several times and keep the average as the final performance. Note that doing so additionally makes it possible to estimate the variance of the estimate.
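A minimal sketch of balanced, repeated train/test splitting is given below. The helper names (`stratified_split`, `repeated_evaluation`), the 70/30 proportion, the number of repeats, the toy data and the nearest-class-mean classifier are all assumptions chosen for illustration, not the protocol used in this thesis.

```python
import random
import statistics

def stratified_split(labels, frac_train=0.7, rng=None):
    """Split indices so that train and test keep roughly the class proportions of labels."""
    rng = rng or random.Random()
    by_class = {}
    for i, y in enumerate(labels):
        by_class.setdefault(y, []).append(i)
    train, test = [], []
    for members in by_class.values():
        members = members[:]          # copy before shuffling in place
        rng.shuffle(members)
        cut = round(frac_train * len(members))
        train.extend(members[:cut])
        test.extend(members[cut:])
    return train, test

def repeated_evaluation(labels, evaluate, n_repeats=50, frac_train=0.7, seed=0):
    """Average evaluate(train, test) over several random stratified splits."""
    rng = random.Random(seed)
    scores = []
    for _ in range(n_repeats):
        train, test = stratified_split(labels, frac_train, rng)
        scores.append(evaluate(train, test))
    return statistics.mean(scores), statistics.variance(scores)

# Hypothetical imbalanced toy data: 30 positive and 70 negative examples.
data_rng = random.Random(42)
labels = [1] * 30 + [-1] * 70
features = [data_rng.gauss(y, 1.5) for y in labels]

def nearest_mean_accuracy(train, test):
    """Fit class means on train, then classify test points by the closest mean."""
    means = {}
    for c in (-1, 1):
        vals = [features[i] for i in train if labels[i] == c]
        means[c] = sum(vals) / len(vals)
    hits = 0
    for i in test:
        pred = min(means, key=lambda c: abs(features[i] - means[c]))
        hits += pred == labels[i]
    return hits / len(test)

mean_acc, var_acc = repeated_evaluation(labels, nearest_mean_accuracy)
```

The pair `(mean_acc, var_acc)` gives the averaged performance together with the sample variance across repetitions, which a single split cannot provide.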

Table of contents:

Acknowledgement

List of Figures

List of Tables

Abstract

Résumé

1 Introduction

1.1 Supervised learning to predict and understand

1.1.1 Supervised machine learning: problem and notations

1.1.2 Loss functions

1.1.3 Defining the complexity of the predictor

1.1.4 Penalized methods

1.2 Feature selection and feature ranking

1.2.1 Overview

1.2.2 Filter methods

1.2.3 Wrapper methods

1.2.4 Embedded methods

1.2.5 Ensemble feature selection

1.3 Evaluating and comparing

1.3.1 Accuracy measures

1.3.2 Training, validation and test

1.3.3 k-fold cross-validation

1.3.4 Avoiding selection bias

1.4 Contributions of this thesis

1.4.1 Gene expression data

1.4.2 Biomarker discovery for breast cancer prognosis

1.4.3 Gene Regulatory Network inference

2 On the influence of feature selection methods on the accuracy, stability and interpretability of molecular signatures

2.1 Introduction

2.2 Materials and Methods

2.2.1 Feature selection methods

2.2.2 Ensemble feature selection

2.2.3 Accuracy of a signature

2.2.4 Stability of a signature

2.2.5 Functional interpretability and stability of a signature

2.2.6 Data

2.3 Results

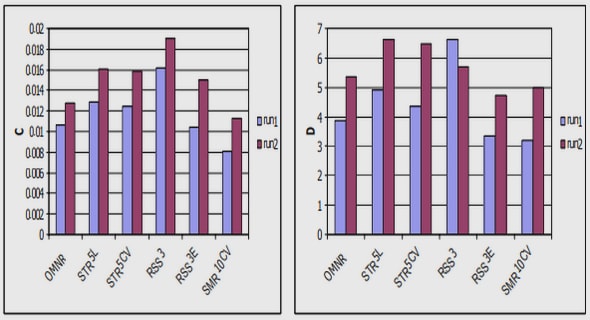

2.3.1 Accuracy

2.3.2 Stability of gene lists

2.3.3 Interpretability and functional stability

2.3.4 Bias issues in selection with Entropy and Bhattacharyya distance

2.4 Discussion

3 Importing prior knowledge: Graph Lasso for breast cancer prognosis

3.1 Background

3.2 Methods

3.2.1 Learning a signature with the Lasso

3.2.2 The Graph Lasso

3.2.3 Stability selection

3.2.4 Preprocessing

3.2.5 Postprocessing and accuracy computation

3.2.6 Connectivity of a signature

3.3 Data

3.4 Results

3.4.1 Preprocessing facts

3.4.2 Accuracy

3.4.3 Stability

3.4.4 Connectivity

3.4.5 Biological Interpretation

3.5 Discussion

4 AVENGER: Accurate Variable Extraction using the support Norm, Grouping and Extreme Randomization

4.1 Background

4.1.1 Material and methods

4.1.2 Structured sparsity: problem and notations

4.1.3 The k-support norm

4.1.4 The primal and dual learning problems

4.1.5 Optimization

4.1.6 AVENGER

4.1.7 Accuracy and stability measures

4.1.8 Data

4.2 Results

4.2.1 Convergence

4.2.2 Results on breast cancer data

4.3 Discussion

5 TIGRESS: Trustful Inference of Gene REgulation Using Stability Selection

5.1 Background

5.2 Methods

5.2.1 Problem formulation

5.2.2 GRN inference with feature selection methods

5.2.3 Feature selection with LARS and stability selection

5.2.4 Parameters of TIGRESS

5.2.5 Performance evaluation

5.3 Data

5.4 Results

5.4.1 DREAM5 challenge results

5.4.2 Influence of TIGRESS parameters

5.4.3 Comparison with other methods

5.4.4 In vivo networks results

5.4.5 Analysis of errors on E. coli

5.4.6 Directionality prediction: case study on DREAM4 networks

5.4.7 Computational Complexity

5.5 Discussion and conclusions

6 Discussion

6.1 Microarray data: the official marriage between biology and statistics

6.2 Signatures for breast cancer prognosis: ten years of contradictions?

6.3 From data models to black boxes