
Methodology and Method

The following section presents the methodology, method and research ethics of the study. Starting with the methodology, the parts are introduced in the following order: paradigm, approach and design of the research. Thereafter, the method follows, including an introduction to the two case companies, data collection, sampling method, a description of the interviews and data analysis. The last part covers the ethical considerations.

Methodology

Research Paradigm

The research paradigm is the theoretical foundation that serves as guidance on how to conduct scientific research grounded on human philosophies and assumptions about the world (Collis & Hussey, 2014). This study follows the paradigm of interpretivism to allow a subjective judgement (Collis & Hussey, 2014), which would not be possible under positivism, as it is an objective approach to research (Lin, 1998). Since values, beliefs and ideas of what is right and wrong are personal, and because ethics in artificial intelligence is relatively unexplored by researchers (Larsson et al., 2019; Wallace, 2019), the answers received from the participants may vary. The topic and its complexity could therefore benefit from being explored in an interpretive way (Collis & Hussey, 2014).

Research Approach

Since this study follows an interpretive approach, it naturally adopts inductive reasoning (Saunders et al., 2016). By comparison, deductive reasoning uses the rule and the explanation as a basis for deriving observations (Mantere & Ketokivi, 2013).

However, this study is not testing a hypothesis based on a theory. Rather, data is gathered through interviews and later linked to relevant theory to give a conclusion. Hence the most appropriate approach seems to be inductive reasoning which juxtaposes the observation and the explanation to conclude a rule (Mantere & Ketokivi, 2013).

Research Design

Since the chosen paradigm is interpretivism, the design of the research is aligned with this philosophical view. Therefore, a qualitative approach has been chosen to identify the ethical reasoning related to artificial intelligence within the IT and telecom industry. Data of this kind is expressed through words and therefore fits a qualitative study. Moreover, since the data is not numerical, it would arguably be unfitting to obtain it through quantitative methods (Saunders et al., 2009).

The design of this research is a case study, which is a methodology typically associated with interpretivism (Bonoma, 1985; Collis & Hussey, 2014) and has been adopted by several researchers (Chaskin, 2001; Hanna, 2000; Marwell, 2007; Nathan, Lund, Gausset & Andersen, 2007). It was chosen because case studies seek to investigate phenomena in their contexts (Eisenhardt, 1989; Gibbert, Ruigrok & Wicki, 2008; Yin, 2018), which in this study are ethical principles in the context of EVRY (IT) and Ericsson (telecom), two companies that develop solutions based on AI technology. Therefore, the unit of analysis is at an organizational level where the companies represent two cases, making it a multiple-case study (Yin, 2018). The justification for using a multiple-case study is that findings present in both cases result in a more robust and rigorous study, compared to only having a single case (Yin, 2018). Furthermore, case studies use multiple sources of data in order to obtain a rich understanding of the phenomenon (Collis & Hussey, 2014; Eisenhardt, 1989), which is done in this study through primary qualitative data as well as secondary data about the specific companies.

Method

Introduction to Case Companies

EVRY and Ericsson are two multinational business-to-business (B2B) companies that both operate in Sweden. EVRY is an Information Technology (IT) service and software solutions provider (EVRY, n.d.), whereas Ericsson is a telecom company (Ericsson, n.d.).

In more detail, EVRY creates solutions for businesses in different industries, for example banking, defense and health care (EVRY, n.d.). Ericsson, in contrast, offers Information and Communications Technology (ICT) services to telecom operators across the globe (Ericsson, n.d.). ICT refers to “the use of computers and other electronic equipment and systems to collect, store, use, and send data electronically” (Cambridge Dictionary, n.d.). It is related to IT, which is computing technology, but ICT’s main focus is communications technologies such as the Internet, mobile phones or wireless networks (Techterms, n.d.). In the telecom industry, ICT is used to handle immense amounts of data in real time, which is enabled and maintained by automation and decision-supporting technology like machine learning and AI systems (Ericsson Mobility Report, 2018). The IT industry, for its part, is essential for creating products and services that improve performance and productivity. IT exists everywhere in a modern society and continues to grow immensely, with IT systems managing and controlling cars, phones, production processes etc. (Technology Industries of Finland, 2019).

Both EVRY and Ericsson work substantially with narrow AI (Andersson, 2018; Desai, 2018). One example of EVRY’s solutions involving AI is the prevention of card fraud for DNB bank. By adopting AI and machine learning, fraud detection becomes more precise, so that transactions can be stopped before they go through (EVRY, 2018). Ericsson, in turn, utilizes AI across the products and services in its fifth generation (5G) platform to make the 5G network more efficient (Ericsson, n.d.). The development of the 5G network is about a massive increase in the speed and responsiveness of the wireless network connection, which improves connectivity between consumers, businesses and society (Ericsson, n.d.).

EVRY and Ericsson follow set guidelines covering ethical issues that might arise, which everyone at the company is expected to comply with (EVRY, n.d.; Ericsson code of business ethics, 2017). These guidelines are built on the companies’ values and explain how to behave towards each other and society. One fundamental aspect of the way the companies administer their business is a good working environment where everyone behaves with respect and integrity. Essentially, one should respect human rights and contribute to creating an environment free from discrimination. Another fundamental element is to ensure protection of data to prevent unauthorized access (EVRY code of conduct, 2017; Ericsson code of business ethics, 2017). From a sustainability perspective, EVRY promotes environmental responsibility, which focuses on the development of environmentally friendly technologies (EVRY code of conduct, 2017), while Ericsson encourages sustainable development by increasing benefits from its technology (Ericsson code of business ethics, 2017) and by establishing the initiative “technology for good” (Ericsson, n.d.).

Data Collection

The process of data collection for this study is based on primary data gathered through interviews, which are commonly used in interpretivist studies (Collis & Hussey, 2014). Initially, two pilot interviews were conducted in order to aid the design of the research. One of the pilot interviews was with a former employee of Ericsson and the other with a leader at EVRY. A total of ten interviews were conducted with AI-aware employees, leaders and researchers at EVRY and Ericsson, as well as a former employee of Ericsson who is now a founder of a number of IT startups. Furthermore, white papers, online articles and website information about the cases were gathered as secondary data.

Purposive and Snowball Sampling

The primary method of sampling for this research is purposive sampling, since it was of great importance to select case companies that are informative, experienced and have a perspective on the phenomenon being explored (Collis & Hussey, 2014; Robinson, 2013). The criterion for the case companies was that they had to work with AI, since the aim of the study was to explore ethics in artificial intelligence, which requires the representatives from the case companies to be familiar with the technology. The secondary sampling method was snowball sampling, which took place by asking contacts and interviewees at the case companies for recommendations on people who might qualify for participation (Robinson, 2013). Snowball sampling is essential when the study involves people with experience of the phenomenon being explored, as in the two case companies of the study. Thus, this method of sampling allowed the researchers to reach other valuable interviewees from the participants’ networks (Collis & Hussey, 2014).

Semi-structured Interviews

The semi-structured interviews had open-ended questions to allow a discussion and an opportunity for the interviewees to elaborate on their responses (Saunders et al., 2009; Collis & Hussey, 2014). In addition, this format enabled the researchers to explore the respondents’ answers in depth (Collis & Hussey, 2014). The interview guide used can be viewed in Appendix A.

Prior to the interviews, the participants were informed about the overall purpose of the thesis and the ones who wanted to see the questions beforehand received them a day before the interview was going to take place. Furthermore, all interviews were conducted face-to-face which allowed the researchers to create a pleasant interview atmosphere for the participants to feel comfortable and safe when sharing their thoughts (Opdenakker, 2006). Also, each interview location was chosen by the participant where he or she would feel the most at ease.

Interview Questions

At the beginning of each interview, questions regarding work position and background were asked to get to know the respondent and their prior work experiences. Thereafter, questions about artificial intelligence were asked to gain a better understanding of how the company works with AI and the respondent’s thoughts on its implementation and responsibilities. Lastly, questions based on theory from the frame of reference were asked to get valuable information for this study. In addition to the prepared interview guide, probes were used to obtain clearer and deeper information (Collis & Hussey, 2014). Furthermore, since two of the participants had expert knowledge on ethics and artificial intelligence, they were asked an additional set of questions based on secondary data, meaning articles or reports they had written about the subject.

Data Analysis

With the aim of deepening the understanding, a thematic analysis was done in order to find common themes and patterns. The process started with a deeper analysis, where the researchers coded the data from the interviews to recognize patterns (Saunders et al., 2009). The thematic analysis was carried out according to the six-step procedure by Braun & Clarke (2006). First, the researchers became acquainted with the collected data through transcription, reading and taking notes. Secondly, the data was systematically sorted into identified codes that were relevant for the study. Thirdly, the codes were categorized into appropriate themes, which can be seen in Appendix B. Fourthly, the codes and the complete data set were reviewed to ensure compatibility with the themes. Fifthly, the core of each theme was labeled and defined to be presented and elaborated upon in the analysis. Lastly, the final study was made coherent and delivered in a logical way in tune with the data analysis.

Research Ethics

Research ethics refers to the way in which research is conducted and how results are presented (Collis & Hussey, 2014). As ethical concerns emerge in the process of the study, it is important to behave appropriately in relation to the rights of those affected by the research (Saunders et al., 2009). One of the most significant principles in research ethics is that pressure should not be used to make anyone take part in the study (Collis & Hussey, 2014). Hence, when approaching the participants, they were asked if they would volunteer to take part in the study.

Anonymity and Confidentiality

Once the participants had agreed to voluntarily partake in the study, steps were taken to ensure their rights. Before each interview, the participant was informed about their right to refuse to answer any question and to take a break or end the interview. Furthermore, all interviewees are anonymous in the study, to protect the identity of the participants (Bell & Bryman, 2007). Confidentiality is another aspect to consider during the research, which involves protecting the data obtained from the participant (Bell & Bryman, 2007). To ensure confidentiality in this study, raw primary data is only used by the researchers and deleted upon completion of the study.

Credibility

Credibility refers to researchers seeking to establish trustworthiness by designing the study in a way that ensures a correct presentation of the inquiry being studied (Collis & Hussey, 2014). One way to reach a high level of credibility is through conducting semi-structured interviews with open-ended questions (Saunders et al., 2009), which is part of this study’s design. Another approach is peer debriefings (Collis & Hussey, 2014), which have actively been done in this research through formally structured feedback sessions. A third method is triangulation, which refers to incorporating various sources of data and methods as well as multiple researchers to analyze the same phenomenon (Collis & Hussey, 2014). Since this study is based on multiple cases, which rely on multiple sources of evidence, triangulation has been covered (Yin, 2018). Furthermore, because three researchers conducted this study, the data could be cross-checked and validated by multiple researchers, which is a further form of triangulation (Guba, 1981).

Transferability

Another important aspect of data quality is transferability, which refers to findings that are relevant to other similar settings, and hence permit generalization (Collis & Hussey, 2014). There are doubts among researchers whether qualitative case studies can be transferable due to the small number of particular contexts and participants (Shenton, 2004). However, other scholars argue that each unique case is an example of a larger group, and therefore, transferability is possible (Denscombe, 1998). Furthermore, case studies on large corporations may cover multiple geographical settings, which yield more rigorous data than data collected within a restricted locality (Bryman, 1998). This research is based on two international companies with participants located in both Stockholm and Jönköping, some of whom also have prior working experience abroad with the case companies. To ensure transferability, sufficient information needs to be provided to enable the reader to determine if the findings are transferable and applicable to other contexts (Cope, 2014). Such information could cover how many organizations and individuals are partaking in the research, where they are based, as well as data collection methods including number, length and time period of data collection sessions (Shenton, 2004). For this reason, all previously mentioned information particular to this study has been conveyed.

Dependability

The concept of dependability relates to whether the research process is organized, rigorous and thoroughly documented (Guba, 1981; Shenton, 2004). To keep the research organized, a journal was kept by the researchers documenting the process. Furthermore, constant feedback was received from the tutor and the opposition group, which assured accurate implementation of widely approved research practices (Guba, 1981). Lastly, all interviews were recorded and transcribed with the consent of the participants, ensuring that the research material was carefully documented.

Confirmability

The essence of confirmability is whether the research has been fully illustrated, referring to the researchers’ ability to display that the data represent the interviewees’ responses and not the interviewers’ biases (Collis & Hussey, 2014; Cope, 2014). To ensure confirmability, coding of data was initially done individually by the three researchers, who then compared the different results. Codes that two of three researchers recognized were saved, whereas codes less apparent in the data set were rejected. In this way, triangulation was applied by drawing upon a variety of perspectives to reduce biases in the study (Guba, 1981).
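The comparison step described above can be illustrated with a minimal Python sketch. It assumes that a code is retained when at least two of the three researchers identified it independently; the function name `retain_codes`, the threshold parameter and the example code labels are hypothetical and are not taken from the study's data.

```python
from collections import Counter


def retain_codes(codings, min_agreement=2):
    """Return the codes identified by at least `min_agreement` researchers.

    `codings` is a list with one collection of code labels per researcher.
    Each researcher counts at most once per code.
    """
    counts = Counter(code for codes in codings for code in set(codes))
    return {code for code, n in counts.items() if n >= min_agreement}


# Hypothetical code labels, for illustration only (not the study's codes).
researcher_a = {"transparency", "accountability", "bias"}
researcher_b = {"transparency", "bias", "privacy"}
researcher_c = {"transparency", "trust"}

retained = retain_codes([researcher_a, researcher_b, researcher_c])
print(sorted(retained))
```

In this illustration, "transparency" and "bias" are retained because at least two researchers identified them, while the labels found by only one researcher are rejected.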

Empirical Findings

In this part of the study, the empirical findings, in the form of primary data from the interviews, are presented. First, two tables provide information about each participant and their opinions regarding the principles. Secondly, a brief background is presented to clarify the context of the study. Lastly, the detected themes with related findings from the interviews are presented to give a clear overview of the interviewees’ different viewpoints.

An overview of the participants is presented in Table 2, where participants are identified by numbers instead of their names to ensure anonymity. Information about gender, company, professional background, and number of years in the company is also provided for each participant, without risking revealing their identity.

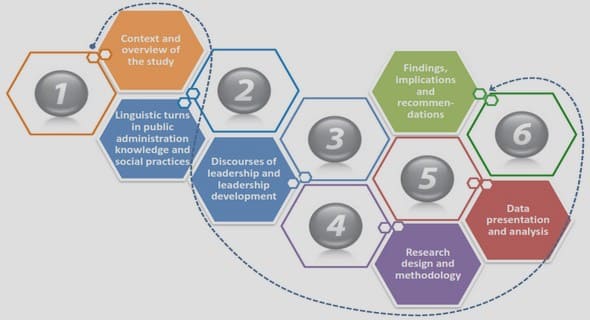

Table of Contents

1. Introduction

1.1 Background

1.2 Problem Discussion

1.3 Research Purpose & Research Question

1.4 Delimitations

1.5 Definitions

2. Frame of Reference

2.1 Methodology to Frame of Reference

2.2 Ethics and Business Ethics

2.3 Defining Artificial Intelligence (AI)

2.4 Introducing Ethics and Artificial Intelligence

2.5 The Responsibility Gap in AI

2.6 Bioethical Principles applied to an AI context

3. Methodology and Method

3.1 Methodology

3.2 Method

3.3 Research Ethics

4. Empirical Findings

4.1 Background

4.2 Principles in the Ethical framework for AI

5. Analysis

5.1 Autonomy

5.2 Beneficence

5.3 Non-maleficence

5.4 Justice

5.5 Explicability

5.6 Relevancy of the Ethical framework for AI

6. Discussion

6.1 Contributions

6.2 Practical implications

6.3 Limitations

6.4 Future Research

7. Conclusion

Reference List

Why you should care: Ethical AI principles in a business setting