
Brief History of the Research on Acoustic Emissions

Acoustic emissions (AE) are naturally occurring phenomena caused by the radiation of stress waves in a continuous medium. They occur when a material undergoes a sudden stress redistribution due to changes in its internal structure (such as bond breaking, granular crushing, or fracturing of rocks) under the effect of one or more internal or external agents (e.g. chemicals, mechanical forces, temperature) [Huang 1998]. In metals, the « tin cry » can be considered the very first acoustic emission ever heard: it dates back to the Bronze Age, when people learned to purify tin from its natural ores by smelting. The earliest work on acoustic emission in the literature was published in London in 1678 under the title « The Works of Geber » [Holmyard 1928]. In this work, Geber (Jabir ibn Hayyan) noted that tin (then called « Jupiter ») releases a « harsh sound » or « crashing noise ». Another example of tin cry appears in the work of Muir and Morley [Muir 1893]: « When a bar of tin is bent, a cracking sound may be heard due to crystals in the inner parts of the bar breaking against one another. » It took considerably longer before scientists reported acoustic emissions from rock samples. In 1924 in Russia, scientists found that heated rock specimens undergoing shear deformation produce a noise similar to the « tic-toc » of a clock [Classen-Nekludowa 1929, Classen-Nekludowa 1927, Classen-Nekludowa 1928]. Then, in 1925 in the United States, Robert J. Anderson reported that the pitch of the acoustic emissions generated during the yielding of aluminum alloys varies with the thickness of the specimen [Anderson 1926b, Anderson 1926a]: thin sheets produce high-pitched sounds (similar to Japanese glass chimes), while thick sheets produce low-pitched sounds like grunting. However, none of the sounds described in these works was recorded experimentally.

Acoustic Instrumentation

Instrumentation is necessary to extract more information from an experiment and, possibly, to record it for further analysis. Robert Hooke, a scientist of the Royal Society of London who worked in the second half of the 17th century, thought that devices extending the range of the senses, particularly hearing, might lead to new inventions in science. For him, the information needed to understand nature can be obtained by three methods [Hooke 1969]:

• By the Help of the Naked Senses.

• By the Senses assisted with Instruments, and arm’d with Engines.

• By Induction or comparing the collected Observations, by the two preceding helps, and ratiocinating from them.

Furthermore, he stated his ideas (which eventually led to the invention of the acoustic horn and the stethoscope) as follows:

« There may be also a possibility of discovering the internal motions and actions of bodies by the sound they make. Who knows but that as in the watch we may hear the beating of the balance, and the running of the teeth, and multitudes of other noises: who knows, I say, but that it may be possible to discover the motions of the internal parts of bodies, whether animal, vegetable, or mineral, by the sound they make. That one may discover the works perform’d in the several offices and shops of a man’s body, and thereby discover what instrument or engine is out of order, what works are going on at several times, and lies still at others, and the like; that in plants and vegetables one might discover by the noise the pumps for raising the juice, the valves for stopping it, and the rushing of it out of one passage into another, and the like. »

Leonardo da Vinci also had ideas on how to hear sounds propagating in media other than air, such as water or the ground. He wrote [Da Vinci 1939]:

« If you cause your ship to stop, and place the head of a long tube in the water, and place the other extremity to your ear, you will hear ships at a great distance from you. You can also do the same by placing the head of the tube upon the ground, and you will then hear anyone passing at a distance from you. »

Building on these ideas, the French physician René Théophile Hyacinthe Laennec, whose studies focused on diseases of the chest and heart, invented the stethoscope in 1816 [Lindsay 1973, Laennec 1838].

Instrumentation to amplify acoustic emissions was not limited to the stethoscope. In the early 20th century, experiments in Russia combined optics and acoustics to check whether optically monitored plastic deformation coincides with acoustic emissions amplified 10,000 times [Classen-Nekludowa 1929, Classen-Nekludowa 1927, Classen-Nekludowa 1928]. The problem with these studies was the lack of organization in presenting the results. The first scientific report on a planned acoustic experiment was presented in Tokyo in 1933 [Kishinouye 1990, Kishinouye 1937]. Kishinouye's research aimed to link the acoustic emissions (amplified and recorded) produced during the deformation of wood to the crustal deformation that leads to earthquakes. After several more years of research in the same domain, he recorded the very first acoustic emission waveforms using oscillograms of his own making. Around 1940 in the United States, acoustic emissions were used in mining research to predict and control rock and mine failures [Obert 1941, Obert 1945, Obert 1977, Lockner 1993].

The development of acoustic emission studies created the need for better instruments to obtain higher-quality datasets from the same experiments. Thus, around 1950, Mason worked with transducers made of piezoelectric crystals [Mason 1948, Mason 1951]. He recorded acoustic emissions while deforming specimens on a quartz crystal transducer covering a frequency range from 1-2 kHz to 5 MHz. Working with such broadband data eventually raised the question: « which part of this frequency band is the real information and which part is the noise? » At about the same time, Josef Kaiser, a graduate student at the Technische Hochschule München in Germany, carried out a more comprehensive study of acoustic emissions. He determined which frequency range corresponds to which type of process within the material [Kaiser 1950, Kaiser 1952, Kaiser 1953, Kaiser 1957a, Kaiser 1957b]. In addition, he studied different materials to compare these behaviors. His most important finding was the irreversibility of acoustic emissions: plastic deformation, which is known to be irreversible, causes acoustic emissions that are irreversible as well.

Brief History of the Signal Localization

Localization of the source of acoustic signals is a widely applicable technology used in many academic and industrial areas. Applications of signal localization can be found from robotics to medicine and from telecommunications to earth science [Gershman 1995, Valin 2003, Elnahrawy 2004, Malioutov 2005, Zhu 2007, Fink 2015, Garnier 2015].

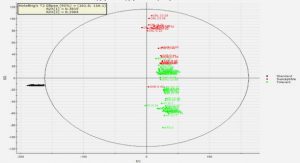

Locating impulsive, non-dispersive signals in the absence of reflections is trivial [Aki 2002], but localization becomes more difficult as the signal and the propagation medium become more complex. Localization methods can be grouped into three main types: those based on the time difference of arrival (TDOA) of the signal, those based on the time reversal of the signal, and those based on the energy of the received signal.
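In the trivial case mentioned above (a homogeneous medium with constant wave velocity, no dispersion, and no reflections), TDOA localization reduces to finding the point whose predicted arrival-time differences best match the observed ones. The Python sketch below does this with a brute-force grid search over a 2D plane. The sensor layout, velocity, and function names are illustrative assumptions, and the sketch is not one of the closed-form estimators discussed in the literature.

```python
import math
from itertools import product


def tdoa_misfit(point, sensors, arrivals, velocity):
    """Squared misfit between observed and predicted arrival-time differences,
    all taken relative to the first sensor (so the origin time cancels)."""
    d0 = math.dist(point, sensors[0])
    misfit = 0.0
    for sensor, t in zip(sensors[1:], arrivals[1:]):
        predicted = (math.dist(point, sensor) - d0) / velocity
        observed = t - arrivals[0]
        misfit += (predicted - observed) ** 2
    return misfit


def locate_tdoa(sensors, arrivals, velocity, xs, ys):
    """Brute-force grid search: return the (x, y) grid point with the smallest misfit."""
    return min(product(xs, ys),
               key=lambda p: tdoa_misfit(p, sensors, arrivals, velocity))


# Synthetic example (hypothetical values): four sensors at the corners of a
# 1 m x 1 m plate, wave speed 1000 m/s, source at (0.3, 0.6), origin time 0.01 s.
sensors = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
source, velocity, t0 = (0.3, 0.6), 1000.0, 0.01
arrivals = [t0 + math.dist(source, s) / velocity for s in sensors]
grid = [i / 100 for i in range(101)]
estimate = locate_tdoa(sensors, arrivals, velocity, grid, grid)
print(estimate)
```

Because only differences relative to the first sensor enter the misfit, the unknown origin time of the event cancels out. A finer grid, or a local optimizer started from the best grid point, would refine the estimate.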

Time Difference of Arrival

TDOA works with broadband sources, but it requires a very accurate measurement of the signal to resolve even the smallest differences in time delay. In 1980, Carter published a special issue on different methods to estimate the travel time of acoustic signals, and thus the delay between two receivers [Carter 1980]. In 1987, Smith worked on closed-form, noniterative least-squares solutions for time-difference localization [Smith 1987]; in one case, he also incorporated maximum likelihood into the localization formulation. He ran numerical simulations to compare the normalized errors and the statistical performance of these different methods. Brandstein et al. focused on finding the error regions of different array setups, using Monte Carlo simulations to track a speaker in a video-conferencing context [Brandstein 1996]. Following this work, they also studied closed-form estimators and proposed the linear intersection estimator, which is robust and fast enough for real-time use where search-based location algorithms are inappropriate [Brandstein 1997]. In 1998, Yao et al. worked mainly on beamforming techniques for source localization, and also used time-delay localization to compare the performance of the two approaches [Yao 1998].
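The simplest way to estimate the delay between two receivers is cross-correlation: the lag at which the two recordings are most similar is taken as the time delay. The minimal pure-Python sketch below assumes two recordings sampled at the same rate `fs`. It uses plain, unweighted cross-correlation and does not reproduce any specific estimator from [Carter 1980]. The function name and example signals are hypothetical.

```python
import math


def cross_correlation_delay(a, b, fs):
    """Delay of recording b relative to recording a, in seconds.

    The lag (in samples) maximizing sum_i a[i] * b[i + lag] is divided by the
    sampling rate fs; a positive value means b arrives later than a.
    """
    n, m = len(a), len(b)
    best_lag, best_value = 0, float("-inf")
    for lag in range(-(n - 1), m):
        # Only indices where both a[i] and b[i + lag] exist contribute.
        value = sum(a[i] * b[i + lag] for i in range(max(0, -lag), min(n, m - lag)))
        if value > best_value:
            best_lag, best_value = lag, value
    return best_lag / fs


# Hypothetical example: the same Gaussian pulse recorded by two sensors,
# 7 samples apart, at a 1 kHz sampling rate.
fs = 1000.0
a = [math.exp(-((i - 20) / 3) ** 2) for i in range(100)]
b = [math.exp(-((i - 27) / 3) ** 2) for i in range(100)]
print(cross_correlation_delay(a, b, fs))
```

Practical estimators typically add frequency weighting and sub-sample interpolation of the correlation peak. This direct O(n²) loop only illustrates the principle.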

TDOA is extremely sensitive to the signal-to-noise ratio (SNR). The delay in arrival time (or simply the arrival time at each sensor) has to be measured very precisely, e.g. by thresholding or by modeling the shape of the first arrival. In addition, the signal velocity has to be determined carefully, since dispersion in the medium can make the travel time complicated to calculate. Geometrical spreading must also be taken into account, because it causes the SNR to decrease with the distance traveled. These effects lead to unavoidable uncertainties in the estimations [Grabowski 2014].
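Threshold picking, one of the options mentioned above, shows this sensitivity clearly. In the hypothetical sketch below, an emergent onset is picked a few samples late, while a single noise spike above the threshold produces a pick long before the true arrival. The threshold value, sampling rate, and signals are assumptions chosen only for illustration.

```python
def pick_arrival(signal, fs, threshold):
    """Return the time (s) of the first sample whose absolute amplitude
    exceeds the threshold, or None if the threshold is never exceeded."""
    for i, x in enumerate(signal):
        if abs(x) > threshold:
            return i / fs
    return None


fs = 1000.0  # hypothetical 1 kHz sampling rate
# Emergent onset: the signal starts at sample 50 and grows linearly.
clean = [0.0] * 50 + [0.1 * k for k in range(1, 20)]
# Same record with a single noise spike at sample 30.
noisy = list(clean)
noisy[30] = 0.4

print(pick_arrival(clean, fs, 0.35))  # picked a few samples after the true onset
print(pick_arrival(noisy, fs, 0.35))  # the noise spike triggers a much earlier pick
```

Lowering the threshold reduces the late bias on emergent onsets but makes false triggers on noise more likely, which is why the SNR matters so much for TDOA.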

Time Reversal Localization

Time reversal localization is based on the idea of propagating the signals recorded at different sensors back, in reversed time, to candidate source points and stacking them at each point. Stacking at the correct source point should give a larger amplitude than stacking at a false one, so the point with the maximum stacked amplitude is taken as the source. McMechan tested this method in 1982 to locate seismic sources with simple wave velocity models [McMechan 1982], and the work was continued by McMechan and Chang in the following years [McMechan 1983, Chang 1991]. In 1994, Rietbrock and Scherbaum made the first attempts at time reversal localization using seismic waves at a local scale [Rietbrock 1994].
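The stacking principle can be illustrated with a kinematic shift-and-stack (delay-and-sum) sketch. For each candidate point, each recording is shifted back by the travel time from that point to its sensor, the shifted recordings are summed, and the peak of the sum is used as the score. This is a simplification that assumes a homogeneous, non-dispersive 2D medium with known velocity: full time reversal instead re-emits the reversed recordings into a numerical wave-propagation model rather than applying pure time shifts. All names and parameters below are hypothetical.

```python
import math
from itertools import product


def stack_amplitude(point, sensors, traces, fs, velocity):
    """Shift each trace back by the travel time from `point` to its sensor,
    sum the shifted traces, and return the peak absolute amplitude of the sum."""
    shifts = [round(math.dist(point, s) / velocity * fs) for s in sensors]
    length = min(len(tr) - sh for tr, sh in zip(traces, shifts))
    peak = 0.0
    for k in range(max(length, 0)):  # k scans candidate origin times (in samples)
        total = sum(tr[k + sh] for tr, sh in zip(traces, shifts))
        peak = max(peak, abs(total))
    return peak


def locate_by_stacking(sensors, traces, fs, velocity, xs, ys):
    """Return the grid point with the largest stacked amplitude."""
    return max(product(xs, ys),
               key=lambda p: stack_amplitude(p, sensors, traces, fs, velocity))


def synthetic_traces(source, sensors, fs, velocity, t0_samples, n_samples):
    """Gaussian pulses arriving at each sensor after the straight-ray travel time."""
    traces = []
    for s in sensors:
        center = t0_samples + math.dist(source, s) / velocity * fs
        traces.append([math.exp(-((i - center) / 2) ** 2) for i in range(n_samples)])
    return traces


# Hypothetical setup: 1 m x 1 m plate, wave speed 100 m/s, 10 kHz sampling.
sensors = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
fs, velocity = 10_000.0, 100.0
traces = synthetic_traces((0.3, 0.6), sensors, fs, velocity, 10, 200)
grid = [i / 20 for i in range(21)]
estimate = locate_by_stacking(sensors, traces, fs, velocity, grid, grid)
print(estimate)
```

No arrival picking is needed: the whole recorded pulse contributes to the stack, and the unknown origin time is absorbed by scanning `k`.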

Reversing signals back to a source is easier at local scales (or in lab-scale experiments) than at the global scale, since there are fewer inhomogeneities (or at least a controllable number of them) in the system [Rietbrock 1994, Larmat 2006]. Several works by Fink et al. showed that time reversal is a reliable technique for locating signal sources [Ficek 1997, Fink 2000, Fink 2003]. Furthermore, Tourin et al. pointed out in 2001 that small, inevitable perturbations during the recording and re-emitting phases do not create significant estimation errors [Tourin 2001]. In 2006, Larmat et al. worked on imaging seismic sources using time reversal with different numerical models [Larmat 2006]. One of the most important advantages of time reversal localization is that it can easily be applied to very large datasets without picking particular phases in the different recordings, since it uses the entire waveform [Larmat 2008]. Larmat et al. also continued working on the localization of real tremor data in global-scale models [Larmat 2009, Larmat 2010]. More recently, in 2016, Bacot et al. published an application of time reversal in which the properties of the medium are changed instantaneously to generate a mirror of the waves [Bacot 2016]. In their experiments with water waves, they showed that such instantaneous wave mirroring in 2D propagation makes the waves refocus on the source point.

Energy Based Localization

Energy-based signal localization methods follow the main principle of received signal strength based localization (RSSL), which relies on the fact that signal energy decays with distance [Meesookho 2008]. Before giving a brief history of energy-based methods, it is worth mentioning their predecessors.
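As an illustration, consider a decay model of the kind used in this line of work. The model is assumed here, and gains, exponents, and noise terms vary between studies. It relates the energy $E_i$ measured at sensor $i$, located at $\mathbf{r}_i$, to the energy $S$ emitted by a source at $\mathbf{r}$:

$$E_i = \frac{g_i\,S}{\lVert \mathbf{r}-\mathbf{r}_i\rVert^{\alpha}} + \varepsilon_i,$$

where $g_i$ is the sensor gain, $\alpha$ the decay exponent (e.g. $\alpha = 2$ for spherical spreading in a homogeneous 3D medium), and $\varepsilon_i$ the noise. For two sensors with equal gains, neglecting noise, taking the ratio eliminates the unknown source energy $S$:

$$\frac{E_i}{E_j} = \left(\frac{\lVert \mathbf{r}-\mathbf{r}_j\rVert}{\lVert \mathbf{r}-\mathbf{r}_i\rVert}\right)^{\alpha}.$$

In 2D, each sensor pair therefore constrains the source to a circle (or, when $E_i = E_j$, to the perpendicular bisector of the two sensors). Several pairs are combined to locate the source.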

In 2000, Bahl and Padmanabhan of Microsoft Research developed « RADAR », a radio-frequency based system that uses signal strength information gathered at multiple sensors to triangulate the position of a user [Bahl 2000]. Girod et al. focused on finding better ways to estimate the range of these signals, which is essential for high-quality position estimates [Girod 2001]. Another work on RSSL was conducted by Flathagen and Korsnes in Norway. In 2010, they studied localization in wireless sensor networks from the signal strength measured at different sensors, using different schemes to make the localization more accurate [Flathagen 2010].
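Range estimation from signal strength is often illustrated with the log-distance path-loss model, which is an assumption here: received power falls by 10·n dB per decade of distance. The sketch below inverts that model to obtain ranges and then locates a point from three ranges by linearized trilateration, subtracting the first circle equation from the other two. It is not the RADAR algorithm or the schemes of [Girod 2001] or [Flathagen 2010]. The function names and numbers are hypothetical.

```python
import math


def rss_to_range(rss_dbm, p0_dbm, path_loss_exponent, d0=1.0):
    """Invert the log-distance path-loss model
    P(d) = P0 - 10 * n * log10(d / d0) to get the distance d."""
    return d0 * 10 ** ((p0_dbm - rss_dbm) / (10 * path_loss_exponent))


def trilaterate(anchors, ranges):
    """Position from three anchors and ranges: subtract the first circle
    equation from the other two and solve the 2x2 linear system (Cramer's rule)."""
    (x1, y1), (x2, y2), (x3, y3) = anchors
    r1, r2, r3 = ranges
    a11, a12 = 2 * (x2 - x1), 2 * (y2 - y1)
    a21, a22 = 2 * (x3 - x1), 2 * (y3 - y1)
    b1 = r1**2 - r2**2 + x2**2 - x1**2 + y2**2 - y1**2
    b2 = r1**2 - r3**2 + x3**2 - x1**2 + y3**2 - y1**2
    det = a11 * a22 - a12 * a21
    if abs(det) < 1e-12:
        raise ValueError("anchors are collinear")
    return ((b1 * a22 - a12 * b2) / det, (a11 * b2 - b1 * a21) / det)


# Hypothetical numbers: P0 = -40 dBm at d0 = 1 m and path-loss exponent n = 2.
print(rss_to_range(-60.0, -40.0, 2.0))
anchors = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0)]
print(trilaterate(anchors, [5.0, math.sqrt(65.0), math.sqrt(45.0)]))
```

In practice the measured signal strength is noisy, so more than three anchors and a least-squares solution are normally used. Three anchors simply keep the algebra visible.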

Returning to energy-based localization, Li and Hu worked on energy-based localization using a microsensor array [Li 2003]. They noted that the potential advantages of the energy-based method are its low intersensor communication requirements and its robustness against noise and parameter perturbations. This work was followed in the same year by Sheng and Hu, who compared several different methods (maximum likelihood, nonlinear least squares, etc.) for estimating the source position once the signal energy has been computed [Sheng 2003b]. In 2005, Sheng published another part of this work, extensively comparing the maximum-likelihood energy-based localization method with the other available energy-based methods [Sheng 2005]. In the following year.
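The following is a minimal sketch of energy-based localization by nonlinear least squares, assuming the decay model E_i = S / d_i^α with unit sensor gains. For a candidate point, the source energy S that best fits the measurements has a closed form, S = Σ E_i h_i / Σ h_i² with h_i = 1/d_i^α, so only the position needs to be searched; a grid search is used here. This is an illustration in the spirit of the least-squares approaches compared by Sheng and Hu, not their implementation. The names and parameters are hypothetical.

```python
import math
from itertools import product


def energy_misfit(point, sensors, energies, alpha=2.0):
    """Least-squares misfit of the decay model E_i = S / d_i**alpha at `point`,
    with the source energy S replaced by its closed-form best fit."""
    h = []
    for s in sensors:
        d = math.dist(point, s)
        if d == 0.0:
            return math.inf  # the model is singular at a sensor position
        h.append(d ** -alpha)
    s_hat = sum(e * hi for e, hi in zip(energies, h)) / sum(hi * hi for hi in h)
    return sum((e - s_hat * hi) ** 2 for e, hi in zip(energies, h))


def locate_by_energy(sensors, energies, xs, ys, alpha=2.0):
    """Grid search for the point minimizing the energy misfit."""
    return min(product(xs, ys),
               key=lambda p: energy_misfit(p, sensors, energies, alpha))


# Hypothetical noise-free example: source energy S = 2 at (0.3, 0.6).
sensors = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
source, S = (0.3, 0.6), 2.0
energies = [S / math.dist(source, s) ** 2 for s in sensors]
grid = [i / 100 for i in range(101)]
estimate = locate_by_energy(sensors, energies, grid, grid)
print(estimate)
```

Only one energy value per sensor is needed, rather than full waveforms or precise arrival times. This is the low-communication advantage mentioned above.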

Article: Aerofracture through a double looking glass, mixing optics and acoustics

This study was conducted jointly (50%/50%) by Semih Turkaya (analysis and interpretation of the acoustic data) and Fredrik K. Eriksen (analysis and interpretation of the optical data) under the supervision of Renaud Toussaint. During the writing, the work was further developed through scientific discussions with the co-authors, all of whom contributed suggestions on references, on scientific methods of analysis and comparison, and on the presentation of the results. This work was published in the FlowTrans Review, April 2014 ( http://www.flowtrans.net/newsletters/ ).

The main objective of this work was to test the experimental equipment, the setup, and the procedures for analyzing the acquired data. This preliminary work provided a guideline for our subsequent work, and the main research focus and the comparison are clearly stated in these newsletter proceedings. By trial and error, we developed stepwise experimental procedures and sample preparation methods. By following the same steps each time we prepare a sample, we can create fairly reproducible initial conditions, although the randomness of the particle arrangement will always account for some differences.

The acoustic properties of the experimental setup (initial noise, noise due to injection, etc.) were checked. In the different stages identified from the optical data, the characteristic properties of the acoustic data were found to differ as well. Two different signal localization methods were compared using the acoustic signals and yielded different source positions. For the optical data, we tested improvements to obtain good contrast between the emptied structure and the granular material. Finally, we placed black cardboard below the Hele-Shaw cell to obtain a homogeneous dark color in the carved areas. We then converted the raw image data into segmented binary images of the emptied structure, from which observable quantities of the pattern can be quickly extracted using a script. Some of the particles were dyed with Indian ink to create contrast between particles, so that any displacement can be tracked across subsequent images with Particle Image Velocimetry (PIV) analysis.

In conclusion, we developed experimental procedures that enable reproducible aerofracturing experiments in a Hele-Shaw cell. With these procedures, several optical and acoustic datasets can be obtained to investigate, compare, and eventually understand the mechanics behind these complex fluid-solid interactions. In future work, we would like to locate the sources of these acoustic events (optically and acoustically) and compare these two monitoring methods.
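The image-processing steps described above can be sketched in a few lines of pure Python. The threshold, the assumption that the inlet lies along the top row, the chosen observables, and the function names are all hypothetical. The sketch segments a grayscale image into a binary mask of the emptied structure (dark where the black backing shows through), extracts simple pattern quantities, and estimates the integer-pixel displacement of an interrogation window between two frames by maximizing their cross-correlation, which is the core step of PIV.

```python
def segment(image, threshold):
    """Binary mask: 1 where a pixel is darker than `threshold` (emptied region
    showing the dark backing), 0 where granular material is visible."""
    return [[1 if px < threshold else 0 for px in row] for row in image]


def pattern_stats(mask):
    """Simple observables of the emptied pattern, assuming the inlet is at row 0."""
    area = sum(sum(row) for row in mask)
    total = len(mask) * len(mask[0])
    void_rows = [r for r, row in enumerate(mask) if any(row)]
    depth = void_rows[-1] + 1 if void_rows else 0  # rows reached from the inlet
    return {"area_px": area, "area_fraction": area / total, "depth_px": depth}


def window_displacement(win_a, win_b, max_shift):
    """Integer (dy, dx) maximizing the cross-correlation between an interrogation
    window in frame A and the same window in frame B."""
    h, w = len(win_a), len(win_a[0])
    best, best_score = (0, 0), float("-inf")
    for dy in range(-max_shift, max_shift + 1):
        for dx in range(-max_shift, max_shift + 1):
            score = sum(
                win_a[y][x] * win_b[y + dy][x + dx]
                for y in range(h) for x in range(w)
                if 0 <= y + dy < h and 0 <= x + dx < w
            )
            if score > best_score:
                best, best_score = (dy, dx), score
    return best


# Hypothetical 4x4 grayscale image: dark pixels near the top-left are emptied.
image = [[10, 200, 200, 200],
         [20, 30, 200, 200],
         [200, 200, 200, 200],
         [200, 200, 200, 200]]
print(pattern_stats(segment(image, threshold=100)))

# A single dyed grain that moves 1 pixel down and 2 pixels right.
frame_a = [[0] * 8 for _ in range(8)]
frame_b = [[0] * 8 for _ in range(8)]
frame_a[3][3] = 1
frame_b[4][5] = 1
print(window_displacement(frame_a, frame_b, max_shift=3))
```

Practical PIV codes also subtract the window means, use FFT-based correlation, and interpolate the correlation peak to sub-pixel accuracy. The direct loops here only show the principle.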

Table of contents

1 Summary

1.1 Problem Statement and Challenges

1.2 Fracturing in a Porous Medium

1.3 Localization of Acoustic Emissions

1.4 Conclusion

1.5 Summary of the Articles

1.5.1 Article: Aerofracture through a double looking glass, mixing optics and acoustics

1.5.2 Article: Bridging aero-fracture evolution with the characteristics of the acoustic emissions in a porous medium

1.5.3 Article: Numerical Studies of the Acoustic Emissions during Pneumatic Fracturing

1.5.4 Article: Pneumatic fractures in confined granular media

2 Introduction

2.1 Brief History of Research on Porous Medium

2.2 Brief History of the Research on Acoustic Emissions

2.2.1 Acoustic Instrumentation

2.3 Brief History of the Signal Localization

2.3.1 Time Difference of Arrival

2.3.2 Time Reversal Localization

2.3.3 Energy Based Localization

2.4 Motivations and Objectives

3 Investigating Solid-Fluid Interactions inside the Hele-Shaw Cell

3.1 Introduction

3.2 Experimental Setup

3.3 Article: Aerofracture through a double looking glass, mixing optics and acoustics

3.4 Article: Bridging aero-fracture evolution with the characteristics of the acoustic emissions in a porous medium

3.5 Draft Article: Numerical Studies of the Acoustic Emissions during Pneumatic Fracturing

3.6 Draft Article: Explanation of Earthquake Types using Lab-scale Experiments

3.7 Conclusion and Future Work

4 Analysis of the Deformation of the Porous Medium Using Optical Data

4.1 Introduction

4.2 Draft Article: Pneumatic fractures in confined granular media

4.3 Conclusion and Future Work

5 Finding the Source of the Acoustic Emissions

5.1 Introduction

5.2 Article: Localization Based On Estimated Source Energy Homogeneity

5.3 Draft Article: Source Localization of Acoustic Emissions during Pneumatic Fracturing

5.4 Conclusion and Future Work

6 Conclusion and Perspectives

A Appendix

A.1 Experiments in an Open Cell with Mobile Plates

A.2 Dispersion Curve for Lamb Wave Simulations

A.2.1 Dispersion Curve

A.2.2 The Effect of Shear Forces