Get Complete Project Material File(s) Now! »

Graph Signal Processing

Although graphs are interesting on their own, very often they come with data associated with their nodes. Going back to the citation network example, one could think of the graph as a domain where each node is associated with a document, represented as a feature vector. We are going to say such features are signals coming from a graph domain. We formalize this notion in the following definition: Definition 4. Given a graph G = (V, E), a graph signal is a function f : V → R. Let n = |V|, then f can be represented as a vector in Rn, ( f1, . . . , fn).

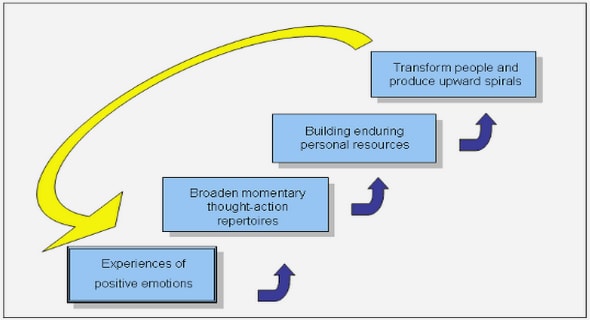

Graph Signal Processing (GSP) [60, 6, 55] extends the concepts of classic Signal Process-ing to data coming from graph domains and provides the necessary tools to perform calculus on discrete structures. This field has served as a framework to formalize a vast variety of problems. For instance, the task of node classification can be addressed with the tools GSP provides once we realize that node labels can be seen as a graph signal (see Figure 2.3).

A central actor in GSP is the graph Laplacian we introduced in Definition 3. An important thing to point out about the graph Laplacian is its nature as an operator that acts upon signals Fig. 2.3 A random sensor graph generated with PyGSP Python package where nodes belong to one of two classes (colored yellow and purple). All the true labels are displayed. In a classification problem many of these labels are missing and we have to infer them. in the graph measuring how much their steepness changes at each node. To see this, let us first observe that the gradient of a graph signal f boils down to the partial differences between nodes because it realizes in a discrete domain. That is, (∇ f )i j = fi − f j.

Graph-based Semi-Supervised Learning

Semi-supervised Learning (SSL) is a learning paradigm that exploits both labeled and unlabeled data. SSL algorithms have been successfully applied to problems where labeled data is very scarce, and where there is access to relatively large amount of unlabeled data. Formally, we assume we have access to a dataset of the form D = Dsup ∪ Dunsup where Dsup = {(xi, yi)}k= is the supervised set and Dunsup = {xi}n is the unlabeled set. We i 1 i=k+1. assume each observation (xi, yi) comes from a distribution p(x, y) where x ∈ X and y ∈ Y. We also assume the supervised set is relatively small with respect to the unlabeled set. The goal is to find a mapping g : X → Y that fits the observed data as best as possible. SSL algorithms work by making some assumptions about the distribution of the input data so that one can leverage the information provided by the unlabeled set. A few popular assumptions are described below.

Smoothness assumption The smoothness or continuity assumption establishes that points that lay in a dense region have outputs that are likely to be close. Cluster assumption The cluster assumption is a discrete version of the smoothness assump-tion: points form clusters, and if two elements belong to the same cluster then they are likely to belong to the same class. For a classification task this implies that an optimal decision boundary will pass through a low density region. Manifold assumption High-dimensional data lies in a low-dimensional manifold. This assumption is key to avoid the « curse of dimensionality ».

SSL methods can be classified into inductive and transductive. The former aims at learning a classifier that is able to handle data from the whole domain X . The latter is only concerned with finding the labels corresponding to the unlabeled elements of the training set. Graph-based semi-supervised learning (GSSL) algorithms are a class of semi-supervised methods that additionally rely on a graph structure associated with the data. In the taxonomy describe above they are usually placed among the transductive methods. These methods received a lot of attention in the last two decades for many reasons [61]. In the first place, in many applications the data is naturally endowed with a graph. Such a graph is assumed to represent the underlying topology of the data, and it thus provides grounds to develop algorithms that comply with the three assumptions presented above. As a matter of fact, the massive use of Internet results in graph-structured datasets to be more widespread. In the second place, many problems can be formulated as convex programs in a straightforward manner [61]. Finally, graphs are expressive objects and can encode rich information about the data. At a high-level, GSSL algorithms can be classified into methods that introduce an explicit form of regularization to the objective based on the topology of the data, and more modern methods based on graph neural networks. We will describe these different approaches below. In what follows we will assume the data is endowed with a graph G = (V, E, ω) with associated adjacency matrix W ∈ Rn×n.

Algorithms based on Manifold Regularization

Algorithms under this category are characterized by an objective function that consists of a supervised term and a graph regularization term [61]: l(y) = ∑ lsup(y(xi), yi) + ∑ lreg(y(xi)). (2.6) (xi,yi)∈Dsup xi∈Dunsup.

The first term is a regular supervised loss, while the second term ensures the solution will be consistent with the topology of the data. A widely used criterion of graph regularization is that of smoothness: the solution has to be as smooth as possible with respect to the graph in the sense of Equation (2.1). Some methods use the graph as a propagation operator that propagates labels according to how elements are connected. Examples of these are Label Propagation [75] and Label Spreading [72]. Considering binary labels for simplicity, Label Propagation takes the random walk normalized Laplacian Lrw = D−1W , initializes y(0) = [y1, . . . , yl , 0, . . . , 0] and iteratively updates y as: y(t+1) = Lrwy(t) (2.7) yi(t+1) = yi for i = 1, . . . , l.

When normalized as such, Lrw can be deemed a random walk matrix, that is, a matrix whose entries represent transition probabilities. Keeping that in mind, what Equation 2.7 does is to iteratively visit the neighborhood of each node, and diffuse the labels according to the strength of the edges. Label Spreading differs mainly in two things. First it uses the symmetric normalized Laplacian as propagation operator, that is, it uses Lsym = D−1/2W D−1/2, and it allows changes in the predicted labels corresponding to the training set: y(it+1) = αLsymy(t) + (1 −α)y(0),

Table of contents :

List of figures

List of tables

1 Introduction

1.1 Published Work

1.2 Outline

2 Background

2.1 Graphs

2.1.1 Spectral Graph Theory

2.1.2 Graph Signal Processing

2.2 Graph-based Semi-Supervised Learning

2.2.1 Algorithms based on Manifold Regularization

2.2.2 Algorithms based on Graph Neural Networks

2.2.3 Graph Construction

2.3 Metric Learning

2.4 Latent Variable Models and Variational Inference

2.5 Unsupervised Graph Learning

3 Joint Learning of the Graph and the Data Representation

3.1 Introduction

3.2 Model

3.2.1 Problem Formulation

3.2.2 Choices of Representation Functions

3.2.3 Optimization

3.3 Experiments and Results

3.3.1 Synthetic Data

3.3.2 Real Data

3.4 Conclusion

4 Graph Inference and Semi-Supervised Learning with Auto-Encoding Variational Bayes

4.1 Introduction

4.2 Model

4.2.1 Architecture

4.2.2 Encoder

4.2.3 Decoder

4.2.4 Choice of Prior

4.2.5 End-to-End Training Algorithm

4.3 Experiments and Results

4.3.1 Datasets

4.3.2 Competing Approaches and Setup

4.3.3 Results in Transductive Setting

4.3.4 Discussion

4.4 Concurrent Work in Joint Models for GSSL

4.5 Conclusion

5 Conclusion

5.1 Summary

5.2 Future directions

References