
Probe-based Confocal Laser Endomicroscopy

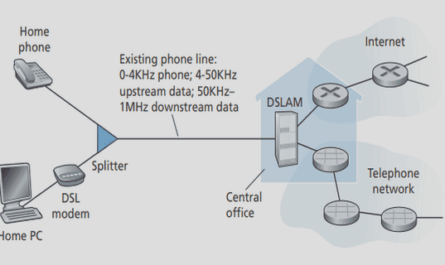

During an ongoing endoscopy procedure, pCLE images the tissue at the microscopic level by inserting, through the standard endoscope, a miniprobe made of tens of thousands of optical fibers. At the proximal end, a laser scanning unit uses two mirrors to send, along each fiber, an excitation light that is locally absorbed by fluorophores in the tissue; the light then emitted by the fluorophores at a longer wavelength travels back along the same fiber to a mono-pixel photodetector, as illustrated in Fig. 2.3. As a result, endomicroscopic images are acquired at a rate of 9 to 18 frames per second, composing video sequences. From the irregularly-sampled images that are acquired, an interpolation technique presented by Le Goualher et al. [Le Goualher 04] produces single images of diameter 500 pixels, which corresponds to a FoV of 240 μm, as illustrated in Fig. 2.6. All the pCLE video sequences used for this study were acquired with the Cellvizio system of Mauna Kea Technologies.

Considering a video database of colonic polyps, our study focuses on supporting the early diagnosis of colorectal cancers, more precisely for the differentiation of neoplastic and non-neoplastic polyps.

Endomicroscopic Database

At the Mayo Clinic in Jacksonville, Florida, USA, 68 patients underwent a surveillance colonoscopy with pCLE for fluorescein-aided imaging of suspicious colonic polyps before their removal. For each patient, pCLE was performed on each detected polyp, with one video per polyp. All polyps were removed and evaluated by a pathologist to establish the “gold standard” diagnosis. In each of the acquired videos, stable sub-sequences were identified by clinical experts to establish a diagnosis. The experts differentiated pathological patterns from benign ones according to the presence or absence of neoplastic tissue, which shows irregularities in the cellular and vascular architectures. The resulting Colonic Polyp database is composed of 121 videos (36 benign, 85 neoplastic) split into 499 video sub-sequences (231 benign, 268 neoplastic), leading to 4449 endomicroscopic images (2292 benign, 2157 neoplastic). For all the training videos, the pCLE diagnosis, either benign or neoplastic, is the same as the “gold standard” established by a pathologist after the histological review of biopsies acquired on the imaging spots.

More details about the acquisition protocol of the pCLE database can be found in the studies of Buchner et al. [Buchner 08], [Buchner 09b], which included a video database of colonic polyps comparable to ours and demonstrated the effectiveness of pCLE classification of polyps by expert endoscopists.

State-of-the-Art Methods in CBIR

In the field of computer vision, Smeulders et al. [Smeulders 00] presented a large review of the state of the art in CBIR. At the macroscopic level, Häfner et al. [Häfner 09] worked on endoscopic images of colonic polyps and obtained rather good classification results by considering 6 pathological classes. At the microscopic level, Désir et al. [Désir 10] investigated the classification of pCLE images of the distal lung. However, the goal of these two studies is classification for computer-aided diagnosis, whereas our main objective is retrieval. Petrou et al. [Petrou 06] proposed a solution for the description of irregularly-sampled images, which could be defined by the optical fiber positions in our case. Nevertheless, we chose for the time being not to work on irregularly-sampled images, but rather on the interpolated images, for two reasons: first, we plan to retrieve pCLE mosaics which are interpolated images, and second, most of the available retrieval tools from computer vision are based on regular grids. The following paragraphs present several state-of-the-art methods that can be easily applied to endomicroscopic images and that will be used as baselines in this study to assess the performance of our proposed solutions.

In addition to the BoW method presented by Zhang et al. [Zhang 07], referred to as the HH-SIFT method, which combines sparse feature extraction with the BoW model, we take two further methods as references for CBIR comparison: first, the standard approach of Haralick features [Haralick 79], based on global statistical features and applied by Srivastava et al. [Srivastava 08] to the identification of ovarian cancer in confocal microendoscope images; and second, the Textons texture retrieval method of Leung and Malik [Leung 01], based on dense local features.

The Haralick method computes global statistics from the co-occurrence matrix of the image intensities, such as contrast, correlation or variance, in order to represent an image by a vector of statistical features; its global scope makes it a useful point of comparison. The Textons method defines for each image pixel p a “texton” as the response of a patch centered on p to a texture filter composed of orientation-selective and spatial-frequency-selective linear filters. Although this method extracts only texture information, its dense extraction procedure makes it interesting for method comparison, as shown in Section 2.3.
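As a rough illustration of the global statistics mentioned above, the following sketch builds a gray-level co-occurrence matrix for a single pixel offset and derives contrast, correlation and variance from it. The function names `glcm` and `haralick_features`, the choice of a symmetric matrix, and the single horizontal offset are assumptions made for this example; they are not taken from [Haralick 79] or from the study's actual implementation.

```python
import numpy as np

def glcm(image, levels, dx=1, dy=0):
    """Normalized, symmetric gray-level co-occurrence matrix for one offset.

    image: 2-D array of integer gray levels in [0, levels).
    dx, dy: non-negative pixel offset between the two pixels of a pair.
    """
    img = np.asarray(image, dtype=int)
    h, w = img.shape
    # Pair each pixel with its neighbor located dy rows down and dx columns right.
    a = img[:h - dy, :w - dx].ravel()
    b = img[dy:, dx:].ravel()
    m = np.zeros((levels, levels), dtype=float)
    np.add.at(m, (a, b), 1.0)
    m += m.T  # count each pair in both directions
    return m / m.sum()

def haralick_features(p):
    """Contrast, correlation and variance of a normalized co-occurrence matrix.

    Assumes the image is not constant (otherwise the correlation is undefined).
    """
    levels = p.shape[0]
    i, j = np.meshgrid(np.arange(levels), np.arange(levels), indexing="ij")
    mu_i, mu_j = (i * p).sum(), (j * p).sum()
    var_i = ((i - mu_i) ** 2 * p).sum()
    var_j = ((j - mu_j) ** 2 * p).sum()
    contrast = ((i - j) ** 2 * p).sum()
    correlation = ((i - mu_i) * (j - mu_j) * p).sum() / np.sqrt(var_i * var_j)
    return np.array([contrast, correlation, var_i])
```

In practice such a feature vector would typically be computed for several offsets and orientations and then concatenated, so that one image is summarized by a single global descriptor.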

Framework for Retrieval Evaluation

Assessing the quality of content-based data retrieval is a difficult problem. In this paper, we focus on a simple but indirect means to quantify the relevance of retrieval: we perform classification. We chose one of the most straightforward classification methods, the k-nearest neighbors (k-NN) method, even though any other method could easily be plugged into our framework. We first consider two pathological classes, benign (C = −1) and neoplastic (C = +1), then we propose a multi-class evaluation of the retrieval in Section 2.6. As an objective indicator of the retrieval relevance, we take the classification accuracy (number of correctly classified samples / total number of samples).

In order to determine whether the improvement from one retrieval method to another is statistically significant, we perform McNemar’s test [Sheskin 11] on the classification results obtained by the two methods at a fixed number of nearest neighbors. We refer the reader to Appendix A for a detailed description of McNemar’s test.
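To make the comparison concrete, here is a minimal sketch of McNemar's test on paired classification outcomes. It uses the common chi-square form with continuity correction; whether Appendix A uses this variant or the exact binomial one is not stated in this section, so the choice is an assumption, as is the function name `mcnemar`.

```python
import math

def mcnemar(correct_a, correct_b):
    """McNemar's chi-square test (continuity-corrected) on paired outcomes.

    correct_a[i] and correct_b[i] tell whether methods A and B classified
    sample i correctly. Returns (statistic, p_value).
    """
    # Discordant pairs: exactly one of the two methods is right.
    b = sum(1 for x, y in zip(correct_a, correct_b) if x and not y)
    c = sum(1 for x, y in zip(correct_a, correct_b) if y and not x)
    if b + c == 0:
        return 0.0, 1.0  # the two methods never disagree
    stat = (abs(b - c) - 1) ** 2 / (b + c)
    # Survival function of a chi-square distribution with 1 degree of freedom.
    p_value = math.erfc(math.sqrt(stat / 2.0))
    return stat, p_value
```

Only the samples on which the two methods disagree influence the statistic, which is why the test suits two classifiers evaluated on the same queries.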

Given the small size of our database, we need to learn from as much data as possible. We thus use the same database both for training and testing, but take great care not to bias the results. If we only performed a leave-one-out cross-validation, the independence assumption would not be respected because several videos are acquired on the same patient. Since this may cause bias, we chose to perform a leave-one-patient-out (LOPO) cross-validation, as introduced by Dundar et al. [Dundar 04]: all videos from a given patient are excluded from the training set before being tested as queries of our retrieval and classification methods. Even though we tried to ensure unbiased processes for learning, retrieval and classification, it might be argued that some bias remains because the splitting and selection of video sub-sequences were done by a single expert. For our study, we consider this bias negligible.
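The LOPO splitting described above can be sketched as follows. The generator name `leave_one_patient_out` and the representation of the database as a flat list of patient identifiers (one per video) are assumptions made for illustration only.

```python
from collections import defaultdict

def leave_one_patient_out(patient_ids):
    """Yield (patient, train_indices, test_indices), one fold per patient.

    patient_ids[i] is the identifier of the patient on whom video i was acquired.
    """
    by_patient = defaultdict(list)
    for index, patient in enumerate(patient_ids):
        by_patient[patient].append(index)
    for patient, test_indices in by_patient.items():
        held_out = set(test_indices)
        # Every video of the held-out patient is removed from the training set.
        train_indices = [i for i in range(len(patient_ids)) if i not in held_out]
        yield patient, train_indices, test_indices
```

Each video is used as a query exactly once, and no video from the same patient is ever available as a neighbor for that query.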

It is worth mentioning that, in the framework of medical information retrieval, some scenarios require predefined sensitivity or specificity goals, depending on the application. Some applications, such as brain surgery, may require a predefined high specificity. For our application, physicians prefer a false positive caused by the misdiagnosis of a benign polyp, which could lead for example to an unnecessary but well supported polypectomy, over a false negative caused by the misdiagnosis of a neoplastic polyp, which may have serious consequences for the patient.

Thus, our goal is to reach a predefined high sensitivity while keeping the highest possible specificity. For this reason, we introduce a weighting parameter $\theta \in [-1, 1]$ to trade off the cost of false positives and false negatives. The default value of the additive threshold is $\theta = 0$, which corresponds to the situation where the pathological votes of all the k neighbors have the same weight.

Negative values of $\theta$ correspond to putting more weight on neoplastic votes. The closer $\theta$ is set to −1 (resp. +1), the more weight we give to the neoplastic votes (resp. the benign votes) and the larger the sensitivity (resp. the specificity) is. ROC curves can thus be generated by computing the pair (specificity, sensitivity) at each value of $\theta \in [-1, 1]$, which provides another way to evaluate the classification performance of any of the retrieval methods.
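The sketch below shows one way the additive threshold could act on the k-NN votes and how ROC points could be swept from it. The exact voting equation is not reproduced in this section, so the decision rule used here (a query is neoplastic when the mean of the ±1 votes of its k nearest neighbors exceeds the threshold, written `theta`) is an assumption, as are all function names.

```python
import numpy as np

def knn_vote(distances, train_labels, k, theta=0.0):
    """Classify one query from its distances to all training samples.

    train_labels holds -1 (benign) or +1 (neoplastic). Assumed rule: the query
    is neoplastic when the mean vote of its k nearest neighbors exceeds theta.
    """
    nearest = np.argsort(distances)[:k]
    return 1 if np.mean(train_labels[nearest]) > theta else -1

def sensitivity_specificity(predicted, truth):
    """Fraction of neoplastic (resp. benign) queries that are correctly labeled."""
    predicted, truth = np.asarray(predicted), np.asarray(truth)
    sensitivity = np.mean(predicted[truth == 1] == 1)
    specificity = np.mean(predicted[truth == -1] == -1)
    return sensitivity, specificity

def roc_points(distance_matrix, train_labels, query_labels, k, thetas):
    """One (specificity, sensitivity) pair per threshold value in thetas."""
    points = []
    for theta in thetas:
        predicted = [knn_vote(row, train_labels, k, theta) for row in distance_matrix]
        sens, spec = sensitivity_specificity(predicted, query_labels)
        points.append((spec, sens))
    return points
```

Under this assumed rule, lowering `theta` below 0 lets a minority of neoplastic neighbors decide the outcome, which raises sensitivity at the expense of specificity.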

One may argue that our methodology uses an ad-hoc number of visual words and is thus dependent on the clustering results. This is the reason why, in Section 2.5.2, we will compare the classification performance of our retrieval method with that of a simple yet efficient image classification method, the Naive-Bayes Nearest-Neighbor (NBNN) classifier of Boiman et al. [Boiman 08], which uses no clustering but was shown to outperform BoW-based classifiers. For each local region of the query, the NBNN classifier computes, in the description space, its distance to the closest region of the benign training set and its distance to the closest region of the neoplastic training set. If the sum of the benign distances $D_B$ is smaller than the sum of the neoplastic distances $D_N$, the query is classified as benign, otherwise as neoplastic [Boiman 08]. The construction of ROC curves for the NBNN classification method requires the use of a multiplicative threshold $\theta_{NBNN} \in [0, +\infty[$ according to which the query is classified as neoplastic if and only if:

$$D_N < \theta_{NBNN} \, D_B \qquad (2.2)$$

The default value of the multiplicative threshold $\theta_{NBNN}$ is $\theta_{NBNN} = 1$, which corresponds to the situation where the pathological votes of all the k neighbors have the same weight. Values of $\theta_{NBNN}$ greater than 1 correspond to putting more weight on neoplastic votes. The larger (resp. smaller) $\theta_{NBNN}$ is set, the more weight we give to the neoplastic votes (resp. the benign votes) and the larger the sensitivity (resp. the specificity) is.
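A minimal sketch of the thresholded NBNN decision of Eq. (2.2) is given below. It uses a brute-force nearest-neighbor search and squared Euclidean distances between descriptors; both choices, like the function names and the `theta_nbnn` parameter name, are assumptions made for this example.

```python
import numpy as np

def nearest_distances(query_descriptors, class_descriptors):
    """Squared Euclidean distance from each query descriptor to its nearest
    descriptor in one class (brute-force search)."""
    diff = query_descriptors[:, None, :] - class_descriptors[None, :, :]
    return (diff ** 2).sum(axis=2).min(axis=1)

def nbnn_classify(query_descriptors, benign_descriptors, neoplastic_descriptors,
                  theta_nbnn=1.0):
    """Return +1 (neoplastic) if D_N < theta_nbnn * D_B, else -1 (benign)."""
    # D_B and D_N sum, over all local regions of the query, the distance to
    # the closest benign and closest neoplastic training region respectively.
    d_b = nearest_distances(query_descriptors, benign_descriptors).sum()
    d_n = nearest_distances(query_descriptors, neoplastic_descriptors).sum()
    return 1 if d_n < theta_nbnn * d_b else -1
```

Sweeping `theta_nbnn` over a range of positive values and recording (specificity, sensitivity) at each value would give the NBNN counterpart of the k-NN ROC curve.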

Another characteristic of our application is that pCLE videos diagnosed as neoplastic may contain some benign patterns, whereas benign epithelium never contains neoplastic patterns. Therefore, it seems logical to put more weight on the neoplastic votes, which are more discriminative than benign votes. The weighting parameters $\theta$ and $\theta_{NBNN}$ may also be useful to compensate for our unbalanced dataset, which contains more benign images than pathological ones.

Table of contents :

1 Introduction

1.1 A Smart Atlas for Endomicroscopy: How to Support In Vivo Diagnosis of Gastrointestinal Cancers?

1.2 From Computer Vision to Medical Applications

1.3 Manuscript Organization and Contributions

1.4 List of Publications

2 Adjusting Bag-of-Visual-Words for Endomicroscopy Video Retrieval

2.1 Introduction

2.2 Context of the Study

2.3 Adjusting Bag-of-Visual-Words for Endomicroscopic Images

2.4 Contributions to the State of the Art

2.5 Endomicroscopic Videos Retrieval using Implicit Mosaics

2.6 Finer Evaluation of the Retrieval

2.7 Conclusion

3 A Clinical Application: Classification of Endomicroscopic Videos of Colonic Polyps

3.1 Introduction

3.2 Patients and Materials

3.3 Methods

3.4 Results

3.5 Discussion

4 Estimating Diagnosis Difficulty based on Endomicroscopy Retrieval of Colonic Polyps and Barrett’s Esophagus

4.1 Introduction

4.2 pCLE Retrieval on a New Database: the “Barrett’s Esophagus”

4.3 Estimating the Interpretation Difficulty

4.4 Results of the Difficulty Estimation Method

4.5 Conclusion

5 Learning Semantic and Visual Similarity between Endomicroscopy Videos

5.1 Introduction

5.2 Ground Truth for Perceived Visual Similarity and for Semantics

5.3 From pCLE Videos to Visual Words

5.4 From Visual Words to Semantic Signatures

5.5 Distance Learning from Perceived Similarity

5.6 Evaluation and Results

5.7 Conclusion

6 Conclusions

6.1 Contributions and Clinical Applications

6.2 Perspectives

Appendix A: Statistical Analysis Methods

Appendix B: DDW 2010 Clinical Abstract

Appendix C: DDW 2011 Clinical Abstract

Bibliography