CHAPTER 3: RESEARCH DESIGN AND METHODS

INTRODUCTION

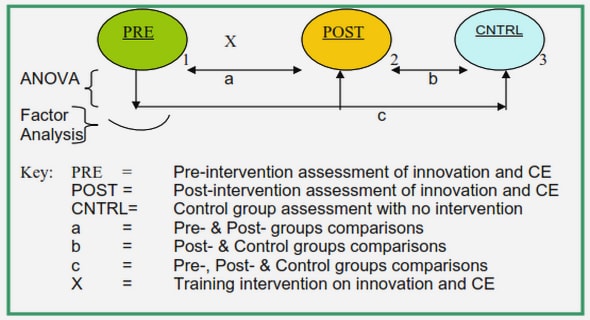

This chapter deals with the procedures followed to accomplish the investigation. These include the means by which validity and reliability are secured, the research design or plan, the research approach, and the research strategies employed. Among other things, this chapter focuses on the data collection and data analysis strategies, which include factor analysis, the dependent t-test and regression analysis.

VALIDITY AND RELIABILITY

Researchers either use survey instruments that have previously been standardised or develop new instruments. Whether instruments are newly developed or adopted from standardised ones, they need to be characterised by the qualities of validity and reliability. Validity and reliability increase the credibility of the specific instrument and, as a result, also of the findings of the research (McMillan, 2012:131). Validity refers to how accurately the instrument measures the construct it is intended to measure. Reliability, on the other hand, has a bearing on how consistently the instrument measures the construct under study (Tavakol & Dennick, 2011:53).

There are three main types of validity, namely content, construct and criterion validity. Content validity, often regarded as a more sophisticated form of face validity, is established by asking experts to rate whether each item fits the measuring instrument. The items are checked against a sample frame of dimensions that are intended to be included in the instrument. Content validity is more relevant in achievement tests but it is also fully applicable in affective tests, like the one that was developed in this study (Coolican, 1994:153; Domino & Domino, 2006:53; Murphy & Davidshofer, 2005:173). This issue is discussed in more detail in section 3.6.4.2 of this chapter. Construct validity is usually achieved through exploratory or confirmatory factor analysis. Exploratory factor analysis is discussed in some detail in section 3.6.4.4 of this chapter. Criterion validity, which did not constitute a part of this study, refers to the statistical significance of relationships (Nunnally & Bernstein, 1994, cited in Rubio, Berg-Weger, Tebb, Lee & Rauch, 2003:95).

Reliability also takes different forms, such as inter-rater, split-half and test-retest reliability. Inter-rater reliability analysis, as discussed in section 3.6.4.1 of this chapter, is used to observe the agreement (or disagreement) between two or more persons who independently rate a specific subject of research or behaviour (Domino & Domino, 2006:48; McMillan, 2012:140). Split-half reliability involves randomly dividing the items in an instrument into two halves; a strong relationship between the scores from the two halves indicates high reliability of the instrument. Test-retest reliability involves administering the instrument to the same respondents on two occasions separated by an interval of time; if the results of the two administrations correlate, this indicates the reliability of the instrument (Field, 2009:673-74; Domino & Domino, 2006:47).
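The split-half and test-retest procedures described above both reduce to correlating two sets of scores. The following is a minimal Python sketch of that idea, not the procedure used in this study. The response data are hypothetical, the function names are illustrative, and the Spearman-Brown step-up (which estimates full-length reliability from the half-test correlation) is an addition that is not mentioned in the text above.

```python
import random
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


def split_half_reliability(responses, seed=0):
    """Correlate respondents' totals on two randomly chosen halves of the items.

    responses: one list of item scores per respondent.
    Returns (half-test correlation, Spearman-Brown corrected estimate).
    """
    n_items = len(responses[0])
    items = list(range(n_items))
    random.Random(seed).shuffle(items)  # random split of the items
    half_a, half_b = items[: n_items // 2], items[n_items // 2:]
    totals_a = [sum(row[i] for i in half_a) for row in responses]
    totals_b = [sum(row[i] for i in half_b) for row in responses]
    r = pearson_r(totals_a, totals_b)
    # Spearman-Brown step-up: an assumption added here, not from the source.
    return r, 2 * r / (1 + r)


# Hypothetical 5-point responses: 6 respondents x 4 items.
responses = [
    [1, 2, 1, 2],
    [2, 2, 3, 2],
    [3, 3, 3, 4],
    [4, 4, 5, 4],
    [5, 5, 4, 5],
    [2, 1, 2, 2],
]
half_r, corrected_r = split_half_reliability(responses)

# Test-retest: the same respondents' total scores on two occasions (hypothetical).
first_administration = [6, 9, 13, 17, 19, 7]
second_administration = [7, 9, 12, 18, 18, 8]
retest_r = pearson_r(first_administration, second_administration)
```

A correlation close to 1 in either case would be read as evidence of reliability; what counts as "close enough" is a judgement the sketch does not make.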

Another way to increase the reliability and validity of an instrument is to attend to the quality of the data set through data cleaning and missing data analysis. Data cleaning is done by running a frequency distribution on the data set so that items that have been incorrectly coded can be identified and corrected. Missing data can result from many different causes, one of which is non-response on the part of the respondents. Individual items or respondents with more than 10% missing values should be excluded from the data set (Hair, Black, Babin & Anderson, 2014:45; Everitt & Hothorn, 2011:5-6). In this study, inter-rater reliability, content validity, and factor analysis were utilised to increase the reliability and validity of the instrument and also of the findings of the study.
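The cleaning and missing-data steps described above can be sketched in Python as follows. This is an illustration under stated assumptions rather than the study's actual procedure: the data are hypothetical, a 5-point response scale is assumed, missing values are assumed to be coded as `None`, and the order of exclusion (items first, then respondents) is a choice made for this sketch.

```python
from collections import Counter

VALID_CODES = {1, 2, 3, 4, 5}  # assumed 5-point response scale
MISSING = None                 # assumed code for a missing value
MAX_MISSING = 0.10             # the 10% threshold cited in the text


def frequency_table(rows, item):
    """Frequency distribution of the codes recorded for one item."""
    return Counter(row[item] for row in rows)


def invalid_codes(rows, item):
    """Codes that are neither valid scale points nor the missing marker."""
    return {code for code in frequency_table(rows, item)
            if code is not MISSING and code not in VALID_CODES}


def missing_share(values):
    """Proportion of missing values in a sequence."""
    values = list(values)
    return sum(v is MISSING for v in values) / len(values)


def drop_high_missing(rows):
    """Drop items, then respondents, with more than 10% missing values."""
    n_items = len(rows[0])
    kept_items = [i for i in range(n_items)
                  if missing_share(row[i] for row in rows) <= MAX_MISSING]
    reduced = [[row[i] for i in kept_items] for row in rows]
    cleaned = [row for row in reduced if missing_share(row) <= MAX_MISSING]
    return cleaned, kept_items


# Hypothetical data: 10 respondents x 4 items.
rows = [
    [5, 4, 3, 4],
    [4, 4, None, 5],
    [3, 7, None, 4],   # 7 is outside the scale: a coding error to look up
    [5, 5, 4, 4],
    [2, 3, None, 3],
    [4, 4, 5, 5],
    [None, 4, 4, 4],
    [3, 3, 3, 2],
    [5, 5, 5, 5],
    [4, 2, 4, 3],
]
miscoded = invalid_codes(rows, 1)
cleaned, kept_items = drop_high_missing(rows)
```

In practice a miscoded value would be corrected against the original questionnaire before any exclusion is applied; the sketch only flags it.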

RESEARCH DESIGN

Research design, which is the manner in which the researcher tries to answer the research questions, is the plan upon which the “how” of addressing the problem under investigation is structured. In this plan, the research questions and objectives of the study should be outlined along with the sources of data that are collected to answer the questions, the ways in which the data are analysed and interpreted, and related ethical considerations (Creswell, 2009:3; Leedy & Ormrod, 2010:85; Saunders, et al., 2012:159). The table below shows the research plan that was employed in this study. It shows the phases of the design-based research along with the research questions, the objectives, the sample used as a data source, data collection tools and data analysis techniques employed.

RESEARCH PARADIGMS

Research is influenced by paradigms, which are lenses that guide the types of questions that should be identified by a specific investigation, the methods that should be used in addressing the research questions, and how data should be interpreted (Aliyu, Bello, Kasim, & Martin, 2014:80; Bryman, 2012:630). There are four major types of paradigm: the positivist (knowledge being empirical and objective), the interpretivist (knowledge being socially constructed and subjective), the critical (knowledge based on many truths) and the afrocentric (knowledge being indigenous) (Okeke & van Wyk, 2015:60-61). All these paradigms have their ontological, epistemological and methodological assumptions. Ontology refers to the nature of reality whereas epistemology refers to acceptable knowledge (Saunders, et al., 2012:130-32). In this chapter, only the first two paradigms will be highlighted.

In the positivist paradigm, the ontological assumption is that reality is objectively found in the outer world. It is an objective, independent and separate entity from the researcher, who tries to understand what is in the world of objective reality through quantifiable strategies, and as experienced by the senses. This is in contrast to the interpretivist paradigm whose ontological assumption is that reality is subjectively constructed in the minds of both the researcher and the research participants, who are also part of the reality. In the interpretivist paradigm, there are multiple realities as socially constructed and perceived by different persons (Neuman, 2000, cited in Okeke & van Wyk, 2015:23).

Research is also guided by the epistemological premises of a paradigm, which involve the relationship between the researcher and the subject/object of research. Positivists take an independent and value-free approach. The researcher has minimal or no interaction with the research participants so as to secure objectivity by avoiding bias. Moreover, for positivists, knowledge is gained by means of reasoning and not by speculation. On the other hand, for interpretivists, the researcher and the researched have closer relationships in co-constructing knowledge which is influenced by the culture, history and values of societies, which in turn can be studied ethnographically (Aliyu, et al., 2014:81; Cohen, Manion, & Morrison, 2005:8; Jayasundara, 2009:135; Okeke & van Wyk, 2015:23; Wilson, 2013:9).

The two paradigms have clear preferences for the use of research methods. The positivist paradigm provides for research to be done quantitatively by employing experimental studies or statistical procedures that are essentially concerned with checking relationships between (or among) independent and dependent variables. Experimental results and scientific statistical investigation allow, among other things, the findings to be generalised from the sample to the general population (Aliyu, et al., 2014:81-82; Creswell, 2009:4; Okeke & van Wyk, 2015:25; Wilson, 2013:9, 12). A deductive approach in doing research encompasses collection of data through survey instruments, giving concepts operational definitions, and accomplishing the research in a strictly structured manner (Wilson, 2013:14). The positivist paradigm is characterised by its use of deductive reasoning, which starts from the general theory and then examines the variables in question empirically. In the interpretivist paradigm, research is undertaken inductively from the specific to the general. The procedures employed in this paradigm focus on building theories rather than testing them (Okeke & van Wyk, 2015:25). In the interpretivist paradigm, tools like interview guides or focus-group discussions are employed, as in qualitative studies. In addition, inductive reasoning is used, in which case “the researcher logically establishes a general proposition (or grounded theory [emphasis original]), based on the observed facts” (Okeke & van Wyk, 2015:24-25). The war between positivist and interpretivist paradigms subsided when the mixed-methods approach was introduced (Bryman, 2012:650). In mixed methods, the researcher may employ quantitative methods to be supported by qualitative methods or use qualitative methods dominantly, supported by quantitative data (Saunders, et al., 2012:164).

In this study, the Gaps Model of services marketing (discussed in chapter 2, section 2.8) was adopted as a theoretical model upon which the concepts of student support service quality were discussed. This study followed the positivist paradigm, which presupposes that knowledge is external to the researcher, because the major focus of this study (student support service quality) is a reality that is separate from how it is perceived by the students who responded to the instrument that was used in this study.

GENERAL RESEARCH STRATEGY

To achieve its aims, the study adopted an educational design research strategy which has its roots in the fields of design and engineering. The educational design research strategy is also called design-based research so as to show that it mainly accounts for characteristics of “design-analysis-redesign cycles” (Shavelson, Phillips, Towne & Feuer, 2003:26). Distinct from the everyday life of educational processes but aimed at improving them, the educational design research strategy involves non-linear, cyclic and iterative processes. Some of these processes may run simultaneously whereas others run on their own. In the end, all processes help develop context-based educational research outcomes that would give applicable solutions to problems at hand (Bakker, 2014:38; Design-Based Research Collective, 2003:5).

Design-based research is also called “developmental research” because of its nature that encompasses, among other things, processes that go back-and-forth so as to reach the desired outcome (Bakker, 2014:37; Design-Based Research Collective, 2003:5; Lijnse, 1995, cited in Plomp, 2007:19). A design / developmental research strategy was regarded as ideal for this study because, through iterative processes, a measuring instrument inevitably had to be developed.

The design-based research strategy is compatible with other research strategies, and also encapsulates disciplines from fields in both the natural and social sciences. In this regard, proponents of design-based research, Blessing and Chakrabarti (2009:102), maintain that, “because of the variety of factors involved in design, the study of design often requires the selection and combination of research methods from various disciplines.” In addition to this, design-based research is applicable to different kinds of research problems. It is “used successfully in a wide range of domains and for a variety of research questions” (Edelson, 2002, cited in Bakker, 2014:37). However, when it is applied to specific situations, as it is in this study, design-based research gives each research strategy the liberty of its uniqueness. “Every design project is by definition unique: the aim of a project is to create a product that does not exist yet … the uniqueness may relate to a particular detail [emphasis added] as well as to the overall concept; the tools, methods, resources and context [emphasis added] in which the project takes place will differ…” (Blessing & Chakrabarti, 2009:2). This research strategy is believed to be most fitting for a study such as the current one because of its emphasis on the setting or context, which corresponds with the nature of quality, which is itself context-specific. Design-based research also tailors interventions that are fit for the specific purpose under consideration (Design-Based Research Collective, 2003:6; Kelly, 2006:175; Tait, 1997:1). This is in addition to its allowance for flexible and iterative approaches in arriving at a result. Since contextual factors differ from place to place, a practical solution to a problem or challenge in one context rarely applies unchanged in another context (Tilya, 2003:63).

Design-based research, as applied in this study, involves the development of an instrument that measures student support service quality and the use of this instrument to determine students’ expectations and experiences of service quality, as well as to identify gaps between students’ expectations and experiences of service quality. In addition, the instrument is used to observe whether service quality measures are related to students’ satisfaction. This study accounted for “context-sensitivity” by being conducted in cross-border education, in an ODL environment, in Ethiopia. As the design-based research strategy allows for incorporating different disciplines and methods, this study employed a survey as its data collection strategy, along with accompanying statistical tools for data analysis such as Cronbach’s alpha, factor analysis, dependent t-tests, and regression analysis. Examples of studies that employed design-based research include Mafumiko (2006), who developed ways of improving the high school Chemistry curriculum in Tanzania, and Bakker (2004), who developed methods of teaching statistics to junior high school (grades 7 and 8) students.
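Two of the statistical tools named above, Cronbach’s alpha and the dependent t-test on expectation-experience gaps, can be illustrated with a short Python sketch. It is offered as an illustration only: the scores are hypothetical, the function names are invented for the example, and the gap is defined here as experience minus expectation, which is an assumption about direction rather than something stated in the text. The sketch returns the t statistic and degrees of freedom but does not compute a p-value.

```python
from math import sqrt
from statistics import mean, stdev, variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondents' item scores.

    alpha = k / (k - 1) * (1 - sum of item variances / variance of totals)
    """
    k = len(responses[0])
    item_variances = [variance([row[i] for row in responses]) for i in range(k)]
    total_variance = variance([sum(row) for row in responses])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


def paired_t(expectations, experiences):
    """Dependent (paired) t statistic on per-respondent gap scores.

    Gap is taken as experience minus expectation (assumed direction).
    Returns (mean gap, t statistic, degrees of freedom).
    """
    gaps = [experience - expectation
            for expectation, experience in zip(expectations, experiences)]
    n = len(gaps)
    standard_error = stdev(gaps) / sqrt(n)
    return mean(gaps), mean(gaps) / standard_error, n - 1


# Hypothetical item scores: 5 respondents x 3 items on one dimension.
item_scores = [
    [4, 5, 4],
    [3, 3, 2],
    [5, 5, 5],
    [2, 3, 2],
    [4, 4, 5],
]
alpha = cronbach_alpha(item_scores)

# Hypothetical dimension scores for 6 respondents.
expectations = [5, 5, 4, 5, 4, 5]
experiences = [3, 4, 4, 3, 2, 4]
mean_gap, t_statistic, df = paired_t(expectations, experiences)
```

Under the assumed direction, a negative mean gap indicates that experiences fell short of expectations; the t statistic would then be compared against the t distribution with the returned degrees of freedom.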

SPECIFIC RESEARCH STRATEGIES

The specific research strategies employed in this study correspond with the general design-based research strategy that has been discussed above. Bannan-Ritland’s (2003) study on the role of design in research can be regarded as a hands-on guide to implementing the different strategies employed in this study. In Bannan-Ritland’s study, the steps a researcher needs to follow in making use of design-based research were clearly highlighted. The four phases of the design-based research strategy comprise informed exploration, enactment, evaluation of local impact and evaluation of broader impact (Ulrich & Eppinger, 2000, cited in Bannan-Ritland, 2003:21).

Informed exploration is the first step of the design-based research strategy. It is geared towards identifying and defining the problem under investigation. It involves exploring and consulting possible sources like related literature and other documents that may give an idea of what is intended to be designed; in the case of this study, it is the development of an instrument. This phase is understood to be the foundation in building a new model (Bannan-Ritland, 2003:22; Sloane & Gorard, 2003:29).

The second stage, enactment, involves the development of a preliminary intervention that works as a base for further refinement. It involves multiple iterative steps of remoulding the design, which could take a considerable amount of time. Stakeholders like researchers, experts, teachers and parents may contribute by providing the necessary inputs (Bannan-Ritland, 2003:23). In this study, it comprised getting feedback from different groups of knowledgeable persons.

The third and fourth phases of design-based research involve evaluation. The third phase concentrates on an evaluation of local impact, which consists of two stages. The first stage can be taken to be a formative assessment that helps to secure feedback from the actual users of the design (Bannan-Ritland, 2003:23). The second stage aims at getting a response from a larger group of respondents and can be regarded as summative evaluation (ibid.). These two stages of the evaluation phase usually result in changes in the design that can bring about substantial transformation. Chapter 4 of this study elaborates on the processes as applied in this study: whereas the first stage of formative assessment was undertaken as a pilot test procedure, the second stage implied summative assessment and was carried out by administering the instrument to a larger sample.

The fourth phase of design-based research is the evaluation of broader impact. It is the phase where “publication or presentation of findings [is] seen as a closure event. [It also has] concerns related to the adoption (and adaptation) of researched practices and interventions” (Bannan-Ritland, 2003:23). In this phase, the final product of the design is applied to its intended purpose. Further research can continue from the outcome of the design (Bannan-Ritland, 2003:24) as “it often leads to products that are useful in educational practice because they have been developed in practice” (Bakker, 2014:38). In this study, the data set that resulted from an application of the final version of the instrument led to findings which lent themselves to practical interventions. The details of this last phase are covered in chapter 5 of the current study. In design-based research, it is recommended that the researcher should be more concerned about the parts of the final design that did not function very successfully. Consistently identifying failures in the design (in this study: the measuring instrument) assists in further refinement and improvement of the model (Sloane & Gorard, 2003:31).

Population

The concept “population” represents a defined set of persons, objects, items, or organisations, which constitute the major focus of the research. This is the group in which the researcher is interested and about which the researcher intends to make generalisations. All elements in a population must have some common characteristics to be categorised as a single group of interest. The population of any scientific investigation is the group on which inferences are ultimately based and from which the sample is drawn. It should be identified by the researcher before sample selection and data collection start (Babbie, 2013:134; Gay, et al., 2011:130; Saunders, et al., 2012:260). A population is classified into a target population and an accessible population. The target population is “the population to which the researcher would ideally [emphasis added] like to generalize study results” whereas an accessible population is “the population from which the researcher can realistically [emphasis added] select subjects” (Saunders, et al., 2012:130). Once the accessible population is clearly known, the researcher draws samples to accomplish the actual research. In the case of this study, the ideal population is all doctoral students enrolled in the UNISA-Ethiopia Centre in the academic year of 2014 whereas the real population is the 260 students who responded by filling out the instrument that was developed in the study.

Sampling

Sampling is a technique for selecting a sub-section of a population of interest, whereas the concept “sample” refers to a “group of participants from whom data are collected”. Methods of sampling can broadly be classified into probability and non-probability sampling (McMillan, 2012:95; Saunders, et al., 2012:130).

Probability sampling procedures give every member of the population a known (and usually equal) chance of being selected. Commonly employed probability sampling techniques include simple random, stratified, and cluster sampling. In non-probability sampling, on the other hand, the chance of each member of the population being selected is not known. Non-probability sampling techniques include convenience sampling, quota sampling, purposive sampling and snowballing (Saunders, et al., 2012:140-41).
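The contrast between probability and non-probability sampling can be made concrete with a short Python sketch. Everything in it is hypothetical: the population of 20 student records, the two strata, the sampling fraction and the set of students who happened to be reachable are invented for illustration and do not describe the sample in this study.

```python
import random


def simple_random_sample(population, n, seed=0):
    """Probability sampling: every unit has the same chance of selection."""
    return random.Random(seed).sample(population, n)


def stratified_sample(population, stratum_of, fraction, seed=0):
    """Probability sampling: draw the same fraction at random from each stratum."""
    rng = random.Random(seed)
    strata = {}
    for unit in population:
        strata.setdefault(stratum_of(unit), []).append(unit)
    sample = []
    for members in strata.values():
        k = max(1, round(len(members) * fraction))
        sample.extend(rng.sample(members, k))
    return sample


def convenience_sample(population, is_available):
    """Non-probability sampling: take whoever is reachable and willing."""
    return [unit for unit in population if is_available(unit)]


# Hypothetical population: (student id, stratum label) pairs.
population = [(i, "A" if i <= 12 else "B") for i in range(1, 21)]

srs = simple_random_sample(population, 5)
stratified = stratified_sample(population, lambda unit: unit[1], 0.25)

# Hypothetical set of students who could be reached and agreed to take part.
reachable_ids = {2, 3, 7, 15, 18}
convenience = convenience_sample(population, lambda unit: unit[0] in reachable_ids)
```

In the first two functions the selection chance of each unit is fixed by the design, whereas in the third it depends on who happens to be reachable, which is why generalisation from a convenience sample calls for caution.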

From the population of doctoral students registered at the UNISA-Ethiopia campus, selection of participants in this study was done by using the convenience sampling technique “in which respondents [were] chosen based on their convenience and availability” (Babbie, 1990, cited in Creswell, 2009:148). The students were reached telephonically and asked for their willingness to participate in the study. Once they confirmed their willingness, they were asked for their private (non-UNISA) e-mail addresses (this was done for the sake of confidentiality). The instrument was sent to each of them at these addresses, and data were collected when respondents forwarded the filled-out instrument to the researcher’s private e-mail address. Convenience sampling is often criticised for a perceived lack of generalisability to a larger population (Babbie, 2013:128; Creswell, 2009:148; McMillan, 2012:103; Saunders, et al., 2012:141).

However, the advice of McMillan (2012:104) was followed “not to dismiss the findings but to limit them to the type of subjects in the sample”. A study by Sousa, et al. (2004:130), on the other hand, argues that findings from respondents chosen through convenience sampling can be taken as indicative of the target population. These authors cited Cochran (1977) who “… suggests that known data from a population can be compared with data from a sample in terms of average variability to determine whether there are similarities between the two data sets” (Sousa, et al., 2004:130).

TABLE OF CONTENTS

DECLARATION

DEDICATION

ACKNOWLEDGEMENTS

ABSTRACT

ABBREVIATIONS/ACRONYMS

LIST OF TABLES

LIST OF FIGURES

CHAPTER 1: INTRODUCTION

1.1 INTRODUCTION

1.2 BACKGROUND OF THE STUDY

1.3 PROBLEM STATEMENT

1.4 AIM AND OBJECTIVES OF THE STUDY

1.5 SIGNIFICANCE OF THE STUDY

1.6 PRELIMINARY EXPLANATION OF CONCEPTS

1.7 RESEARCH DESIGN AND METHODS

1.8 ETHICAL CONSIDERATIONS

1.9 CHAPTER BREAKDOWN

1.10 CHAPTER SUMMARY

CHAPTER 2: QUALITY AND DIMENSIONS OF STUDENT SUPPORT SERVICES

2.1 INTRODUCTION

2.2 CONTEXTUAL BACKGROUND

2.3 MEANING AND HISTORY OF DISTANCE EDUCATION

2.4 STUDENT SUPPORT SERVICES IN DISTANCE EDUCATION

2.5 QUALITY AND ITS MEANING IN THE CONTEXT OF HIGHER EDUCATION

2.6 SERVICE QUALITY

2.7 MEASUREMENT AND DIMENSIONS OF SERVICE QUALITY

2.8 THEORETICAL FRAMEWORK: THE GAPS MODEL

2.9 CHAPTER SUMMARY

CHAPTER 3: RESEARCH DESIGN AND METHODS

3.1 INTRODUCTION

3.2 VALIDITY AND RELIABILITY

3.3 RESEARCH DESIGN

3.4 RESEARCH PARADIGMS

3.5 GENERAL RESEARCH STRATEGY

3.6 SPECIFIC RESEARCH STRATEGIES

3.7 CHAPTER SUMMARY

CHAPTER 4: DEVELOPMENT OF AN INSTRUMENT

4.1 INTRODUCTION

4.2 THE PHASE OF INFORMED EXPLORATION

4.3 THE PHASE OF ENACTMENT

4.4 THE EVALUATION PHASE: LOCAL IMPACT

4.5 CHAPTER SUMMARY

CHAPTER 5: EVALUATION: BROADER IMPACT

5.1 INTRODUCTION

5.2 PROFILES OF RESPONDENTS OF THE STUDY

5.3 EXTENT OF STUDENTS’ EXPECTATIONS OF STUDENT SUPPORT SERVICE QUALITY

5.4 EXTENT OF STUDENTS’ EXPERIENCES OF STUDENT SUPPORT SERVICE QUALITY

5.5 GAPS IN STUDENT SUPPORT SERVICE QUALITY

5.6 RELATIONSHIP BETWEEN STUDENT SUPPORT SERVICES AND STUDENTS’ SATISFACTION

5.7 RELATIVE WEIGHT (CONTRIBUTION) OF THE FIVE DIMENSIONS

5.8 PEER COLLABORATION

5.9 CHAPTER SUMMARY

CHAPTER 6: SUMMARY, DISCUSSION, CONCLUSIONS AND RECOMMENDATIONS

6.1 INTRODUCTION

6.2 SUMMARY AND DISCUSSION OF FINDINGS

6.3 CONCLUSIONS

6.4 RECOMMENDATIONS

6.5 CONTRIBUTIONS OF THE STUDY

6.6 THE RESEARCH PROCESS IN RETROSPECT

6.7 IDEAS FOR FURTHER RESEARCH

6.8 FINAL WORD

REFERENCES
