
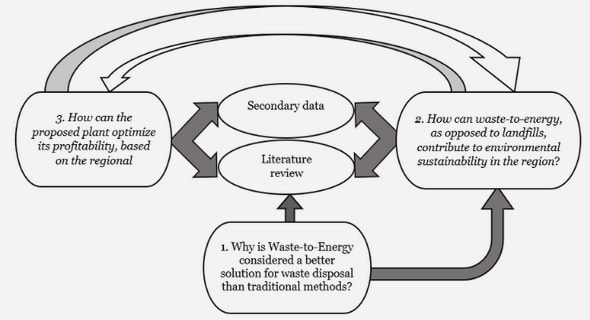

Monetary macroeconomic theory

The theoretical landscape in monetary macroeconomics is rich and burgeoning. Currently, New Keynesian Dynamic Stochastic General Equilibrium (NKDSGE) models are the reference tools to think about business cycles and test alternative policies. The origin of NKDSGE models dates back to Real Business Cycle (RBC) models (Kydland and Prescott, 1982; Long and Plosser, 1983) and, farther back, to the growth model introduced by Solow (1956) and extended by Lucas (1972, 1975, 1977), although the focus is admittedly on short-run horizons. They rely on microfoundations to overcome the Lucas (1976) critique, and feature intertemporal constrained optimal choices under varying degrees of uncertainty.

In a nutshell, NKDSGE models add frictions and inefficiencies to the core structure of RBC models, so as to sidestep monetary neutrality and carve out a role for monetary policy. In their simplest form, NKDSGEs summarise aggregate supply and demand in two linearised, dynamic, and forward-looking equations. The supply side incorporates a Phillips curve that depends on inflation expectations and current economic activity. Aggregate demand is condensed in an Euler equation, which relates current consumption to interest rates and future consumption. Finally, if the fiscal side of government is assumed away, the model is completed with a third equation that sets the path for interest rates, in essence a representation of the central bank. Most often, the stylised economy is a cashless limit case; otherwise, money demand is introduced with real balances entering the utility function.
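For reference, a textbook version of the three equations described above can be sketched as follows. The notation is an assumption borrowed from common textbook usage, not necessarily the one adopted in the dissertation: $x_t$ is the output gap (equal to consumption in deviation from its natural level in an economy without investment), $\pi_t$ is inflation, $i_t$ is the nominal policy rate in deviation from steady state, $r^n_t$ is the natural real rate, $\beta$ is the discount factor, $\kappa$ is the slope of the Phillips curve, $\sigma$ is the intertemporal elasticity of substitution, $\phi_\pi$ is the policy response to expected inflation, and $\varepsilon_t$ is a monetary policy shock.

```latex
\begin{align}
  \pi_t &= \beta\, \mathbb{E}_t \pi_{t+1} + \kappa\, x_t
        && \text{(Phillips curve)} \\
  x_t   &= \mathbb{E}_t x_{t+1}
           - \sigma \left( i_t - \mathbb{E}_t \pi_{t+1} - r^n_t \right)
        && \text{(Euler equation)} \\
  i_t   &= \phi_\pi\, \mathbb{E}_t \pi_{t+1} + \varepsilon_t
        && \text{(interest rate rule)}
\end{align}
```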

This framework presents an appealing flexibility but also a number of predictions at odds with empirical evidence. Especially when the policy rate is pegged (e.g., at the Zero Lower Bound, ZLB), NKDSGE models overstate the current effects of policy changes that lie far in the future. For example, the current effect of an announced policy rate cut increases with its distance into the future. Moreover, and key to correctly placing the contribution of this dissertation, the relation governing the path of interest rates turns out to be crucial in disciplining the model characterisation. These paradoxes are often addressed by reworking the rational expectations hypothesis: by assuming rational inattention (Sims, 2010), bounded rationality (Gabaix, 2016), or positing an explicit cognitive process (Garcia-Schmidt and Woodford, 2019). The result is that current decisions are much less responsive to changes occurring far into the future. The dynamics of an otherwise standard NKDSGE change radically and, in particular, the model is not subject to indeterminacy at the zero lower bound.
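The growing effect of distant announcements can be illustrated with a minimal sketch. It assumes the textbook Euler equation and Phillips curve, a nominal rate pegged at its steady-state value in every period except the one in which the announced cut takes place, and an economy back at steady state afterwards. The function name and the calibration (`sigma`, `beta`, `kappa`) are illustrative assumptions, not values taken from the dissertation.

```python
def impact_of_announced_cut(horizon, cut=1.0, sigma=1.0, beta=0.99, kappa=0.1):
    """Return the period-0 output gap and inflation after an announced rate cut.

    The nominal rate is lowered by `cut` in period `horizon` only and is pegged
    at its steady-state value in every other period; the economy is assumed to
    be back at steady state from period `horizon + 1` onwards.
    """
    x_next, pi_next = 0.0, 0.0  # steady state after the cut
    # Solve backwards from the period of the cut to period 0.
    for t in range(horizon, -1, -1):
        i_t = -cut if t == horizon else 0.0
        x_t = x_next - sigma * (i_t - pi_next)  # Euler equation
        pi_t = beta * pi_next + kappa * x_t     # Phillips curve
        x_next, pi_next = x_t, pi_t
    return x_next, pi_next


if __name__ == "__main__":
    for h in (0, 4, 8, 12):
        x0, pi0 = impact_of_announced_cut(h)
        print(f"cut announced {h} quarters ahead: x0 = {x0:.2f}, pi0 = {pi0:.2f}")
```

Under these assumptions, each additional quarter of delay adds the expected inflation of the following period to today's output gap, so the period-0 responses grow with the horizon instead of fading.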

In a feedback rule such as that often postulated, how intensively the interest rate reacts to expected inflation is a key factor for equilibrium determinacy. If the central bank moves the policy rate less than one-to-one with inflation expectations, the economy displays nominal and real indeterminacy. This implies that any level of prices and output is consistent with the model, and hence negligible shocks can lead to explosive paths. In this light, the ZLB episodes in the recent past proved damaging for the workhorse model, and motivated numerous attempts to fix such puzzles. Liquidity factors were among these fixes, but they were seldom introduced in simple and compact settings.
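The determinacy condition can be checked numerically in a minimal sketch. It assumes the textbook three-equation model with a purely forward-looking rule, $i_t = \phi_\pi \mathbb{E}_t \pi_{t+1}$, and an illustrative calibration; none of the names or values below come from the dissertation. Substituting the rule into the Euler equation and Phillips curve gives $z_t = A\,\mathbb{E}_t z_{t+1}$ with $z_t = (x_t, \pi_t)'$. Since both variables are non-predetermined, a unique bounded solution requires both eigenvalues of $A$ to lie inside the unit circle.

```python
import cmath


def is_determinate(phi_pi, sigma=1.0, beta=0.99, kappa=0.1):
    """Check determinacy of the textbook model under i_t = phi_pi * E_t[pi_{t+1}]."""
    a = sigma * (phi_pi - 1.0)
    # z_t = A E_t[z_{t+1}], with z_t = (x_t, pi_t)
    a11, a12 = 1.0, -a
    a21, a22 = kappa, beta - kappa * a
    trace = a11 + a22
    det = a11 * a22 - a12 * a21
    # Eigenvalues of a 2x2 matrix from its trace and determinant.
    root = cmath.sqrt(trace * trace - 4.0 * det)
    eigenvalues = ((trace + root) / 2.0, (trace - root) / 2.0)
    # Two jump variables: both eigenvalues of A must be inside the unit circle.
    return all(abs(lam) < 1.0 for lam in eigenvalues)


if __name__ == "__main__":
    for phi in (0.5, 0.9, 1.5, 2.0):
        print(f"phi_pi = {phi}: determinate = {is_determinate(phi)}")
```

In this sketch a response below one fails the check, in line with the text. As a side note specific to this forward-looking rule, extremely large responses also fail it, so the result should be read as a property of the assumed specification only.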

A complementary approach addresses the core structure of NKDSGEs and the role of money and liquidity. As previously pointed out, NKDSGEs build on an RBC kernel, and hold on to the real dimension of economic forces (Kocherlakota, 2016). Thus, studies like Canzoneri et al. (2011), Diba and Loisel (2021), and Michaillat and Saez (2021) introduce money or bonds in various forms to circumvent a set of puzzles or paradoxes implied by NKDSGEs. In this vein, the recent contributions by Calvo (2016), which put issues related to money and liquidity at centre stage in macroeconomic models, are particularly relevant.

Chapter 4, titled "A simple model with liquidity", is part of this effort to amend NKDSGEs, but has the advantage of keeping close contact with the workhorse model while proposing minor deviations. Such deviations, though, suffice to relax the requirement of an active and aggressive central bank: the model displays nominal and real determinacy even when the interest rate is nearly unresponsive to inflation expectations. The chapter offers a systematic study of the interplay between liquid assets and monetary policy from a reduced-form standpoint. As cash and liquid bonds (or equivalently bank account deposits) provide transactional services, and hence utility, their inclusion in the utility function of the representative agent is a straightforward option to model different forms of liquidity.

The central bank, in contrast to the workhorse NKDSGEs, operates with two policy instruments. First, it sets the total level of nominal liquidity, defined as the sum of liquid bonds and money in circulation. Second, it adjusts the interest rate on the liquid bond in reaction to expected inflation, rather than the nominal interest rate on the illiquid bond that serves intertemporal smoothing. Crucially, the resulting set of equilibrium conditions is modified in two ways: first, the Euler equation is augmented with a money wedge that alters its usual form; second, total liquidity demand is allocated between money and liquid bonds, both in real terms. Inflation, besides imparting movements in interest rates, also influences total real liquidity. This feedback loop does not compromise the behaviour of the economy, which reacts to technological and monetary policy shocks in line with the standard NKDSGE.

Relaxing the requirement of an active feedback rule allows exploring how aggregates are affected by liquidity factors and a potentially destabilising monetary policy. Specifically, the model can be used to rerun a liquidity dry-up similar to that of the 2008 Global Financial Crisis (GFC), when several assets suffered liquidity losses. It turns out that an active central bank, swiftly adjusting the policy rate, can significantly speed up the convergence of output, at the cost of lower levels of real liquidity and stranded liquid bonds. Symmetrically, a passive monetary stance prolongs the contraction of output and also struggles to revive inflation, while preserving real liquidity and bond holdings.

A passive monetary policy stance also affects aggregate dynamics, as the chapter illustrates. First off, the mere inclusion of liquidity induces a higher level of inertia in the observed aggregates. This inertia is still sizeable in comparison with much richer, detailed NKDSGEs that include trend inflation or sophisticated financial frictions and nominal indexation. Focusing on inflation, the model shows by means of stochastic simulations that a less responsive central bank induces more lag-dependency, as the liquidity dry-up exercise suggests.

The chapter also provides an alternative setup, where a mix of liquid assets is used to purchase consumption goods, in the vein of cash-in-advance models. Such an alternative channel does not alter the fundamental insights of the reduced-form model, but rather reinforces the role of liquidity, especially since it significantly affects monetary policy.

All in all, this chapter offers an account of the relevance of liquidity conditions in the economy and its implications for policy. As the toolkit of unconventional policies shows, policymakers have clear considerations for financial matters in general, and liquidity provision in particular. The model, keeping the core structure of the baseline NKDSGEs, allows for promising extensions: many of the additional blocks developed for existing NKDSGEs can be included in its setup in a relatively easy fashion.

This is hardly possible in other approaches that abandon fundamental assumptions that have characterised models in the past 40 years, such as rational expectations. This does not imply that new ways of modelling expectation formation or other modifications of the behavioural assumptions of the traditional model are not worth exploring. By focusing on the monetary-financial side of the models and introducing concepts, such as liquidity, that are well-founded in microeconomic and financial theories (Eggertsson and Krugman, 2012; Holmström and Tirole, 2011), the model discussed in the chapter lends itself as a bedrock for further extensions and refinement, particularly for analysing fiscal policy and banking.

Nevertheless, the most appealing way forward lies in a full endogenisation of total nominal liquidity management by the central bank. This would provide monetary policy with a full-fledged second instrument, replicating more closely the actual conduct of Quantitative Easing (QE) in recent years.

In light of the considerations presented above, hitting the ZLB in 2008 and 2020 while also implementing liquidity policies provided extremely valuable data points to bring the predictions of NKDSGEs to the empirical test. The next section overviews the most relevant contributions on the empirical side of monetary macroeconomics, and places the first and third chapters in such literature.

Empirical Monetary Macroeconomics

The tools traditionally used to study macroeconomic empirical questions are drawn from the time series toolkit. This includes reduced-form or Structural Vector Autoregressive (SVAR) models, as well as less recent Dynamic Simultaneous Equations models. Restricting the focus to monetary issues, (S)VARs are the reference tools, since they require simple but stringent assumptions, and NKDSGEs can often be recast in SVAR form. In the last two decades, in particular, Bayesian methods further pushed the frontier of empirical investigation, providing powerful tools to estimate rather complex models with fairly transparent assumptions.

With respect to monetary policy, it is useful to distinguish two approaches: limited-information and full-information estimation. The latter typically involves a system of structural relations and is informed by a theoretical apparatus, either on the restrictions (VARs) or on the mutual influence of shocks and observable aggregates. The former, instead, zooms in on a particular macroeconomic relation and assesses its reduced-form validity in isolation from other forces, although with possibly complex modelling assumptions.

Taylor (1993) monetary policy rules and Phillips (1958) curves are especially salient examples of the limited information approach: both relations are intimately intertwined and are crucial components of the modern monetary framework.

The next sections offer a broad overview of the current state of affairs for monetary policy rules and Phillips curves in relation to the first and third chapters of this dissertation.

Monetary policy rules

Feedback rules are currently the first-line instrument for monetary policy. Therefore, correctly identifying how central banks operate in the economic environment is essential for policy predictability and transparency. In the same vein, correct estimates of the monetary policy rule are decisive in evaluating how effectively central banks keep inflation near its target.

Eyeballing actual data as pictured in Figure 1.2, it is possible to appreciate the different phases the US economy has traversed since World War II. The figure presents time series for the Federal Funds Rate (FFR), headline Consumer Price Index, and an ex-post measure of economic activity, the output gap. Until the early 1970s, inflation was relatively stable under 5% and not particularly volatile. Similarly, the FFR followed a smooth upward path, tracking the apparent trend of inflation. In contrast, the dynamics of economic activity appear relatively more volatile, with large swings in negative and positive territory. Then, from the 1970s, inflation embarks on an upward, decade-long trend, closely tracked by the policy rate. The output gap still displays large swings, but mainly in negative territory, signalling an economy below its full potential.

A break seems to take place around the beginning of the 1980s: the FFR shoots up, the output gap signals a recession, and a disinflation takes place. Indeed, the inflation rate returns to previous levels with reduced volatility, while the FFR moves more smoothly and responds both to inflation and economic activity. The latter also shows decidedly less volatility than in previous periods, with longer upward trends recovering from mild recessions. This period, often referred to as the Great Moderation, indeed starkly contrasts with previous years: less volatile in general, with lower inflation. The switch corresponds roughly to the appointment of Volcker as Chairman of the Federal Reserve, suggesting that he brought about a discrete policy break.

The later decades contrast with the Great Moderation, too, but along different dimensions. In particular, at the onset of the 2008 Global Financial Crisis, the Fed pushed the policy rate to the ZLB, where it remained for several years afterwards. Yet, inflation did not spiral out of control as during the 1970s. The key difference in this late part of the sample is summarised by Figure 1.3: since mid-2008 the Fed inflated its balance sheet in order to inject unprecedented amounts of liquidity into the financial system. This large balance sheet expansion was composed of several distinct operations and facilities, but is usually referred to as Quantitative Easing policy. Interestingly, similar policies were deployed by many other major central banks, including the European Central Bank, the Bank of England, and the Bank of Japan.

The effects of such policies, though, are not yet well understood, even if they signal two relevant facts: first, interest rate setting is not the only policy tool at central banks’ disposal; second, liquidity, broadly speaking, is a relevant factor for inflation control and economic stability, at least in central banks’ framework. While chapter 4 explores theoretically the role of liquidity in NKDSGEs, chapter 3 takes an empirical approach to liquidity, in the narrow financial sense, and the monetary policy rule.

The modern literature on monetary policy rule estimation sprang from the seminal paper of Taylor (1993), which in a simple setting characterised the reaction function of the Fed. In that contribution, the specification takes to the empirical test the then-recent theoretical insights on policy credibility, the effectiveness of rule-based policies vis-à-vis discretion, and the focus on interest rates rather than money growth. Taylor postulates a feedback rule that implements a strong response of the Federal Funds Rate to the year-on-year inflation rate, calibrated to 1.5. Assuming a response to output of about 0.5 and a long-run target of 2% for inflation, this simple rule fit the actual behaviour of the Fed very well over the period 1983Q1-1992Q3. The result, making direct contact with the nascent research on NKDSGEs, sparked a lively debate on policy rules, so much so that monetary policy rules of this form are often referred to as Taylor rules.

Alongside a rich theoretical discussion, Taylor (1993) kick-started a whole strand of empirical literature on the estimation of policy rules. The early focus of this research was to understand how policy regimes changed in the US, especially to ascertain the prevailing monetary regime during the high inflation period of the 1970s. Clarida, Gali, and Gertler (2000) is one of the main contributions to this literature, setting the ground for the methods employed and the ensuing narrative about policy stance. In a nutshell, estimating policy rules is a complex task because of intrinsic endogeneity: when the error terms are correlated with the regressors, standard OLS asymptotics do not hold and the estimated parameters are biased. To overcome these issues, Clarida, Gali, and Gertler (2000) deploy a GMM-IV approach, taking lags of endogenous variables as instruments for their expected values. Moreover, they pioneer a split-sample strategy, which consists in estimating the policy rule on two subsamples: the splitting point corresponds to the start of Volcker’s first chairmanship. The approach is palatable enough as it makes contact with conventional wisdom and anecdotal evidence at the time.

The findings, in sum, are that the first period sees an accommodative central bank, corresponding to high inflation and great volatility. The key estimate for the reaction to expected inflation is well below one: drawing from the theoretical apparatus, this policy stance engenders sunspot equilibria and severe indeterminacy. Over the second subsample, initiated by Volcker’s disinflation, the Fed is reported to react strongly to expected inflation, thus ruling out indeterminacy and effectively keeping inflation under control. This analysis, taken together, informs much of the established consensus on the conduct of monetary policy in the US; it is also open to some criticisms. For instance, instrumenting expected inflation by its lagged values leads to weak instruments, since the inflation rate is often best described by white noise. Moreover, two choices turned out to be particularly consequential: the use of revised data, and the sensible but somewhat exogenous choice of the subsamples’ timing.
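As a reference point for the rules discussed in this section, the calibrated rule of Taylor (1993) can be written as a one-line function. The sketch below is illustrative: the function and parameter names are assumptions, while the default values (responses of 1.5 to inflation and 0.5 to the output gap, a 2% inflation target, and a 2% neutral real rate) follow Taylor's original calibration.

```python
def taylor_rule(inflation, output_gap, target=2.0, neutral_real_rate=2.0,
                phi_pi=1.5, phi_y=0.5):
    """Policy rate (in %) prescribed by a Taylor-type rule.

    `inflation` is year-on-year inflation in %, `output_gap` is the percentage
    deviation of output from potential.
    """
    return (neutral_real_rate + target
            + phi_pi * (inflation - target)
            + phi_y * output_gap)


if __name__ == "__main__":
    # Inflation on target and a closed output gap give the neutral nominal rate.
    print(taylor_rule(inflation=2.0, output_gap=0.0))
    # One extra point of inflation raises the prescribed rate by phi_pi points.
    print(taylor_rule(inflation=3.0, output_gap=0.0))
```

A coefficient `phi_pi` above one means the prescribed nominal rate rises more than one-to-one with inflation, so the real rate increases when inflation does. This is the "active" stance referred to throughout the section.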

Subsequent research improved on both aspects. Reproducing as closely as possible the information set of the policy-maker at the time of each decision is at odds with revised data, which undergo corrections as more precise information becomes available. In this sense, Orphanides (2001) improves on Clarida, Gali, and Gertler (2000) by collecting real-time data on inflation and output gap forecasts available to the Federal Open Market Committee (FOMC) at each meeting, drawing from the Greenbook database. The timing bias is sizeable in the estimates of policy rules, to the extent that a Taylor rule estimated over the period 1987Q1-1993Q4 with real-time data and forecasts pictures a passive stance for the Fed, at least at forecast horizons shorter than three quarters. In a follow-up contribution, Orphanides (2004) replicates the split-sample exercise of Clarida, Gali, and Gertler (2000) employing only real-time data. The two regimes in place before and after Volcker’s appointment turn out to be more similar than expected: both subsamples consistently produce estimates of a proactive reaction to expected inflation, which downplays its stabilising power. The findings with real-time data actually point to excessive responsiveness to output during the 1970s, hinting at policy-makers who were overconfident in their ability to smooth business cycle fluctuations. These contributions relied essentially on OLS or Non-Linear Least Squares (NLLS), without instrumenting for expected inflation but rather using actual forecasts from the FOMC staff. The use of real-time data, though, does not entirely solve the endogeneity issue, as forecasts are usually conditional on the future path of the policy rate, which risks polluting the estimates.
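The endogeneity problem discussed above can be illustrated on simulated data. The sketch below is a stylised, single-regressor example and not the GMM estimator of Clarida, Gali, and Gertler (2000): a shock common to the regressor and the error term biases OLS, while a valid instrument recovers the true slope. The data-generating process, the function names, and the parameter values are all assumptions made for illustration.

```python
import random


def mean(values):
    return sum(values) / len(values)


def covariance(a, b):
    ma, mb = mean(a), mean(b)
    return sum((ai - ma) * (bi - mb) for ai, bi in zip(a, b)) / len(a)


def ols_slope(x, y):
    """OLS slope of y on x (with intercept)."""
    return covariance(x, y) / covariance(x, x)


def iv_slope(x, y, z):
    """Simple IV slope of y on x, using z as the instrument for x."""
    return covariance(z, y) / covariance(z, x)


def simulate(n=20000, true_slope=1.5, seed=0):
    """Simulate a regressor that is correlated with the regression error."""
    rng = random.Random(seed)
    x, y, z = [], [], []
    for _ in range(n):
        z_i = rng.gauss(0.0, 1.0)           # instrument, unrelated to the error
        common = rng.gauss(0.0, 1.0)        # shock hitting regressor and error
        x_i = z_i + common                  # endogenous regressor
        u_i = common + rng.gauss(0.0, 1.0)  # error correlated with x_i
        x.append(x_i)
        y.append(true_slope * x_i + u_i)
        z.append(z_i)
    return x, y, z


if __name__ == "__main__":
    x, y, z = simulate()
    print(f"OLS slope: {ols_slope(x, y):.3f}")
    print(f"IV slope:  {iv_slope(x, y, z):.3f}")
```

In this setup the OLS estimate drifts away from the true slope of 1.5 because of the common shock, whereas the IV estimate stays close to it. The direction and size of the bias in an actual policy rule depend on the data and need not match this example.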

The issue of a historically informed, but exogenous subsampling strategy has been tackled with a variety of econometric approaches. Among these, Time-Varying Parameters (TVP) methods yielded insightful results, together with adaptations of Markov Switching estimates. Boivin (2006) and Kim and Nelson (2006) present limited information results based on TVP estimates. Kim and Nelson (2006) depart from the GMM-IV apparatus to venture into dealing with TVP models with endogenous regressors and heteroskedasticity in the shocks. Summing up the main takeaways, they exploit standardised forecast errors in the IV step to do away with endogeneity and correct the bias it brings. The resulting estimates paint a yet more nuanced picture. There appear to be three distinct phases in the post-WWII Fed: in the first phase, from 1970 to 1975, the Fed’s reaction to inflation was not statistically different from one; during the second phase, from about 1975 through the early 1980s, the reaction ticked up slightly, though it was still not significantly different from one. By contrast, the final regime, which covers at least the 1980-2002 period, features a proactive stance for the Fed, but with increasing uncertainty around the point estimate. This uncertainty is possibly due to the low volatility of inflation, which further weakens the power of lags as instruments.

Finally, Boivin (2006) combines heteroskedasticity-robust TVP with real-time data, bringing together the contributions of Kim and Nelson (2006) and Orphanides (2004). Contrary to Kim and Nelson (2006), Boivin finds an active stance towards inflation at the beginning of the 1970s and from about 1982, with a steep decrease in between. The transition back to a proactive stance, in particular, does not look discrete, while uncertainty still increases from the 1990s onwards.

A later strand of literature took a similar approach, but relied on discrete regimes instead of smooth transitions. This is the case for Davig and Leeper (2006, 2011) and Murray, Nikolsko-Rzhevskyy, and Papell (2015). The former, in particular, jointly consider fiscal and monetary policy rules, focusing on active and passive Markov regimes for both. While the combination of fiscal and monetary rules nuances the general picture, they find evidence of an active stance over the periods 1979-1990 and 1995-2001, and a passive stance during 1949-1979, 1990-1995, and from 2001 onwards, in part confirming the early results from Clarida, Gali, and Gertler (2000). At the aggregate level, each state turns out to be particularly persistent, despite the frequent switches.

Following this trend of endogenous policy switches, Murray, Nikolsko-Rzhevskyy, and Papell (2015) apply the Markov switching tooling developed by Hamilton (1989) to the Taylor rule alone. Mixing real-time data and regime switching, they confirm in a limited information exercise the likely existence of multiple regimes within the usual periodisation. The distribution of such regimes contradicts the typical narrative built on Clarida, Gali, and Gertler (2000): the Fed’s stance was virtually always active during the episodes of high inflation. In particular, the Fed proactively counteracted expected inflation in the periods 1965Q4-1972Q4, 1975Q1-1979Q3, and from 1985Q2 until the end of their sample.
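The core of the Markov switching approach is the recursive filter of Hamilton (1989), which updates the probability of each regime as new observations arrive. The sketch below implements it for a deliberately simplified two-regime rule, $y_t = b_{s_t} x_t + e_t$, with known parameters. It is an illustration under stated assumptions, not the specification estimated in the papers above or in Chapter 3; in practice the slopes, variance, and transition probabilities are estimated, typically by maximum likelihood. All names are hypothetical.

```python
import math


def normal_pdf(value, mean, std):
    return math.exp(-0.5 * ((value - mean) / std) ** 2) / (std * math.sqrt(2.0 * math.pi))


def hamilton_filter(y, x, slopes, std, p_stay):
    """Filtered regime probabilities for y_t = slopes[s_t] * x_t + e_t.

    `p_stay[j]` is the probability of remaining in regime j from one period
    to the next. Returns the list of filtered probabilities and the
    log-likelihood of the sample.
    """
    # Transition matrix: P[i][j] = Pr(s_t = j | s_{t-1} = i)
    P = [[p_stay[0], 1.0 - p_stay[0]],
         [1.0 - p_stay[1], p_stay[1]]]
    # Initialise at the ergodic distribution of the two-state chain.
    denom = 2.0 - p_stay[0] - p_stay[1]
    prob = [(1.0 - p_stay[1]) / denom, (1.0 - p_stay[0]) / denom]
    filtered, loglik = [], 0.0
    for y_t, x_t in zip(y, x):
        # Prediction step: regime probabilities before observing y_t.
        predicted = [sum(prob[i] * P[i][j] for i in range(2)) for j in range(2)]
        # Update step: weight each regime by the likelihood of y_t.
        joint = [predicted[j] * normal_pdf(y_t, slopes[j] * x_t, std) for j in range(2)]
        lik = sum(joint)
        prob = [value / lik for value in joint]
        filtered.append(prob)
        loglik += math.log(lik)
    return filtered, loglik


if __name__ == "__main__":
    # Made-up data: a passive rule (slope 0.5) followed by an active one (1.5).
    x = [3.0] * 40
    y = [0.5 * 3.0] * 20 + [1.5 * 3.0] * 20
    probs, _ = hamilton_filter(y, x, slopes=(0.5, 1.5), std=0.5, p_stay=(0.95, 0.95))
    print(f"Pr(active) at t=19: {probs[19][1]:.3f}, at t=39: {probs[39][1]:.3f}")
```

The appeal of the method for policy rules is visible even in this toy case: the dates of the regime change are not imposed by the researcher but inferred from how well each regime explains the observed policy rate.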

Chapter 3 builds on this approach and augments the baseline Taylor rule specification to account for liquidity factors, which proved relevant in actual policymaking.

The inclusion of financial stress indexes was previously explored in the monetary policy literature, but the role of liquidity was overlooked until 2008. Some notable exceptions are Baxa, Horváth, and Vašíček (2013) and Istrefi, Odendahl, and Sestieri (2020), which relate financial stability concerns to policy decisions: the former by testing a battery of financial indexes, the latter by parsing information from official minutes. The set of unconventional policies included a great deal of liquidity management: one of the main concerns at the Fed was to unlock the financial markets and reboot trades. This concern is evident in the notes that introduced each policy innovation in the wake of the GFC: the AMLF, CPFF, MMIFF, PDCF, TAF, and TSLF notes all mention liquidity as the target for such policies. Extending the traditional Taylor rule to account for the role of financial markets essentially boils down to proxying the state of liquidity in the financial system.

Including liquidity is reasonable in light of policy developments, but its empirical counterpart is particularly thorny. Liquidity is a rather ephemeral feature of assets. For one, it hinges on the trade side one takes: an asset in high demand is easy to sell, therefore liquid; conversely, it is hard to buy, thus illiquid. A narrower operative definition of liquidity rests on the discount that is necessary to bear when transforming an asset into cash. To overcome such issues, the chapter proposes to capture a proximate measure of liquidity via spreads on largely traded, low risk assets, so as to pick a measure that is devoid of risk and endogeneity as much as possible. These measures are two spreads between extremely safe assets, i.e. US Treasury securities, and less safe ones. Precisely, the less safe assets used in the estimates are BAA corporate bonds, through their average yield, and the Standard & Poor’s 500 index, through its quarterly returns. Maturity is a relevant factor in determining the premium over safer assets. To account for this timing factor, the spreads are computed on Treasury securities of comparable maturity, either ten years or three months.
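The construction of such a proxy amounts to a simple difference between two series observed at the same dates. The sketch below is a minimal illustration with made-up numbers; the function name and the figures are hypothetical and do not reproduce the data or the transformations used in Chapter 3.

```python
def liquidity_spread(less_safe, treasury):
    """Spread, in percentage points, of a less safe asset over a Treasury
    security of comparable maturity, observation by observation."""
    if len(less_safe) != len(treasury):
        raise ValueError("both series must cover the same dates")
    return [risky - safe for risky, safe in zip(less_safe, treasury)]


if __name__ == "__main__":
    # Made-up quarterly observations, in percent.
    baa_yield = [6.0, 7.5, 9.0]
    treasury_10y = [4.0, 4.0, 3.5]
    print(liquidity_spread(baa_yield, treasury_10y))
```

A widening spread in this sketch would be read as a deterioration of liquidity conditions, which is the signal the augmented policy rule is meant to pick up.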

The inclusion of such measures of liquidity in the policy rule, thus, captures the role of financial concerns in actual policymaking. The intuition builds partially on leaning-against-the-wind literature (Svensson, 2017b), but elicits the input set of the Fed with respect to liquidity concerns.

The main results of the chapter follow from estimating a Markov switching model with liquidity-augmented Taylor rules. Such an empirical model has the advantage of relying solely on information contained in the data to endogenise regime changes. The standard specification, including Greenbook forecasts for current output gap and expected inflation, finds virtually only proactive regimes, at odds with the established consensus. Liquidity measures, though, provide a different narrative: when the short-run liquidity proxy is included, there is evidence of both active and passive stances. Interestingly, the passive regime seems to prevail over the post-WWII sample, while the active one prevails for some short-lived periods, precisely the first half of the 1970s and 1979-1982. Taking the long-run liquidity proxy, based on BAA bonds, the relevance of expected inflation is severely disputed, so much so that the Fed appears to react negatively to it, remarkably so during the ZLB period. Across specifications, though, financial liquidity is consistently found to be a significant predictor of movements in the policy rate. Interestingly, passive regimes were likely in place during periods of tranquil inflation: this result casts doubt on how necessary an active monetary policy is to tame inflation. The estimates also suggest an association between the probability of different parameter regimes and the direction of policy changes. Periods of increasing interest rates are associated with a high probability of being in a Volcker-type regime (high coefficient on inflation in the policy reaction function). By contrast, periods of monetary easing through declining interest rates are associated with a higher probability of being in a less reactive regime.

In an effort to summarise established empirical strategies, the chapter also proposes full- and split-sample analyses, which broadly corroborate the main result, on top of the relevance of financial liquidity for monetary policy-making. The full-sample analysis reveals that including either proxy of liquidity severely downsizes the coefficient on expected inflation: both specifications estimate a reaction to inflation slightly below one, much lower than the estimates for the classic Taylor rule. In the split-sample exercise, the cuts follow the established empirical strategy, but add a third subsample covering the post-2008 period. The latter part is naturally less informative because of data availability (Greenbook data are released with a five-year lag) and features little to no variation in the policy rate. In line with Orphanides (2004), the standard specification finds an active stance during the pre-Volcker subsample, and a stronger active stance during the Great Moderation. The specifications that include financial proxies report weak responses to expected inflation in the pre-Volcker period, and, similarly, stronger responses from the 1980s onwards, although point estimates are significantly smaller than in the standard specification.

Inflation dynamics

Akin to the Taylor rule, the Phillips curve is the key mechanism behind inflation dynamics: it relates current inflation to some measure of economic activity and, more elusively, to expected inflation. Notably, the expectations-augmented version of this relation is the channel for possible self-fulfilling expectations when the monetary policy stance is passive. More recently, the Phillips curve has mobilised a great deal of research effort: has it disappeared? Has it flattened and how much? These questions spring from the unexpected behaviour of inflation in the last two decades: current price changes appear less connected to economic activity. The clearest example of this weakened connection is the missing deflation and reflation after the 2008 recession: before the 2020 pandemic, indeed, the US posted its longest expansion and pushed the unemployment rate below 4%, all with inflation in check. For the European economy, this fact is even more severe and puzzling (Ciccarelli and Osbat, 2017).

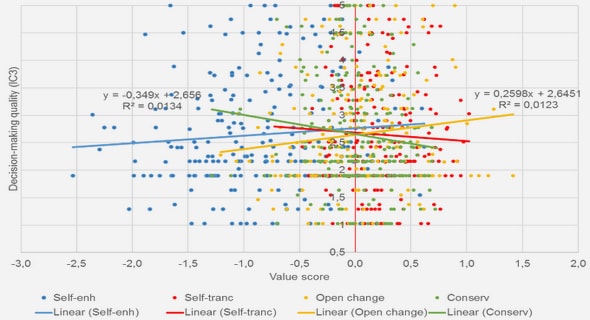

Moreover, the expectations channel does not provide guidance, but rather poses additional questions. As Figure 1.5a shows for the US, expectations from professional forecasters, regular consumers, and actual inflation follow significantly different paths. The same, for professional forecasters only, holds for inflation in the Euro Area, as shown in Figure 1.5b.

Within the Phillips curve framework, therefore, observed inflation is influenced by current economic activity and expected future inflation. A simple forward iteration of this basic relation, thus, expresses current inflation as the present discounted sum of current and expected future deviations from the natural level of economic activity, that is, fluctuations around the so-called potential output, often expressed as the economy’s frictionless and non-stochastic equilibrium. The straightforward implication is that, as long as shocks are zero mean and serially uncorrelated, inflation dynamics reflect the underlying dynamics of the business cycle and its determinants.
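The forward iteration mentioned above can be spelled out under the textbook formulation of the New Keynesian Phillips curve. The notation is an assumption and may differ from that used later in the dissertation: $\pi_t$ is inflation, $x_t$ is the deviation of economic activity from its natural level, $u_t$ is a cost-push shock, $\beta \in (0,1)$ is the discount factor, and $\kappa > 0$ is the slope. The derivation also assumes that $\beta^{T}\,\mathbb{E}_t \pi_{t+T}$ vanishes as $T$ grows.

```latex
\begin{align}
  \pi_t &= \beta\, \mathbb{E}_t \pi_{t+1} + \kappa\, x_t + u_t \\
        &= \beta\, \mathbb{E}_t \left[ \beta\, \mathbb{E}_{t+1} \pi_{t+2}
           + \kappa\, x_{t+1} + u_{t+1} \right] + \kappa\, x_t + u_t \\
        &= \kappa \sum_{k=0}^{\infty} \beta^{k}\, \mathbb{E}_t x_{t+k}
           + \sum_{k=0}^{\infty} \beta^{k}\, \mathbb{E}_t u_{t+k} \\
        &= \kappa \sum_{k=0}^{\infty} \beta^{k}\, \mathbb{E}_t x_{t+k} + u_t
           \qquad \text{if } \mathbb{E}_t u_{t+k} = 0 \text{ for all } k \geq 1 .
\end{align}
```

The last line uses the assumption that shocks are zero mean and serially uncorrelated, so that only the current shock survives and the remaining variation in inflation comes from the expected path of economic activity.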

An additional challenge in estimating the New Keynesian Phillips Curve comes, somewhat paradoxically, from policy effectiveness. Indeed, if inflation expectations are exceptionally well-anchored and monetary policy offsets demand shocks, then observed inflation will appear to "dance to its own music", driven solely by transitory shocks.

Table of contents :

1 General Introduction

1.1 Monetary macroeconomic theory

1.2 Empirical Monetary Macroeconomics

1.2.1 Monetary policy rules

1.2.2 Inflation dynamics

2 Introduction Générale

3 Taylor Rules and Liquidity in Financial Markets

3.1 Data

3.2 Empirical Results

3.2.1 Specifications

3.2.2 Full sample analysis

3.2.3 Exogenous breaks

3.3 Diagnostics on Structural Breaks and Markov Switching

3.3.1 Markov Switching estimation

3.4 Conclusion

3.A Data Sources and Transformations

3.B Additional plots

3.C Empirical Appendix

3.C.1 Output gap

3.C.2 Full sample regression: residuals

3.C.3 GMM estimates

3.C.4 CUSUM test plots

4 A simple model with liquidity

4.1 A model with liquidity

4.1.1 Consumer

4.1.2 Firms

4.1.3 Monetary authority and market clearing

4.1.4 Linearised model

4.2 Calibration and IRFs

4.2.1 Liquidity with an accommodative central bank

4.3 Dissecting simulated inflation dynamics

4.4 The 2008 crisis: severe liquidity shortage

4.5 Conclusion

4.A Model appendix

4.A.1 Obtaining the system of equations

4.B Loglinearisation

4.C CIA setup

4.D Sensitivity analyses

4.D.1 Semi-elasticities for bonds and cash

4.E How g affects inflation dynamics

4.E.1 Misspecification

4.F Full lags structure

4.G Extreme policies

5 Inflation Persistence

5.1 Data and tools

5.2 Autoregressive analyses

5.2.1 Optimal lags selection

5.3 A Bayesian estimation of inertia

5.4 RNN-LSTM approach to persistence

5.4.1 LSTM forecasting

5.4.2 LSTM setup

5.4.3 Full sample: forecasting inflation and its persistence

5.4.4 Regressions on LSTM

5.4.5 Rolling LSTMs

5.5 Conclusion

5.A A primer on the RNN-LSTM framework

5.B Year-on-year series

5.C Isolating oil and commodities’ inflation from headline

5.D Robustness with varying window width

5.E Draw distributions

5.F LSTM data and forecasts

5.F.1 LSTM predictions on non-overlapping subsamples

5.G LSTM analyses

5.G.1 OLS regressions on decades

5.H Trend estimates

5.H.1 Frequentist application

5.H.2 LSTM output: full sample

5.H.3 LSTM output: subsample analysis

5.H.4 LSTM output: rolling window

6 Conclusion

6.1 Policy Rules and Liquidity

6.2 Optimal Liquidity Management

6.3 Inflation Persistence

6.3.1 Macroeconometrics with Deep-Learning

6.3.2 Inflation and Structural Change