MPSoC Programming Model

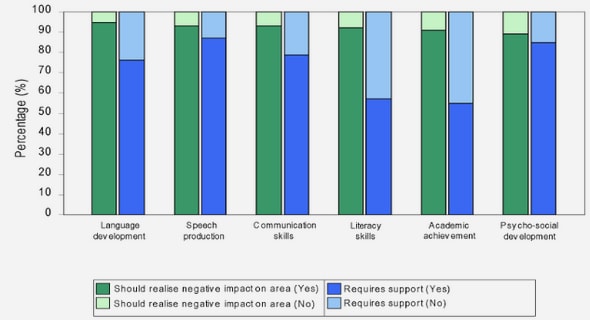

Taking the ordinary sequential code of an application and making it run in a multiprocessor parallel execution environment requires a number of modification steps. Ordinary sequential C code uses the full breadth of the C language, but some constructs are not suitable for a multiprocessor platform. The first job is to detect and correct such constructs, following guidelines that make the sequential code more compliant with the hardware platform. Platform processor information is then supplied to the code in the form of pragmas, so that it can be optimized for the target hardware. Ordinary sequential code does not care about the memory hierarchy, but to use the target memory hierarchy efficiently the code needs to be analyzed for its memory accesses, so that small data sets that are accessed heavily can be identified and made available in local memory. Optimizing code for the target hardware improves overall application performance and energy efficiency. The proposed programming model is presented in Figure 3. Each part is discussed in detail in this chapter.

ADRES

ADRES (Architecture for Dynamically Reconfigurable Embedded Systems) tightly couples a VLIW processor and a coarse-grained reconfigurable matrix, integrating both in a single architecture. This kind of integration provides improved performance, a simplified programming model, reduced communication cost and substantial resource sharing [6].

ADRES Architecture

The ADRES core consists of many basic components, mainly functional units (FUs), register files (RFs) and routing resources. The top row can act as a tightly coupled VLIW processor in the same physical entity. The two parts of ADRES share the same central register file and load/store units. The computation-intensive kernels, typically dataflow loops, are mapped onto the reconfigurable array by the compiler using the modulo scheduling technique [7] to implement software pipelining and to exploit the highest possible parallelism, whereas the remaining code is mapped onto the VLIW processor [6-8].

The two functional views of ADRES, the VLIW processor and the reconfigurable matrix, can share resources because, under the processor/co-processor model, their executions never overlap [6-8].

For the VLIW processor, several FUs are allocated and connected together through one multi-port register file, which is typical for a VLIW architecture. These FUs are more powerful in terms of functionality and speed than those of the reconfigurable matrix. Some of these FUs are connected to the memory hierarchy, depending on the available ports. Data access to the memory is thus done through the load/store operations available on those FUs [6].

The reconfigurable matrix contains a number of reconfigurable cells (RCs), which also basically comprise FUs and RFs. The FUs can be heterogeneous, supporting different operation sets. To remove the control flow inside loops, the FUs support predicated operations. The distributed RFs are small and have fewer ports. Multiplexers are used to direct data from different sources. The configuration RAM stores a few configurations locally, which can be loaded on a cycle-by-cycle basis. The reconfigurable matrix is used to accelerate dataflow-like kernels in a highly parallel way. The matrix also accesses memory through the VLIW processor FUs [6].
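To illustrate how predication removes control flow from a loop body, the following sketch contrasts a branching loop with an if-converted version in plain C. The function names are hypothetical, and an ADRES compiler would apply this kind of transformation on its own intermediate representation rather than at source level; the code only shows the idea.

```c
#include <stddef.h>

/* Branching version: the loop body contains control flow. */
int sum_positive_branch(const int *x, size_t n)
{
    int sum = 0;
    for (size_t i = 0; i < n; i++) {
        if (x[i] > 0) {
            sum += x[i];
        }
    }
    return sum;
}

/* If-converted version: the comparison produces a predicate (0 or 1)
 * that guards the addition, so the loop body is straight-line code. */
int sum_positive_predicated(const int *x, size_t n)
{
    int sum = 0;
    for (size_t i = 0; i < n; i++) {
        int p = x[i] > 0;   /* predicate */
        sum += p * x[i];    /* contributes only when p == 1 */
    }
    return sum;
}
```

Both functions return the same result; the second form has no branch inside the loop, which is the property a predicated FU exploits.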

In fact, ADRES is an architecture template rather than a fixed architecture. An XML-based architecture description language is used to define the communication topology, supported operation set, resource allocation and timing of the target architecture [7].

Memory Hierarchy

Using a memory hierarchy boosts performance and reduces energy consumption by storing frequently used data in small memories that have a low access latency and a low energy cost per access [9]. The easiest approach is to use hardware-controlled caches, but caches consume a lot of energy and are therefore not well suited to portable multimedia devices. Moreover, cache misses may lead to performance losses [9].

In multimedia applications, access patterns are often predictable, as they typically consist of nested loops in which only a part of the data is heavily used in the inner loops [9]. Placing this heavily used data in memories closer to the processor enables faster and more energy-efficient processing. In this case, direct memory access (DMA) transfers data between the background memories and these closer memories in parallel with the computation.

Scratch Pad Memories

Software-controlled scratch pad memories (SPMs) can deliver better performance and more efficient energy consumption, and design-time analysis can improve how well the memory hierarchy is used. Scratch pad memories are difficult to exploit, however, because analysing the application, selecting the best copies and scheduling the transfers is not an easily manageable job. To handle this issue, IMEC has developed a tool called Memory Hierarchy (MH) [9].

THE MH TOOL

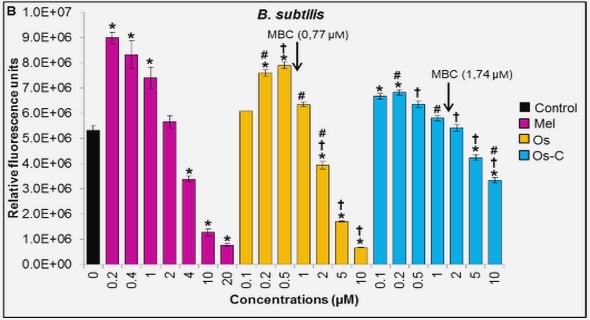

The MH tool uses compile-time application knowledge and profiling data to first identify potential and beneficial data copies, and secondly to derive how the data and the copies have to be mapped onto the memory architecture for optimal energy consumption and/or performance[9].

The accompanying figure shows the inputs and outputs of the MH tool. The platform description describes which memory partitions exist, their properties and how they are connected. Cycle count information is collected for all computational kernels by running automatically instrumented code on an instruction-set simulator. Memory access and loop iteration counts are collected separately by source-code instrumentation and subsequent execution. The data-to-memory assignment describes how data structures are allocated to memory. The MH tool is responsible for data-reuse analysis and its optimization [9].

Data-reuse analysis

In the first step, the code is analyzed for reuse opportunities. All global and stack arrays are considered, as well as a limited class of dynamically allocated arrays. For data-reuse analysis, loop boundaries are important places to explore. If a small part of a large array is accessed heavily inside a nested loop, it is better to move that part to local memory to save energy [9].
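As a rough illustration of such a reuse opportunity, the sketch below reads a small window of a larger frame many times, once directly and once through a local copy. All names and sizes are hypothetical, and the `local` array merely stands in for a scratch pad buffer; the MH tool derives such copies automatically rather than by hand.

```c
#include <string.h>

enum { FRAME_W = 64, FRAME_H = 64, WIN = 8 };

/* Every access goes to the large frame in background memory. */
long window_sum_direct(unsigned char frame[FRAME_H][FRAME_W],
                       int y0, int x0, int reps)
{
    long acc = 0;
    for (int r = 0; r < reps; r++)
        for (int y = 0; y < WIN; y++)
            for (int x = 0; x < WIN; x++)
                acc += frame[y0 + y][x0 + x];
    return acc;
}

/* The heavily reused window is copied once, at the loop boundary,
 * into a small local buffer; the inner loops then read only the copy. */
long window_sum_copy(unsigned char frame[FRAME_H][FRAME_W],
                     int y0, int x0, int reps)
{
    unsigned char local[WIN][WIN];  /* stands in for a scratch pad buffer */
    long acc = 0;

    for (int y = 0; y < WIN; y++)
        memcpy(local[y], &frame[y0 + y][x0], WIN);

    for (int r = 0; r < reps; r++)
        for (int y = 0; y < WIN; y++)
            for (int x = 0; x < WIN; x++)
                acc += local[y][x];
    return acc;
}
```

With `reps` repetitions, the copy version touches the large array `WIN * WIN` times instead of `reps * WIN * WIN` times, which is where the energy saving would come from.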

Optimization

MH takes two cost factors into account: the cycle cost and the energy due to memory accesses. There may be several optimal solutions, each favouring a different objective; together these solutions form a Pareto curve. The optimization phase addresses two issues: first, how to traverse the solution space of possible assignments, and second, how to minimize the overhead of transferring data [9].
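The notion of a Pareto curve over the two cost factors can be sketched as a simple dominance filter. The `Cost` type, its units and the function names are assumptions made for illustration; the source does not describe how MH represents or computes its Pareto points.

```c
#include <stddef.h>

/* Hypothetical cost of one assignment: cycles and memory energy. */
typedef struct {
    unsigned long cycles;
    double energy;
} Cost;

/* a dominates b if it is no worse in both costs and better in one. */
static int dominates(Cost a, Cost b)
{
    return a.cycles <= b.cycles && a.energy <= b.energy &&
           (a.cycles < b.cycles || a.energy < b.energy);
}

/* Copies the non-dominated points of in[0..n) to out, preserving
 * their order, and returns how many were kept.
 * out must have room for n entries. */
size_t pareto_filter(const Cost *in, size_t n, Cost *out)
{
    size_t kept = 0;
    for (size_t i = 0; i < n; i++) {
        int dominated = 0;
        for (size_t j = 0; j < n && !dominated; j++) {
            if (j != i && dominates(in[j], in[i]))
                dominated = 1;
        }
        if (!dominated)
            out[kept++] = in[i];
    }
    return kept;
}
```

The points that survive the filter are the trade-offs worth offering: none of them can be improved in cycles without paying in energy, or the other way round.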

The tool searches heuristically. It starts by calculating, for each copy candidate, the potential gain in terms of energy, processing cycles or a combination of these if that candidate were assigned to the scratch pad memory (SPM). The copy candidate with the highest gain is then selected for assignment to the SPM. The next most promising copy candidate is selected after recalculating the potential gains, as the previous selection may influence the potential gains of the remaining copy candidates [9].
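The greedy loop described above can be sketched as follows. The gain model here is a deliberately simple assumption (a candidate gains nothing if it no longer fits or if another copy of the same array was already selected); the real MH gain estimation is not given in the source, and all type and function names are hypothetical.

```c
#include <stddef.h>

typedef struct {
    int    array_id;   /* array this copy is taken from */
    size_t size;       /* bytes needed in the SPM */
    double base_gain;  /* estimated gain if selected on its own */
    int    selected;   /* set to 1 once assigned to the SPM */
} CopyCandidate;

/* Toy gain model (assumption): zero if the candidate is already
 * selected, does not fit, or competes with a selected copy of the
 * same array; otherwise its base gain. */
static double potential_gain(const CopyCandidate *c, size_t n,
                             size_t idx, size_t free_bytes)
{
    if (c[idx].selected || c[idx].size > free_bytes)
        return 0.0;
    for (size_t j = 0; j < n; j++)
        if (c[j].selected && c[j].array_id == c[idx].array_id)
            return 0.0;
    return c[idx].base_gain;
}

/* Repeatedly recomputes all gains and assigns the best candidate
 * until no candidate yields a positive gain. Returns the total gain. */
double assign_greedy(CopyCandidate *c, size_t n, size_t spm_bytes)
{
    size_t free_bytes = spm_bytes;
    double total = 0.0;

    for (;;) {
        size_t best = n;
        double best_gain = 0.0;
        for (size_t i = 0; i < n; i++) {
            double g = potential_gain(c, n, i, free_bytes);
            if (g > best_gain) {
                best_gain = g;
                best = i;
            }
        }
        if (best == n)
            break;              /* nothing left that helps */
        c[best].selected = 1;
        free_bytes -= c[best].size;
        total += best_gain;
    }
    return total;
}
```

The recalculation inside the loop is the essential part: after each selection the remaining capacity and the set of competing copies change, so gains computed earlier may no longer hold.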

For the MPEG-4 encoder application, the MH tool evaluates about 900 assignments per minute, of which 100 are valid [9].

For transfer scheduling, the MH tool tries to issue block transfers as early as possible and to synchronize them as late as possible. This may extend the life span of the copy buffers, which gives rise to a trade-off between buffer size and cycle cost. To minimize processor stalls, a DMA unit transfers blocks in parallel with the processing done by the processor. Block transfer issues and synchronizations can also be pipelined across loop boundaries to exploit even more parallelism. This kind of pipelining comes at the cost of an even longer copy buffer life span.
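A common way to realise "issue early, synchronize late" is double buffering, sketched below. The primitives `bt_issue` and `bt_sync` are hypothetical stand-ins, not the actual MH or run-time library API, and the DMA is simulated with an immediate `memcpy` so that the example runs on any host.

```c
#include <stddef.h>
#include <string.h>

enum { BLOCK = 16 };

/* Hypothetical block-transfer primitives. On real hardware bt_issue
 * would start a DMA transfer and return at once, and bt_sync would
 * wait for it to finish. Here the copy simply happens at issue time. */
static void bt_issue(int *dst, const int *src, size_t n)
{
    memcpy(dst, src, n * sizeof *dst);
}

static void bt_sync(int buffer_index)
{
    (void)buffer_index;  /* nothing to wait for in this simulation */
}

/* Sums nblocks blocks of BLOCK ints using two copy buffers. */
long sum_blocks(const int *background, size_t nblocks)
{
    int buf[2][BLOCK];
    long acc = 0;

    if (nblocks == 0)
        return 0;

    bt_issue(buf[0], background, BLOCK);  /* issue as early as possible */

    for (size_t b = 0; b < nblocks; b++) {
        int cur = (int)(b & 1);

        bt_sync(cur);                     /* sync just before first use */

        /* Start fetching the next block before processing this one,
         * so the transfer overlaps with the computation below. */
        if (b + 1 < nblocks)
            bt_issue(buf[1 - cur], background + (b + 1) * BLOCK, BLOCK);

        for (size_t i = 0; i < BLOCK; i++)
            acc += buf[cur][i];
    }
    return acc;
}
```

The second buffer is the price of the overlap: each copy buffer stays alive for longer, which is the buffer size versus cycle cost trade-off mentioned in the text.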

The Run-time Manager

Consider a scenario in which multiple advanced multimedia applications run in parallel on a single embedded computing platform and the user requirements of each application are unknown at design time. A run-time manager is then required to match all application needs with the available platform resources and services. In such a scenario, no single application should take full control of the resources, so a platform arbiter is needed, and the run-time manager takes on this role. This way, multiple applications can coexist with minimal inter-application interference. The run-time manager not only provides hardware abstraction but also leaves sufficient room for application-specific resource management [10].

The run-time manager is located between the application and platform hardware services. Run-time manager components are explained below.

Quality Manager

The quality manager is a platform-independent component that interacts with the application, the user and the platform-specific resource manager. The goal of the quality manager is to find the sweet spot between:

The capabilities of the application, i.e. which quality levels the application supports.

The requirements of the user, i.e. which quality level provides the most value at a certain moment.

The available platform resources.

The quality manager contains two specific sub-functions: a Quality of Experience (QoE) manager and an operating point selection manager[10].

The QoE manager deals with quality profile management, i.e. what the different supported quality levels are for every application and how they are ranked [11, 12].

The operating point selection manager deals with selecting the right quality level or operating point for all active applications, given the platform resources and non-functional constraints such as the available energy [10]. Its overall goal is to maximize the total system application value [13].
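The selection problem can be pictured as choosing one operating point per application so that the total value is maximal and the total resource cost stays within a budget. The sketch below solves a tiny instance by exhaustive enumeration; the types, the single scalar cost and the search strategy are all assumptions for illustration, since the source does not specify the algorithm used by the operating point selection manager.

```c
#include <stddef.h>

enum { MAX_APPS = 4, MAX_POINTS = 4 };

/* Hypothetical operating point: user value and resource cost. */
typedef struct {
    int value;
    int cost;
} OperatingPoint;

typedef struct {
    size_t npoints;                     /* 1..MAX_POINTS */
    OperatingPoint points[MAX_POINTS];
} App;

/* Tries every combination of one point per application and keeps the
 * feasible one with the highest total value. choice[i] receives the
 * selected point index of application i. Returns the best total
 * value, or -1 if the input is invalid or nothing fits the budget. */
int select_operating_points(const App *apps, size_t napps,
                            int budget, size_t *choice)
{
    size_t idx[MAX_APPS] = {0};
    int best = -1;

    if (napps == 0 || napps > MAX_APPS)
        return -1;
    for (size_t a = 0; a < napps; a++)
        if (apps[a].npoints == 0 || apps[a].npoints > MAX_POINTS)
            return -1;

    for (;;) {
        int value = 0, cost = 0;
        for (size_t a = 0; a < napps; a++) {
            value += apps[a].points[idx[a]].value;
            cost  += apps[a].points[idx[a]].cost;
        }
        if (cost <= budget && value > best) {
            best = value;
            for (size_t a = 0; a < napps; a++)
                choice[a] = idx[a];
        }

        /* Advance idx like an odometer; stop after the last combination. */
        size_t a = 0;
        while (a < napps && ++idx[a] == apps[a].npoints) {
            idx[a] = 0;
            a++;
        }
        if (a == napps)
            break;
    }
    return best;
}
```

Exhaustive search is only workable for a handful of applications and points; it is used here purely to make the objective and the constraint explicit.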

Resource Manager

Once an operating point has been selected, the resource requirements of the application are known. The run-time resource manager provides flexibility in mapping the task graph onto the MPSoC platform: application tasks need to be assigned to processing elements, data to memory, and communication bandwidth needs to be allocated [10].

For executing the allocation decisions, the resource manager relies on its mechanisms. A mechanism describes a set of actions, their order and respective preconditions or trigger events. To detect trigger events, a mechanism relies on one or more monitors, while one or more actuators perform the actions[10].
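The relationship between a mechanism, its monitor and its actuator can be sketched with a pair of callbacks. Everything below (the `TileState` fields, the overload threshold and the effect of a migration) is invented for illustration and does not reflect the actual resource manager implementation.

```c
/* Hypothetical state of one processing tile. */
typedef struct {
    int load_percent;
    int migrations;
} TileState;

typedef int  (*Monitor)(const TileState *s);  /* non-zero on trigger event */
typedef void (*Actuator)(TileState *s);       /* performs the action */

/* A mechanism pairs a trigger condition with an action. */
typedef struct {
    Monitor  monitor;
    Actuator actuator;
} Mechanism;

/* Example monitor: fires when the tile is overloaded. */
int overload_monitor(const TileState *s)
{
    return s->load_percent > 90;
}

/* Example actuator: pretends to migrate a task away from the tile. */
void migrate_actuator(TileState *s)
{
    s->load_percent -= 40;
    s->migrations++;
}

/* Evaluates the mechanism once: the action runs only if the monitor
 * reports the trigger event. Returns 1 if the action was performed. */
int mechanism_step(const Mechanism *m, TileState *s)
{
    if (m->monitor(s)) {
        m->actuator(s);
        return 1;
    }
    return 0;
}
```

A mechanism with several monitors or an ordered list of actions would extend the same idea with arrays of callbacks.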

Run-Time Library

The run-time library (RTLib) implements the primitive APIs used for abstracting the services provided by the hardware. These RTLib primitives are used to create an application at design time and are called by the application tasks at run time. The run-time library also acts as an interface to the system manager on different levels [10]. RTLib primitives can be categorized as follows:

Quality management primitives link the application or the application-specific resource manager to the system-wide quality manager. This allows the application to renegotiate the selected quality.

Data management primitives are closely linked to the programming model. The RTLib can also provide memory hierarchy primitives for managing a scratch pad memory or software-controlled caches.

Task management primitives allow creating and destroying tasks and managing their interactions. The RTLib can also provide primitives to select a specific scheduling policy, to invoke the scheduler or to enable task migration and operating point switching.

The run-time cost of the RTLib depends on its implementation: either in software executing on the local processor that also executes the application tasks, or in a separate hardware engine next to the actual processor.

Clean C

The C language offers the designer a great deal of expressiveness, but unfortunately this expressiveness makes it hard to analyze a C program and transform it for an MPSoC platform. Clean C helps designers avoid constructs that are not well analyzable. The sequential Clean C code is derived from sequential ANSI C, and this derivation gives better mapping results.

Clean C defines a set of rules that can be divided into two categories: code restrictions and code guidelines. Code restrictions are constructs that are not allowed in the input C code, while code guidelines describe how code should be written to achieve maximum accuracy from the mapping tools.

If existing C code does not follow the Clean C rules, a complete rewrite is not necessary to clean it up. A code cleaning tool suite helps to identify the parts that need clean-up and tries to clean up the most frequently occurring restriction violations [14].

When developing new code, the Clean C rules can be followed from the start. However, since rules related to code structure are difficult to maintain while the code evolves, the code cleaning tool suite helps keep the application compliant with the Clean C rules during code evolution [14].

Some of the proposed guidelines and restrictions are listed below; details can be found in [15].

o Overall code architecture

o Restriction: Distinguish source files from header files

o Guideline: Protect header files against recursive inclusion

o Restriction: Use preprocessor macros only for constants and conditional exclusions

o Guideline: Don’t use the same name for two different things

o Guideline: Keep variables local

o Data Structures

o Guideline: Use multidimensional indexing of arrays

o Guideline: Avoid struct and union

o Guideline: Make sure a pointer points to only one data set

o Functions

o Guideline: Specialize functions to their context

o Guideline: Inline functions to enable global optimization

o Guideline: Use a loop for repetition

o Restriction: Do not use recursive function calls

o Guideline: Use switch instead of function pointers
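A few of these rules are easy to picture in code. The fragment below is one possible reading of three of them (multidimensional indexing, loops instead of recursion, and switch instead of function pointers); the function names are hypothetical, and the authoritative formulation of each rule is the one in [15].

```c
enum { ROWS = 4, COLS = 6 };

/* Guideline: use multidimensional indexing (img[r][c]) rather than
 * manual address arithmetic such as img[r * COLS + c], so that the
 * accessed rows and columns remain visible to analysis tools. */
int row_sum(int img[ROWS][COLS], int r)
{
    int sum = 0;
    for (int c = 0; c < COLS; c++)
        sum += img[r][c];
    return sum;
}

/* Restriction: no recursive function calls.
 * Guideline: use a loop for repetition. */
unsigned long factorial(unsigned int n)
{
    unsigned long result = 1;
    for (unsigned int i = 2; i <= n; i++)
        result *= i;
    return result;
}

/* Guideline: use switch instead of a function pointer, so that every
 * possible call target is explicit in the source. */
typedef enum { OP_ADD, OP_SUB, OP_MUL } Op;

int apply(Op op, int a, int b)
{
    switch (op) {
    case OP_ADD: return a + b;
    case OP_SUB: return a - b;
    case OP_MUL: return a * b;
    }
    return 0;  /* not reached for valid Op values */
}
```

In each case the clean form computes the same result as the form it replaces; what changes is how much a static analysis tool can determine about memory accesses and control flow.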

Table of contents :

Chapter 1 Introduction

1.1 Background

1.1.1 Design Time Application Mapping

1.1.2 Platform Architecture Exploration

1.1.3 Run-time Platform Management

Chapter 2 MPSoC Programming Model

2.1 ADRES

2.1.1 ADRES Architecture

2.2 Memory Hierarchy

2.2.1 Scratch Pad Memories

2.2.2 THE MH TOOL

2.2.2.1 Data-reuse analysis

2.2.2.2 Optimization

2.3 The Run-time Manager

2.3.1 Quality Manager

2.3.2 Resource Manager

2.3.3 Run-Time Library

2.4 Clean C

2.5 ATOMIUM / ANALYSIS (ANL)

2.5.1 Atomium/Analysis Process Flow

2.6 MPA TOOL

2.7 High Level Simulator (HLSIM)

Chapter 3 Previous Work

3.1 Experimental workflow and code preparation

3.2 MPEG-4 Encoder Experiment

3.3 Platform Template

Chapter 4 Methodology & Implementation

4.1 ANL Profiling for Sequential MPEG-4 Encoder

4.1.1 Preparing the program for ATOMIUM

4.1.2 Instrumenting the program using ATOMIUM

4.1.3 Compiling and Linking the Instrumented program

4.1.4 Generating an Access count report

4.1.5 Interpreting results

4.2 Discussion on Previous Parallelizations

4.3 Choosing optimal parallelizations for run-time switching

4.4 Run-time parallelization switcher

4.4.1 Configuring new component

4.4.2 Switching decision spot

4.4.3 Manual adjustment to code and tools

4.4.3.1 Adding new threads and naming convention

4.4.3.2 Parsection switching & parallelization spawning

4.4.3.3 Replicating and Adjusting functionalities called by threads

4.4.3.4 Adjusting ARP instrumentation

4.4.3.5 Updating platform information

4.4.3.6 Block Transfers and FIFO communication

4.4.3.7 Execution and Profiling

Chapter 5 Results

5.1 Interpreting ANL profiling results

5.2 Profiling Parallelization switcher component

5.3 The Final Pareto Curve

Chapter 6 Conclusions and Future Work

6.1 Conclusions

6.2 Future Work

REFERENCES