
The challenges in the design of complex systems

In engineering, the design of complex systems, such as spacecraft and aircraft, involves a long process of analysis and optimization through which the designer specifies the design variables best suited to the purpose of the system. One of the critical challenges arises in the late phase of this process, where high-fidelity models are deployed to integrate all the disciplines of interest simultaneously [Balesdent et al., 2012b]. For instance, in the design of aerospace systems, the multi-disciplinary models include disciplines such as structure, propulsion, aerodynamics, trajectory, and costs (Fig. 1.2). Integrating all these disciplines simultaneously makes it possible to exploit the interactions between them. However, this comes with a significant computational burden: one evaluation of such a model amounts to an internal loop between the different disciplines. Furthermore, some disciplines are inherently computationally expensive. For instance, the structure and aerodynamics disciplines can constitute a computational bottleneck due to methods such as finite element analysis [Segerlind, 1976] and computational fluid dynamics [Anderson and Wendt, 1995]. Moreover, these disciplines typically rely on legacy codes that may not provide analytical forms of the functions involved. Therefore, the design of complex systems relies on the analysis and optimization of computationally intensive black-box functions.

The optimization is performed with respect to constraints that express the physics to which the design variables are subjected or specifications imposed by the designer. Another characteristic of these optimization problems is that multiple objectives are usually optimized. Considering only one objective may result in limited performance in other disciplines; hence, the objectives have to be taken into account simultaneously within the optimization problem. Solving these optimization problems is difficult in the context of complex systems. Due to the black-box aspect, exact optimization approaches that rely on the analytical form of the functions and their gradients are hard to apply. Moreover, the high computational cost makes meta-heuristics, which require a large number of evaluations, unsuitable.

[Figure: low-fidelity models (numerical relaxation, coarse mesh, linear approximations, abstractions) versus high-fidelity models (multi-disciplinary, multiple objectives, non-linear analysis, high-dimensional)]
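Taking several objectives into account simultaneously is usually formalized through Pareto dominance: a design is kept only if no other design is at least as good in every objective and strictly better in one. The following is a minimal sketch in Python/NumPy, assuming minimization of all objectives; the function names and the objective values of the five designs are hypothetical and only serve to illustrate the idea.

```python
import numpy as np

def dominates(a: np.ndarray, b: np.ndarray) -> bool:
    """True if objective vector a Pareto-dominates b (minimization):
    a is no worse than b in every objective and strictly better in at least one."""
    return bool(np.all(a <= b) and np.any(a < b))

def pareto_front(F: np.ndarray) -> np.ndarray:
    """Indices of the non-dominated rows of F (n_designs x n_objectives)."""
    n = F.shape[0]
    keep = []
    for i in range(n):
        # Design i survives if no other design dominates it.
        if not any(dominates(F[j], F[i]) for j in range(n) if j != i):
            keep.append(i)
    return np.array(keep, dtype=int)

# Hypothetical evaluations of 5 designs on two competing objectives
# (e.g. mass and cost), both to be minimized.
F = np.array([[1.0, 5.0],
              [2.0, 3.0],
              [3.0, 4.0],   # dominated by design 1
              [4.0, 1.0],
              [5.0, 2.0]])  # dominated by design 3
print(pareto_front(F))  # -> [0 1 3]
```

No single design in the returned set is best for both objectives at once, which is why the objectives cannot simply be optimized one at a time.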

In addition to the computationally intensive and black-box aspects, the design of such systems must account for complex physical phenomena that induce abrupt changes in physical properties, referred to here as non-stationary behavior. This is typically the case in constrained optimization problems, where the objective function and the constraints may behave inconsistently and exhibit discontinuities between the feasible and infeasible regions of the design space [Gramacy and Lee, 2008].

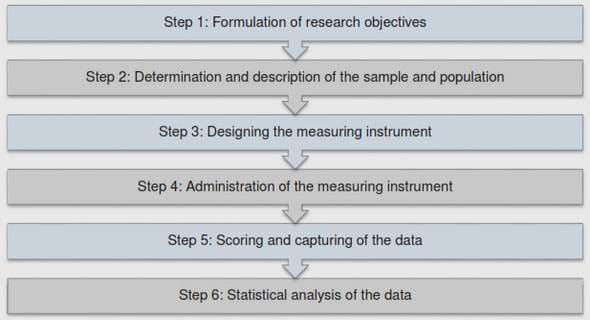

The design of complex systems goes through different phases. The computationally expensive black-box physical models are usually used in the late phases of design, namely the detailed design and manufacturing phases (Fig. 1.1). These models are accurate at the expense of computational cost. The early phases of design, however, generally rely on models that are not sufficiently representative of the final system. Such models, called low-fidelity models, have the advantage of being computationally efficient. One of the challenges for the design engineer is to combine the different levels of fidelity obtained throughout the design phases to achieve a trade-off between computational cost and accuracy. Considering these different levels of fidelity within a single framework is called multi-fidelity modeling [Fernández-Godino et al., 2016].
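To illustrate the trade-off, the sketch below follows the spirit of the autoregressive scheme of Kennedy and O'Hagan (2000), in which the high-fidelity response is modeled as a scaled low-fidelity response plus a discrepancy term. It is a deliberately simplified assumption-laden version: the discrepancy is a linear polynomial fitted by least squares instead of a Gaussian process, and the analytical test functions, function names and the four "affordable" high-fidelity samples are all hypothetical choices made for illustration.

```python
import numpy as np

def f_high(x):
    # Hypothetical "expensive" high-fidelity model (a cheap analytical stand-in).
    return (6 * x - 2) ** 2 * np.sin(12 * x - 4)

def f_low(x):
    # Hypothetical cheap, biased low-fidelity model of the same quantity.
    return 0.5 * f_high(x) + 10 * (x - 0.5) - 5

def fit_linear_correction(x_hf, y_hf):
    """Fit y_hf ~ rho * f_low(x) + (a + b * x) by least squares."""
    A = np.column_stack([f_low(x_hf), np.ones_like(x_hf), x_hf])
    coef, *_ = np.linalg.lstsq(A, y_hf, rcond=None)
    return coef  # (rho, a, b)

def predict_mf(x, coef):
    # Multi-fidelity prediction: scaled low-fidelity model + linear discrepancy.
    rho, a, b = coef
    return rho * f_low(x) + a + b * x

# Only four high-fidelity evaluations are assumed to be affordable;
# the low-fidelity model is evaluated wherever it is needed.
x_hf = np.array([0.0, 0.4, 0.6, 1.0])
coef = fit_linear_correction(x_hf, f_high(x_hf))

x_test = np.linspace(0.0, 1.0, 101)
err = np.max(np.abs(predict_mf(x_test, coef) - f_high(x_test)))
print(coef, err)
```

Because this particular pair of functions is related exactly by a scale factor and a linear bias, four high-fidelity samples suffice here; in realistic cases the discrepancy is non-linear, which is what motivates Gaussian process and deep Gaussian process formulations.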

Other challenges arise in the design of complex systems. For instance, imperfect knowledge of the different physical behaviors requires the designer to account for various sources of uncertainty. Uncertainty quantification is a key topic in reliability and risk analysis as well as sensitivity analysis [Sudret, 2007; Ghanem et al., 2017; Brevault et al., 2020b]. Another challenge comes from the nature of the design variables: the design of complex systems may include discrete technological and architectural choices, which increases the difficulty of the optimization. Moreover, the number of design variables involved in modeling a complex system is typically large, inducing a high-dimensional design space.

Recently, these various challenges have been partially addressed by machine learning methods. In fact, the recent advances in machine learning have brought design engineering into an era of data-driven approaches.

Machine learning for the analysis and optimization of complex systems

Machine learning encompasses methods that discover statistical relationships in observed data and use these patterns to predict unobserved data [Bishop, 2006; Alpaydin, 2020]. Over the last decade, machine learning has gained wide popularity across diverse fields, including engineering [Fuge et al., 2014; Mosavi et al., 2017; Bock et al., 2019; Brunton and Kutz, 2019]. This popularity is mainly due to deep learning [Goodfellow et al., 2016] and the possibilities it now offers thanks to advances in high-performance computing and the use of large data sets. Problems that seemed out of reach for traditional machine learning models are now routine for deep learning models [Lee et al., 2018]. In engineering, machine learning models were widely used even before the deep learning revolution. Because the physical models involved in the design of complex systems are computationally expensive black boxes, machine learning models are used to avoid an excessive number of expensive evaluations [Simpson et al., 2001; Wang and Shan, 2007; Forrester et al., 2008]. Regression and classification models, also called surrogate models or meta-models in this context, perform supervised learning to predict the behavior of the physical model at new values of the design variables. To do so, they infer from a set of observations the statistical relationship between the design variables, considered as the inputs of the model, and their evaluations through the engineering model, considered as the outputs. The set of evaluations used to train the model is obtained via design of experiments approaches [Anderson and Whitcomb, 2000]. Diverse machine learning models have been used as surrogate models in the literature: simple regression models such as linear regression and its polynomial expansion [Ostertagová, 2012], kernel methods such as support vector machines [Filippone et al., 2008; Cholette et al., 2017], decision trees [Agrawal et al., 2014], ensemble approaches including random forests and gradient boosting [Moore et al., 2018], artificial neural networks [Rafiq et al., 2001; Simpson et al., 2001], Bayesian models such as Gaussian processes [Kleijnen, 2017], and, more recently, the deep learning generalizations of these models, namely deep neural networks [Dimiduk et al., 2018; Hegde, 2019] and deep Gaussian processes [Hebbal et al., 2020; Radaideh and Kozlowski, 2020]. Thanks to their increased representational power, deep learning models can emulate highly varying and non-stationary functions.
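The surrogate-modeling workflow described above (design of experiments, a limited number of expensive evaluations, a supervised fit, then cheap predictions) can be sketched in a few lines. This is a minimal illustration under stated assumptions: a hand-written Latin hypercube sampler is used as the design of experiments, `expensive_model` is a hypothetical analytical stand-in for the black-box simulation, and a quadratic polynomial regression plays the role of the surrogate; any of the models listed above could take its place.

```python
import numpy as np

def latin_hypercube(n, d, rng):
    """n points in [0, 1]^d with exactly one point per stratum in every dimension."""
    u = rng.random((n, d))                       # position inside each stratum
    strata = np.column_stack([rng.permutation(n) for _ in range(d)])
    return (strata + u) / n

def expensive_model(X):
    # Hypothetical stand-in for a costly black-box simulation.
    return np.sin(3 * X[:, 0]) + X[:, 1] ** 2

def quadratic_features(X):
    # Quadratic polynomial basis in two design variables.
    x1, x2 = X[:, 0], X[:, 1]
    return np.column_stack([np.ones(len(X)), x1, x2, x1 * x2, x1 ** 2, x2 ** 2])

rng = np.random.default_rng(0)
X_train = latin_hypercube(30, 2, rng)            # design of experiments
y_train = expensive_model(X_train)               # the only costly step
w, *_ = np.linalg.lstsq(quadratic_features(X_train), y_train, rcond=None)

X_new = rng.random((5, 2))                       # unseen design points
y_pred = quadratic_features(X_new) @ w           # cheap surrogate predictions
print(np.c_[y_pred, expensive_model(X_new)])     # surrogate vs. "true" response
```

Once trained, the surrogate can be queried thousands of times at negligible cost, which is what makes it usable inside an optimization or analysis loop.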

These surrogate models are used to optimize the physical model in unconstrained or constrained settings [Jones et al., 1998; Sasena, 2002] and in single- or multi-objective configurations [Emmerich et al., 2006]. Surrogate models are also useful for analysis tasks. For instance, machine learning models that take information from multiple sources as input, called multi-task models, have been used to handle the multi-fidelity information obtained during the design process [Kennedy and O’Hagan, 2000; Le Gratiet and Garnier, 2014; Perdikaris et al., 2017; Cutajar et al., 2019]. Moreover, machine learning models, and specifically probabilistic models such as Gaussian processes and Bayesian neural networks, are well suited to representing multiple sources of uncertainty (e.g., uncertainty on the design variables and measurement noise) through physically realistic prior and likelihood distributions. This makes Bayesian models attractive for reliability and sensitivity analysis [Dubourg et al., 2011; Sudret, 2012; Nanty et al., 2016]. Some machine learning models have also been extended to handle the categorical design variables that occur in the design of complex systems [Pelamatti et al., 2019].
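As an illustration of why cheap surrogates matter for reliability analysis, the sketch below propagates uncertainty on the design variables through a surrogate by plain Monte Carlo sampling to estimate a failure probability. The assumptions are loud ones: `surrogate_margin` is a hypothetical, already-trained surrogate of a safety margin g(x) (failure when g(x) < 0), chosen linear so the result can be checked analytically, and the surrogate's own predictive uncertainty, which a Bayesian model would provide, is ignored here.

```python
import numpy as np
from math import erfc

def surrogate_margin(X):
    # Hypothetical cheap surrogate of a safety margin g(x); failure when g(x) < 0.
    return 3.0 - X[:, 0] - X[:, 1]

rng = np.random.default_rng(1)
# Uncertain design variables: nominal value (1.0, 1.0) with Gaussian scatter (std 0.5).
X = rng.normal(loc=1.0, scale=0.5, size=(200_000, 2))
p_fail = np.mean(surrogate_margin(X) < 0.0)   # Monte Carlo estimate

# Analytical check: X1 + X2 ~ N(2, 0.5), so P(X1 + X2 > 3) = Phi(-sqrt(2)) = erfc(1) / 2.
p_exact = 0.5 * erfc(1.0)
print(p_fail, p_exact)
```

The 200,000 evaluations would be prohibitive with the high-fidelity model but are immediate with the surrogate.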

Although less widespread than supervised learning, unsupervised machine learning is also used in design engineering. One of its most popular applications is dimensionality reduction, to mitigate the curse of dimensionality. This is achieved by methods such as principal component analysis [Ivosev et al., 2008] and factor analysis [Yu et al., 2008], in which the design space is projected onto a low-dimensional subspace that is sufficient to explain the statistical relationship between the design variables and their evaluations.
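Principal component analysis can be sketched with a singular value decomposition of the centered data. This minimal example, which assumes hypothetical data of 10 design variables that actually vary along only 2 latent directions, projects the inputs onto their two leading principal components. Note that plain PCA only looks at the design variables, not at their evaluations.

```python
import numpy as np

def pca(X, k):
    """Project the rows of X (n x d) onto the k leading principal components."""
    Xc = X - X.mean(axis=0)                       # center each design variable
    U, S, Vt = np.linalg.svd(Xc, full_matrices=False)
    explained = S ** 2 / np.sum(S ** 2)           # fraction of variance per component
    return Xc @ Vt[:k].T, Vt[:k], explained

rng = np.random.default_rng(0)
# Hypothetical data: 10 design variables driven by 2 latent factors plus small noise.
Z = rng.normal(size=(500, 2))
A = rng.normal(size=(2, 10))
X = Z @ A + 0.01 * rng.normal(size=(500, 10))

X_low, components, explained = pca(X, k=2)
print(X_low.shape, explained[:3].round(4))
```

The first two components capture almost all the variance, so a surrogate could be built on the 2 projected coordinates instead of the 10 original ones.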

A third class of machine learning approaches, reinforcement learning, has also been applied to engineering design problems [Lee et al., 2019]. In particular, reinforcement learning has been successfully applied to single- and multi-objective optimization [Van Moffaert and Nowé, 2014]. Through trial and error, it explores the design space and receives feedback from performance evaluations in order to find the optimal long-term policy.

These different applications of machine learning to the analysis and optimization problems occurring in the design of complex systems are summarized in Fig. 1.3.

Bayesian modeling

The task of a model in machine learning is, in essence, to predict a response of interest given available data (called observations or training data). In contrast with a physical model, which relies on physical equations to give a response, a machine learning model (henceforth referred to simply as a model) performs the prediction task based on statistical patterns inferred from the available data. Therefore, there is no guarantee that the response of the model for new data will be accurate with respect to the exact response of interest. Hence, an important piece of information for the user of the model is the degree of confidence in each prediction. However, classical machine learning models do not intrinsically provide this information. Consider for instance a linear regression model (Fig. 2.1). The prediction obtained with this model does not match the exact function, and the model gives no indication of where its prediction is close to the exact function and where it is far from it, so it can be over-confident precisely where it is wrong. A desirable output of the model would be a degree of belief associated with its prediction, which may depend on the spatial distribution of the training data in the input space. A prediction at a new data point close to the training data points would have a high degree of belief (a low level of uncertainty), whereas a prediction at a new data point very different from the training data set would have a low degree of belief (a high level of uncertainty). This type of uncertainty is due to our lack of knowledge (episteme in Greek) about the latent (unobserved) function that we aim to approximate, and is therefore called epistemic uncertainty. The same category includes model uncertainty, i.e., uncertainty on the model parameters and on the model structure that best explains the data. These uncertainties are reduced when better knowledge of the latent function is acquired by gathering more training data. Another type of uncertainty, aleatoric uncertainty, stems from random sources such as measurement errors; it induces noise in the training data.
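The distinction between epistemic and aleatoric uncertainty can be made concrete with Bayesian linear regression (see, e.g., Bishop, 2006). The sketch assumes a cubic polynomial basis, a Gaussian prior N(0, α⁻¹I) on the weights and a known noise precision β; these choices and the training data are illustrative only. The predictive variance is the sum of an aleatoric term 1/β, constant everywhere, and an epistemic term φ(x)ᵀSφ(x), which grows as x moves away from the training data.

```python
import numpy as np

def features(x):
    # Cubic polynomial basis phi(x) = (1, x, x^2, x^3).
    return np.vander(x, 4, increasing=True)

def blr_posterior(x, y, alpha, beta):
    """Posterior N(m, S) over the weights for prior N(0, alpha^-1 I) and noise precision beta."""
    Phi = features(x)
    S = np.linalg.inv(alpha * np.eye(Phi.shape[1]) + beta * Phi.T @ Phi)
    m = beta * S @ Phi.T @ y
    return m, S

def blr_predict(x_new, m, S, beta):
    Phi = features(x_new)
    mean = Phi @ m
    # Aleatoric part (1 / beta) + epistemic part phi(x)^T S phi(x), row by row.
    var = 1.0 / beta + np.sum(Phi @ S * Phi, axis=1)
    return mean, var

rng = np.random.default_rng(0)
x_train = rng.uniform(-1.0, 0.0, size=20)          # training data only on [-1, 0]
y_train = np.sin(3 * x_train) + 0.1 * rng.normal(size=20)

m, S = blr_posterior(x_train, y_train, alpha=1.0, beta=100.0)
mean, var = blr_predict(np.array([-0.5, 1.0]), m, S, beta=100.0)
print(var)  # the variance at x = 1.0, far from the data, is much larger
```

Adding training points near x = 1.0 would shrink the epistemic term there, while the aleatoric term 1/β would remain.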

An illustrative integration of Bayesian concepts into a model

In this section, to introduce some definitions and concepts through an illustrative example, Bayesian concepts are applied to a linear regression model. Consider a regression problem $P_{reg}$ defined by the pair of training inputs/outputs $(X, y)$, the size of the data set (number of observations) $n$, and the dimension of the input data (number of features) $d$.

The maximum likelihood estimate procedure

A linear regression model $M$ with a basis function expansion and Gaussian noise is defined as:

$$y(x) = w^\top \phi(x) + \epsilon \qquad (2.1)$$

where $w$ is the vector of weights, $\phi(x)$ the vector of basis functions and $\epsilon$ a Gaussian noise term.
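Under Gaussian noise, maximizing the likelihood of Eq. (2.1) with respect to $w$ is equivalent to ordinary least squares, and the maximum likelihood estimate of the noise variance is the mean squared residual. The sketch below assumes one-dimensional inputs ($d = 1$) and a quadratic polynomial basis for $\phi$; the function names and the synthetic data are hypothetical.

```python
import numpy as np

def design_matrix(X, degree):
    """Polynomial basis expansion phi(x) = (1, x, ..., x^degree) for 1-D inputs."""
    return np.vander(X.ravel(), degree + 1, increasing=True)

def linear_regression_mle(X, y, degree):
    """Maximum likelihood estimates of w and of the noise variance in y = w^T phi(x) + eps."""
    Phi = design_matrix(X, degree)
    # With Gaussian noise, maximizing the likelihood in w is ordinary least squares.
    w_ml, *_ = np.linalg.lstsq(Phi, y, rcond=None)
    residuals = y - Phi @ w_ml
    sigma2_ml = np.mean(residuals ** 2)   # MLE of the noise variance (divides by n)
    return w_ml, sigma2_ml

rng = np.random.default_rng(0)
n, d = 200, 1
X = rng.uniform(-1.0, 1.0, size=(n, d))
# Synthetic data: true weights (1.0, -2.0, 0.5) and noise standard deviation 0.1.
y = 1.0 - 2.0 * X[:, 0] + 0.5 * X[:, 0] ** 2 + 0.1 * rng.normal(size=n)

w_ml, sigma2_ml = linear_regression_mle(X, y, degree=2)
print(w_ml.round(2), round(sigma2_ml, 4))
```

This point estimate carries no information about its own reliability, which is the limitation that the Bayesian treatment of the same model addresses.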

Table of contents

List of figures

List of tables

1 Introduction

1.1 The challenges in the design of complex systems

1.2 Machine learning for the analysis and optimization of complex systems

1.3 Motivations and outline of the thesis

I Overview of Gaussian Processes and derived models, applications to single and multi-objective optimization, and multi-fidelity modeling

2 From Linear models to Deep Gaussian Processes

2.1 Bayesian modeling

2.1.1 An illustrative integration of Bayesian concepts into a model

2.1.2 Review on approximate inference techniques

2.2 Gaussian Processes (GPs)

2.2.1 Definitions

2.2.2 Sparse Gaussian Processes

2.2.3 Gaussian Processes and other models

2.3 Deep Gaussian Processes (DGPs)

2.3.1 Definitions

2.3.2 Advances in Deep Gaussian Processes inference

2.3.3 Applications of Deep Gaussian Processes

3 GPs applications to the analysis and optimization of complex systems

3.1 Non-stationary GPs

3.1.1 Direct formulation of non-stationary kernels

3.1.2 Local stationary covariance functions

3.1.3 Warped GPs

3.2 Bayesian Optimization (BO)

3.2.1 Bayesian Optimization Framework

3.2.2 Infill criteria

3.2.3 Bayesian Optimization with non-stationary GPs

3.3 Multi-objective Bayesian optimization

3.3.1 Definitions

3.3.2 Multi-Objective Bayesian Optimization with independent models

3.3.3 Multi-objective Bayesian Optimization taking into account correlation between objectives

3.4 Multi-Fidelity with Gaussian Processes

3.4.1 Multi-fidelity with identical input spaces

3.4.2 Multi-fidelity with variable input space parameterization

3.5 Conclusion

II Single and Multi-Objective Bayesian Optimization using Deep Gaussian Processes

4 BO with DGPs for Non-Stationary Problems

4.1 Bayesian Optimization using Deep Gaussian Processes

4.1.1 Training

4.1.2 Architecture of the DGP

4.1.3 Infill criteria

4.1.4 Synthesis of DGP adaptations proposed in the context of BO

4.2 Experimentations

4.2.1 Analytical test problems

4.2.2 Application to industrial test case: design of aerospace vehicles

4.3 Conclusion

5 Multi-Objective Bayesian Optimization taking into account correlation between objectives

5.1 Multi-Objective Deep Gaussian Process Model (MO-DGP)

5.1.1 Model specifications

5.1.2 Inference in MO-DGP

5.1.3 MO-DGP prediction

5.2 Computation of the Expected Hyper-Volume Improvement (EHVI)

5.2.1 Approximation of piece-wise functions with Gaussian distributions

5.2.2 Proposed computational approach for correlated EHVI

5.3 Numerical Experiments

5.3.1 Analytical functions

5.3.2 Multi-objective aerospace design problem

5.3.3 Synthesis of the results

5.4 Conclusions

III Multi-fidelity analysis

6 Multi-fidelity analysis using Deep Gaussian Processes

6.1 Multi-fidelity with identically defined fidelity input spaces

6.1.1 Improvement of Multi-Fidelity Deep Gaussian Process Model (MF-DGP)

6.1.2 Numerical experiments of the improved MF-DGP on analytical and aerospace multi-fidelity problems

6.2 Multi-fidelity with different input domain definitions

6.2.1 Multi-fidelity Deep Gaussian Process Embedded Mapping (MF-DGP-EM)

6.2.2 The input mapping GPs

6.2.3 The Evidence Lower Bound

6.2.4 Numerical experiments on multi-fidelity problems with different input space domain definitions

6.2.5 Synthesis of the numerical experiments

6.2.6 Computational aspects of MF-DGP-EM

6.3 Conclusion

7 Conclusions and perspectives

7.1 Conclusions

7.1.1 Contributions on Bayesian optimization for non-stationary problems

7.1.2 Contributions on multi-objective Bayesian optimization with correlated objectives

7.1.3 Contributions on multi-fidelity analysis

7.2 Perspectives

7.2.1 Improvements and extensions of the framework BO & DGPs

7.2.2 Improvements and extensions of the proposed algorithm for MOBO with correlated objectives

7.2.3 Improvements and extensions for multi-fidelity analysis

7.2.4 Extensions of deep Gaussian processes to other problems in the design of complex systems

References