Table of contents

Introduction

I Optimization and machine learning

1 Optimization

1.1 Problem statement

1.2 The curse of dimensionality

1.3 Convex functions

1.4 Continuous differentiable functions

1.5 Gradient descent

1.6 Black-box optimization and Stochastic optimization

1.7 Evolutionary algorithms

1.8 EDAs

2 From optimization to machine learning

2.1 Supervised and unsupervised learning

2.2 Generalization

2.3 Supervised Example: Linear classification

2.4 Unsupervised Example: Clustering and K-means

2.5 Supervised Example: Polynomial regression

2.6 Model selection

2.7 Changing representations

2.7.1 Preprocessing and feature space

2.7.2 The kernel trick

2.7.3 The manifold perspective

2.7.4 Unsupervised representation learning

3 Learning with probabilities

3.1 Notions in probability theory

3.1.1 Sampling from complex distributions

3.2 Density estimation

3.2.1 KL-divergence and likelihood

3.2.2 Bayes’ rule

3.3 Maximum a-posteriori and maximum likelihood

3.4 Choosing a prior

3.5 Example: Maximum likelihood for the Gaussian

3.6 Example: Probabilistic polynomial regression

3.7 Latent variables and Expectation Maximization

3.8 Example: Gaussian mixtures and EM

3.9 Optimization revisited in the context of maximum likelihood

3.9.1 Gradient dependence on metrics and parametrization

3.9.2 The natural gradient

II Deep learning

4 Artificial neural networks

4.1 The artificial neuron

4.1.1 Biological inspiration

4.1.2 The artificial neuron model

4.1.3 A visual representation for images

4.2 Feed-forward neural networks

4.3 Activation functions

4.4 Training with back-propagation

4.5 Auto-encoders

4.6 Boltzmann Machines

4.7 Restricted Boltzmann machines

4.8 Training RBMs with Contrastive Divergence

5 Deep neural networks

5.1 Shallow vs. deep architectures

5.2 Deep feed-forward networks

5.3 Convolutional networks

5.4 Layer-wise learning of deep representations

5.5 Stacked RBMs and deep belief networks

5.6 Stacked auto-encoders and deep auto-encoders

5.7 Variations on RBMs and stacked RBMs

5.8 Tractable estimation of the log-likelihood

5.9 Variations on auto-encoders

5.10 Richer models for layers

5.11 Concrete breakthroughs

5.12 Principles of deep learning under question?

6 What can we do?

III Contributions

7 Presentation of the first article

7.1 Context

7.2 Contributions

Unsupervised Layer-Wise Model Selection in Deep Neural Networks

1 Introduction

2 Deep Neural Networks

2.1 Restricted Boltzmann Machine (RBM)

2.2 Stacked RBMs

2.3 Stacked Auto-Associators

3 Unsupervised Model Selection

3.1 Position of the problem

3.2 Reconstruction Error

3.3 Optimum selection

4 Experimental Validation

4.1 Goals of experiments

4.2 Experimental setting

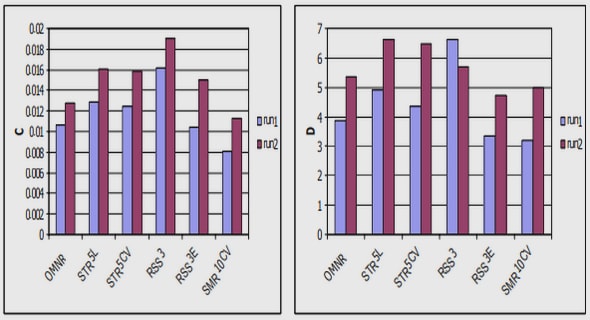

4.3 Feasibility and stability

4.4 Efficiency and consistency

4.5 Generality

4.6 Model selection and training process

5 Conclusion and Perspectives

References

7.3 Discussion

8 Presentation of the second article

8.1 Context

8.2 Contributions

Layer-wise training of deep generative models

Introduction

1 Deep generative models

1.1 Deep models: probability decomposition

1.2 Data log-likelihood

1.3 Learning by gradient ascent for deep architectures

2 Layer-wise deep learning

2.1 A theoretical guarantee

2.2 The Best Latent Marginal Upper Bound

2.3 Relation with Stacked RBMs

2.4 Relation with Auto-Encoders

2.5 From stacked RBMs to auto-encoders: layer-wise consistency

2.6 Relation to fine-tuning

2.7 Data Incorporation: Properties of q_D

3 Applications and Experiments

3.1 Low-Dimensional Deep Datasets

3.2 Deep Generative Auto-Encoder Training

3.3 Layer-Wise Evaluation of Deep Belief Networks

Conclusions

References

8.3 Discussion

9 Presentation of the third article

9.1 Context

9.2 Contributions

Information-Geometric Optimization Algorithms: A Unifying Picture via Invariance Principles

Introduction

1 Algorithm description

1.1 The natural gradient on parameter space

1.2 IGO: Information-geometric optimization

2 First properties of IGO

3 IGO, maximum likelihood, and the cross-entropy method

4 CMA-ES, NES, EDAs and PBIL from the IGO framework

5 Multimodal optimization using restricted Boltzmann machines

5.1 IGO for restricted Boltzmann machines

5.2 Experimental setup

5.3 Experimental results

5.4 Convergence to the continuous-time limit

6 Further discussion and perspectives

Summary and conclusion

Appendix: Proofs

References

9.3 Discussion

Conclusion and perspectives

Bibliography