Get Complete Project Material File(s) Now! »

Lossless source coding with side information

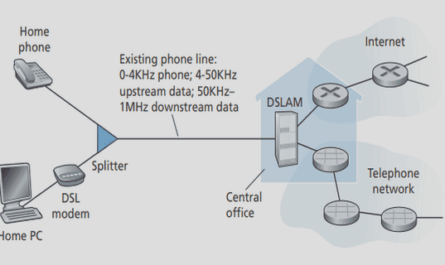

In FTV, the already received views can be used as side information at the decoder. When there is only one possible side information Y , this can be represented as the Slepian-Wolf [42] source coding problem. Figure 2.4 represents Slepian-Wolf source coding. The Slepian-Wolf source coding theorem [42] shows that a rate R is achievable if and only if: R≥H(X|Y) (2.8).

Since H(X | Y ) ≤ H(X), the side information at the decoder can help to decrease the source coding rate compared to source coding without side information. When the side information Y is available at the decoder, the minimum rate R will depend on the statistical relation between X and Y . Example 2.2.2 In BSC for a source X ∈ {0, 1} with P (X = 0) = 0.4, and crossover probability p = 0.1 we have H(X | Y ) = 0.4585 (2.9).

So the minimum lossless source coding rate is R ≥ 0.4585 bits/symbol. Com-pared to the example 2.2.1, 0.4585 < 0.971, it shows that the source coding with side information can help to reduce the minimum source rate. In FTV, several different side informations can be available at the decoder.

Lossless source coding for FTV

For FTV, as described in Figure 2.1, several views Y (j), j ∈ {1, . . . , J} can be potentially available at the decoder. Only one of these potentially available side information will be used for decoding, and the available side information depends on the previous views requested by the user. For instance, Y (1) can be the prediction of X based on the previous received views received by user 1, and Y (1) will serve as side information for the transmission of X to user 1. We suppose that the transmissions of X to different viewpoints are independent. Figure 2.5 presents the transmission of

sending a single source X, with several clients request for this view X using different SI Y (1), . . . , Y (J ) by network. S defines the storage rate on the server. Rj defines the rate needed to transmit source X to user j with side information Y (j). For FTV, we are interested in the achievable rate-storage region which is a set of Assuming that in binary case pj ≤ 0.5, with the properties of the entropy function, we have: S≥H( max p ) (2.15) j=1,…,J j .

LDPC code construction from protographs

An alternative way to represent irregular LDPC codes is the use of protographs [33, 46]. Protographs allow for a precise control of the connections in the parity check matrix of the code, and lead to efficient Quasi-Cyclic (QC) parallel hardware imple-mentations [50].

A protograph S is a small Tanner Graph of size Sm × Sn with Sm/Sn = m/n = R. Each row (respectively column) of S represents a type of CN (respectively of VN). The protograph S thus describes the number of connections between Sn different types of VNs and Sm different types of CNs. A parity check matrix H can be gener-ated from a protograph S by repeating the protograph structure Z times such that n = ZSn, and by interleaving the connections between the VNs and the CNs. The interleaving can be done by a PEG algorithm [37] that not only permits to satisfy the protograph constraints, but also to lower the number of short cycles that could severely degrade the decoding performance of the matrix H.

Decoding algorithms for LDPC codes

There exist several decoding methods for LDPC codes. These methods were invented in the context of channel coding, but they can also be applied to source coding with side information, as described in this section. Bit-flipping decoder, Gallager-A/B decoder and Sum-product decoder are among the most common decoders [49]. In this section we describe these three decoders.

Bit-flipping decoder

The rows of the LDPC matrix H represent parity check equations. The idea of the Bit-flipping [47] algorithm is to correct one by one the bits that are involved in the largest number of unsatisfied parity check equations. Some definitions are given first:

• We denote by ei the number of unsatisfied parity check equations associated to VN xi.

• xˆi ∈ {−1, 1} is the polar representation of xi, xˆi = −1 corresponds to xi = 1, xˆi = 1 corresponds to xi = 0.

• The number of iterations is denoted by ℓ.

• The message from CN uj to VN xi (i ∈ Vj ) is denoted by Ψc(j → i).

• The message from VN xi to CN uj (j ∈ Ci) is denoted by Ψv(i → j).

• The function that takes the decision on the value of xi is denoted by Qi.

The steps of the Bit-flipping are as follows:

1. Initialization: The value xˆi of VNs xi, i ∈ {1, · · · , n} are initialized with the side information bits yi, that is xˆi = 1 − 2yi. The counters ei are set to zero. The messages from VNs to CNs are initialized as Ψv(0)(i → j) = xˆi, ∀j ∈ Ci (3.12).

2. CN update: The messages Ψc(ℓ)(j → i) are calculated as: (ℓ) Π (ℓ) (3.13) Ψc (j → i) = (1 − 2uj ) Ψv (i → j) i Vj.

3. VN update: With (3.13), all the m parity equations are checked. Once a parity equation is not satisfied, the counters ei of the associated VN are increased by one. It means that, ei(ℓ) =1{Ψc(ℓ)(j → i) = −1}, ∀i ∈ Vj (3.14).

Density evolution for the Gallager-A decoder

We now describe into details the density evolution equations for the Gallager A decoder and for the sum-product decoder. For Gallager-A decoder, we calculated the messages Ψ(cℓ)(j → i) by equation (3.18), and Ψ(vℓ)(i → j) by equation (3.19). The Density evolution evaluates the performance of an ensemble of codes with the same CN and VN degree distributions. Here, we consider regular LDPC code with CN degree dc and VN degree dv. In order to evaluate the probability distributions of Ψ(cℓ), Ψ(vℓ), we define.

• Probability distribution of Ψ(cℓ): {−1, 1}.

• Probability distribution of Ψ(vℓ): {−1, 1}.

qα(ℓ) = P (Ψ(cℓ)(j → i) = α), where α ∈.

p(αℓ) = P (Ψ(vℓ)(i → j) = α), where α ∈.

Then, the probability distribution of Ψ(cℓ) after applying equation (3.18) is [26]. (ℓ) 1 (ℓ−1) (dc−1) (ℓ) 1 (ℓ−1) (dc−1) q−1 = 1 − 1 − 2p−1 q1 = 1 + 1 − 2p−1 (3.28) Then with the majority voting operation in equation (3.19), we have p−(ℓ1) = p1(0) q−(ℓ1) dv −1 + p−(0)1 1 − q1(ℓ) dv −1 (3.29)

Table of contents :

List of Figures

List of Tables

1 Introduction

1.1 Source coding for Free Viewpoint Television

1.1.1 InterCom project

1.1.2 Source coding for FTV

1.2 Limitations of standard compression systems to tackle FTV

1.3 Slepian-Wolf source coding with LDPC codes

1.4 My contributions

1.5 Organization of the manuscript

2 Information theory results

2.1 Entropy definitions

2.2 Theoretical results

2.2.1 Lossless source coding without side information

2.2.2 Lossless source coding with side information

2.2.3 Lossless source coding for FTV

2.3 Conclusion

3 Low Density Parity Check codes

3.1 LDPC codes

3.1.1 Definition of LDPC codes

3.1.2 LDPC code construction from protographs

3.2 Decoding algorithms for LDPC codes

3.2.1 Bit-flipping decoder

3.2.2 Gallager-A/B decoder

3.2.3 Sum-Product decoder

3.3 Density evolution

3.3.1 Density evolution for the Gallager-A decoder

3.3.2 Density evolution for the Sum-product decoder

3.4 Protograph optimization

3.4.1 Differential Evolution

3.4.2 Optimization of protographs using Differential Evolution

3.4.3 Optimization results

3.5 LDPC codes construction

3.5.1 Progressive Edge-Growth (PEG) construction

3.5.2 LDPC codes construction using protograph based on PEG algorithm

3.6 Performance comparison of the three decoders

3.7 Rate-compatible LDPC codes for channel coding

3.7.1 Puncturing

3.7.2 Parity Check Matrix extension

3.8 Rate-adaptive LDPC codes for source coding

3.8.1 Rateless

3.8.2 LDPCA

3.8.3 Method starting from rate 1/2

3.9 Conclusion

4 Proposed methods for the construction of rate-adaptive LDPCcodes

4.1 Rate-adaptive code construction

4.1.1 Rate-adaptive code construction

4.1.2 Rate adaptive condition

4.2 Intermediate matrix construction without protograph

4.2.1 Degree distribution for H1→2

4.2.2 Connections in H1→2

4.2.3 Construction of the set S′

4.3 Intermediate matrix construction with protograph

4.3.1 Protograph S2 of parity check matrix H2

4.3.2 Optimization of the intermediate protograph S1→2 .

4.3.3 Algorithm Proto-Circle: connections in H1→2

4.3.4 Construction of the set S′

4.4 Generalization to several rates

4.4.1 Protograph extension

4.4.2 Anchor rates

4.5 Simulation results

4.5.1 Simulation results without protograph

4.5.2 Simulation results with protograph

4.5.3 Simulation results at full rate range

4.6 Conclusion

5 Application to FTV

5.1 Generation of video files

5.2 Symbol-based and bit-based models

5.3 Joint statistical model between Xn and Yn

5.3.1 Laplacian Model

5.3.2 Q-ary symmetric Model

5.4 Probability calculation from symbol-based model to bit-based model

5.5 Decoding scheme with bit-based source and symbol-based side information

5.6 Rate-adaptive construction

5.7 Simulation results

5.7.1 X and Y (j) with high dependency

5.7.2 X and Y (j) with middle dependency

5.7.3 X and Y (j) with low dependency

5.8 Conclusion

6 Conclusions & Perspectives

Bibliography