Get Complete Project Material File(s) Now! »

Mathematical introduction

This mathematical introduction describes the frameworks in which this thesis works take place, and presents mathematical tools used in the following chapters, for self-containment.

First, it introduces the setting of forecasting with expert advice, and two corresponding important frameworks: the \individual sequences » setting and the \batch » setting. Then, it presents several classical algorithms for sequential aggregation, and some bounds they guar-antee. Afterwards, it tackles a generalization of sequential aggregation: online convex optimi-sation, with a focus on the importance of the losses at hand, and introduces \online-to-batch » framework conversions. The nal part is devoted to a discussion about regularization, which will be at the heart of Chapter 3.

Aggregation for individual sequences and in the batch setting

Aggregation for individual sequences

Online prediction. The framework of online forecasting consists in predicting a time-evolving quantity yt in a convex space E (often R or Rd), the \observation », at several instants (\rounds of prediction ») t = 1; 2; : : : The total number of rounds, T , can be known beforehand or not. Before each round, the statistician predicts a value ybt, then the environment reveals the real observation value yt, and the statistician su ers a loss ‘(ybt; yt). The goal of the statistician is to minimize the cumulative loss

Classical loss functions include: the square loss: ‘(y ; y ) = (y y )2 the absolute loss: ‘b(t yt;t yt) =btyt tyt j b jb the \0/1 loss »: ‘(ybt; yt) = 1ybt6=yt

Online prediction is the framework of a large number of problems, from weather forecasting (Mauricette et al. [2009],Zhu and Pi [2014]) to tra c and travel times (Bajwa et al. [2005]), electricity consumption (Devaine et al. [2013]) or stock markets (Gokcan [2000]).

The case of experts aggregation. The experts aggregation setting consists in building the forecast ybt by a combination (in this thesis, it will be a linear combination) of predictions of exterior forecasters, often called \experts ». These can be algorithms, institutions, human beings. . . We assume that the number of experts is nite and denote it by K. The experts are indexed by k 2 f1::K g, and release a forecast at the beginning of each round t. We denote by fk;t the forecast of the k-th expert for round t.

The object of an aggregation algorithm is to choose the weights uk;t.

The process of online prediction with expert advice is summarized in Setting 3.

Some modi ed versions of this framework exist: for example, Blum [1997] and Freund et al. [1997] deal with specialized experts, that do not all provide forecasts at every round, and Gofer et al. [2013] tackles the problem of so-called \branching experts ».

One can notice that this framework covers, for example, the case of geometric (and more generally multiplicative) averages, since applying the log function transforms these averages into \additive » averages considered in our framework.

Setting 3 Online forecasting with expert advice

Input: Decision space E, K experts.

for t = 1; 2; : : :

1. Each expert k provides a forecast fk;t

2. Based on all available data, the statistician chooses weights (u1;t; : : : ; uK;t) and a fore-

3. The environment reveals a value yt

4. The statistician incurs the loss ‘(ybt; yt) and the experts incur losses ‘(fk;t; yt)

Formally, expert aggregation is a particular case of linear regression, though with some speci cities.

Firstly, if some (or even most) of the experts are assumed to perform well, then it makes sense to use convex algorithms (i.e., algorithm with nonnegative weights summing up to 1; we will then denote these weights by pk;t instead of uk;t), whereas these algorithms may have less interest in more general linear regression setups. Yet, classical linear aggregation methods can also be used in the experts setting (cf. the Ridge and LASSO forecasters discussed in Section 2.2.3, and applied for instance in Chapter 6).

Secondly, the performance is generally not measured in some absolute manner, (e.g., through the cumulative loss): but rather relatively to a reference benchmark linked to the experts. The cumulative loss of the k-th expert until round t will be denoted by:

In this thesis, we will often focus on the following quantity:

b t b t b

Xs X min Lk;t

Rt := ‘(ys; ys) min ‘(fk;s; ys) := Lt

=1 k=1::K s=1 k=1::K

It is called the (cumulative) regret, because it measures how much the statistician would have improved his or her performance if he or she had followed the best (in hindsight) expert (supposing that the real observations would have remained y1; : : : ; yt).

It is also possible to compare the algorithm to other benchmarks that the best expert, for example the best constant combination of experts:

t ( 1 ;:::; K ) t K !

‘(ys; ys) 2 ‘ kfk;s; ys

with being RK (best linear combination), or the probabilistic simplex (best convex combi-nation):

( K ) = ( 1; : : : ; K ) : 8 k; 0 6 k 6 1 and X k = 1 : k=1

This leads to various notions of regret.

Inequalities on the regret are sometimes described as \oracle inequalities » because they involve benchmarks (e.g., the minimum cumulative loss among the experts or their combina-tions) that depend on quantities that are not available to the learner during the forecasting period (the \best » expert and the \best » expert combination are only known in hindsight).

Individual sequences In the \individual sequences » setting, the data is assumed generated in an unknown deterministic way: no modeling (except boundedness) is performed on the data-generating process. It can take any value (within some range) at any instant. Therefore, theoretical results do not focus on the prediction of one precise round, rather they are often uniform bounds on the (cumulative) regret, valid for any individual sequence of values for the data (hence the name \individual sequences »): 8 y1; : : : ; yT ; RbT 6 rT :

The bound rT on the regret depends on the algorithm and on the hypotheses on the loss. For a loss bounded by M, a trivial bound on the regret for any algorithm is the linear (in T ) quantity M T . Therefore, theoretical results aim at bounds that sublinear in T as for the regret:

rT = o(T )

Equivalently, they aim at a vanishing bound on the averaged regret: RbT =T 6 rT =T with lim rT =T = 0: T!+1

The absence of major hypotheses prevents deducing links among the observations, and therefore limits the theoretical impact of a learning sample (preliminary sample given to the learner to help building the algorithm), which is usually not considered at all in the theorems. However, such a learning sample is often very useful in practical applications, allowing to \tune » the algorithm beforehand and avoid some chaotic rst forecasts.

The absence of modeling and the uniform guarantees make these methods quite robust, that is why the term \robust aggregation » can be used to refer to the algorithms of expert aggregation in this individual sequences framework.

An important reference in this eld is the monograph by Cesa-Bianchi and Lugosi [2006]. One can also cite the papers by Freund and Schapire [1997], Littlestone and Warmuth [1994], or Cesa-Bianchi et al. [2007].

Batch setting

In the so-called \batch setting », the learner has immediately access to all the past observations and forecasts (the full \batch » of them, hence the name), and generally has to make only one forecast (or several forecasts, but without supplementary information or feedback).

Formally, the learner has observed N vectors x1; : : : ; xN (the regressors), the corresponding N output values y1; : : : ; yN and tries to forecast the output value yN+1 given xN+1. The (xi; yi)i=1:::N form the learning sample. Contrary to the previous setup (individual sequences online learning), in the batch setting the learning sample plays a crucial role, as it is the main source of information for the \one-shot » forecast.

The process of batch prediction is summarized in Setting 4.

Setting 4 Batch forecasting process

Input: Decision space E.

Learning sample:

The statistician has access to N vectors x1; : : : ; xN and N corresponding values y1; : : : ; yN Forecasting stage:

1. A vector xN+1 is revealed

2. The statistician chooses a forecast yb

3. The environment reveals a value yN+1

4. The statistician su ers the loss ‘(y;b yN+1)

In this context, the data is generally considered as random variables, upon which some stochastic assumptions are made (it is then called \stochastic batch setting »). In particular, to enable learning, the distribution of (xN+1; yN+1) is generally linked to the distributions of the (xi; yi)i6N , for example through an assumption of stationarity, or even more strongly through an i.i.d. (independent and identically distributed) assumption.

The performance of an algorithm is measured by the risk, which is the expected loss:

Ry := E ‘(y(xN+1); yN+1) (x1; y1; : : : ; xN ; yN )

In the same spirit as above, one usually seeks guaranteesj not directly on the risk, but relatively bb b to the risk of some benchmark, either in expectation or with high probability with respect to (x1; y1); : : : ; (xN ; yN ).

For instance, one can seek guarantees relatively to the best linear function with high probability:

P(x1;y1);:::;(xN ;yN ) Rbyb f linear E N+1 ); y N+1 ) 6 N > 1 N (2.1.1)

min ‘(f(x

for some N and N , where P(x1;y1);:::;(xN ;yN ) denotes the probability with respect to (x1; y1); : : : ; (xN ; yN ).

Two main settings exist: the case where both the xi’s and the yi’s are random variables is called \random design »; the case where the xi’s are xed (chosen or not by the learner) and only the yi’s are random variables is called \ xed design ».

One can notice that \classical » linear regression problems, such as nding an estimator b of a vector , given a matrix X and the values Y =X + »

where » is a random noise vector, correspond to the batch setting. Similarly to the individual sequences forecasting, the term \aggregation » is usually used for situations where some indi-vidual predictors (the columns of X in the previous example) are assumed to be good and are used as benchmarks to be competed against. However, one should note that Tsybakov [2003] uses the term of \aggregation » for di erent problems, depending on the benchmark (which is the \best » vector in a set one wants to compete against. These problems are the model selection aggregation ( is the canonical basis of Rd); the convex aggregation ( is the probabilistic simplex of Rd); the linear aggregation ( = Rd).

In the batch setting one can derive results on the forecasts (cf. (2.1.1)), like in the individual sequences framework, but also on the \quality » of the learning (e.g. bounds on kb k in the previous example). These two objectives are known as the \prediction problem » and the \estimation problem ».

Some comparisons between batch and individual sequences settings

One can see that in the batch setting, the N rounds taken into consideration are learning rounds, on which no predictions and no errors are made by the statistician, and which help to better know the data, so that the expected error at the nal forecasting round tends to decrease when N grows. On the contrary, in the online setting, the T rounds taken into account are rounds of prediction, so the cumulative error tends to grow when T grows. However, the averaged regret RbT =T tends (for \reasonable » algorithms!) to decrease; some links between averaging in online learning and batch results will be shown in the \online-to-batch » parts (Section 2.3.3 and Section 4.3). More generally, it is possible to \transfer » methods that are e cient in the online setting to the batch setting.

Conversely, we will make use in Chapters 5 and 6 of two algorithms, the Ridge and LASSO forecasters, that were initially developed for linear regression with xed design, but that give interesting results for individual sequences predictions (cf. Section 2.2.3).

Algorithms for the forecasting of individual sequences

Introductory remarks

Failure of some naive algorithms. One of the simplest aggregation algorithm is the (arithmetic) average of the experts’ predictions: yt = K k=1 fk;t=K: It is obvious that for most losses it can fail: even if some experts are good, some poorly-predicting experts may drive P he average to su er a large loss. b

Another \natural » approach is to \follow the leader »: allocate a weight 1 to the expert that has su ered the smallest cumulative loss until the instant to forecast (the \leader »), and a weight 0 to the other experts. This approach also fails: it su ces to consider a situation where, at each instant t, the \leader » until t 1 su ers the highest loss at time t, and then loses its leadership; such situations exist (in particular when two experts have a similar overall performance).

Necessity of hypotheses on the loss. Without hypotheses on the loss, it is not possible to guarantee a sublinear regret, whatever the algorithm. For instance, consider the loss b ‘(x; y) = 1×6=y, the set of predictions 0; 1 and two constant experts: f0 always forecasting this loss, for any sequence of predictions, there exist a 0 and f1 always forecasting 1. With sequence of observations in 0; 1 that leads to a loss of 1 at each round (y = 1 when y = 0, and yt = 0 when yt 6= 0), so the cumulative loss of this sequence of predictionst is T . Ast for the experts f0 and f1, at each round one of them receives a loss 0 and the other one receives b a loss 1, so over T rounds one of them has a cumulative loss inferior (or equal) to T =2. As a consequence the regret is superior (or equal) to T =2, which is linear in T .

We will see below that a su cient assumption to get interesting results is the convexity of the losses (in their rst argument).

Table of contents :

1 Introduction générale

1.1 Présentation générale de la thése

1.2 Cadre mathématique de la thése

1.3 Chapitre 3 : Obtention d’une régularisation optimale dans un cadre batch stochastique

1.4 Chapitre 4 : Amélioration d’un algorithme adaptatif : MetaGrad

1.5 Chapitre 5 : Faisceaux de prévision par agrégation séquentielle

1.6 Chapitre 6 : Application de méthodes d’agrégation _a la prévision pétroliére

2 Mathematical introduction

2.1 Aggregation for individual sequences and in the batch setting

2.2 Algorithms for the forecasting of individual sequences

2.3 Online convex optimization

2.4 Regularization in a stochastic batch setting

3 Minimax regularization

3.1 Introduction

3.2 Proof of Theorem 3.1.4

3.3 Technical material and proof of Proposition 3.1.6

3.4 Minimax regularization function in the _xed design setup

4 Improvements on an online convex optimization algorithm: MetaGrad

4.1 The MetaGrad algorithm

4.2 Speeding MetaGrad up

4.3 Improved \online-to-batch » conversions for Online Newton Step and MetaGrad

5 Providing long-term forecast intervals using sequential aggregation

5.1 Introduction

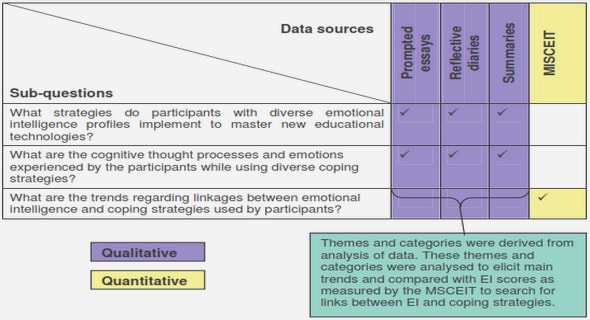

5.2 The forecast intervals framework and methodology

5.3 Forecast intervals with the Ridge regression forecaster

5.4 Forecast intervals with the EWA algorithm

5.5 Extension to the Fixed-Share algorithm

5.6 Lines of future works

5.7 Supplementary material

6 Sequential model aggregation for production forecasting

6.1 Introduction

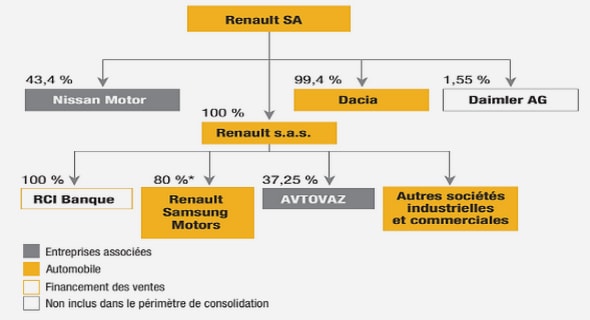

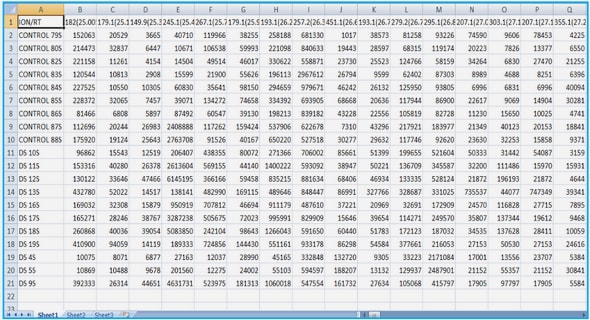

6.2 Brugge case

6.3 How to combine the forecasts of the 104 models considered

6.4 Results of point aggregation for one-step-ahead forecasts

6.5 Results for interval aggregation

6.6 LASSO

6.7 Appendix: Technical details for interval forecasts

6.8 Supplementary material