Get Complete Project Material File(s) Now! »

Statistical learning

Machine learning (ML) is a scienti c domain at the interface between applied mathematics, opti-mization and computer science. It focuses on the study of algorithms and statistical models that computer systems use to perform a speci c task. The process of learning begins with observations or data, such as examples, direct experience, or instruction, in order to look for patterns in data and make better decisions in the future based on the examples that we provide. ML has various applica-tions such as email ltering, recommendation systems, natural language processing, bio-informatics, economics, computer vision or even fraud detection. For example, based on a dataset of items and ratings, a recommendation system seeks to predict the \rating » or \preference » a user would give to an new item given its previous preferences. Another example is image classi cation. The learner received di erent types of labeled images (dogs or cats for instance). Given this dataset, the goal is to automatically label a new image occurring in the process. This is an example of supervized Learning. On the other hand, in community detection, the learner aims to identify communities interacting with each other. This is an example of unsupervised learning.

The main focus of this thesis is supervized learning. More precisely, we will be interested in robust supervized learning. Although robust unsupervised learning also exists, it is out the scope of this thesis.

Some de nitions In this chapter, we present and use many tools borrowed from empirical pro-cesses. Here, are some very useful de nitions and notations that we will use all along this chapter.

Definition 1.1: Empirical measure, empirical process

Let X; X1; ; Xn be independent random variables taking values in a measurable space (E; E) with common distribution P . The empirical measure based on the sample (X1; ; Xn) is defined where x denotes the Dirac’s measure. For a class F of measurable functions f : E 7!R we write P f = E[f(X)] and Pnf = 1 n f(Xi) :n Xi =1

The empirical process indexed by F is de ned by f(P Pn)(f) : f 2 Fg, see (Van Der Vaart and Wellner, 1996).

In supervized machine learning (Vapnik, 2000; Friedman et al., 2001; Shalev-Shwartz and Ben-David, 2014; Bishop, 2006), the goal is to predict an output Y in Y based on features X in X , that is to say, to understand the relationship between an ouput Y and inputs X. The set Y can be either nite (typically f0; 1g) or in nite (Y R), leading to two important problems in supervized machine learning: Classi cation, when Y is nite. If jYj = 2, where j j denotes the cardinality of Y, the problems is binary classi cation.

Regression, when Y is a continuous subset of R.

Typically X = Rp, where p is large and denotes the dimension of the problem. For example:

For binary classi cation: X = Rp can correspond to a set of images encoded with their p pixels and Y = f0; 1g, if the label is 1, the image is labelled as a dog, otherwise as a cat.

For a regression problem, X can summarize socioeconomic factors and Y = [0; 100] depicts the score of the left-wing during the next presidential election.

The output Y 2 Y is not always a deterministic function of an input X 2 X due to random factors such as measurement errors. Thus, the couple (X; Y ) is modeled as a random variable with a certain unknown distribution P . Let PX denote the marginal distribution of X. The goal becomes to predict the output Y with the input X given that (X; Y ) is sampled from P . To do so, we de ne a predictor as a measurable function f : X 7! Y. The random variable f(X) serves to predict Y . The set of possible predictors (i.e measurable functions from X to Y) is denoted by F(X ; Y). To measure the quality of a predictor f in F(X ; Y), we introduce a loss function ‘:Y Y7!R+ ;

such that ‘(f(X); Y ) measures the error of predicting f(X) while the true answer is Y . It is always assumed that ‘(y; y) = 0, for every y 2 Y. Two important examples are:

Example of classi cation: let Y = f 1; 1g and ‘(y; y0) = 1fy 6= y0g. Thus, ‘(y; y0) = 1 if y 6= y0 and ‘(y; y0) otherwise. This loss function is often replaced by convex surrogates for computation purposes such as the Hinge loss, ‘(f(X); Y ) = max(0; 1 Y f(X)) or the logistic loss, ‘(f(X); Y ) = log(1 + exp( Y f(X))).

Example of regression: let Y be a continuous subset of R and ‘(y; y0) = (y y0)2=2. It is also called least squares regression.

Given a random couple (X; Y ) with distribution P , the quality of a prediction function is measured by its risk, or generalization error, de ned as the averaged loss under the distribution P of the observations: R(f) = E(X;Y ) P [‘(f(X); Y )] :

Adopting the notations in De nition 1.1, we also write R(f) = P ‘f . The optimal predictor fP is de ned, when it exists, as the minimizer of the risk over the set of all measurable functions F(X ; Y) fP 2 argmin R(f) : f2F(X;Y)

Although the function fP may not exist, it does for standard loss functions used in practice. In this case, fP is called a Bayes predictor. For example, in regression with the square loss, fP (X) = E(X;Y ) P [Y jX]. However, the distribution P being unknown, the Bayes predictor fP is also unknown and must be approximated. To do so, a training dataset of n independent and indentically observations D = (Xi; Yi)i2J1;nK in (X ; Y)n with the same distribution P as (X; Y ), is given. One would like to use the dataset D to predict the output Y associated with the input X. We can formalize the problem through the notion of learning rule Z : [1n=1(X Y)n 7! F(X ; Y) de ned as a measurable function that maps the set of observations to an estimator f^. Note that the observations (Xi; Yi)i2J1;nK are random. Consequently, the predictor f^ = Z (Xi; Yi)i2J1;nK and its risk are also random. One of our main goal, is to nd learning rules with a risk close to the one of fP with high probability. In a non informative way, we search for learning rules such that with large probability R(Z (Xi; Yi)i2J1;nK R(fP ). Such results can also be derived in expectation E(Xi;Yi)i2J1;nK P n R(Z (Xi; Yi)i2J1;nK R(fP ) :

In this thesis, we propose results holding with exponentially large probability. In fact, it turns out that such results often imply bounds in expectation (see Section 1.2.2 for an example).

Empirical Risk Minimization (ERM)

Definition and properties

Since the risk R( ) is unknown, the most common and wide-spread learning rule consists in replacing the expectation with respect to P by the empirical measure and minimize it. This method is known as Empirical Risk Minimization (ERM) and is de ned as Z (Xi; Yi)i2 J K 2 2FXY Xi Rn(f) with Rn(f) = n 1;n fargmin(;) ‘(f(Xi); Yi) : =1

Adopting the notations in De nition 1.1 we write Rn(f) = Pn‘f . This natural idea dates back to works of Gauss in the early 19th-century who introduced the least-squares estimator, which is the ERM for linear predictors with the square loss function. Many important methods of statistical estimation such as maximum likelihood or more general M-estimation (Van de Geer, 2000) are versions of empirical risk minimization. The general theory of empirical risk minimization began with the works of Vapnik and Chervonenkis (Vapnik, 2000) in the late 1970s and the early 1980s. Their main idea was to relate the quality of prediction of the empirical risk minimizer with the accuracy of approximation of the true distribution P by it empirical counterpart Pn, uniformly over a well-chosen class of functions. Their approach necessitates a uniform control the empirical process f(P Pn)(f); f 2 Fg, for F a well-chosen class of functions (see below in this section). The authors introduced a number of natural and important measures of complexity of class of functions, such as entropy and VC-dimension (VC standing for Vapnik-Chervonenkis ).

This intuitive learning rules raises a natural question: how does a minimizer of the empirical risk Rn performs, that is, how does its risk behave compared with the one of fP ? Although a prediction rule works well on observed points, it does not guarantee that its risk is small. Indeed, the set of all measurable functions is very large, and it is easy to nd a prediction function f such that f(Xi) = Yi for every i = 1; ; n. Such a predictor f is a minimizer of the empirical risk. However, tting perfectly the dataset yields in general to poor generalization properties (Shalev-Shwartz and Ben-David, 2014), a phenomenon known as over- tting. A standard tool to avoid such pathological situations is to use regularization methods. There are two equivalent formulations.

Restriction to a small class of functions: let F F(X ; Y) be a class of functions, large enough to reasonably approximate any measurable function, but not too large to avoid the over- tting phenomenon. The minimization of the empirical risk is restricted to the class of functions F f^F 2 argmin Pn‘f : f2F

Introduction of a penalization: Let : F(X ; Y) 7!R+ be a function penalizing the least regular measurable functions and > 0. We de ne f^ 2 argmin fPn‘f + (f)g : f2F(X;Y)

Since these two approaches are equiv-alent, we will focus on the rst one, when the minimization of the empirical risk is restricted to a sub-class of measurable functions F. Let fF be the minimizer of the risk over F. fF 2 argmin R(f) : f 2F With these de nitions in mind, we have R(f^F ) R(fF ) R(fP ) : Let R(f^ ) R(f ) be the excess risk. Figure 1.1: Risk decomposition F P

This quantity is always non-negative and the smaller it is, the better f^ predicts. The excess F P k‘F . Results risk can be decomposed in two terms: the estimation error and approximation error. R(f^F ) R(fP ) = R(f^F ) R(fF ) + R(fF ) R(fP ) : | {z } | {z }

Estimation error Approximation error

We illustrate this error decomposition in Figure 1.1. The estimation error comes from the fact that f^F minimizes the empirical risk instead of the true risk. It increases with the complexity1 of F. On the other hand, an increasing number of observations makes the empirical risk closer to the true risk and thus reduces the estimation error. The approximation error is due to the fact that fF minimizes the risk only on a subset of all measurable functions. It decreases with the size of F. Consequently, there is a trade-o to nd, to optimize the choice of F . It is known as the bias-variance trade-o where the variance is due to the estimation error while the bias is due to the approximation error. In this thesis, we will only focus on the estimation error of a given estimator. We want to relate

F F F . Taking the risk of f^ with the one of f , the best risk one can hope using functions in the class the point of view of Vapnik and Chervonenkis, we relate the estimation error R(f^F ) R(fF ) with the empirical process f(Pn P )(‘f ); f 2 Fg, indexed by the class F. In particular, we have the following upper bound for the estimation error R(f^F ) R(fF ) = R(f^F ) Rn(f^F ) + Rn(f^F ) Rn(fF ) + Rn(fF ) R(fF ) | {z } | {z } | {z } supf2F jP ‘f Pn‘f j f j k 0 supf2F jP ‘f Pn‘f j 2 sup j P ‘ f P n ‘ := P n P k‘F f 2F

To derive upper bounds for the estimation error, it is su cient to uniformly control the empirical process f(Pn‘f P ‘f ); f 2 Fg over the class F. Thus, by analysing deviations between the risk and its empirical version it is possible to control the estimation error R(f^F ) R(fF ). Such deviations are at the heart of the theory of empirical processes (Van Der Vaart and Wellner, 1996; Pollard et al., 1989; Van de Geer, 2000). Concentration results coupled with powerful tools from empirical processes theory allow to prove many non-trivial and deep results in statistical learning. In particular, in the 1990s, Talagrand proved a uniform version of Bernstein’s inequality allowing to concentrate kPn P k‘F around its expectation (Talagrand, 1996). This result had a huge impact on the theory of empirical processes and empirical risk minimizer. We will present in Section 1.3 an example of application of this outstanding concentration inequality.

General analysis of the statistical error

In this section, we present standard arguments to bound supf2F jPn‘f P ‘f j = kPn are derived with high probability and in expectation. The main tools are concentration inequalities and Rademacher complexities. The rst step consists in quantifying the deviation of kPn P k‘F around its expectation EkPn P k‘F . To do so, it is necessary to impose strong assumptions over the class ‘F , where ‘F = f‘ f( ); ; f 2 Fg. For a long time (Devroye et al., 2013; Koltchinskii, 2001; Bartlett, 1998), it has been assumed that the class ‘F was bounded, that is, there exists a constant c > 0 such that j‘(f(x); y)j c, for every f 2 F and (x; y) 2 X Y. The main reason is that no concentration results were available to handle unbounded class ‘F . Even if more general results are now available, we focus here on bounded classes ‘F . Two common examples are:

Example 1: if ‘(y; y0) = 1 f 6 g then c = 1. y = y0

Example 2: if y 7!‘( ; y) is convex and L-Lipschitz (j‘(y1; y) ‘(y2; y)j Ljy1 y2j, for every y1; y2 in k ) and F = f ; ( ) ; 2 Rp s.t k k2 Bg, where ( ) is a bounded feature k 2 X

map i.e (x) Y2 D for every x . In this case, c = LBD because for every in Rp such that k k2 B and (x; y) 2 X Y ‘(0; 0)j Lj ; (x) j Lk k2k (x)k2 LBD : j‘ ; (x) ; y j = j‘ ; (x) ; y

In this example, the minimizer of the empirical risk minimizer is unique and de ned as ^ 1 Xi ; (1.1) 2 k k = argmin B n ‘ (Xi); ; Yi* Rp: 2 =1 where the loss is L-Lipschitz.

This boundedness assumption allows to control the deviations of kPn P k‘F around its expectation with high probability. To do so, we use Theorem 1.1, known as Mc Diarmid’s inequality that we recall here.

Table of contents :

1 Introduction

1.1 Statistical learning

1.2 Empirical Risk Minimization (ERM)

1.3 Localization methods for ERM

1.4 Localization methods for regularized procedures

1.5 Complexity parameters in statistical learning

1.6 Robustness in learning theory

1.7 Summary of the contributions

2 Robust ERM and minmax-MOM

2.1 Introduction

2.2 ERM in the sub-Gaussian framework

2.3 Minmax MOM estimators

2.4 Relaxing the Bernstein condition

2.5 Bernstein’s assumption

2.6 Comparison between ERM and minmax MOM

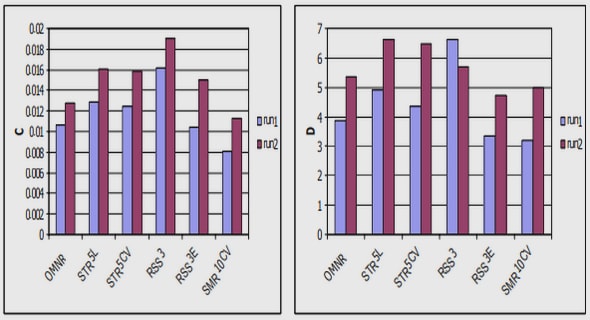

2.7 Simulation study

2.8 Conclusion

2.9 Proof of main Theorems

2.10 Other proofs

3 Robust RERM and minmax-MOM

3.1 Introduction

3.2 Mathematical background and notations

3.3 Regularized ERM with Lipschitz and convex loss functions

3.4 Minmax MOM estimators

3.5 Relaxing the Bernstein condition

3.6 Applications

3.7 Simulations

3.8 Conclusion

3.9 Proof main theorems

4 Complexity dependent bounds

4.1 Introduction

4.2 Regularized Empirical Risk Minimization (RERM)

4.3 Robustness to outliers and heavy-tailed data via Minmax MOM estimators

4.4 Applications

4.5 Conclusion

4.6 Proof main theorems

4.7 Supplementary lemmas

5 Robust RERM: outliers in the labels

5.1 Introdution

5.2 Non-regularized procedures

5.3 High dimensional setting

5.4 Conclusion and perspectives

5.5 Simulations

5.6 lower bound

5.7 Non-isotropic design

5.8 Proofs main Theorems

6 Benign overtting in the large deviation regime

6.1 Introduction

6.2 Setting

6.3 Main results

6.4 Proofs of the main results

6.5 Supplementary material