Get Complete Project Material File(s) Now! »

Related work

In this section, I will present the existing literature and tools on some topics related to the TheoremKB project and more precisely to my intern-ship.

Information extraction from Scientific publications

There as been a lot of research around the extraction of information from scholarly documents, mainly focused on two main areas of the document: The header (title, authors, abstract, etc.) and citation data. A few other works have looked at the extraction of other parts of the document, such as gures [21] and tables [13]. grobid [2, 15], created by Patrick Lopez is a free, open-source tool im-plementing these techniques. It takes as input the PDF of a scienti c article and returns an XML le of the paper parsed into di erent sections, with gures, tables and equations extracted. It relies on PDFAlto [3], another useful tool created by the same team, which can read a PDF and return the position of every single word of the PDF.

This tool proved to be very powerful and useful for our task, even if it contains some bugs, like mathematical results often detected as gures. These bugs are probably due to the fact that grobid is trained on a very small corpus of paper, all of them being manually annotated.

Scientific digital libraries and open archives

An important part of the project is to create a knowledge base storing all relevant information about mathematical results and their proof, and more generally about Scientific papers as a whole. It would then be essential to have a public web platform on which researchers could easily navigate, and query the graph of Scientific papers and mathematical results. I will present in this section some existing digital libraries and open archives platform that enables to browse the graph of scholarly documents.

Digital libraries Nowadays, a large number of platforms give access to the Scientificliterature. Most of them are digital libraries. Often these services are directly provided by commercial publishers, such as Scopus (by Elsevier), Web of Science (by Clarivate), or the ACM digital library. Some others are general search engines for scienti c publication, the best known being Google Scholar and Microsoft Academic [23].

Some platforms are more independent digital libraries gathering data from various publishers on one platform (MathSciNet and EuDML for mathematics, DBLP for computer science, PubMed for biology and medicine, etc.).

All these platforms propose the PDF le of the publication, often meta-data (authors, abstract, venue, etc.) and sometimes extract gures and tables from the paper, but they never allow to query directly the mathe-matical statements from the research article. Dissem.in is another digital library, gathering data from various platforms and showing precisely which papers are open access and which are not. It also proposes an API that enables to nd the doi of a paper given its title and authors.

There also exist illegal digital libraries, such as Sci-Hub and Libgen, which give access to a lot of publication without any paywall. We only consider here sources with no legal issues.

Open archives. Another kind of platforms is open archives, on which authors can upload preprints and publications, together with the sources of these publications. For instance, the project HAL originated by the CNRS, counts more than 700; 000 hosted publications. The best known is arXiv, created by Cornell University, and it is the one we will use in this paper. It contains more than 1:7 million papers published on various scienti c topics (mostly mathematics, computer science and physics) since 1991. We can also mix these open archives with a social network style and obtain something like ResearchGate, on which researchers can share and comment articles, as we comment posts on Facebook or LinkedIn.

The S2ORC dataset. SemanticScholar is one of the most interesting of these digital libraries. As a lot of them, it shows the metadata of the article, the references and the citations. They also automatically extract gures, tables and discussed topics in the paper, and they detect related papers. A very interesting feature of this platform is that it sorts references into three categories and automatically detect important references. I will talk about this in more detail in Section 2.3. The website counts more than 80 millions papers and propose a very useful API to easily get a JSON le of any article.

Even more interestingly, the Allen Institute for AI, at the origin of Se-manticScholar, released a huge dataset called s2orc [14]. This contextual citation graph contains 81:1 million academic publications and links between them, but it also contain the parsed full text of 8:1 million open access papers. For each publication, they gathered the di erent versions of the paper and picked the most representative one. Then they used grobid [2, 15] presented above to split the paper in di erent subsections and automat-ically extract gures, table, formulas from the text. As I explained before, grobid also extract references to these elements, and to bibliographical ref-erences. Finally, they simply linked papers in the bibliography of the PDF les to existing papers in their dataset. Figure 2 (and its caption), taken from [14], sums up the content of one document in the s2orc dataset.

Figure 2: [14] For each paper, in-line citations are linked to the correspond-ing bibliography entry within that paper. The bibliography entries are then linked to one of 81.1M candidate papers. References to gures and tables are also extracted from the full text and linked to gure and table objects within the paper.

Other citation graphs. This is not the only contextual citation graph publicly available. In 2009 was published the ACL Anthology Network [19], a graph of scienti c publications derived from the ACL Anthology Corpus manually augmented in order to link references in the bibliography to papers in the corpus (it contains around 25K papers). More recently, Saier and Farber introduced a citation graph derived from arXiv LATEX sources in [22].

References extraction and analysis

Digital libraries and contextual citation graphs mentioned in the pre-vious section are directly useful to researchers, but they are also useful in-directly by the mean of various research tasks. We can cite for instance the citation recommendation task for authors writing a paper, either at a paper level [5, 12] or an in-line level, using the context [9]. It can also be used to detect similar papers in order to build a recommendation system (for instance, Microsoft Academic built is own recommendation system for scienti c papers [10]). There is also work done to generate citations in a realistic and natural sentence, given two papers (the rst one citing the second one) [16].

An interesting task for us is citation extraction and disambiguation. Cohan et al. proposed in [7] a classi er trained to detect the intent of a ci-tation, given the sentence in which the citation appears. They trained it to detect three main intents: Background (for instance, citations in this Section of this report are mainly background citation), Result (these cita-tions are the one that we aim to extract with the algorithm presented in Section 5), and Method. To train their classi er, they used a bidirectional Long Short-Term Memory (LSTM) with an attention layer, followed by three independent Multi-Layer Perceptron (MLP) with three di erent tasks: one with the main task (guessing the citation intent) and the two other with sca olds (e.g., guessing the title of the section from which the citation is taken).

Knowledge bases of theorems

Another aspect of this project is that we want a structured knowledge base of mathematical results. There has been work done on knowledge bases of Theorems in the context of automated theorem provers. Some of these tools include libraries which contain a lot of theorems and their proof. The model MBASE [11] proposed in 2001 aimed to create a knowledge base with formal representations of mathematical results. However, the devel-opment of this model seems to have stopped in 2003, without any system demonstration.

Logipedia is one of the most complete library of mathematical results, put as formal statements and useable for a theorem prover. However, this is not as human-readable as TheoremKB aims to be.

Finally, Ganesalingam and Gowers proposed in [8] a very interesting au-tomated model which aims to write proofs in a human style by trying to mimic researchers’ reasoning.

The CS-CC database

Dataset creation. For our experiments, we needed a dataset of scienti c papers dense enough so that this is likely that there exist links between results from di erent papers. The dataset must not be too big so that our scripts will not take too much time. It is possible to download bulks of papers from arXiv (and other websites), but publications are sorted by months and paper published the same month are less likely to quote each other. Moreover, arXiv enables to download both the pdf and the source of papers published on it (when available).

Instead, I crawled the export version of the arXiv open archives, and downloaded all PDFs and sources of papers published between 2010 and 2020 under the cs-cc category. cs-cc stands for \Computer Science – Com-putational Complexity ». We chose this category because computational com-plexity is a domain with a lot of mathematical results and close enough so that papers will very probably quote each other.

The dataset I gathered contains 6:000 scienti c papers. The second step was to get the links between the di erent articles of the dataset. The rst methods I used to obtain them were not very e cient, but it enabled me to familiarize myself with some useful tools.

Extracting citations. Indeed, I rst tried to use the dissem.in API. If one sends the title of an article, the publication year and the names of the authors to the dissem.in API, the API returns the DOI of the paper. The idea was to nd the DOI of each paper in the dataset, and then the DOI of articles in the bibliography of all papers in the dataset. Once this is done, we just have to nd matching DOI. I tried to extract the information required by the API from the LATEX source code, but, as it is shown in Table 1, more than one fth of all papers in the dataset do not have any latex le in their sources (or no sources at all). Only 62% have a le for the bibliography, often a .bbl le, which is not ideal because its format can vary from one paper to another. Only 3% of them have a .bib le for the bibliography.

Instead of directly using the source les, I decided to extract information directly from the PDF using grobid [2, 15], a tool based on machine learning and able to convert a PDF of a scienti c paper into an XML le. The returned XML le contains structured information on the paper, such as header data (title, authors name, universities, etc.), body data (sections, gures, equations, references, etc.) and most importantly bibliographic data (title, authors name and publication year of each quoted article). How-ever, the results were still not convincing since grobid and dissem.in have their own bugs. Fortunately, the digital library SemanticScholar for scienti c literature o ers an API which only needs the arXiv id of an article and returns a de-tailed JSON le, containing the arXiv id of every arXiv paper referenced in the bibliography. Thanks to that, I was able to obtain a dense graph of articles and inter-articles dependencies.

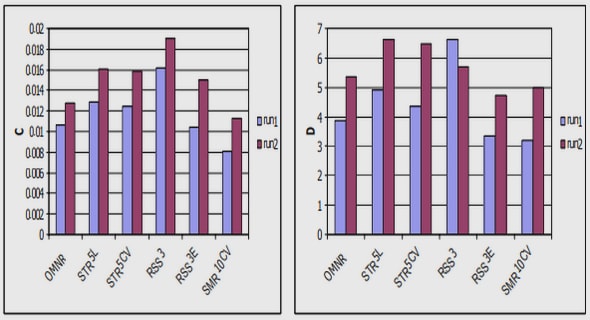

Graph analysis. 2:666 of the 6:000 papers are cited by at least one other paper from the corpus, and 3:190 of them are citing another paper of the corpus. Figure 3 shows the distribution of the number of citations intra-corpus per article. This highlights the fact that despite its small size, this dataset is dense enough for our experiments.

Figure 3: Distributions of the number of citations from (left panel) and to (right panel) other papers of the corpus.

The longest path in this graph is of length 19 and the biggest con-nected component contains 3:167 papers and 8:106 edges between papers. Figure 4 shows a representation of this component obtained using the Net-workX library on Python.

Figure 4: Representation of the graph of dependencies between papers of the corpus. The x-coordinate of a point represents its publication date (between 2009 and 2020) and the y-coordinate represents its number of citations from papers in the corpus.

Theorem Matching

This section is dedicated to my rst attempt at linking results from di erent papers. The idea was to detect pairs of theorems which are similar. For example, it would detect if a theorem in some paper is a restatement of a theorem in one of the papers referenced in the bibliography.

The theorem dataset

For each paper in the cs-cc dataset, I extracted the set of mathematical results and their statement from the LATEX source les. To do so, I sim-ply searched for each \nbeginftheoremg » and \nendftheoremg » using the TexSoup library in Python. For each result, a regexp detects if some paper is cited inside the result and we check if the cited paper is in the cs-cc dataset. If a citation to another paper is detected, we use a classi er to nd which particular results of the cited paper is associated to this one. The di erent classi ers we tried are described in the next sections. Note that we only consider citations which are inside the statement and not the ones which are in the proofs. Indeed, in the rst case, it is more likely that the result is a restatement of a result in the cited paper, and in the second case, the result probably relies on a result of the cited paper.

A total of 41; 167 results were successfully extracted from LATEX les and 308 theorems are referencing another paper from the corpus (there might be duplicates). To see the e ciency of the di erent methods, I selected a subset of 39 theorems in which the precise result of the cited paper we want to nd is already mentioned in the header (e.g « Theorem 3 from [4] »).

Table of contents :

1 Introduction

2 Related work

2.1 Information extraction from scientific publications

2.2 Scientific digital libraries and open archives

2.3 References extraction and analysis

2.4 Knowledge bases of theorems

3 The CS-CC database

4 Theorem Matching

4.1 The theorem dataset

4.2 TF-IDF Vectorizer

4.3 Autoencoder

5 The graph extraction algorithm

5.1 From PDF to XML

5.2 Extracting results

5.3 Associate tags and papers

5.4 Results on the CS-CC dataset

6 The arXiv Database

6.1 The dataset

6.2 Quantitative Analysis

6.3 Qualitative Analysis

7 Future work

7.1 Improve the extraction algorithm

7.2 Crowdsourcing