The Unscented Kalman filter

In the Extended Kalman filter (EKF), the state distribution is approximated by a Gaussian random variable, which is propagated analytically through a first-order linearization of the nonlinear system. This linearization can introduce large errors in the true posterior mean and covariance, which may lead to suboptimal performance and, in some cases, divergence of the filter. The Unscented Kalman filter (UKF) is an alternative to the EKF that has comparable computational complexity while avoiding the computation of Jacobians [JUD95; JU97; JUD00; Wv00; JU04; van04; GA14].

The UKF addresses the approximation issues of the EKF by using a deterministic sampling approach called the unscented transformation. The state distribution is again approximated by a Gaussian random variable, but it is now represented by a minimal set of carefully chosen sample points. These sample points completely capture the true mean and covariance of the Gaussian random variable and, when propagated through the true nonlinear system, capture the posterior mean and covariance accurately to the third order of the Taylor series expansion for any nonlinearity [JU97; Wv00; van04]. The unscented transformation is a method for calculating the statistics of a random variable that undergoes a nonlinear transformation. Consider propagating the target state $x \in \mathbb{R}^n$ through a nonlinear function $z = h(x)$. Assume $x$ has mean $\bar{x}$ and covariance $P_{xx}$. To calculate the statistics of $z$, form a matrix $\mathcal{X}$ of $2n + 1$ sigma vectors $\mathcal{X}_i$ with corresponding weights $W_i$, following the procedure given in [JU97; van04].
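The sigma-point procedure itself is not reproduced in this excerpt. As a rough illustration, the sketch below uses the scaled form of the unscented transformation that is common in the literature, which may differ in detail from the procedure in the cited references. The function names `sigma_points` and `unscented_transform`, the tuning parameters `alpha`, `beta` and `kappa`, their default values, and the range-bearing function `h` in the usage lines are all assumptions introduced here for illustration.

```python
import numpy as np


def sigma_points(mean, cov, alpha=1.0, beta=0.0, kappa=1.0):
    """Return 2n + 1 sigma vectors with mean and covariance weights.

    alpha, beta and kappa are tuning parameters of the scaled transform;
    the defaults here are illustrative assumptions, not recommended values.
    """
    n = mean.size
    lam = alpha**2 * (n + kappa) - n
    # Lower-triangular square root S with S @ S.T == (n + lam) * cov.
    S = np.linalg.cholesky((n + lam) * cov)

    X = np.empty((2 * n + 1, n))
    X[0] = mean
    for i in range(n):
        X[i + 1] = mean + S[:, i]
        X[n + i + 1] = mean - S[:, i]

    w_mean = np.full(2 * n + 1, 1.0 / (2.0 * (n + lam)))
    w_cov = w_mean.copy()
    w_mean[0] = lam / (n + lam)
    w_cov[0] = lam / (n + lam) + (1.0 - alpha**2 + beta)
    return X, w_mean, w_cov


def unscented_transform(h, mean, cov, **params):
    """Approximate the mean and covariance of z = h(x)."""
    X, w_mean, w_cov = sigma_points(mean, cov, **params)
    # Propagate every sigma vector through the nonlinear function.
    Z = np.array([np.atleast_1d(h(x)) for x in X])
    z_mean = w_mean @ Z
    dZ = Z - z_mean
    P_zz = (w_cov[:, None] * dZ).T @ dZ
    return z_mean, P_zz


# Usage: a hypothetical range-bearing observation of a 2-D position.
def h(x):
    return np.array([np.hypot(x[0], x[1]), np.arctan2(x[1], x[0])])


x_bar = np.array([10.0, 5.0])
P_xx = np.diag([0.5, 0.5])
z_bar, P_zz = unscented_transform(h, x_bar, P_xx)
```

For a linear function the transform reproduces the exact mean and covariance, which gives a quick sanity check on any implementation. Averaging a bearing this way is only safe when the sigma points stay away from the angle wrap-around.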

Random Finite Set (RFS) for multi-object filtering

The standard Bayesian approach provides an optimal filtering solution for target state estimation when the number of targets is known and fixed, and when it is known which observation belongs to each target. When the number of targets to be estimated is unknown and time-varying, a probabilistic model of the underlying random process must be considered to accommodate variations in the number of targets as well as their states. A more natural model for this kind of system is called a point process, and it is the subject of a branch of probability theory known as stochastic geometry [SKM96; Ngu06].

For multi-object tracking applications, a more useful and realistic model is the simple-finite point process, also known as the Random Finite Set (RFS). RFS theory was first systematically investigated in the 1970s by Kendall and Matheron, who carried out studies using statistical geometry [Ken74; Mat75]. In 1980, Serra applied RFS theory to two-dimensional image analysis [Ser80] and, since then, the theory has become an essential tool in theoretical statistics, inspiring early practical works in data fusion. However, it was only in the mid-1990s, with Mahler's work on Finite Set Statistics (FISST) and on approximations of the multi-object Bayesian filter, that the RFS approach was consistently adopted in the filtering community [Mah94a; Mah94b; GMN97].

The main idea behind the RFS approach is to treat the collections of individual states and observations as multi-object sets, and then to characterize the uncertainties present in the multi-object tracking problem by modeling these sets as random finite sets, as illustrated in Figure 1.3.

Multi-object filtering using RFS

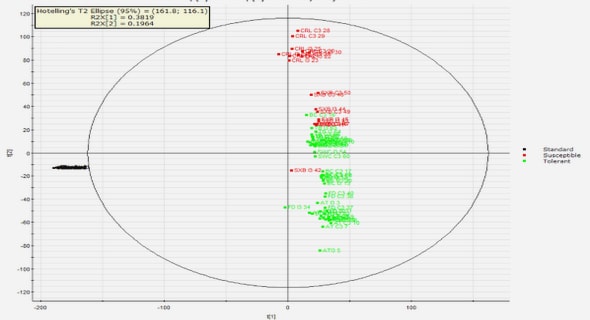

In the multi-object filtering problem, not only are the states of the objects time-varying, but the number of objects also changes as objects appear and disappear. The targets can be observed by one or more sensors of different types, such as radar, sonar, lidar, camera, and infrared camera, and the sensor signals are preprocessed into a set of points or detections. The goal is thus to jointly estimate the number of objects and their states from the accumulated observations. In the random finite set framework, the objective is to estimate an RFS of multi-object states $X_k \subset \mathcal{X}$ at time $k$: in other words, to estimate the cardinality $|X_k| = n$ and the constituent object states $x_{i,k}$, $i \in \{1, \dots, n\}$, given a sequence of measurement sets $Z_{1:k} = \{Z_1, \dots, Z_k\}$. The symbols $\mathcal{X}$ and $\mathcal{Z}$ represent the state and observation spaces. Figure 1.4 shows a schematic representation of multi-object filtering using random finite sets.
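To make the set-valued view concrete, the toy simulation below evolves a multi-object state whose cardinality changes over time and produces an unordered detection set at each step. It is only a sketch under simplifying assumptions: one-dimensional positions, independent survival and detection, a uniform number of births per step, and no false alarms. The function names and the parameters `p_survive`, `max_births`, `p_detect` and `noise_std` are hypothetical and do not come from the text.

```python
import random


def step_multi_object_state(states, p_survive=0.95, max_births=2, rng=random):
    """Advance a toy multi-object state X_k (a list of 1-D positions) by one step.

    Each object survives independently with probability p_survive and moves
    by a small random walk; between 0 and max_births new objects appear.
    """
    survivors = [x + rng.gauss(0.0, 0.1) for x in states if rng.random() < p_survive]
    births = [rng.uniform(-10.0, 10.0) for _ in range(rng.randint(0, max_births))]
    return survivors + births


def observe(states, p_detect=0.9, noise_std=0.2, rng=random):
    """Generate a detection set Z_k from the current multi-object state.

    Each object is detected independently with probability p_detect.
    The detections are shuffled because a set carries no ordering, so the
    filter does not know which detection belongs to which object.
    """
    detections = [x + rng.gauss(0.0, noise_std) for x in states if rng.random() < p_detect]
    rng.shuffle(detections)
    return detections


# Usage: simulate a few steps and record the measurement sets Z_1, ..., Z_k.
rng = random.Random(0)
X_k = [0.0, 3.0]
Z_history = []
for k in range(5):
    X_k = step_multi_object_state(X_k, rng=rng)
    Z_history.append(observe(X_k, rng=rng))
```

A multi-object filter would take `Z_history` as input and return an estimate of both `len(X_k)` and the individual positions in `X_k`.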

Table of contents:

Abstract

Acknowledgements

List of Acronyms

List of Symbols

Résumé

Introduction

1 Fundamentals of Multi-Object Tracking

1.1 Bayesian filtering

1.1.1 The Bayes filter

1.1.2 The Kalman filter

1.1.3 The Extended Kalman filter

1.1.4 The Unscented Kalman filter

1.1.5 The Particle filters

1.2 Random Finite Set (RFS) for multi-object filtering

1.2.1 Random Finite Set notations and abbreviations

1.2.2 Multi-object probability distributions

1.2.3 Multi-object filtering using RFS

1.2.4 Multi-object dynamic model

1.2.5 Multi-object observation model

1.2.6 The Multi-object Bayes filter

1.2.7 The Probability Hypothesis Density (PHD) filter

1.2.8 The Gaussian-mixture PHD filter

1.2.9 Optimal Subpattern Assignment (OSPA) metric

1.3 Random Finite Set (RFS) for multi-object tracking

1.3.1 The Probability Hypothesis Density Tracker (PHDT)

1.3.2 Labeled Random Finite Set (LRFS)

1.3.3 Labeled multi-object dynamic model

1.3.4 Labeled multi-object observation model

1.3.5 Labeled Optimal Subpattern Assignment (LOSPA) metric

1.3.6 The Generalized Labeled Multi-Bernoulli (GLMB) filter

1.4 Conclusion

2 The labeled version of the PHD filter

2.1 Labeled PHD notations and abbreviations

2.2 Heuristic labeled PHD filter

2.3 The Labeled Multi-Bernoulli (LMB) filter

2.3.1 LMB prediction

2.3.2 LMB update

2.4 The Labeled Probability Hypothesis Density (LPHD) filter

2.4.1 LPHD prediction

2.4.2 LPHD update

2.5 The Gaussian-Mixture LPHD (GM-LPHD) filter

2.5.1 GM-LPHD prediction

2.5.2 GM-LPHD update

2.5.3 GM-LPHD track extraction and pruning

2.6 Numerical simulation using the LPHD filter

2.7 Conclusion

3 The multi-sensor version of the LPHD filter

3.1 Superpositional measurement model

3.2 The multi-sensor LPHD filter

3.2.1 Multi-sensor LPHD prediction

3.2.2 Multi-sensor LPHD update

3.3 Conclusion

4 Sensor Management for multi-object tracking

4.1 Fundamentals of Sensor Management

4.1.1 Partially Observed Markov Decision Problems

4.1.2 Task-based Sensor Management

4.1.3 Information-Theoretic Sensor Management

4.2 Risk-based Sensor Management

4.2.1 Expected cost of committing a type 1 error

4.2.2 The Expected Risk Reduction (ERR) metric

4.3 ERR numerical example using the EKF filter

4.3.1 Binary classification – known initial classification

4.3.2 Binary classification – unknown initial classification

4.3.3 Ternary classification – unknown initial classification

4.4 ERR numerical example using the GM-PHD Tracker

4.4.1 Binary classification

4.4.2 Mono classification

4.5 Conclusion

Conclusion and future work

Appendix A Finite Set Statistics

Appendix B Gaussian identities

Appendix C Kalman filter derivation

Appendix D Kinematic models for Target Tracking

Bibliography