
Cross-frequency coupling

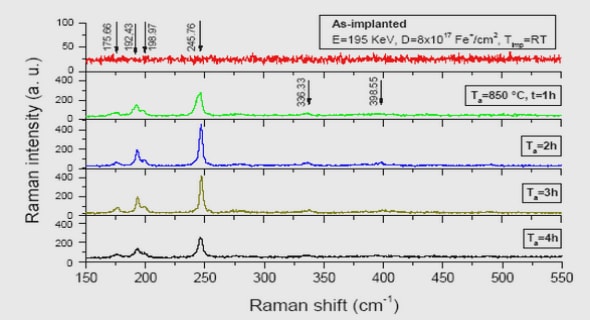

The characterization of neural oscillations has given rise to important mechanistic hypotheses regarding their functional role in neuroscience (Buzsáki, 2006, Fries, 2015). One working hypothesis suggests that the coupling across neural oscillations may regulate and synchronize multi-scale communication of neural information within and across neural ensembles (Buzsáki, 2010, Fries, 2015). The coupling across different oscillatory activities is generically called cross-frequency coupling (CFC) and has started to receive much attention (Jensen and Colgin, 2007, Lisman and Jensen, 2013, Canolty et al., 2006, Canolty and Knight, 2010, Hyafil et al., 2015). The most frequent instance of CFC is the observation that the power of high-frequency activity is modulated by fluctuations of low-frequency oscillations, resulting in phase-amplitude coupling (PAC). This can be observed for instance in Figure 1.2, where at the peak of each 3 Hz oscillation we see an increase of energy at 80 Hz. Other instances of CFC include phase-phase coupling (Tort et al., 2007, Malerba and Kopell, 2013), amplitude-amplitude coupling (Bruns and Eckhorn, 2004, Shirvalkar et al., 2010), and phase-frequency coupling (Jensen and Colgin, 2007, Hyafil et al., 2015). PAC is by far the most reported form of CFC in the literature. Figure 1.3 presents these different couplings on simple sinusoids.
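To make the notion of PAC concrete, here is a minimal sketch of a toy signal in which an 80 Hz component is strongest at the peaks of a 3 Hz oscillation, as in the description above. The sampling rate, the duration, the raised-cosine envelope, and the function name `simulate_pac` are illustrative assumptions, not taken from the manuscript.

```python
import numpy as np


def simulate_pac(fs=1000.0, duration=2.0, f_slow=3.0, f_fast=80.0):
    """Return the time axis, the slow oscillation, and a toy PAC signal."""
    t = np.arange(int(fs * duration)) / fs
    slow = np.cos(2 * np.pi * f_slow * t)
    # Assumed envelope: maximal at the peaks of the slow oscillation,
    # zero at its troughs.
    envelope = 0.5 * (1.0 + slow)
    fast = envelope * np.cos(2 * np.pi * f_fast * t)
    return t, slow, slow + fast


t, slow, signal = simulate_pac()

# Compare the power of the fast component near peaks and near troughs
# of the slow oscillation.
fast_part = signal - slow
power_at_peaks = np.mean(fast_part[slow > 0.9] ** 2)
power_at_troughs = np.mean(fast_part[slow < -0.9] ** 2)
print(power_at_peaks, power_at_troughs)
```

The power of the fast component is much larger near the peaks than near the troughs, which is the signature that PAC metrics try to quantify.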

Non-linear autoregressive models

Given these limitations of PAC estimation metrics, we propose to use autoregressive (AR) models to capture PAC in neurophysiological time series. We first note that PAC corresponds to a modulation of the power spectral density (PSD) of the signal. This remark leads to AR models, which are stochastic processes that naturally estimate the PSD of a signal. As we want to estimate not only the PSD but also its modulation, we develop non-linear AR models which estimate the PSD conditionally on a slowly-varying oscillation.

Specifying an explicit model of the signal enables straightforward model selection and clear hypothesis testing, both based on the likelihood of the model. This data-driven approach is fundamentally different from the traditional PAC metrics, and constitutes a major improvement in PAC estimation by adopting a principled modeling approach. We first limit ourselves to univariate signals; a multivariate extension is proposed later in this manuscript.

Autoregressive models (AR)

AR models, also known as linear prediction modeling (Makhoul, 1975), are stochastic signal models which aim to forecast the signal values based on its own past. More precisely, an AR model specifies that y depends linearly on its own p past values, where p is the order of the model:

$$\forall t \in [p + 1, T], \quad y(t) + \sum_{i=1}^{p} a_i \, y(t - i) = \varepsilon(t)$$
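As an illustration, the following sketch fits an AR model of order p by ordinary least squares under the sign convention y(t) + Σ a_i y(t − i) = ε(t), and evaluates the PSD implied by the fitted coefficients. Least squares is one standard estimator among several and is not necessarily the one used in this manuscript; the names `fit_ar` and `ar_psd`, the simulated AR(2) coefficients, and the PSD normalization (defined up to a constant factor) are assumptions made for the example.

```python
import numpy as np


def fit_ar(y, p):
    """Least-squares estimate of a_1..a_p in y(t) + sum_i a_i y(t-i) = eps(t)."""
    y = np.asarray(y, dtype=float)
    T = y.size
    # Column i-1 holds the lagged values y(t - i) for every usable t.
    lagged = np.column_stack([y[p - i:T - i] for i in range(1, p + 1)])
    target = y[p:]
    # Minimize || target + lagged @ a ||^2 over a.
    a, *_ = np.linalg.lstsq(lagged, -target, rcond=None)
    residual = target + lagged @ a
    return a, residual.var()


def ar_psd(a, sigma2, freqs, fs):
    """PSD implied by the AR model, up to a normalization constant."""
    lags = np.arange(1, a.size + 1)
    z = np.exp(-2j * np.pi * np.outer(freqs, lags) / fs)
    return sigma2 / np.abs(1.0 + z @ a) ** 2


# Simulate a stable AR(2) process with known (assumed) coefficients.
rng = np.random.default_rng(0)
true_a = np.array([-1.5, 0.9])
T = 5000
eps = rng.standard_normal(T)
y = np.zeros(T)
for t in range(2, T):
    # y(t) = -a_1 y(t-1) - a_2 y(t-2) + eps(t)
    y[t] = -(true_a @ y[t - 2:t][::-1]) + eps[t]

a_hat, sigma2_hat = fit_ar(y, p=2)
freqs = np.linspace(0.0, 0.5, 501)
psd = ar_psd(a_hat, sigma2_hat, freqs, fs=1.0)
peak_freq = freqs[np.argmax(psd)]
print(a_hat, sigma2_hat, peak_freq)
```

The estimated coefficients should be close to the simulated ones, and the PSD shows a single spectral peak whose location is determined by the AR coefficients.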

Stability in DAR models

In this section, we discuss stability in DAR models. We first recall standard stability definitions and criteria for stationary AR models. We then extend them to non-stationary AR models, assuming that all instantaneous AR models are stable, and that the system is slowly varying. Finally, we describe an alternative DAR parametrization, which forces all instantaneous AR models to be stable. For these models, we propose an efficient estimation scheme based on a Newton procedure with a good initialization.
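For a stationary AR model written as y(t) + Σ a_i y(t − i) = ε(t), the classical stability criterion is that all roots of z^p + a_1 z^(p−1) + … + a_p lie strictly inside the unit circle. The sketch below checks this criterion numerically. It also shows one well-known way of enforcing stability by construction, through reflection coefficients of magnitude below one and the Levinson step-up recursion; this excerpt does not specify the alternative parametrization used for DAR models, so this second part is only an assumed illustration, and the function names are hypothetical.

```python
import numpy as np


def is_stable(a):
    """True if all roots of z^p + a_1 z^(p-1) + ... + a_p have modulus < 1."""
    poly = np.concatenate(([1.0], np.asarray(a, dtype=float)))
    return bool(np.all(np.abs(np.roots(poly)) < 1.0))


def reflection_to_ar(k):
    """Levinson step-up recursion: reflection coefficients -> AR coefficients.

    If every |k_m| < 1, the resulting AR model is stable.
    """
    a = np.zeros(0)
    for k_m in np.asarray(k, dtype=float):
        # a_i <- a_i + k_m * a_(m-i) for i < m, and a_m <- k_m
        a = np.concatenate((a + k_m * a[::-1], [k_m]))
    return a


stable_example = is_stable([-1.5, 0.9])    # complex roots of modulus ~0.95
unstable_example = is_stable([-2.5, 1.0])  # roots at 2 and 0.5

# Any unconstrained vector can be mapped into (-1, 1) with tanh, giving
# an AR model that is stable by construction.
rng = np.random.default_rng(0)
k = 0.9 * np.tanh(rng.standard_normal(6))
a_from_k = reflection_to_ar(k)
print(stable_example, unstable_example, is_stable(a_from_k))
```

The appeal of such a parametrization is that optimization can run over unconstrained parameters while every candidate model remains stable.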

Table of contents:

1 Introduction

1.1 Neuroscientific challenges

1.2 Cross-frequency coupling

1.3 Non-linear autoregressive models

1.4 Convolutional sparse coding

1.5 Chapters summary

2 Driven autoregressive models

2.1 Driven autoregressive models

2.2 Stability in DAR models

3 DAR models and PAC

3.1 DAR models and PAC

3.2 Model selection

3.3 Statistical significance

3.4 Discussion

4 Extensions to DAR models

4.1 Driver estimation in DAR models

4.2 Multivariate PAC

4.3 Spectro-temporal receptive fields

5 Convolutional sparse coding

5.1 Convolutional sparse coding

5.2 CSC with alpha-stable distributions

5.3 Multivariate CSC with a rank-1 constraint

Conclusion

A Appendices

A.1 Convolutional sparse coding

Bibliography