Get Complete Project Material File(s) Now! »

Statistical and machine learning techniques

Statistical and machine learning (ML) techniques can be leveraged for complex problems arising in network operation and management [11]. Last years have witnessed a surge of such tech-niques, thanks to significant improvements in computing capacities and recent advances in data storing and processing. Applied to network security, data analysis has been widely explored to develop novel automation and detection techniques. We first describe the theory behind statis-tical learning models and their relevance to the networking problem. Then we further review in detail the process for data analysis, which consists in various steps: (i) learning paradigms and ML techniques, (ii) data collection, (iii) features design, (iv) model evaluation, and (v) ML applications.

Statistical learning

Originally, ML detection tools rely on statistical learning theories to build their model. There also exist unsupervised detection tools that use plain statistical approaches; their central assumption is that the phenomena the most rarely observed are the most likely to be anomalous. In statistical approaches, the fine analysis of the built statistical profiles of traffic allows one to understand how the detected anomalous instances differ from the usual behavior; however, they work on a mono feature basis, thus do not correlate the different features by design. Moreover, statistical approaches work well combined with other algorithms as they are usually unable to provide additional information, such as the IP addresses of attackers or the attack root causes; in addition, they are of practically no computational complexity and easy to implement, which makes them a wise approach when the detection should operate with limited computational resources.

Hidden Markov models

Hidden Markov Models (HMMs) are based on augmented Markov chains [12]. HMMs consist of statistical Markov models where the probability functions modeling transitions between the states are determined in the training phase, contrary to Markov chains where they are set a priori. HMMs are widely used in pattern recognition, and now tend to be extensively applied to intrusion detection as well. They generally show excellent performance, although they are not yet adapted to fit real-world network constraints. Indeed, they require a large amount of time to model normal behaviors and the false positive rate stands relatively high. In [13], authors propose an IDS where the payload is represented as a sequence of bytes, and the analysis is based on an ensemble of HMMs.

Changepoint detection-based techniques

The implicit assumption behind changepoint detection-based techniques is that anomalies induce significant changes in the probability distribution of feature values. Therefore, these approaches are quite fit to detect coarse anomalies, which have a significant effect on traffic, as DoS and DDoS would do. A pioneer work devising an online anomaly detection technique in computer network traffic using changepoint detection methods is [14]. The algorithm is based on the multi-cyclic Shiryaev–Roberts detection procedure, which is computationally inexpensive and performs better than other detection schemes.

For changepoint detection algorithms, the z-score is a well-known and simple statistical met-ric commonly used to automatically detect sudden changes in time series. More precisely, it is the measure of how many standard deviations below or above the mean a data point is. Ba-sically, a z-score equal to zero means that the data point is equal to the mean, and the larger the z-score, the more unusual the value. An anomaly is detected if the absolute value of the modified z-score exceeds a given threshold. There also exist algorithms based on variants of this metric. The modified z-score uses the median and the median absolute deviation (MAD) from the median, instead of the classical mean and standard deviation respectively, which makes it outlier-resistant [15]. In addition, the smoothed z-score considers the influence of outliers, i.e., the weights of the past samples on the mean and standard deviation, with respect to the current sample.

Other approaches

Histograms are used to count or visualize the frequency of data (i.e., the number of occurrences) over bins, which consist of units of discrete intervals. Historically, they have been widely used in the data and image processing fields. Histogram-based algorithms, also named frequency-based or counting-based algorithms, rely on histograms containing the bins associated with the values of their attributes. [16] proposes an alternative approach to feature-based anomaly detection tools that builds detailed histogram models of the features and identifies deviations from these models. In addition, building comprehensive histograms is less computationally expensive than using coarse distribution or graph-based features.

ML techniques: paradigms and addressed problems

Now that we reviewed statistical learning-based approaches, we focus on ML techniques, inves-tigating the whole ML design pipeline.

STATISTICAL AND MACHINE LEARNING TECHNIQUES

Figure 2.1: Learning paradigms that benefit from machine learning: classification and regres-sion for supervised learning, and clustering and rule extraction for unsupervised learning.

1. Supervised learning techniques learn from a labeled dataset what constitutes either normal traffic or attacks – there exist different techniques such as SVM-based classifiers, rule-based classifiers, and ensemble-learning detectors [17].

2. Unsupervised approaches learn by themselves what is normal or abnormal – among them, MAWILab [18] finds anomalies by combining detectors that operate at different traffic granularities (the results against the MAWI dataset are in [18]); numerous works compare themselves to MAWILab, as for instance change-detection techniques [14, 19] (defining an anomaly as a sudden change compared to a model), and ORUNADA [20] (relying on a discrete time-sliding window to continuously update the feature space and cluster events).

3. Hybrid or semi-supervised approaches benefit from only a small part of labeled traffic, meant to be enough to learn from, as proposed in [21].

4. Reinforcement learning (RL) is an iterative process with agents that take actions in order to maximize the notion of cumulative reward. In the purpose of decision making, the learning is traditionally based on exemplars from training datasets. The training data in RL constitutes a set of state-action pairs and rewards (or penalties).

Problem categories

Four broad categories of problems can leverage ML, namely classification, regression, clustering, and rule extraction , as illustrated in Fig. 2.1. First of all, classification and regression are two supervised learning approaches; their objective is to map an input to an output based on example input-output pairs from labeled data. Regression approaches predicts continuous values output, whereas classification predicts discrete values, consisting in the different labels. Then, clustering and rule extraction are unsupervised learning techniques: clustering is the task of partitioning the dataset into groups, called clusters – the goal is to determine grouping among unlabeled data, while increasing the gap between the groups; rule extraction techniques are designed to identify statistical relationships in data, by discovering rules that describe large portions of the dataset.

Note that the choice of the learning paradigm strongly depends on the training data. For example, if the dataset is not labeled, supervised learning cannot be employed and other learning paradigms must be considered.

Concrete ML techniques applications.

To illustrate the diversity in ML techniques applications, we provide concrete use cases for each of the aforementioned learning paradigms:

• Classification techniques are traditionally used in problems that contain labeled datasets, with two or more distinct classes. Botnet detection is but one example of such cases, where we can distinguish between malicious and benign flows; this way, the ML algorithm implicitly learns the inherent characteristics of a bot, and those of a benign host.

• Regression techniques are traditionally used for time series forecasting [22, 23]. The objec-tive is to construct a regression model able to induce future traffic volume based on previous instances of traffic. Regression techniques are also employed to assess the impact of the global network condition on the QoS or QoE [24]. Finally, monitoring Key Performance Indicators (KPI) in large-scale networks enables the quick detection of network outages and attacks.

• Clustering techniques are usually employed for outlier detection purposes. In network cyber-security, many intrusion detection schemes [20] rely on data clustering to highlight significant deviations compared to usual end-user behaviors.

• Finally, rule extraction techniques, also named association rule mining, are commonly employed for personalized recommendations. Market Basket Analysis [25] is one of the key techniques used by large retailers to discover correlations within sets of items. These techniques are also used by recommendation engines as for Netflix (for personalized movies recommendation) and Amazon (for suggestions of other articles related to the purchased one).

Data collection

The process of collecting data to apply and validate a given ML technique is an important step, but nonetheless difficult. Finding representative data, possibly without bias and labeled is a non-trivial task. Datasets also vary from one problem to another and from one time period to the next one.

Data monitoring techniques are classified into active, passive, and hybrid techniques [26]. Active monitoring uses traffic measurement in order to collect relevant data and examine the state of networks. Such approaches commonly consist in a set of distributed vantage points hosting measurement tools like ping and traceroute; among them, RIPE Atlas [27] is a global network of over 10,000 probes that measure Internet connectivity and reachability, used for instance in [28] where authors identify data-center collocation facilities in traceroute data from RIPE Atlas built-in measurements, then monitors delay and routing patterns between facilities. In [29], given an arbitrary set of traceroutes, the authors first spot routing paths changing similarly over time, then aggregate them into inferred events and collect details to identify its cause.

In contrast, passive monitoring collects existing traffic and infer the state of networks from it. Compared to active monitoring, it ensures that the inferred statistics correspond to real traf-fic and it does not introduce additional overhead due to bandwidth consumption from injected traffic. Passive monitoring data can be obtained from various repositories, given it is relevant to the networking problem being studied. Such traces include CDN traces [30], darknets [31], and network telescope datasets [32, 33]. The latter consists of a globally routed, but lightly utilized network prefix – a /8 for the University of California San Diego (UCSD) Network Telescope Aggregated Flow Dataset [32] and /20 for the Network Telescope dataset from LORIA [33]. Inbound traffic to non-existent machines is unsolicited and results from a wide range of events, including misconfiguration, scanning of address space by attackers or malware looking for vul-nerable targets, and backscatter from randomly spoofed DoS attacks. Other examples of passive data repositories include the Measurement and Analysis on the WIDE Internet (MAWI) Working Group Traffic Archive [34] and the CTU-13 dataset [35]. We later review these datasets in detail, respectively in Sections 3.3 and 4.2.

Feature design

Before applying an ML algorithm to the dataset, the collected raw data must be formatted to cover an adequate set of features. The first phase named Feature Extraction consists in cleaning the dataset that may contain missing values or noise. In addition, the collected raw dataset may be too voluminous to be handled. The need for dimensionality reduction is justified by multiple reasons. First, a large number of features may induce a high computational overhead. Also, a phenomenon called the curse of dimensionality refers to the sparsity in data increasing with the number of dimensions, which makes the dataset no more consistent. We first depict the features traditionally selected for anomaly detection depending on the aggregation level. We then review the main strategies employed for feature extraction.

Common feature choice

In reality, the feature choice directly depends on the problem formulation (i.e., the detection tar-get) and thus on the granularity level. The taxonomy of aggregation levels for network anomaly detection includes payload-based, host behavior-based, and flow feature-based techniques.

Payload-based anomaly detection systems parse the packet payload looking for known ap-plication signatures. However, this incurs a high computational overhead and requires manual interventions from humans to monitor the alerts and regularly update the signatures database. In addition, the payload tends to be systematically encrypted due to privacy concerns. Payload-based systems usually employ features such as the payload size, but also more complex ones like specific byte sequences or particular key-words present in the payload that would execute malicious actions.

Host behavior-based anomaly detection systems compute per-host traffic features to model behavioral characteristics of hosts. Contrary to payload-based systems, it examines the inherent characteristics of hosts but also assesses graph-based features by considering hosts as nodes in a graph, to measure for example the centrality of nodes or the amount and frequency of traffic exchanged between the nodes. IDSes implemented at the host-level are named Host-based Intrusion Detection Systems (HIDSes), whereas those at the network-level are named Network-based Intrusion Detection Systems (NIDSes). Common features used by such systems include packet counts exchanged between nodes [36], service proximity, activity profiles, session duration, periodicity [37], and byte encoding [38, 39] or statistical characterization of bytes [40], for each packet or each flow coming from a host.

Flow feature-based anomaly detection systems aggregate communications on a per-flow basis, which consists of a 5-tuple made from the protocol, the source and destination IP addresses, and the source and destination port numbers. It is then a unidirectional exchange of consecutive packets on the network from a port at an IP address to another port at another IP address using a particular application protocol, including all packets pertaining to session setup and tear-down, and data exchange. A feature is an attribute representing unique characteristics of a flow, such as the number of packets in a flow, mean packet length, packet inter-arrival time, flow duration, entropy, to name a few. Entropy basically represents the traffic distribution predictability and enables one to detect volume-based anomalies such as DoS and DDoS. Flow feature-based techniques use flow features as discriminators to map flows to classes of interest.

Feature extraction

Two main processes are usually employed to adequately select features. The process of Feature Selection consists in removing the features that are not relevant or redundant in order to keep only a limited set of features. The filtering strategy (e.g. information gain), the wrapper strategy (e.g. search guided by accuracy), and the embedded strategy (selected features are added or removed while building the model based on prediction errors) are three techniques for feature selection.

The second process, named Feature projection, projects the data from a high-dimensional space to a space of fewer dimensions. The variance between each class is accentuated in the re-sulting space, removing redundancy in data. Both linear and nonlinear dimensionality reduction techniques exist. The main linear technique is named Principal Component Analysis (PCA), which finds the directions of maximum variance [41]. The fraction of variance explained by a principal component is the ratio between the variance of that principal component and the total variance. The objective is to reduce the dimensionality while keeping a good amount of infor-mation, so that the cumulative explained variance ratio is close to 100%. PCA may also be used for traditional outlier detection [42, 43]. Using real traffic traces, [43] demonstrates that normal traffic data can reside in a low-dimensional linear subspace and form a low-rank tensor. The anomalies (outliers) should stay outside this subspace. Therefore, tensor-based approaches try to recover the normal data by separating the low-rank normal data and outlier data from the noisy traffic data captured, and then detect anomalies by using the outlier data separated.

Performance metrics and model validation

In the case of supervised learning, we are able to compute some metrics to assess the performance of our classification model. A confusion matrix is a table often used to evaluate the performance of a classification model [11]. The basic terms are the following (expressed as whole numbers and not rates): True Positive (T P ) is the number of bots correctly classified; True Negative (T N) is the number of benign hosts correctly classified; False Positive (F P ) is the number of benign hosts incorrectly classified; False Negative (F N ) is the number of bots incorrectly classified. This is a list of rates that are often computed from the confusion matrix for a binary classifier:

• Accuracy, computed as ACC = T P +T N , shows the percentage of true detection TP+TN+FP+FN

over the total number of instances. High accuracy is required. However, a bias may be introduced if the dataset is too unbalanced, then we need to consider other metrics.

• True Positive Rate, defined as T P R = T P , also known as recall, shows the percent-TP+FN

age of predicted malicious instances versus all malicious instances. A high T P R value is desirable.

• False Positive Rate, computed as F P R = F P , also known as false alarm rate, refers FP+TN to the ratio of incorrectly classified benign instances versus all the benign instances. A low F P R value is desirable. If the dataset is too unbalanced, consider using the precision and recall instead of the TPR and FPR.

Table of contents :

1 Introduction

1.1 Context and motivation

1.2 Statistical and ML techniques

1.3 Data analysis applications to networking

1.4 Contributions and thesis outline

2 Related work

2.1 Statistical and machine learning techniques

2.1.1 Statistical learning

2.1.2 ML techniques: paradigms and addressed problems

2.1.3 Data collection

2.1.4 Feature design

2.1.5 Performance metrics and model validation

2.2 Intrusion detection

2.2.1 Intrusion detection methodologies

2.2.2 Large-scale intrusion detection

2.2.3 Application to botnet detection

2.3 Botnet Detection

2.3.1 Flow-based techniques

2.3.2 Graph-based techniques

2.4 Spatiotemporal anomaly detection in cellular networks

2.4.1 Detection of spatiotemporal anomalies

2.4.2 Per-app mobile traffic analysis

2.4.3 Group anomaly detection

2.5 Summary

3 Detection of zero-day attacks

3.1 Introduction

3.2 Split-and-Merge Port-centric Network Anomaly Detection

3.2.1 Rationale

3.2.2 Features design

3.2.3 Local anomaly detection

3.2.4 Central correlation

3.3 Network traffic datasets

3.4 Evaluation

3.4.1 Normal distribution fitting

3.4.2 Local anomaly detection

3.4.3 Comparison between aggregated and split views

3.4.4 Last years panorama

3.4.5 Anomaly score distribution

3.4.6 Features and parameters choice

3.4.7 Anomalies classification

3.4.8 Ground-truth

3.5 Complexity and performances analysis

3.5.1 Complexity analysis

3.5.2 Execution performance

3.6 Conclusion

4 Botnet Fingerprinting

4.1 Introduction

4.2 Dataset

4.3 Bots Fingerprints

4.3.1 Preliminary example

4.3.2 Methodology

4.3.3 Flow records collection and formatting

4.3.4 Quantification (attribute frequency distributions)

4.3.5 Signatures formatting

4.4 Bot Detection

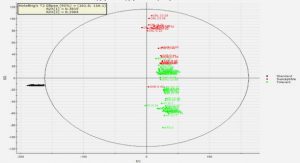

4.4.1 BotFP-Clus

4.4.2 BotFP-ML

4.5 Evaluation

4.5.1 BotFP-Clus

4.5.2 Comparison between BotFP-Clus and BotFP-ML

4.5.3 Comparison to state-of-the-art detection techniques

4.6 Complexity

4.6.1 Attribute frequency distributions computation

4.6.2 Training

4.6.3 Classification

4.6.4 Comparison to other techniques

4.7 Conclusion

5 Group anomaly detection in mobile apps usages

5.1 Introduction

5.2 Measurements and dataset

5.3 ASTECH Methodology

5.3.1 Algorithmic approach

5.3.2 Notations

5.4 Time series anomaly detection

5.4.1 Time series decomposition

5.4.2 Detection of raw anomalies

5.5 Group anomalies

5.5.1 Identification of abnormal snapshots

5.5.2 Detection of group anomalies

5.5.3 Fine-grained characterization of group anomalies

5.6 Numerical results

5.6.1 Raw anomalies

5.6.2 Group anomalies

5.6.3 Group anomalies classification

5.7 Conclusion

6 Conclusion

6.1 Summary of contributions

6.2 Perspectives

6.2.1 Detection of zero-day attacks

6.2.2 Botnet Fingerprinting

6.2.3 Group anomaly detection in mobile app usages