Dynamical Systems and Representation Learning for Complex Spatiotemporal Data

Moving on from general-purpose representation learning, we then focus on representation learning for complex structured spatiotemporal data. Such data arise in large-scale applications involving the observation of moving human subjects, objects and physical entities; typical examples include videos and physical phenomena. They remain a challenge for neural networks because of their complexity and the computational power needed to handle them, which motivates advances that improve existing models under reasonable resource requirements.

We consider two main types of data: videos and physical phenomena. Videos have numerous applications in autonomous systems, including robotics and self-driving cars. They call for predictive models that generate realistic images and account for the inherent stochasticity of the observed phenomena.

The applicability of these models highly depends on their representation learning abilities. Indeed, the latter are essential for downstream tasks like planning and action recognition, allowing autonomous systems to benefit from small-scale representations of the environment. The prediction of physical phenomena, possibly less random but more chaotic, is a recent application field of deep learning that still struggles to match more classical prediction methods relying on physical models. Representation learning is especially interesting in this field to understand the prediction mechanisms of data-driven approaches for partially observable data.

Therefore, in this thesis, we explore representation learning for this type of sequence via generative modeling and forecasting. For both considered applications, we design temporal generative prediction models for spatiotemporal data that rely on learning meaningful and disentangled representations. We show that the long-term predictive performance and the representation learning abilities of these models benefit from each other. An influential modeling choice in this regard is to draw inspiration from dynamical systems when designing the proposed temporal evolution models. More precisely, our models are based on discretizations of differential equations parameterized by neural networks, which we show to be particularly well suited to learning the continuous-time dynamics typically involved in videos and physical phenomena.

These contributions, developed in Part III of this document, were presented in the following two international conference publications.

Jean-Yves Franceschi, Edouard Delasalles, Mickaël Chen, Sylvain Lamprier, and Patrick Gallinari (July 2020). “Stochastic Latent Residual Video Prediction”. In: Proceedings of the 37th International Conference on Machine Learning. Ed. by Hal Daumé III and Aarti Singh. Vol. 119. Proceedings of Machine Learning Research. PMLR, pp. 3233–3246.
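To make the idea of a discretized differential equation parameterized by a neural network more concrete, here is a minimal sketch of an Euler (residual) update on a latent state. It is not the model of the publication above: the function `f`, its random weights `W1` and `W2`, the dimensions and the `rollout` helper are all hypothetical placeholders for a trained network, and stochasticity, encoders and decoders are left out.

```python
import numpy as np

rng = np.random.default_rng(0)
LATENT_DIM, HIDDEN_DIM = 4, 16

# Random weights standing in for a trained network (assumption for illustration).
W1 = rng.normal(scale=0.3, size=(HIDDEN_DIM, LATENT_DIM))
W2 = rng.normal(scale=0.3, size=(LATENT_DIM, HIDDEN_DIM))


def f(y):
    """Hypothetical network giving the time derivative dy/dt of the latent state."""
    return W2 @ np.tanh(W1 @ y)


def rollout(y0, n_steps, dt=1.0):
    """Euler discretization of dy/dt = f(y): y_{t+dt} = y_t + dt * f(y_t)."""
    states = [y0]
    for _ in range(n_steps):
        states.append(states[-1] + dt * f(states[-1]))
    return np.stack(states)


y0 = rng.normal(size=LATENT_DIM)
coarse = rollout(y0, n_steps=5, dt=1.0)   # one latent state per unit of time
fine = rollout(y0, n_steps=10, dt=0.5)    # same time horizon, twice as many states
```

With `dt=1.0` the update is a plain residual step; a smaller `dt` covers the same time horizon with more intermediate states, which is one way to read the continuous-time interpretation.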

Study of Generative Adversarial Networks via their Training Dynamics

After highlighting the valuable role of dynamical systems for deep generative predictive models, we then leverage differential equations within a novel theoretical framework to analyze and explain the training dynamics of a popular but still misunderstood generative model: Generative Adversarial Networks (GANs).

We point out a fundamental flaw in previous theoretical analyses of GANs that leads to ill-defined gradients for the discriminator. Indeed, within these frameworks, which neglect its architectural parameterization as a neural network, the discriminator is insufficiently constrained to ensure the existence of its gradients. This oversight raises important modeling issues as it makes these analyses incompatible with standard GAN practice using gradient-based optimization. We overcome this problem, which impedes a principled study of GAN training, by taking into account the discriminator’s architecture and training within our framework.

To this end, we leverage the theory of infinite-width neural networks for the discriminator via its Neural Tangent Kernel (NTK) in order to model its inductive biases as a neural network. We thereby characterize the trained discriminator for a wide range of losses by expressing its training dynamics with a differential equation. From this, we establish general differentiability properties of the network that are necessary for a sound theoretical framework of GANs, making ours closer to GAN practice than previous analyses.

Thanks to this consistency with practice, we gain new theoretical and empirical insights about the generated distribution’s flow during training, advancing our understanding of GAN dynamics. For example, we find that, under the integral probability metric loss, the generated distribution minimizes the maximum mean discrepancy given by the discriminator’s NTK with respect to the target distribution. We empirically corroborate these results via a publicly released analysis toolkit based on our framework, unveiling intuitions that are consistent with current GAN practice and opening new perspectives for better and more principled GAN models.

This contribution, explained in Part IV of this document, corresponds to the following preprint and is currently under review at an international conference.

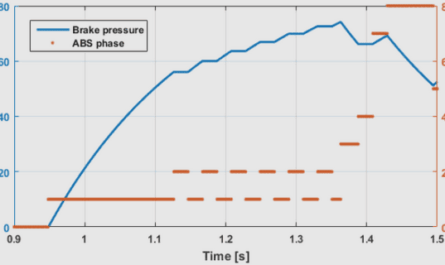
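As a rough illustration of the maximum mean discrepancy (MMD) mentioned above, the sketch below estimates a squared MMD between two sample sets. It is only a sketch under stated assumptions: a Gaussian (RBF) kernel is swapped in as a stand-in for the discriminator's NTK, and the names `rbf_kernel`, `mmd_squared` and the toy Gaussian samples are hypothetical, not part of the released toolkit.

```python
import numpy as np


def rbf_kernel(a, b, bandwidth=1.0):
    """Stand-in kernel (assumption); the text uses the discriminator's NTK instead."""
    sq_dists = ((a[:, None, :] - b[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-sq_dists / (2.0 * bandwidth ** 2))


def mmd_squared(x, y, kernel=rbf_kernel):
    """Biased (V-statistic) estimate of the squared MMD between samples x and y."""
    return kernel(x, x).mean() + kernel(y, y).mean() - 2.0 * kernel(x, y).mean()


rng = np.random.default_rng(0)
target = rng.normal(loc=0.0, size=(200, 2))  # toy "target distribution" samples
far = rng.normal(loc=3.0, size=(200, 2))     # generated samples far from the target
near = rng.normal(loc=0.2, size=(200, 2))    # generated samples close to the target

mmd_far = mmd_squared(target, far)
mmd_near = mmd_squared(target, near)
```

Under this stand-in kernel, `mmd_near` comes out smaller than `mmd_far`, matching the intuition that a generated distribution moving toward the target decreases the discrepancy.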

ODEs and Neural Network Optimization

We would like to note in this introduction that the use of ODEs in deep learning is not restricted to neural-parameterized sequence modeling, as it has also been independently leveraged to analyze, and even sometimes improve, the very training dynamics of neural networks with respect to training time. Let us indeed consider a network $f_\theta$ with parameters $\theta$, optimized by plain gradient descent with learning rate $\eta$ to minimize the loss function $L_\theta$. A gradient descent iteration for optimization step $k$ consists in:

$$\theta_{k+1} = \theta_k - \eta \frac{\partial L_{\theta_k}}{\partial \theta_k}, \tag{2.12}$$

which is the Euler discretization with step size $\Delta t = 1$ of the following ODE:

$$\frac{\mathrm{d}\theta_t}{\mathrm{d}t} = -\eta \frac{\partial L_{\theta_t}}{\partial \theta_t}, \tag{2.13}$$

where $t$ here denotes the training time.
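The correspondence between gradient descent and the Euler scheme can be checked numerically. The sketch below is a toy illustration: the one-dimensional quadratic loss, its curvature `a` and all function names are hypothetical choices made here so that the continuous-time solution is known in closed form.

```python
import math


def grad(theta, a=2.0):
    """Gradient of the hypothetical toy loss L(theta) = 0.5 * a * theta**2."""
    return a * theta


def gradient_descent(theta0, lr, steps, a=2.0):
    """Plain gradient descent: theta <- theta - lr * grad(theta)."""
    theta = theta0
    for _ in range(steps):
        theta = theta - lr * grad(theta, a)
    return theta


def euler_gradient_flow(theta0, lr, total_time, dt, a=2.0):
    """Euler integration of d(theta)/dt = -lr * grad(theta) up to total_time."""
    theta = theta0
    for _ in range(round(total_time / dt)):
        theta = theta + dt * (-lr * grad(theta, a))
    return theta


def exact_gradient_flow(theta0, lr, total_time, a=2.0):
    """Closed-form solution of the ODE for the toy quadratic loss."""
    return theta0 * math.exp(-lr * a * total_time)


gd = gradient_descent(1.0, lr=0.1, steps=10)
euler_unit = euler_gradient_flow(1.0, lr=0.1, total_time=10, dt=1.0)
euler_fine = euler_gradient_flow(1.0, lr=0.1, total_time=10, dt=0.001)
exact = exact_gradient_flow(1.0, lr=0.1, total_time=10)
```

Here `euler_unit` coincides with `gd` since a unit step size reproduces the gradient descent update, while `euler_fine` approaches the closed-form solution `exact` of the continuous-time flow.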

This usual observation has been used to analyze neural network optimization algorithms (Barakat and Bianchi, 2021), but also standard gradient-based optimization procedures (Belotto da Silva and Gazeau, 2020; Su, S. Boyd, and Candès, 2016; A. A. Brown and Bartholomew-Biggs, 1989); even mini-batch training may be studied through the lens of SDEs. Other ODEs describing the evolution of the parameterized function $f_{\theta_t}$ as well as the loss $L_{\theta_t}$ can then be derived from the description of the parameter evolution through time by Equation (2.13). Jacot, Gabriel, and Hongler (2018) thereby derive from these ODEs the theory of NTKs for infinite-width neural networks, which simplifies these differential equations and their subsequent analysis. We base one of our contributions, in Chapter 6, on this theory and the resulting training ODEs in order to theoretically and empirically study GAN training. Note that numerous authors have also considered ODEs to analyze and improve GANs, but never in this specific setting nor with the same generality (Mescheder, Nowozin, and A. Geiger, 2017; Nagarajan and Kolter, 2017; Balduzzi et al., 2018; C. Wang, H. Hu, and Y. M. Lu, 2019), with the most recent example (Qin et al., 2020) transforming the Euler discretization of Equation (2.12) into a more involved higher-order ODE solving scheme.
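As a hedged illustration of how such function-space ODEs arise, the derivation below applies the chain rule to the parameter ODE of Equation (2.13), written with learning rate $\eta$. It assumes, for simplicity, a scalar-output network and a loss of the form $L_\theta = \sum_{i=1}^{n} \ell_i(f_\theta(x_i))$ over training points $x_1, \dots, x_n$; the symbols $\ell_i$, $x_i$, $n$ and $k_t$ are notation introduced for this sketch only.

```latex
\begin{align*}
\frac{\mathrm{d}\theta_t}{\mathrm{d}t}
  &= -\eta \, \frac{\partial L_{\theta_t}}{\partial \theta_t}
   = -\eta \sum_{i=1}^{n} \frac{\partial f_{\theta_t}(x_i)}{\partial \theta_t} \,
     \ell_i'\bigl(f_{\theta_t}(x_i)\bigr), \\
\frac{\partial f_{\theta_t}(x)}{\partial t}
  &= \frac{\partial f_{\theta_t}(x)}{\partial \theta_t}^{\top}
     \frac{\mathrm{d}\theta_t}{\mathrm{d}t}
   = -\eta \sum_{i=1}^{n} k_t(x, x_i) \, \ell_i'\bigl(f_{\theta_t}(x_i)\bigr), \\
k_t(x, x')
  &= \frac{\partial f_{\theta_t}(x)}{\partial \theta_t}^{\top}
     \frac{\partial f_{\theta_t}(x')}{\partial \theta_t}.
\end{align*}
```

Here $k_t$ is the tangent kernel at training time $t$. In the infinite-width regime studied by Jacot, Gabriel, and Hongler (2018), this kernel remains constant during training under suitable initialization, which is what makes the resulting differential equation easier to analyze.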

Unsupervised Representation Learning for Temporal Data

We succinctly summarize in this section the state of the literature for unsupervised representation learning on temporal data. Given the wide range of this topic, both on the representation learning and temporal data sides, this presentation is not meant to be exhaustive, but rather contextualizes our contributions in the next chapters by highlighting the two main research orientations on this matter: contrastive learning in Section 2.2.1 and autoencoding in Section 2.2.2.

Sequential Deep Generative Models

The generative models presented until now in the context of static data have also been used for sequential data. The general formulation that we adopt for their presentation is directly applicable to such temporal data. However, the nature and complexity of the latter call for specifically designed models.

For example, a desirable property of temporal generative models would be to generate longer sequences than those they have been trained on, which is only possible when specific architectures are used. Many neural network architectures are specially adapted for sequential data, mostly based on RNNs or any other sequential architecture of Section 2.1. They can be used as a direct replacement for the generator g or any network involved during the training of the latter. We discuss these architectures more specifically in Section 2.1 and instead focus in the rest of this discussion on specific generative modeling advances towards better handling time series. Since the breadth of application of these models is immense, with multiple sources and types of temporal data, a thorough review of generative models in this setting would be outside of the scope of this document. We instead opt for highlighting general research axes in the literature of sequential generative modeling, listed in the following.

Temporally Aware Training Objectives

One way to adapt existing methods such as those of Section 2.3.1 is to further specialize their training objective to take into account the temporality of the data, without necessarily changing the structure of the generative model. This is beneficial because objectives tailored for static data can bias generative models towards producing undesirable effects (M. Mathieu, Couprie, and LeCun, 2016; Le Guen and Thome, 2019), such as blurry outputs for videos. Some proposed adaptations of training objectives are by nature applicable to any kind of time series. For instance, T. Xu et al. (2020) adapt the WGAN objective by replacing the optimal transport view of $W_1$ with a causal optimal transport paradigm, thus constraining the adversarial objective to account for the causality brought by the temporality of the data. Nonetheless, proposed methods are most often specifically tailored for the considered data, such as videos (T.-C. Wang et al., 2018), audio (Dhariwal et al., 2020) and low-dimensional data (Cuturi and Blondel, 2017). For example, T.-C. Wang et al. (2018) replace the general GAN discriminator with two discriminators of different architectures and nature: a first one acting on video frames only to assess their individual quality, and a second one taking as input the whole video to consider the temporal consistency of the produced sequence.

While this research direction is promising, we rather deal in this thesis with structural changes – i.e., modifications of $p_\theta$ – to obtain temporal generative models, which we discuss in the rest of this section.

Stochastic and Deterministic Models for Sequence-to-Sequence Tasks

A standard extension of generative models presented earlier is to tackle conditional generation problems (Mirza and Osindero, 2014; Sohn, H. Lee, and X. Yan, 2015), where the goal is to generate data points $x$ under some condition $c$, i.e. the generative model instead corresponds to the conditional probability $p_\theta(x \mid c)$ trained to imitate $p_{\mathrm{data}}(x \mid c)$. For static data, a typical example is class-conditional generation, e.g. synthesizing an image of a given object (Odena, Olah, and Shlens, 2017).

For sequential data, conditional generation is also applied to sequence-to-sequence tasks, for which an input sequence conditions the output sequence. This includes sequence transformations (van den Oord, Dieleman, et al., 2016; T.-C. Wang et al., 2018) as well as prediction tasks, which consist in forecasting the next time steps of a series based on some previous conditioning steps.

Sequence conditioning may be strong enough to fully or almost completely determine the corresponding output for some data types because conditioning steps contain decisive information about the dynamics of the observed series. In this case, the true conditional $p_{\mathrm{data}}(x \mid c)$ is a Dirac, or close to a Dirac. This happens, for instance, in fully observable physical phenomena driven by ODEs, where the latter ensure that sufficient observations can determine the whole process, or in videos where visual features and movements can be predictable in the short term. This has led authors in fields where this observation holds to choose, often implicitly, a Dirac as $p_\theta(x \mid c)$ that is centered at the point outputted by the generator. In this setting, the generator becomes a simple deterministic regressor, trained to predict a function of its inputs. While this can be achieved via usual loss functions like the MSE, some specific techniques of generative modeling can also be applied in this case to improve the prediction quality, such as adversarial losses (M. Mathieu, Couprie, and LeCun, 2016; Vondrick and Torralba, 2017).
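The following toy sketch illustrates why a deterministic regressor trained with the MSE suits near-Dirac conditionals but not multimodal ones: the MSE-optimal point prediction is the conditional mean. All data here are synthetic and the helper `best_constant_under_mse` is a hypothetical name; the one-dimensional "future" stands in for a predicted frame or state.

```python
import numpy as np

rng = np.random.default_rng(0)


def best_constant_under_mse(samples, candidates):
    """Return the candidate prediction with the lowest mean squared error."""
    losses = [np.mean((samples - c) ** 2) for c in candidates]
    return candidates[int(np.argmin(losses))]


candidates = np.linspace(-2.0, 2.0, 401)

# Near-Dirac conditional: every possible future is concentrated around 1.0.
near_dirac = 1.0 + 0.01 * rng.normal(size=1000)

# Bimodal conditional: two equally likely futures, around -1.0 and +1.0.
modes = np.concatenate([-np.ones(500), np.ones(500)])
bimodal = modes + 0.01 * rng.normal(size=1000)

pred_near_dirac = best_constant_under_mse(near_dirac, candidates)
pred_bimodal = best_constant_under_mse(bimodal, candidates)
```

In the near-Dirac case the selected prediction sits on the single plausible future, whereas in the bimodal case it falls between the two modes, a value that is never observed. This averaging effect is commonly associated with the blurry video predictions mentioned earlier.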

Table of contents:

List of Figures

List of Tables

List of Acronyms

I. Motivation

1. Introduction

1.1. Context

1.2. Subject and Contributions of this Thesis

1.2.1. General-Purpose Unsupervised Representation Learning for Time Series

1.2.2. Dynamical Systems and Representation Learning for Complex Spatiotemporal Data

1.2.3. Study of Generative Adversarial Networks via their Training Dynamics

1.2.4. Outline of this Thesis

2. Background and Related Work

2.1. Neural Architecture for Sequence Modeling

2.1.1. Recurrent Neural Networks

2.1.1.1. Principle

2.1.1.2. Refinements

2.1.2. Neural Differential Equations

2.1.2.1. ODEs and PDEs

2.1.2.2. Differential Equations and Neural Networks

2.1.2.3. ODEs and Neural Network Optimization

2.1.3. Alternatives

2.1.3.1. Convolutional Neural Networks

2.1.3.2. Transformers

2.2. Unsupervised Representation Learning for Temporal Data

2.2.1. Contrastive Learning

2.2.2. Learning from Autoencoding and Prediction

2.2.2.1. Learning Methods

2.2.2.2. Disentangled Representations

2.3. Deep Generative Modeling

2.3.1. Families of Deep Generative Models

2.3.1.1. Variational Autoencoders

2.3.1.2. Generative Adversarial Networks

2.3.1.3. Other Categories

2.3.2. Sequential Deep Generative Models

2.3.2.1. Temporally Aware Training Objectives

2.3.2.2. Stochastic and Deterministic Models for Sequence-to-Sequence Tasks

2.3.2.3. Latent Generative Temporal Structure

II. Time Series Representation Learning

3. Unsupervised Scalable Representation Learning for Time Series

3.1. Introduction

3.2. Related Work

3.3. Unsupervised Training

3.4. Encoder Architecture

3.5. Experimental Results

3.5.1. Classification

3.5.1.1. Univariate Time Series

3.5.1.2. Multivariate Time Series

3.5.2. Evaluation on Long Time Series

3.6. Discussion

3.6.1. Behavior of the Learned Representations Throughout Training

3.6.2. Influence of K

3.6.3. Discussion of the Choice of Encoder

3.6.4. Reproducibility

3.7. Conclusion

III. State-Space Predictive Models for Spatiotemporal Data

4. Stochastic Latent Residual Video Prediction

4.1. Introduction

4.2. Related Work

4.3. Model

4.3.1. Latent Residual Dynamic Model

4.3.2. Content Variable

4.3.3. Variational Inference and Architecture

4.4. Experiments

4.4.1. Evaluation and Comparisons

4.4.2. Datasets and Prediction Results

4.4.2.1. Stochastic Moving MNIST

4.4.2.2. KTH Action Dataset

4.4.2.3. Human3.6M

4.4.2.4. BAIR Robot Pushing Dataset

4.4.3. Illustration of Residual, State-Space and Latent Properties

4.4.3.1. Generation at Varying Frame Rate

4.4.3.2. Disentangling Dynamics and Content

4.4.3.3. Interpolation of Dynamics

4.4.3.4. Autoregressivity and Impact of the Encoder and Decoder Architecture

4.5. Conclusion

5. PDE-Driven Spatiotemporal Disentanglement

5.1. Introduction

5.2. Background: Separation of Variables

5.2.1. Simple Case Study

5.2.2. Functional Separation of Variables

5.3. Proposed Method

5.3.1. Problem Formulation Through Separation of Variables

5.3.2. Fundamental Limits and Relaxation

5.3.3. Temporal ODEs

5.3.4. Spatiotemporal Disentanglement

5.3.5. Loss Function

5.3.6. Discussion of Differences with Chapter 4’s Model

5.4. Experiments

5.4.1. Physical Datasets: Wave Equation and Sea Surface Temperature

5.4.2. A Synthetic Video Dataset: Moving MNIST

5.4.3. A Multi-View Dataset: 3D Warehouse Chairs

5.4.4. A Crowd Flow Dataset: TaxiBJ

5.5. Conclusion

IV. Analysis of GANs’ Training Dynamics

6. A Neural Tangent Kernel Perspective of GANs

6.1. Introduction

6.2. Related Work

6.3. Limits of Previous Studies

6.3.1. Generative Adversarial Networks

6.3.2. On the Necessity of Modeling Discriminator Parameterization

6.4. NTK Analysis of GANs

6.4.1. Modeling Inductive Biases of the Discriminator in the Infinite-Width Limit

6.4.2. Existence, Uniqueness and Characterization of the Discriminator

6.4.3. Differentiability of the Discriminator and its NTK

6.4.4. Dynamics of the Generated Distribution

6.5. Fine-Grained Study for Specific Losses

6.5.1. The IPM as an NTK MMD Minimizer

6.5.2. LSGAN, Convergence, and Emergence of New Divergences

6.6. Empirical Study with GAN(TK)²

6.6.1. Adequacy for Fixed Distributions

6.6.2. Convergence of Generated Distribution

6.6.3. Visualizing the Gradient Field Induced by the Discriminator

6.6.3.1. Setting

6.6.3.2. Qualitative Analysis of the Gradient Field

6.7. Conclusion and Discussion

V. Conclusion

7. Overview of our Work

7.1. Summary of Contributions

7.2. Reproducibility

7.3. Acknowledgements

7.4. Other Works

8. Perspectives

8.1. Unfinished Projects

8.1.1. Adaptive Stochasticity for Video Prediction

8.1.2. GAN Improvements via the GAN(TK)² Framework

8.1.2.1. New Discriminator Architectures

8.1.2.2. New NTK-Based GAN Model

8.2. Future Directions

8.2.1. Temporal Data and Text

8.2.2. Spatiotemporal Prediction

8.2.2.1. Merging the Video and PDE-Based Models

8.2.2.2. Scaling Models

8.2.2.3. Relaxing the Constancy of the Content Variable

8.2.3. NTKs for the Analysis of Generative Models

8.2.3.1. Analysis of GANs’ Generators

8.2.3.2. Analysis of Other Models

Appendix

A. Supplementary Material of Chapter 3

A.1. Training Details

A.1.1. Input Preprocessing

A.1.2. SVM Training

A.1.3. Hyperparameters

A.2. Univariate Time Series

A.3. Multivariate Time Series

B. Supplementary Material of Chapter 4

B.1. Evidence Lower Bound

B.2. Datasets Details

B.2.1. Data Representation

B.2.2. Stochastic Moving MNIST

B.2.3. KTH Action Dataset (KTH)

B.2.4. Human3.6M

B.2.5. BAIR Robot Pushing Dataset (BAIR)

B.3. Training Details

B.3.1. Architecture

B.3.2. Optimization

B.4. Influence of the Euler step size

B.5. Pendulum Experiment

B.6. Additional Samples

B.6.1. Stochastic Moving MNIST

B.6.2. KTH

B.6.3. Human3.6M

B.6.4. BAIR

B.6.5. Oversampling

B.6.6. Content Swap

B.6.7. Interpolation in the Latent Space

C. Supplementary Material of Chapter 5

C.1. Proofs

C.1.1. Resolution of the Heat Equation

C.1.2. Heat Equation with Advection Term

C.2. Accessing Time Derivatives of w and Deriving a Feasible Weaker Constraint

C.3. Of Spatiotemporal Disentanglement

C.3.1. Separation of Variables Preserves the Mutual Information of w and y through Time

C.3.1.1. Invertible Flow of an Ordinary Differential Equation (ODE)

C.3.1.2. Preservation of Mutual Information by Invertible Mappings

C.3.2. Ensuring Disentanglement at any Time

C.4. Datasets

C.4.1. WaveEq and WaveEq-100

C.4.2. Sea Surface Temperature (SST)

C.4.3. Moving MNIST

C.4.4. 3D Warehouse Chairs

C.4.5. TaxiBJ

C.5. Training Details

C.5.1. Baselines

C.5.2. Model Specifications

C.5.2.1. Architecture

C.5.3. Optimization

C.5.4. Prediction Offset for SST

C.6. Additional Results and Samples

C.6.1. Preliminary Results on KTH

C.6.2. Modeling SST with Separation of Variables

C.6.3. Additional Samples

C.6.3.1. WaveEq

C.6.3.2. SST

C.6.3.3. Moving MNIST

C.6.3.4. 3D Warehouse Chairs

D. Supplementary Material of Chapter 6

D.1. Proofs of Theoretical Results and Additional Results

D.1.1. Recall of Assumptions

D.1.2. On the Solutions of Equation (6.9)

D.1.3. Differentiability of Infinite-Width Networks and their NTKs

D.1.3.1. K Preserves Kernel Differentiability

D.1.3.2. Differentiability of Conjugate Kernels, NTKs and Discriminators

D.1.4. Dynamics of the Generated Distribution

D.1.5. Optimality in Concave Setting

D.1.5.1. Assumptions

D.1.5.2. Optimality Result

D.1.6. Case Studies of Discriminator Dynamics

D.1.6.1. Preliminaries

D.1.6.2. LSGAN

D.1.6.3. IPMs

D.1.6.4. Vanilla GAN

D.2. Discussions and Remarks

D.2.1. From Finite to Infinite-Width Networks

D.2.2. Loss of the Generator and its Gradient

D.2.3. Differentiability of the Bias-Free ReLU Kernel

D.2.4. Integral Operator and Instance Noise

D.2.5. Positive Definite NTKs

D.3. Experimental Details

D.3.1. Datasets

D.3.2. Parameters

Bibliography