Get Complete Project Material File(s) Now! »

Chapter 3 Problem Definition and Objectives

Given the timeliness model and the system model described in Chapter 2, our objective is to maximize the aggregate benefit accrued by the arrival of all application messages at their destination hosts. Thus, the problem that we are addressing in this thesis can be described as Maximize Pnk=1 Bk(tk), where tk is the time at which message Mk arrives at its destination host.

BPA is a packet scheduling algorithm. Thus, packets constitute the “input” to the algorithm. Therefore, if packets have benefit functions, that will enable the algorithm to reason about scheduling packets such that it will maximize the aggregate message-level benefit. This requires us to translate benefit functions of messages into benefit functions for packets.

Since the packets of a message do not have any precedence relations, the packets can be transmitted in any order from the source host of the message. Furthermore, the benefit of a message is accrued only when all packets of the message arrive at the destination host and are reassembled. Thus, the packets of a message can simply inherit the benefit function of its parent message.

Thus, BPA reasons that by scheduling packets at outgoing queues of end-hosts and at the switch such that the aggregate packet benefit is maximized, the algorithm can maximize the aggregate message benefit.

We now formalize the objective of BPA as follows

Let A µ P denote the set of packets in the outgoing packet queue at an end-host or at the switch at a time t. (Note that the switch has one outgoing queue per host.) Let fi µ (m £ n) denote the number of packets in the set A. Let S(A) denote all possible sequences of packets of the set A, and let ¾ 2 S(A) denotes one of the possible packet sequence—or a packet schedule—of the packets in A. Let ¾(i) denotes the packet occupying the ith position in the schedule ¾. Then, the objective of BPA is to:

Thus, the objective of BPA is to determine a packet schedule that will maximize the aggregate packet benefit in terms of the sum of the individual benefits of each packet that is accrued when the packet arrives at its destination host.

This optimization problem is equivalent to the non-preemptive task scheduling problem ad-dressed by Chen and Muhlethaler in [27]. In [27], Chen and Muhlethaler show that their task scheduling problem is NP-hard by establishing the equivalence of their problem to the scheduling problem addressed in [29]. Thus, the problem that we are addressing in this thesis is NP-hard.

In [27], Chen and Muhlethaler present a heuristic algorithm to solve this problem. As dis-cussed in Chapter 1, we refer to this algorithm as CMA, for convenience. Given, n tasks (i.e., fi = n packets in our case), the complexity of CMA is shown to be O(n3) in [27]. Through simulation studies, Chen and Muhlethaler show that CMA yields an aggregate benefit that is generally close to the maximum possible—or the optimal—aggregate benefit. To determine the optimal aggregate benefit, they use Held and Karp’s dynamic programming solution for the same problem presented in [30]. Held and Karp’s solution has an exponential complexity of O(n2n).

We believe that O(n3) is too high for a scheduling algorithm, especially for an on-line algo-rithm such as a packet scheduling algorithm. This is because, higher the cost of the algorithm, higher will be the scheduling overhead. Thus, when used in the switch, the algorithm will “slow down” the scheduling of packets at the switch and thereby reduce the utilization of the network segments. Furthermore, a faster scheduling algorithm will require less buffer space for storing the outgoing packet queues. If the scheduler is slow, packets will quickly queue-up over a short period of time.

Thus, in designing BPA, our objective is twofold: (1) compute scheduling decisions faster than CMA’s O(n3) time, and (2) compute scheduling decisions that will yield an aggregate packet benefit that is as close as possible to that of CMA, if not better

Overview of CMA

The CMA algorithm directly exploits the precedence-relation property that Chen and Muh-lethaler presents in [27].

Chen and Muhlethaler uses the precedence-relation property to define a precedence relation between tasks. A task i is said to precede a task j at a time t, denoted as i `t j, if i;j(t) ‚ 0.

Now, if the precedence relationship between all task pairs can be determined, the task that gains the maximum number of precedences is a very good candidate to be scheduled at time t. Thus, Chen and Muhlethaler reason that this maximum-precedence task can be determined at each scheduling instant and thereby a good scheduling decision can be made. This intuition is exploited by the CMA algorithm.

CMA constructs a precedence matrix at each scheduling instant to store the precedence relations. Each row and column of this matrix represents a task. Furthermore, each entry of the matrix contains a boolean value that indicates whether there exists a precedence relation between the tasks represented on the row and on the corresponding column. Thus, given a precedence matrix I(1; 2; : : : ; n)(1; 2; : : : ; n) that represents the precedence relationship of n tasks at a scheduling instant t, I(i; j) is true, if and only if i `t j; otherwise I(i; j) is f alse.

Table 4.1 illustrates this concept.

Once the precedence matrix is constructed, CMA computes the schedule by examining the rows of the matrix. For each row of the matrix, the algorithm counts the number of true values that are present in the columns of the row. The number of true values in a row i indicates the number of tasks over which task i has a precedence relation. Once the “true counts” are determined for rows, CMA simply selects the task that has the largest true count, inserts the task into the schedule, and marks the row of the task as “examined” in the matrix (so that subsequent examinations can ignore the row). The algorithm repeats this process until all tasks are inserted into the schedule

1 Introduction

1.1 Research on Real-Time Ethernet

1.2 Motivation

1.3 Overview of the Thesis

1.4 Organization of the Thesis

2 The Message and System Model

2.1 The Message Model

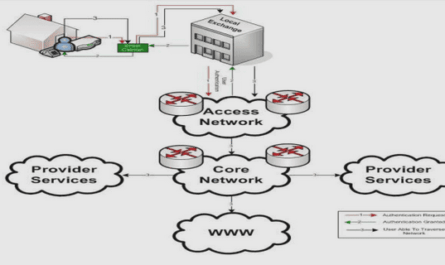

2.2 The System Model

3 Problem Definition and Objectives

4 Overview of CMA

5 The BPA Algorithm: Heuristics and Rationale

5.1 Sort Packets In Decreasing Order Of Their “Return of Investments”

5.2 Move Infeasible Packets Toward The End of The Schedule

5.3 Maximize Local Aggregate Benefit As Much As Possible

5.4 Computational Complexity of BPA

6 Simulation Study I: Performance in a Single Processor Environment

6.1 Experiment Setup and Parameters

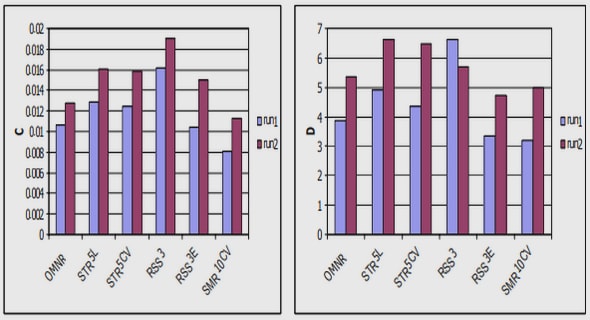

6.2 Normalized Average Performance

6.3 Performance Under Increasing Task Laxity

6.4 Performance Under Heterogeneous Benefit Functions

6.5 Performance Comparison with DASA under Rectangular Benefit Function

7 Simulation Study II: Performance in a Switched Network Environment

7.1 Experiment Setup and Parameters

7.2 Normalized Average Performance

7.3 Performance Under Increasing Arrival Density

7.4 Performance Under Heterogeneous Benefit Functions

7.5 Performance Comparison with DASA under Rectangular Benefit Function

7.6 The Importance Factor: Performance Comparison under Rectangular Benefit Function

8 Implementation of BPA

8.1 Prototype Setup

8.2 Implementation of Packet Scheduling Algorithms in Linux Kernel

8.3 Packet Switching Inside Linux Kernel Using The Linux Bridge Program

9 Test Bench Setup

9.1 Experimental Parameters for Generating Message Traffic

9.2 Emulating Four End-Hosts With a Single PC

9.3 Generating Heavy Message Traffic

9.4 Benefit Functions and Benefit Assignment

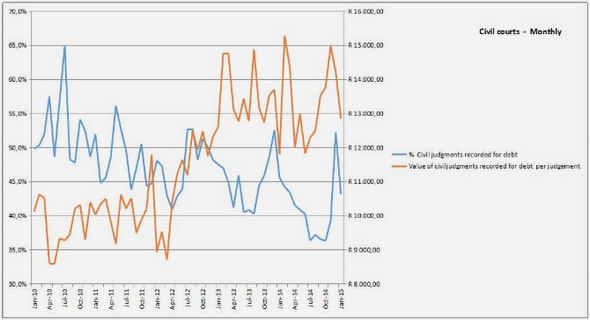

10 Performance Measurement and Analysis

11 Timeliness Feasibility Conditions

11.1 The Soft Real-time Packet Transmission Design Problem

11.2 A Solution Using BPA: Construction of Timeliness Feasibility Conditions

12 Conclusions, Limitations and Future Work

12.1 Limitations

12.2 Future Work

GET THE COMPLETE PROJECT