
Arbitrage-Free neural networks

Criticisms of neural networks from a regulatory perspective

However, these new tools raise concerns for the regulator. To catalogue them, we base our analysis on the publication of the Banque de France (see Dupont, Fliche, and Yang (2020)).

The Banque de France has identified four criteria for evaluating artificial intelligence algorithms:

• Data management, including data protection and feature engineering.

• The performance of the algorithm, including for example the accuracy of the model but also other, potentially regulatory, constraints.

• The stability of the algorithm over time if data are not stationary (or involve a new dataset) and if the model is retrained.

• The explainability of the algorithm, which means understanding its behavior, the meaning of its (hyper)parameters, or even having evidence of its (dys)function.

In the remainder of this report, we will mainly deal with the last three criteria. Neural networks have difficulty meeting these conditions. Their weights are randomly initialized, which affects the stability of the networks after training. Moreover, their rich parameterization makes overfitting frequent, so it is often necessary to regularize the learning criterion, as we will do in chapter 2.

A least-squares convergence criterion can be misleading if the underlying data are themselves arbitrable. It is therefore necessary to include other performance criteria, such as non-arbitrability, and we must be able to assess compliance with these conditions a posteriori.

The choice of the neural network architecture is not subject to a universal rule and the parameters of the network have no trivial interpretation. It is thus essential to build a procedure for selecting hyperparameters to motivate the design of the network.

All of these criticisms of deep learning arouse some skepticism among regulators, as shown in figure 1.2.1 in section 10 of Dupont, Fliche, and Yang (2020). According to this diagram, neural networks cannot offer a good compromise between the complexity of the model (in the sense of understanding its behavior) and its performance. An efficient neural network, from the point of view of the regulator, is often abstruse (the so-called black box phenomenon) and calls for particularly rigorous model governance.

Hard constraints versus soft constraints

The accuracy of an algorithm is not an end in itself, as underlined in the previous subsection. For instance, when interpolating European option prices, arbitrage constraints must be respected in order to meet the obligations of internal bank controls. These arbitrage constraints translate into shape constraints, i.e. constraints involving the partial derivatives of the interpolator (in particular with respect to the strike or the maturity).

A neural network should in theory (see section 6 of Schmidt-Hieber (2020)) see its partial derivatives converge to the sensitivities of the price function. In practice, however, reaching a local minimum of the risk function does not ensure suitable precision for the derivatives of the network. In addition, the data can themselves violate these shape constraints, and it is then necessary to regularize the learning of the network so as not to overfit.

There are then two approaches to impose these constraints:

• The hard constraints guarantee the respect of these conditions whatever the input data. This means no-arbitrage, in the interpolation context, whatever the option coordinates.

• Soft constraints penalize the risk function if the no-arbitrage conditions are violated for some observations of the training set. This approach results in penalizations in the optimization routine that can impose non-arbitrability on the training set but offer no guarantee on new observations for example in the testing set.

The hard constraints are reflected, in the case of a neural network, by the construction of an architecture that integrates these shape constraints (see Dugas, Bengio, Bélisle, Nadeau, and Garcia (2009)). However, the architecture induces a class of functions that is too restrictive with respect to the price function to be approximated. The universal approximation theorem is then no longer valid. In addition, there is no architecture ensuring non-arbitrability in cases where the condition is more complex (see in particular the butterfly condition for the interpolation of implied volatility).

Soft constraints, on the other hand, provide the flexibility necessary to penalize complex conditions. The idea of penalization is to ensure compliance with the no-arbitrage conditions at the nodes of the learning grid. The bet made with soft constraints is that the regularity of the neural network will make these conditions hold in the neighborhoods of the learning set nodes as well.
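The soft-constraint idea can be sketched as follows. This is a minimal illustration, not the penalization used in chapter 2: it assumes call prices evaluated on a rectangular (maturity, strike) grid and replaces the exact partial derivatives of the network with finite differences. The function names, the three conditions retained (calendar spread, monotonicity and convexity in strike) and the `weight` parameter are assumptions made for the example.

```python
import numpy as np

def soft_arbitrage_penalty(prices, maturities, strikes):
    """Sum of the violations of three shape constraints on a grid.

    prices[i, j] is the model call price at maturities[i], strikes[j].
    """
    # Calendar spread: the price must be non-decreasing in maturity.
    d_T = np.diff(prices, axis=0) / np.diff(maturities)[:, None]
    # Monotonicity: the call price must be non-increasing in strike.
    d_K = np.diff(prices, axis=1) / np.diff(strikes)[None, :]
    # Butterfly: convexity in strike, i.e. slopes increasing with the strike.
    d_KK = np.diff(d_K, axis=1)
    calendar = np.maximum(-d_T, 0.0).sum()
    monotone = np.maximum(d_K, 0.0).sum()
    butterfly = np.maximum(-d_KK, 0.0).sum()
    return calendar + monotone + butterfly

def penalized_loss(model_prices, market_prices, maturities, strikes, weight=10.0):
    """Least-squares risk plus the weighted soft-constraint penalty."""
    mse = np.mean((model_prices - market_prices) ** 2)
    return mse + weight * soft_arbitrage_penalty(model_prices, maturities, strikes)

# Toy surface: convex and decreasing in strike, increasing in maturity.
T = np.array([0.5, 1.0, 2.0])
K = np.array([80.0, 90.0, 100.0, 110.0, 120.0])
clean = np.maximum(100.0 - K, 0.0)[None, :] + T[:, None]
bumped = clean.copy()
bumped[1, 2] += 8.0  # one corrupted quote breaks convexity and the calendar condition
```

The penalty vanishes on `clean` and is strictly positive on `bumped`. It only checks the grid nodes it is given, which is precisely the limitation of soft constraints discussed above.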

Other non-arbitrable surrogate models

Other statistical learning models can combine interpolation with respect for arbitrage constraints. Among these models we can cite Gaussian processes, which assume that all the prices are jointly distributed as a Gaussian vector. The option locations, through variables such as maturity or strike, are involved in the calculation of the correlation between prices. This is the purpose of the kernel, which assigns a stronger correlation to prices whose coordinates are close. A Gaussian calculation (see subsection 15.2.1 of Murphy (2012b)) then makes it possible to interpolate the price of new options by exploiting their correlation with the observations of a training grid. Cousin, Maatouk, and Rullière (2016a) propose a method to build Gaussian processes that enforce linear constraints in a hard way. The idea is to simulate the trajectories of a truncated Gaussian process, and more precisely to reject samples of the process that do not respect, for example, monotonicity constraints on a discrete grid. Other linear (equality) constraints are modeled by tuning the distribution of the Gaussian process (see page 14 of Cousin, Maatouk, and Rullière (2016a)).
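As an illustration of the Gaussian calculation mentioned above, the following sketch computes the posterior mean of a zero-mean Gaussian process at new option coordinates. The squared-exponential kernel, its hyperparameters and the function names are assumptions chosen for the example, and no arbitrage constraint is enforced here.

```python
import numpy as np

def rbf_kernel(a, b, length_scale=1.0, variance=1.0):
    """Squared-exponential kernel: closer coordinates get a higher covariance."""
    sq_dist = ((a[:, None, :] - b[None, :, :]) ** 2).sum(axis=-1)
    return variance * np.exp(-0.5 * sq_dist / length_scale**2)

def gp_posterior_mean(x_train, y_train, x_new, noise=1e-8, **kernel_args):
    """Conditional mean of the prices at x_new given the training prices."""
    k_train = rbf_kernel(x_train, x_train, **kernel_args)
    k_train += noise * np.eye(len(x_train))  # jitter / observation noise
    k_cross = rbf_kernel(x_new, x_train, **kernel_args)
    return k_cross @ np.linalg.solve(k_train, y_train)

# Coordinates are (maturity, moneyness); prices are illustrative numbers.
x_train = np.array([[0.5, 0.9], [0.5, 1.1], [1.0, 0.9], [1.0, 1.1]])
y_train = np.array([0.12, 0.03, 0.15, 0.06])
x_new = np.array([[0.75, 1.0]])
interpolated = gp_posterior_mean(x_train, y_train, x_new, length_scale=0.3)
```

With a negligible noise level, the posterior mean reproduces the training prices at the training nodes and interpolates between them elsewhere.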

Aubin-Frankowski and Szabo (2020) model shape constraints for reproducing kernel Hilbert space based estimators. The kernel $k$ in this case plays the role of a change of variable which is supposed to facilitate the learning of an estimator $f$. The authors tighten their shape constraints of the type $Df(x_m) + b \ge 0$, applied at every node of the training set, by adding a constant $\eta$ to obtain $Df(x_m) + b \ge \eta$. This constant is calibrated according to the regularity of the kernel so as to guarantee that the shape constraints hold in a neighborhood of every point $x_m$ of the training set, typically:

$$\eta = \sup_{m \in \{1, \dots, M\},\; u \in B_{\|\cdot\|}(0,1)} \left\| Dk(x_m, \cdot) - Dk(x_m + u, \cdot) \right\|$$

However, this procedure is not compatible with non-linear constraints.

Dealing with empirical data

Intraday data shortcomings

Various data providers (Bloomberg, ICAP, etc.) continuously inform market operators of the valuations of various financial assets or products. But these data cannot be used as is and require additional post-processing. If the data are intraday (i.e. coming from the current market session), then it is very likely that the information is incomplete. Some financial contracts, for example, are less liquid and are not traded during the day, or only in negligible volume.

In addition, the values provided by the data supplier may be inconsistent or appear erroneous to an expert eye. This can be explained by operational reasons (human error, low trading volume) or by temporally inconsistent observations (some data have not been updated). We propose in chapter 3 a way to correct these data flows.

The data that we will process have in common that they are structured in the form of a tensor. By tensor we mean here a data structure indexed along several dimensions. For example, the implied volatility of European options is organized as a tensor of order 2 (a matrix) indexed by maturity and strike. Indexing plays an important role since it defines the notion of proximity between the elements of the tensor. In this report we will only deal with tensors of order 2 at most, but tensors of higher order do occur: the implied volatilities of swaptions, for instance, are arranged in a cube. In addition, the indexation may vary over time, in the sense that the maturities observed one day may differ the next day in value and in number. We therefore cannot simply identify an element by the rank of its indices.

Outlier detection

The definition of an outlier is controversial since its detection requires expert advice. Following Hawkins (1980), an outlier is an observation that differs so significantly from the other observations in the same dataset as to raise doubt about its validity. An outlier can take the form of corrupted, incomplete or even atypical data in terms of shape.

There are several approaches to formalize the detection of abnormal observations, including:

• An outlier is an observation significantly distant from the others according to a metric calculated from the sample features. A clustering analysis, based for example on the K nearest neighbors method (Knorr and Ng 1998), can then be applied to the dataset, with a decision rule based on this distance. One way to build the distance is to perform a coordinate change through a PCA (see section 3.3 of Aggarwal (2017)) or to use a kernel (see section 3.4.3 of Aggarwal (2017)).

• An outlier can be modeled according to a statistical model from which a notion of likelihood derives. An atypical observation then results in a low level of likelihood. Among the statistical models we can cite the Kalman filter (cf. Ting, Theodorou, and Schaal (2007) and Liu, Shah, and Jiang (2004)) or hidden Markov models (section 9.3.3 of Aggarwal (2017)).

• An outlier is an observation that does not respect the latent structure of the sample. In this perspective, a low-dimensional representation (with a PCA or an autoencoder according to Aggarwal (2017)) enables us to single out an abnormal observation by its reconstruction error.
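The third approach, based on the reconstruction error, can be sketched with a PCA. This is an illustration under simplifying assumptions: the function names, the synthetic one-factor dataset and the "mean plus three standard deviations" decision rule are choices made for the example, not the procedure of chapter 3.

```python
import numpy as np

def pca_reconstruction_error(data, n_components):
    """Distance between each observation and its projection on the
    subspace spanned by the first principal components."""
    centered = data - data.mean(axis=0)
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    basis = vt[:n_components]                  # principal directions (rows)
    reconstructed = centered @ basis.T @ basis
    return np.linalg.norm(centered - reconstructed, axis=1)

def flag_outliers(data, n_components, n_sigmas=3.0):
    """Flag observations whose reconstruction error is unusually large."""
    errors = pca_reconstruction_error(data, n_components)
    threshold = errors.mean() + n_sigmas * errors.std()
    return errors > threshold

# Synthetic dataset driven by one latent factor, plus one corrupted observation.
rng = np.random.default_rng(0)
factor = rng.normal(size=(200, 1))
loadings = np.array([[1.0, 0.8, 0.6, 0.4, 0.2]])
data = factor @ loadings + 0.01 * rng.normal(size=(200, 5))
data[17] += np.array([0.0, 0.0, 3.0, -3.0, 0.0])  # breaks the latent structure
errors = pca_reconstruction_error(data, n_components=1)
flags = flag_outliers(data, n_components=1)
```

The corrupted observation is the one that the one-dimensional representation reconstructs worst. An autoencoder would play the same role with a non-linear encoder and decoder.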

Completing observations with variable indexation

Matrix completion is a branch of statistics that aims to impute missing values. Completion assumes that the incomplete dataset has a low rank structure, which is justified if the variables in the dataset are strongly related or if the observations are structured around categories. In this part, we will denote by $D \in \mathbb{R}^{m \times n}$ a matrix representing the dataset with $m$ observations (individuals) and $n$ variables. The low rank hypothesis means that we can factorize the matrix $D$ by (truncated) singular value decomposition in the form $U \Sigma V$ with $U \in \mathbb{R}^{m \times r}$, $\Sigma \in \mathbb{R}^{r \times r}$ a diagonal matrix and $V \in \mathbb{R}^{r \times n}$. The lower the rank $r$ and the stronger the connections between the variables/observations, the better the imputation of the missing values will be. If we introduce missing values into $D$, then an SVD is no longer possible and it is necessary to estimate $(U, V, \Sigma)$ from the remaining values of the dataset. There are several methods to estimate $(U, V, \Sigma)$, such as the softImpute algorithm (Mazumder, Hastie, and Tibshirani 2010) or Alternating Least Squares (Hastie, Mazumder, Lee, and Zadeh 2015).
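The estimation of a low rank factorization from incomplete data can be sketched by iterating a truncated SVD on the filled-in matrix. This is written in the spirit of softImpute but is a simplified variant, not the published algorithm: the hard rank cap, the optional `shrinkage` of the singular values, the function name and the toy rank-one dataset are assumptions made for the example.

```python
import numpy as np

def impute_low_rank(data, observed, rank, shrinkage=0.0, n_iter=200):
    """Iteratively fill the missing entries with a rank-limited SVD estimate.

    data: matrix whose unobserved entries may hold any value (e.g. NaN).
    observed: boolean mask, True where the entry of data is known.
    """
    estimate = np.zeros(data.shape)
    for _ in range(n_iter):
        # Keep the observed entries, fill the gaps with the current estimate.
        filled = np.where(observed, data, estimate)
        u, s, vt = np.linalg.svd(filled, full_matrices=False)
        # Truncate to the target rank and optionally shrink the singular values.
        s = np.maximum(s[:rank] - shrinkage, 0.0)
        estimate = (u[:, :rank] * s) @ vt[:rank]
    return estimate

# Rank-one dataset with three hidden entries.
truth = np.outer(np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0]),
                 np.array([1.0, 0.5, 2.0, 1.5, 1.0]))
observed = np.ones(truth.shape, dtype=bool)
observed[[0, 2, 5], [0, 3, 4]] = False
data = np.where(observed, truth, np.nan)
completed = impute_low_rank(data, observed, rank=1)
```

On this toy example the hidden entries are recovered because every row and column still contains observed values and the rank is known. Real datasets require choosing the rank and the shrinkage, for instance by cross-validation.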

This whole branch of statistical learning is driven by the recommendation systems of digital companies and online marketing. The observations in the dataset represent the users of a platform and the features are ratings of the products sold. Completion then allows the platform owner to infer the missing ratings in order to offer customers products that would interest them. This application gave rise to a competition (the Netflix Prize) whose dataset now serves as a reference in the literature (cf. Nguyen, Kim, and Shim (2019)). Literature reviews are available on the subject, such as Li, Huang, So, and Zhao (2019) and Nguyen, Kim, and Shim (2019).

However, the completion literature does not apply directly to the implied volatility data studied in chapter 3:

• The columns are not identified with variables since an option can expire one day and leave its place in the column to an option having another residual maturity.

• The matrix structure does not encode information about the indexing of variables. Yet, this information is necessary to define a notion of proximity between the variables.

• Only the last row, corresponding to the current quotation day, contains missing values. As the other rows have been observed in the past, the number of missing values is small and localized, unlike in user experience datasets (à la Netflix).

Chapter 3 aims at reconciling the imputation of missing values with the management of data whose indexing varies from day to day. A low rank structure will still be learned from the past by learning a decoder, but the encoder will be implicit in order to deal with the variable number of entries. Finally, we will only consider connections between the variables (implied volatilities) and not between the trading days. This is similar to what the literature calls an item-based approach.

XVA compression

The 2008 turmoil

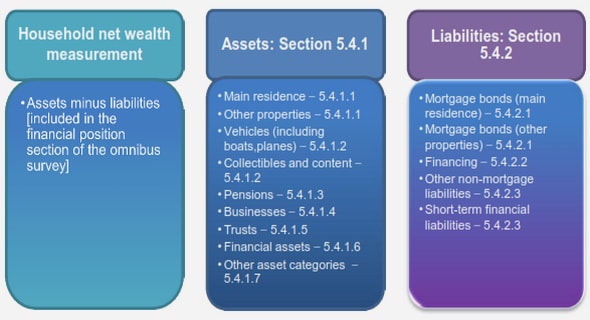

The acronym XVA designates a family of counterparty risk adjustments; more literally, the X is a placeholder letter preceding Value Adjustment. These risk premiums fall into three main categories: default risk premiums, premiums reflecting the cost of funding collateral, and premiums for financing regulatory capital.

These adjustments aim in part to model two types of losses:

• Depreciation on the market of a financial contract that is only justified by a loss of confidence in the solvency of the counterparty. The loss in value is therefore not linked to the intrinsic characteristics of the contract.

• The default of the counterparty who fails to honor its payments. Contract payments maturing after the default date are then modeled as payoffs to be hedged as part of counterparty risk.

The 2008 financial crisis materialized the losses caused by counterparty risk. First of all, the number of defaults increased, in particular in the US mortgage loan sector, impacting the financial markets through securitization. A contagion effect caused credit spreads to rise in all financial markets and put downward pressure on derivatives for fear of numerous bankruptcies. In addition, bank financing was weakened: collateral was only accepted in return for larger haircuts, and interbank rates soared, with a spread of 3.5% against the OIS. With the value of their derivative portfolios depreciated and a need for more funding to face risks, banks had to sell some of their assets (stocks, bonds) to restore their capital ratio and confidence. However, these fire sales accelerated the depreciation of assets on the financial markets, thus creating a negative spiral. This crisis thus has all the characteristics that the XVAs are meant to address: valuing the losses linked to defaults, anticipating the costs of financing collateral, and reinforcing the shareholders' equity of banks so that they can weather periods of financial stress.

To formalize these concepts, it is necessary to differentiate between the intrinsic value $U$ of a contract and its market value $V$, which is supposed to capture confidence in the counterparty. The difference $U - V$ values this counterparty risk and refers to the CVA and the FVA, which will be described later. Banks therefore list these quantities in their balance sheet so as to refine the valuation of their products and set up hedging strategies.

In this section, we will briefly present, for each counterparty adjustment, the calculation methodologies proposed by the regulator. Then we will discuss a more quantitative formulation of these counterparty adjustments in order to highlight the market incompleteness behind each XVA.

Pricing default risk

The CVA, or Credit Valuation Adjustment, is a risk premium for the default of the counterparty. The bank records a loss if a counterparty bound by a contract with the bank defaults. This loss is measured by the client's exposure to the bank if it is negative (i.e. the counterparty owes the bank money).

There are several approaches to assess a so-called accounting CVA, that is to say the CVA that most faithfully reflects the losses caused by the default risk. We will limit ourselves to the unilateral case, that is to say the situation where only the default of the counterparty is valued.

The first approach, called semi-replication (Burgard and Kjaer 2011), is better suited to resolution by PDE. The term semi refers to the imperfect nature of the hedge: the recovery process $R$ is unknown and there are no hedging instruments on the market allowing a perfect replication of the counterparty's default. Other methodologies have been proposed to value XVAs. We can notably cite Albanese, Crépey, and Chataigner (2018), who model the bank's complete balance sheet. This makes it possible to calculate the FVA for a group of counterparties and to benefit from mutual funding of the collateral. In addition, the KVA is no longer considered a liability of the bank but is integrated into its equity, which means that the KVA does not enter the P&L associated with the counterparty.

The formula for CVA below is demonstrated in section 9.4.1 of Green (2015) in this semi-replication setting. In chapter 4, the optimized metric will be calculated according to this formula (1.1).

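$$\mathrm{CVA} = (1 - R) \int_t^T \lambda^C_s \, e^{-\int_t^s (r_u + \lambda^C_u)\, du} \, \mathbb{E}\left[(V_s - X_s)^+\right] ds \qquad (1.1)$$

with $X_s$ the amount of collateral posted on the date $s$, $T$ the maturity of the portfolio and $\lambda^C$ the credit spread of the counterparty.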

In reality, this formula results from a simplifying assumption: the independence between the exposure $V - X$ and the default risk of the counterparty, measured by $\lambda^C$. Thus, in Albanese, Crépey, and Chataigner (2018), the default risk is modeled more generally according to the following formula:

$$\mathrm{CVA} = (1 - R)\, \mathbb{E}\left[(V_\tau - X_\tau)^+ \, \mathbb{1}_{\tau \le T}\right] \qquad (1.2)$$

where $\tau$ designates the default time of the counterparty.
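Formula (1.1) lends itself to a simple numerical approximation once exposure paths have been simulated. The sketch below is a Riemann sum under strong simplifying assumptions that are not in the text: constant short rate and credit spread, valuation date 0, and exposure paths supplied as arrays. The function and argument names are hypothetical.

```python
import numpy as np

def cva_independent(times, mtm_paths, collateral_paths,
                    hazard_rate, short_rate, recovery):
    """Riemann-sum approximation of formula (1.1) with constant rates.

    times: increasing grid 0 < t_1 < ... < t_n = T, in years.
    mtm_paths, collateral_paths: arrays of shape (n_paths, n_times).
    """
    # Expected positive exposure E[(V_s - X_s)^+] at each date.
    exposure = np.maximum(mtm_paths - collateral_paths, 0.0).mean(axis=0)
    dt = np.diff(times, prepend=0.0)
    # Discounting at the short rate plus survival of the counterparty.
    discount = np.exp(-(short_rate + hazard_rate) * times)
    integrand = hazard_rate * discount * exposure
    return (1.0 - recovery) * np.sum(integrand * dt)

# Sanity check on a flat, uncollateralized exposure of 1 over 5 years.
times = np.linspace(0.001, 5.0, 5000)
mtm = np.ones((10, times.size))
collateral = np.zeros_like(mtm)
cva = cva_independent(times, mtm, collateral,
                      hazard_rate=0.02, short_rate=0.01, recovery=0.4)
# Closed form for a constant exposure: (1-R) * lam/(r+lam) * (1 - exp(-(r+lam)T)).
closed_form = 0.6 * 0.02 / 0.03 * (1.0 - np.exp(-0.03 * 5.0))
```

The sum matches the closed form of the constant-exposure case up to the discretization error. Formula (1.2) would instead require simulating the default time jointly with the exposure.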

It is interesting to dwell on the operational execution of this kind of formula. The clean value $V$ can be stored as a tensor of order 3 called the mark-to-market cube. It contains the values of all trades, for all exposure trajectories and all dates until maturity. The challenge for the bank's credit desk is to value a mark-to-market cube that is consistent with the trading desk's pricing methodology. The trading desk, which values and hedges trades independently of creditworthiness, must agree with the credit desk on the environment it uses (market data, calibration of model parameters) so that the counterparty risk hedging strategy of the credit desk matches the market risk hedge of the trading desk. Indeed, exposure plays a central role in the valuation of XVAs and affects their sensitivities. In the case of the CVA, the exposure affects the hedge with respect to non-credit instruments, such as yield curves. This rigorous and automated exchange of information is referred to in chapter 4 as desk segregation. It is moreover implicitly encouraged by the regulator which, in its revision of the CVA calculations (see section 4 of Basel Committee on Banking Supervision (2015)) or with FRTB-CVA, asks that the hedge be included in the calculation of capital charges. In addition, regulatory CVAs must use parameters (drifts, probabilities of default, etc.) calibrated according to the risk-neutral approach (pages 2-3 of Basel Committee on Banking Supervision (2015)). The regulator therefore tends to align its regulatory calculations with accounting valuations (IFRS 13, still according to page 2 of the same report), which are themselves aligned with the valuations of the trading desk.

Finally, the mark-to-market cube is very costly to compute and the CVA is not linear with respect to the portfolio. If the bank concludes a new trade with the counterparty, then the mark-to-market cube must in principle be revalued in its entirety to obtain the new amount of the CVA. This drawback will be limited in chapter 4 thanks to incremental computation.
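The non-linearity, and the reason why storing path-wise values helps, can be illustrated on a toy example. The sketch assumes that the aggregated mark-to-market of the existing portfolio has been stored per scenario and date, and that the new trade is valued on the same scenarios. This is one reading of the store-and-reuse idea named in the outline of chapter 4, not its actual implementation, and the function name is hypothetical.

```python
import numpy as np

def expected_exposure(stored_portfolio_mtm, new_trade_mtm=None):
    """Expected positive exposure per date, shape (n_times,).

    stored_portfolio_mtm: aggregated MtM of the existing trades,
        shape (n_paths, n_times), computed once and kept in memory.
    new_trade_mtm: MtM of a candidate trade on the SAME scenarios.
    """
    total = stored_portfolio_mtm
    if new_trade_mtm is not None:
        # Only the new trade is simulated; existing trades are not revalued.
        total = stored_portfolio_mtm + new_trade_mtm
    return np.maximum(total, 0.0).mean(axis=0)

# Two scenarios, two dates; the new trade perfectly offsets the portfolio.
stored = np.array([[2.0, -1.0],
                   [-2.0, 1.0]])
offsetting_trade = -stored
ee_portfolio = expected_exposure(stored)
ee_trade_alone = expected_exposure(offsetting_trade)
ee_combined = expected_exposure(stored, offsetting_trade)
```

Here the combined exposure is zero although each leg taken alone has a positive exposure, so the exposure of the new portfolio cannot be obtained by adding stand-alone figures. It can however be obtained by adding path-wise values to the stored cube, which avoids revaluing the existing trades.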

Pricing collateral funding costs

We argued in the previous subsection that a perfect replication of the counterparty's default is impossible. Therefore, exposure must be minimized in order to reduce the residual risk. Standard market practice uses collateral as a pledge against the value of the portfolio. If the bank's exposure is negative, then it must post collateral; otherwise it is up to the counterparty to post collateral to the bank. This exchange of collateral also occurs between the bank and the entities with which the bank hedges (back-to-back hedge), except that the bank posts collateral with these entities if it receives collateral from the counterparty.

Generally the collateral is a good quality financial security such as a sovereign bond of good rating or simply cash. If the guarantee is deemed unreliable then a discount (haircut) is applied to the value of the collateral and it is necessary to mobilize a larger notional amount as a pledge.

There are several categories of collateral, characterized by their method of calculation:

• The variation margin, which is indexed to the amount of MtM at each refresh date. The amount posted between two margin calls depends on the amount of collateral already posted and on various clauses such as minimum transfer amounts and trigger thresholds (see chapter 6 of Gregory (2015) for more details). The variation margin can be reused as collateral in other transactions by the collateral recipient before the transaction expires. This means that the cost of borrowing collateral for the hedge is zero if we can post the collateral provided by the counterparty. On the other hand, if the counterparty does not post collateral, because it has not signed a credit support annex, then the bank must borrow this collateral on the markets at a cost generally greater than its remuneration (often equal to the OIS). The Funding Value Adjustment (FVA; for its formulation see chapter 9 of Green (2015)) corresponds to the cost of financing the variation margin if the counterparty posts none or not enough.

• The initial margin is a minimum safety cushion aimed at covering the risk of the MtM slipping between two margin calls. For each margin call, the amount of collateral is based on a portfolio risk measure: Value-at-Risk, or a sensitivity-based variant of VaR in the case of the SIMM (Standard Initial Margin Model). The cost of financing this initial margin gives rise to the Margin Value Adjustment (or MVA; see chapter 16 of Gregory (2015) or the appendix of Crépey, Hoskinson, and Saadeddine (2021) for its formulation). The initial margin cannot in general be reused by its recipient for other transactions, that is to say it is segregated.

Funding costs also arise in other settings, such as with clearing houses (see Armenti and Crépey (2017)). The regulator encourages the clearing of derivative transactions or even makes it mandatory (see section 9.3 of Gregory (2015)). Clearing houses (CCPs) will not be included in the study of chapter 4.

Pricing capital funding costs

Along with these accounting entries, the regulator wanted to impose capital reserves on banks (the first pillar of the Basel regulation) in order to prevent the appearance of Ponzi schemes. The principle of such a scheme is to enter into new contracts in order to finance the losses of a pre-existing portfolio. However, capital reserves are expensive to raise from shareholders, since they generally require a much higher return than, for example, the funding cost on the interbank market. Setting up a trade is then more expensive, which prevents the construction of a Ponzi scheme. Regulatory capital reserves are primarily conservative and are not intended to cover a loss exactly but rather to provide a safety cushion in anticipation of turmoil.

Among the capital reserves we can mention:

• The counterparty credit risk capital charge that covers the default of the bank’s customers.

• The CVA capital charge which provisions for depreciations of the value of the bank’s assets if the creditworthiness of its debtors deteriorates.

• The risk-weighted assets (RWA), which serve as a reference for provisioning the bank's regulatory capital (Basel ratio). The whole of the bank's balance sheet and all risk classes are captured by the RWA.

Details of the formulas for each capital reserve can be found in Basel Committee on Banking Supervision (2015) (or chapter 8 of Gregory (2015)), but we can identify some similarities. First of all, the regulator offers at least two calculation methodologies to adapt to the computation capacities of each institution. Among these approaches there is always a parametric method, simpler to calculate but more conservative. Another, more complex approach allows the use of internal bank valuation models, which often requires simulating exposure as for other XVAs.

In addition, all these quantities designate amounts of capital to be reserved, but it is their funding cost that is charged to the customer. The capital value adjustment (KVA) aims to estimate this cost and then add it to the clean value $V$ of the contract alongside the CVA and the FVA. However, the capital reserve involved in the KVA is not necessarily based on one of the previous regulatory capital reserves but can alternatively be based on economic capital. Economic capital is a risk measure of how much the bank must hold in reserve to weather a crisis. This is generally a Value-at-Risk or an expected shortfall calculated on the bank's losses over a one-year horizon. Denoting by $h$ the hurdle rate of shareholders on capital invested in the bank, Crépey, Hoskinson, and Saadeddine (2021) formulate the KVA in their appendix as:

$$\mathrm{KVA} = \mathbb{E}\left[\int_0^T h\, e^{-hs} \max\left(\mathrm{EC}_s, \mathrm{KVA}_s\right) ds\right] \qquad (1.3)$$

The KVA thus captures all losses arising from counterparty risk that cannot be hedged. Like the CVA, the KVA and other capital reserves could be optimized. However, the computational complexity of the KVA makes its compression inaccessible for the moment. To our knowledge, only the MVA (in a simplistic form), in Kondratyev and Giorgidze (2017), has been the subject of a compression procedure in the literature.

Table of contents :

1 Introduction

1.1 Statistical learning in finance

1.1.1 The quest for approximations

1.1.2 Solving open problems

1.1.3 The emergence of neural networks

1.2 Arbitrage-free neural networks

1.2.1 Criticisms of neural networks from a regulatory perspective

1.2.2 Hard constraints versus soft constraints

1.2.3 Other non-arbitrable surrogate models

1.3 Dealing with empirical data

1.3.1 Intraday data shortcomings

1.3.2 Outlier detection

1.3.3 Completing observations with variable indexation

1.4 XVA compression

1.4.1 The 2008 turmoil

1.4.2 Pricing default risk

1.4.3 Pricing collateral funding costs

1.4.4 Pricing capital funding costs

2 Arbitrage-Free neural network

2.1 Introduction

2.2 Problem Statement

2.3 Shape Preserving Neural Networks

2.3.1 Hard Constraints Approach

2.3.2 Soft Constraints Approach

2.3.3 Learning problems

2.4 DAX Numerical Experiments

2.4.1 Experimental Design

2.4.2 Numerical Results Without Dupire Penalization

2.4.3 Numerical Results With Dupire Penalization

2.4.4 Robustness

Numerical Stability Through Recalibration

Monte Carlo Backtesting Repricing Error

2.5 Gaussian process regression for learning arbitrage-free price surfaces

2.5.1 Imposing the no-arbitrage conditions

2.5.2 Hyper-parameter learning

2.5.3 The most probable response surface and measurement noises

2.5.4 Sampling finite dimensional Gaussian processes under shape constraints

2.5.5 Local volatility

2.6 Arbitrage-free SVI

2.6.1 SVI parameterizations

2.6.2 No-arbitrage conditions on SVI parameters

2.6.3 Slice parameter interpolation

2.7 SPX Numerical Experiments

2.7.1 Experimental design

2.7.2 Calibration results

2.7.3 In-sample and out-of-sample calibration errors

2.7.4 Backtesting results

2.8 Conclusion

3 Nowcasting network

3.1 Introduction

3.2 Problems

3.2.1 Compression

3.2.2 Completion

3.2.3 Outlier Detection

3.3 Models

3.3.1 The Convolutional (Autoencoder) Approach

3.3.2 The Linear Projection Approach

3.3.3 The Functional Approach

3.3.4 Synthesis

3.4 Experimental Methodology and Setting

3.4.1 Performance Metrics

3.4.2 Introduction to the Case Studies

3.4.3 Discussion of the Arbitrage Issue

3.5 Repo Curves

3.5.1 Functional Network Architecture

3.5.2 Numerical Results

3.6 Equity Derivative Implied Volatility Surfaces

3.6.1 Compression

3.6.2 Outlier Detection and Correction

3.6.3 Completion

3.7 At-the-Money Swaption Surfaces

3.7.1 Network Architectures

3.7.2 Numerical Results

3.8 Conclusions and Perspectives

4 XVA compression

4.1 Introduction

4.1.1 Outline and Contributions

4.2 CVA Compression Modeling

4.2.1 Credit Valuation Adjustment

4.2.2 Fitness Criterion

4.2.3 Genetic Optimization Algorithm

4.3 Acceleration Techniques

4.3.1 MtM Store-and-Reuse Approach for Trade Incremental XVA Computations

4.3.2 Parallelization of the Genetic Algorithm

4.4 Case Study

4.4.1 New Deal Parameterization

4.4.2 Design of the Genetic Algorithm

4.4.3 Results in the Case of Payer Portfolio Without Penalization

4.4.4 Results in the Case of Payer Portfolio With Penalization

4.4.5 Results in the Case of a Hybrid Portfolio With Penalization

4.5 Conclusion