Get Complete Project Material File(s) Now! »

Some perspectives on invariant shape analysis

In this section we present a small example about image classification. The goal of image analysis is to recognize and classify patterns. These patterns may have been transformed by some set of transformations, such as translations, rotations or scaling and are more generally affected by the conditions in which the images to be analysed have been taken. The challenge is then to find a representation that is not hampered by the variations of the same pattern from image to image. Making a representation invariant to a set of transformations is a challenging task, even in the simpler case of translations. Indeed, it is sufficient to observe that the set of functions obtained by translating a single pattern in various directions typically spans a vector space of high dimension, meaning that the shapes (here the functions) that we would like to put in the same category do not even live in a common low-dimensional subspace.

A possible approach is to study representations that leave invariant some group of transfor-mations. For instance, the Fourier transform has translation invariant properties, since its modulus is translation invariant. However it is unstable to small deformations at high fre-quencies. Wavelet transforms provide a workaround. Scattering representations proposed by Mallat [18] compute translation invariant representations by cascading wavelet trans-forms and modulus pooling operators. They bring improvements for audio classification [1] and for image classification [3].

This kind of careful mathematical study has to be repeated for any kind of transformations and in image analysis the pattern transformations we would like to take into account are numerous and not easy to formalize, since they may come from changes in the perspective or in illumination, from partial occlusions, from object deformations, etc.

Coupling spectral clustering with a change of representation in a reproducing kernel Hilbert space may lead to a generic and rather radical alternative. Instead of deriving the repre-sentation of a pattern from a mathematical study, the idea is to learn the representation itself from examples of patterns that sample in a sufficiently dense way the orbits of the set of transformations at stake.

116 Chapter 4. Spectral Clustering

Developing a convincing toolbox for unsupervised invariant shape analysis is beyond the scope of this study and it will be carried on elsewhere. Our purpose in this section is just to hint that spectral clustering, coupled with some preliminary change of representation in a reproducing kernel Hilbert space, can be a winning tool to bring down the representation of classes to a low-dimensional space. We suggest with a small example how this approach may lead to learn transformation invariant representations from datasets containing small successive transformations of a same pattern.

We briefly describe this approach to get a hint of its potential. We consider two images (figure 4.5) and we create our patterns by translating a subwindow of a given size in each image repeatedly, using a translation vector smaller than the subwindow size. In such a way we create a sample consisting of two classes of connected images, as shown in figure 4.6. This notion of translation cannot be grasped easily by a mathematical definition, because we do not translate a function (here the image defined as a function sampled on a rectangular grid of pixels), but a window of observation. Hence in this case the translation depends on the image content and it may not be easy to model in any realistic situation.

We present on an example a successful scenario using first a change of representation in a reproducing kernel Hilbert space to better separate the two classes, and then spectral clustering to shrink each class to a tight blob. We show that the so called kernel trick, introduced to better separate classes in the supervised learning framework addressed by support vector machines (SVMs), also works in an unsupervised context. In this setting, we do not separate classes using hyperplanes, since we do not know class labels which would be necessary to run a SVM, but, instead, we use spectral clustering to finishing the work.

Proceeding as already done in the previous sections, we estimate K with the kernel Kc and we apply the classification algorithm to Kcm. As in the framework of SVMs, we use the kernel trick to embed the sample in a higher-dimensional space in which the geometry of the classes is simpler. However, as already said, we do not use hyperplanes but spectral clustering to separate the two classes.

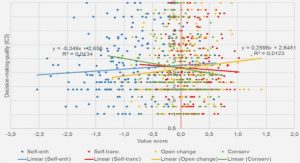

In figure 4.7 we compare the representation of the images in the initial space and in the space H2.

On the left we present the projection of the sample onto the space spanned by the first two largest eigenvectors of the matrix of inner products between images hXi, Xji. On the right we plot the projection onto the space spanned by the two largest eigenvectors of the matrix of inner products k2(Xi, Xj) in H2.

We observe that in the first representation the two classes intersect each other while in the second one, after the change of representation, they are already separated.

To conclude, figure 4.8 shows the final representation. Here the data points are projected onto the space spanned by the two largest eigenvectors of the matrix Kc(Xi, Xj)m.

Figure 4.7: On the left the projection onto the space spanned by the two largest eigen-vectors of hXi, Xji, on the right the projection onto the space spanned by the two largest eigenvectors of k2(Xi, Xj).

Figure 4.8: The projection onto space spanned by the two largest eigenvectors of Kc(Xi, Xj)m.

Density Estimation

In this appendix we consider the problem of estimating the density function of an unknown probability distribution and we present an estimator, based on the results obtained in chapter 1, that is more robust than the classical kernel density estimator.

Let P ∈ M1+(Rd) be an unknown probability measure and let us assume it has a density with respect to the Lebesgue measure. The goal of density estimation is to construct an estimator of the density function f from an i.i.d. sample X1, . . . , Xn ∈ Rd distributed according to P. To achieve this aim, we need to introduce the concept of approximate identities (also called approximations to the identity). Let ϕ be a function defined on Rd and let > 0. We assume that ϕ is a non-negative measurable function such that Let us denote by Lp(Rd) the space of p-integrable functions with respect to the Lebesgue measure.

We now consider as approximate identities the family of Gaussian distributions πα ∼ N (0, α−1I) of means 0 and covariance matrix α−1I. By the properties of approximate converges to f as α grows to infinity. However, since P is unknown, also f ∗πα is unknown. A possible approach to estimate f ∗ πα is to replace the distribution P by the empirical measure 1 Pn δX .

We can then remark that if in Proposition 1.17 on page 35 and consequently in Proposi-tion 1.31 on page 47 we restrict the statement to any θ ∈ Θ, where Θ ⊂ Rd is some subset of Rd, then, in the definition of κ (equation (1.10) on page 26), we can also restrict the supremum to the same subset. Using this variant of Proposition 1.

Let us remark that we can see the influence of the dimension in this result by looking at equivalents when α goes to +∞. In this case (under suitable regularity assumptions)

Kernel density estimation

Kernel density estimation is a non-parametric method of estimating the probability density function of a continuous random variable which consists in associating to each point of a given dataset a kernel function centered on that point. The kernel density estimator is defined as the sum of the centered kernel functions, scaled by a parameter h, called bandwidth, to have unit area. To be more precise, given an i.i.d.

Table of contents :

1 The Gram Matrix

1.1 Introduction

1.2 Estimate of the Gram matrix

1.3 Gram operators in Hilbert spaces

1.4 Symmetric random matrices

1.5 Covariance matrix

1.6 Empirical results

2 The Empirical Gram Matrix

2.1 Introduction

2.2 Estimate of the Gram matrix via the empirical Gram matrix

3 Principal Component Analysis

3.1 Introduction

3.2 Estimate of the eigenvalues

3.3 Standard Principal Component Analysis

3.4 Robust Principal Component Analysis

4 Spectral Clustering

4.1 Introduction

4.2 Description of an ideal algorithm

4.3 Estimation of the ideal algorithm by an empirical one

4.4 Empirical results