Application of simulation

Introduction

In this chapter, we demonstrate the use of the auction simulation from Chapter 3 for evaluating fraud detection algorithms. This requires adding agents with fraudulent behaviours into the simulation to generate synthetic data that contains both normal and fraudulent transactions. The synthetic data is then used to evaluate different fraud detection algorithms.

In this chapter, we implement three types of fraudulent agents to generate three synthetic datasets, used to evaluate three different fraud detection algorithms. The first type of fraud agent is described by Trevathan and Read [2]. The agent exhibits shilling behaviour, where its goal is to increase the final selling price of auctions for a collaborating seller. The second and third fraud agents are variations of the first which attempt to hide some of their fraudulent characteristics to avoid detection. Synthetic datasets containing these shilling behaviours are used to test a shill detection algorithm, also described in [2], and two of its variations.

This chapter is organised as follows. Section 4.2 describes background for shilling fraud. Sections 4.3 and 4.4 describe the implementation of three fraud agents and fraud detection algorithms. Sections 4.5 and 4.6 compare the detection results between different fraud behaviours and detection strategies. Section 4.7 concludes the chapter.

Background

This section provides information that may be helpful in understanding subsequent sections. Section 4.2.1 describes the generation and quality of synthetic data used in this chapter. Section 4.2.2 describes the characteristics of shilling fraud.

Generating synthetic data that contains fraud

As noted previously, this chapter builds on the validated agent-based simulation from Chapter 3. The agent-based simulation is extended here by implementing additional agents, described in Section 4.3. By adding these agents to the simulation, the resulting synthetic data will contain fraudulent bids and auctions. Table 4.1 shows the bids and auction information for three example auctions, containing the type of information that is generated by the simulation. Users A and B in Table 4.1 are examples of these fraudulent agents.

Although the agents implemented here commit only competitive shilling, they demonstrate the usefulness of the simulation in generating synthetic data that contains fraudulent behaviour. In Chapter 6, we will see that other types of fraud, including collusive fraud, can be simulated.

Shilling Behaviour

Shilling is defined as any activity where a seller, or an associate, places bids in auctions held by the seller. Competitive shilling occurs when a user submits bids to a collaborating seller’s auction to elevate the final auction price, without the intention of winning. The legitimate bidder is cheated by paying more than they otherwise would when winning the item. The goal is to increase the price the legitimate winner would otherwise pay, to increase seller profit [11]. For example, suppose there were only two bidders in an auction: one legitimate (L), and one fraudulent (F), with bidding proceeding like so: L:$10, F:$11, L:$12, F:$13, L:$14. If there are no additional bids, L, the legitimate bidder, pays an additional $4 due to bids by F.
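The arithmetic of the two-bidder example can be checked with a short sketch. The function names are illustrative, and the counterfactual rests on a stated assumption: with no shill present, the legitimate bidder's opening bid would have stood unchallenged as the final price.

```python
# Bid history from the example: (bidder, amount in dollars).
bids = [("L", 10), ("F", 11), ("L", 12), ("F", 13), ("L", 14)]


def final_price(bid_history):
    """Return the highest bid, treated here as the price the winner pays."""
    return max(amount for _, amount in bid_history)


def shill_overpayment(bid_history, shill):
    """Extra amount the winner pays because of the shill's bids.

    Assumption: there is a single legitimate bidder, so without the shill
    the first legitimate bid would have been the final price.
    """
    legit_bids = [amount for bidder, amount in bid_history if bidder != shill]
    price_without_shill = legit_bids[0]
    return final_price(bid_history) - price_without_shill


extra = shill_overpayment(bids, shill="F")
print(f"L pays an additional ${extra}")
```

With several legitimate bidders the counterfactual price is no longer simply the first bid, so this sketch applies only to the two-bidder case described above.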

Users engaging in competitive shilling often display a set of behaviours that distinguish them from normal users [5, 17]. These behaviours allow them to function effectively as shills. These behaviours are:

Shills only attempt to elevate prices of auctions held by collaborating sellers, since only those auctions affect the collaborating sellers’ profit.

Shills have a higher bid frequency since they must bid after legitimate bids in order to stimulate bidding to maximise the final price.

Shills will win a low number of auctions, since winning will mean the seller bought their own or their collaborator’s item, and they will be forced to pay the auction fee.

Shills tend to bid soon after a legitimate bid to maximise the time legitimate users have to submit a new bid, to both maximise final price and reduce the chance of winning.

Shills bid the minimum amount possible to not discourage bidding by legitimate bidders.

Shills tend to have bids early in the auction to reduce the chances of accidentally winning.

The prevalence of shilling behaviour in commercial auction sites is unknown. Commercial auction sites do not publicly disclose information about identified fraudulent accounts. Even with complete transaction histories, it is difficult to prove that an account is engaging in shilling. Shilling behaviours, and fraudulent behaviours in general, are not binary, but are continuous; each account may display different degrees of each type of suspicious behaviour. The shill agents described in Section 4.3 below represent three possible instances of shilling behaviour: the simple shilling agent in Section 4.3.1, and two agents that attempt to hide some of their fraudulent characteristics in Sections 4.3.2 and 4.3.3. Other agent variations are possible. For example, they may attempt to hide different aspects of their behaviour, such as bidding after two legitimate bids; or incorporating different legitimate actions, such as intentionally losing in other auctions to hide their true collaborator.

Fraud agents

In this section, we describe three shilling fraud agents and their implementation. The first is an implementation of the simple shill agent described by Trevathan et al. [12]. The second and third agent types build on the first, where the agents attempt to hide part of their fraudulent behaviour, as one would expect to see in real life.

Simple shill agent

The simple shill agent has five directives to maximise its potential as a shill. The directives are consistent with the shill characteristics listed in Section 4.2.2. Informally, they are:

Bid the minimum amount required.

Bid quickly after a rival bid.

Do not bid too close to the auction’s end.

Bid until the target price has been reached.

Only bid when the current bidding volume is high.

The behaviour of the shill is tuned by three parameters, which govern the thresholds at which the simple shill agent ceases bidding and in turn control the amount of risk the agent takes while inflating the price of an auction. The first sets the percentage of the auction’s duration after which the agent stops bidding, and the second sets the price threshold above which it stops bidding. The third affects when the bidding frequency is considered “high” by controlling how far back into the auction history bids are included during counting. Increasing the value of these parameters tends to increase the final price, but also the risk of accidentally winning the auction.
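The directives and thresholds can be read as a single decision rule. The following is a minimal sketch of that reading, not the pseudocode from Appendix A. All names (`ShillParams`, `should_shill_bid`, `min_recent_bids`) are hypothetical, the target price is passed in as an absolute value because its definition is not given here, and the test for "high" bidding volume (at least a fixed number of bids inside the look-back window) is an assumption.

```python
from dataclasses import dataclass


@dataclass
class ShillParams:
    # Hypothetical names; the source identifies these three parameters by role only.
    max_completion: float     # stop once this fraction of the auction has elapsed
    target_price: float       # stop once the current price exceeds this value
    volume_window: float      # fraction of the elapsed history to look back over
    min_recent_bids: int = 1  # assumed cut-off for "high" bidding volume


def should_shill_bid(now, start, end, current_price, bid_times,
                     last_bidder_is_shill, params):
    """Return True if the shill should respond to the latest bid."""
    if last_bidder_is_shill:
        return False  # only bid in response to a rival bid
    completion = (now - start) / (end - start)
    if completion > params.max_completion:
        return False  # too close to the auction's end
    if current_price > params.target_price:
        return False  # target price has been reached
    # Count bids inside the look-back window to judge bidding volume.
    window_start = now - params.volume_window * (now - start)
    recent = [t for t in bid_times if window_start <= t <= now]
    return len(recent) >= params.min_recent_bids


def shill_bid_amount(current_price, min_increment):
    """Bid the minimum amount required."""
    return current_price + min_increment


params = ShillParams(max_completion=0.95, target_price=100.0, volume_window=0.85)
print(should_shill_bid(50, 0, 100, 20.0, [45], False, params))
```

Raising `max_completion` or `target_price` keeps the agent bidding for longer, which matches the trade-off described above: a higher final price at a greater risk of winning.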

In the simulation, each shill bidding agent is paired with a seller agent, and the shill bidding agent only bids in auctions held by the corresponding seller. Normal agents, modelling legitimate users, may participate in these fraudulent auctions according to their behaviour.

Table 4.1 gives an example auction where a simple shill agent, User A, is working with User 8. In the first auction where User 8 is the seller, User A bids immediately after a legitimate bid, with the minimum required bid: $1.00 over the previous bid. Section A.3 in Appendix A shows the pseudocode for the simple shill bidding agent.

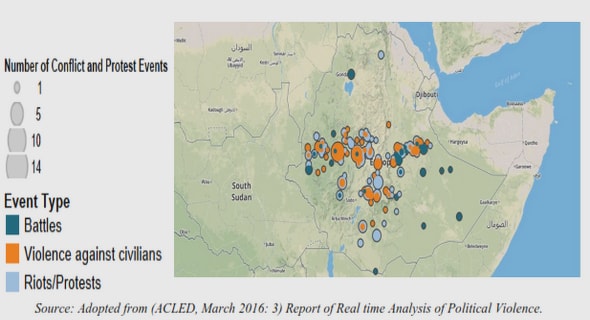

Late-start shill agent

The behaviour of the simple shill bidding agent is to bid immediately after the auction has been submitted by their counterpart. However, in TradeMe, users do not tend to bid immediately after the auctions begin. 43.0% of auctions in TradeMe have the first bid occur when there is less than 24 hours before termination, and 78% of auctions have the first bid occur when there are fewer than 5 days remaining. This can be seen in Figure 4.1, which shows the distribution of times at which the first bid appears. Even though bidding immediately after the auction begins may give other users more opportunities to bid, agents that bid this way are suspicious. Therefore, agent behaviour is changed so that they do not begin bidding unless there are already one or more bids in an auction, or if the auction is more than two days from termination. User B in Table 4.1 is an example of this shill agent, where the agent waits for a legitimate bid to appear before bidding.

Legitimate-bidding shill agent

To raise the final selling price, the shill agent only needs to bid in auctions held by the collaborating seller. However, this makes the agent easy to identify since the agent only bids in auctions held by a single seller, in addition to losing most of the auctions it participates in. This agent attempts to conceal this by bidding in low-priced legitimate auctions held by other sellers. For every shill auction that the agent participates in, it also participates in a legitimate auction.

Agent shilling performance

We evaluated the effectiveness of each agent type as a shill using two criteria: ability to raise the final selling price of an auction; and the probability of losing the auction, as intended, to a legitimate bidder. An effective shill will have high values for both. Table 4.2 shows the performance of the simple shill agent within the simulation, using the parameter values (0.95, 0.85, 0.85). On average, the simple shill agent successfully loses 75.3% of auctions, and raises the final price by 14.1%. The performance of the other agent types, late-start shill and legitimate-bidding shill, is similar to that of the simple shill.

We also report the influence of changing the three parameters on performance. Results are calculated from 20 synthetic datasets, each containing 100 shill bidding agents, which make up roughly 1% of the bidding agents in the simulation. Figures 4.2 to 4.4 show the average performance of 1000 agents as the value of each parameter ranges over 0.80 to 0.99 at 0.01 intervals. The error bars represent one standard deviation. One parameter has a strong influence on the observed win rate and price increase: as expected, as it increases, the effect on the final price increases, at the cost of reducing the probability of losing the auction. A second has a similar effect, though weaker. For the remaining parameter, there is no obvious effect. Table 4.3 shows the correlation between the choice of parameters and the price increase, and the fraction of auctions lost, respectively. The correlation values are consistent with those observed from Figures 4.2 to 4.4.

The performance of the shill bidding agents in our simulation is significantly different to that reported in [12], where the agent using similar parameters increased final price by 15% and successfully lost in only 30% of auctions. The difference in performance is most likely due to the difference in the behaviour of the non-fraud agents in our simulation.

Table 4.2 Shill bidding agent performance.

Fraud detection algorithm

In this section we describe the shill score algorithm by Trevathan et al. [2]. We also describe and explain the two changes that we propose to improve detection performance.

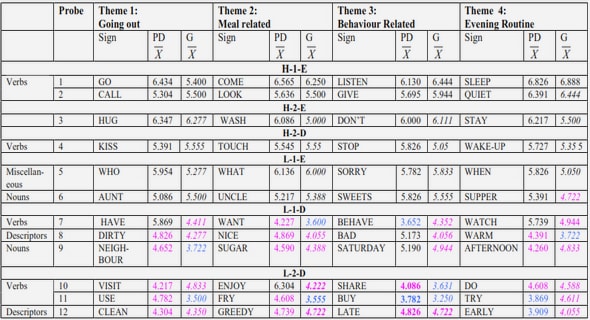

Shill score

Trevathan et al. proposed the shill score algorithm (SSA) [2] to detect competitive shilling behaviour. SSA uses the bidding history of users to calculate a score which reflects the likelihood that a user is acting as a shill in a given auction. SSA works by identifying users that exhibit the six shill behaviours listed in Section 4.2.2. Trevathan et al. define six ratings that each target one shill behaviour. For definitions of the six ratings, refer to [2]. The six ratings are combined in a weighted average according to Equation 4.1. The six weights were defined to be (9, 2, 5, 2, 2, 2) by the authors.
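As an illustration of the weighted average, the sketch below combines six ratings using the weights (9, 2, 5, 2, 2, 2). It does not reproduce Equation 4.1 or the rating definitions from [2]. The function name is hypothetical, the ratings are assumed to lie in [0, 1] and to be supplied in the same order as the weights, and the example values are invented for demonstration.

```python
# Weights reported for SSA; they sum to 22.
SSA_WEIGHTS = (9, 2, 5, 2, 2, 2)


def weighted_shill_score(ratings, weights=SSA_WEIGHTS):
    """Combine per-behaviour ratings into one score by weighted average.

    Assumption: each rating is in [0, 1], so the result is also in [0, 1].
    """
    if len(ratings) != len(weights):
        raise ValueError("expected one rating per weight")
    return sum(w * r for w, r in zip(weights, ratings)) / sum(weights)


# Invented ratings for one bidder, in the same order as the weights.
example_ratings = (1.0, 0.5, 0.0, 0.5, 0.5, 0.5)
score = weighted_shill_score(example_ratings)
print(round(score, 3))
```

Passing a different `weights` tuple, for example six equal values, gives a direct way to compare weighting schemes on the same ratings.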

Since the algorithm was designed and tested on small samples of synthetic and commercial auction data that may not represent the range of behaviours by users in TradeMe, modifications to the algorithm may improve detection results.

Shill score with Bayesian average

Users that have participated in only one auction may receive very high scores from SSA and be incorrectly labelled as shills. SSA fails to take into account that bidding patterns observed from a small number of auctions are not sufficient evidence to classify an agent as a shill. This increases the number of false positives. To avoid this problem, we take into account the amount of evidence that exists for an agent’s score by recalculating the SSA score using $x' = \frac{C\,m + n\,x}{C + n}$, where $m$ is the average score from SSA for legitimate users, $C$ is a user-defined constant, $n$ is the number of auctions the agent participated in, $x$ is the score from SSA, and $x'$ is the new score.

The value of $C$ is defined to be the average number of auctions each legitimate user participates in. In our real dataset, $C = 1.8$. The effect is that if an agent participates in very few auctions, the resulting score $x'$ will be much closer to the group average. If the number of auctions the agent participates in is large, the resulting score will be similar to the original $x$ from SSA.
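The shrinkage effect can be sketched with the standard Bayesian-average form, in which the adjusted score is (C × group average + n × x) / (C + n). This is a sketch under that assumed form. The function and argument names are hypothetical, and the group average of 0.3 and raw score of 0.9 are invented for illustration; only C = 1.8 comes from the text.

```python
def bayesian_adjusted_score(x, n, group_mean, c=1.8):
    """Shrink a raw SSA score x towards the group mean when n is small.

    x          -- raw score from SSA
    n          -- number of auctions the agent participated in
    group_mean -- average SSA score for legitimate users
    c          -- constant controlling the strength of the shrinkage
    """
    return (c * group_mean + n * x) / (c + n)


# Invented values: a raw score of 0.9 against a group average of 0.3.
for n_auctions in (1, 5, 50):
    adjusted = bayesian_adjusted_score(0.9, n_auctions, group_mean=0.3)
    print(n_auctions, round(adjusted, 3))
```

With one auction the adjusted score sits well below the raw score, and it approaches the raw score as the number of auctions grows.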

Shill score with alternative weights

The weights for SSA were designed under different assumptions that do not hold here. For example, shill agents are assumed in SSA to have low accidental win rates, and the corresponding rating is given a very high weight of 9 out of a total of 22. This assumption is observed to be untrue in the real dataset and in the synthetic data, as shown in Table 4.2. Therefore, using a different set of weights to combine the six ratings may produce better detection performance.

We test whether combining ratings with equal weights will give better performance. Using equal weights is unlikely to be optimal, but allows us to test whether altering weights is worth further investigation. Below, we will see that the weights have a large effect on performance, and in Chapter 5, we use supervised techniques to search for an optimal set of weights for SSA.

Detection accuracy for each agent type

In this section we compare the ability of SSA to detect three different fraud agent types. For each agent type, we used 20 simulation runs, each with 100 fraud agents and 10,000 legitimate agents. The detection algorithm used was SSA.

Metric definitions

Some commonly used metrics for measuring classification accuracy are defined below. True positives (TP) is the number of instances correctly labelled as fraudulent or suspicious. False positives (FP) is the number of instances incorrectly labelled as fraudulent or suspicious. True negatives (TN) is the number of instances correctly labelled as normal. False negatives (FN) is the number of instances incorrectly labelled as normal.

The true positive rate, TPR = TP / (TP + FN), is also known as recall, and the false positive rate is FPR = FP / (FP + TN). The receiver operating characteristic (ROC) curve is a method for illustrating the performance of a binary classifier, and shows the trade-off between FPR and TPR. It is constructed by varying the discrimination threshold and plotting the corresponding TPR and FPR values.
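A minimal sketch of how ROC points can be produced from scores follows, using the standard definitions TPR = TP / (TP + FN) and FPR = FP / (FP + TN). The function names and the toy scores and labels are invented for illustration; an instance is flagged when its score is strictly above the threshold.

```python
def confusion_counts(scores, is_fraud, threshold):
    """Count TP, FP, TN, FN when flagging every score above the threshold."""
    tp = fp = tn = fn = 0
    for score, fraud in zip(scores, is_fraud):
        flagged = score > threshold
        if flagged and fraud:
            tp += 1
        elif flagged and not fraud:
            fp += 1
        elif not flagged and fraud:
            fn += 1
        else:
            tn += 1
    return tp, fp, tn, fn


def rates(tp, fp, tn, fn):
    """Return (TPR, FPR), using 0.0 when a denominator is zero."""
    tpr = tp / (tp + fn) if (tp + fn) else 0.0
    fpr = fp / (fp + tn) if (fp + tn) else 0.0
    return tpr, fpr


def roc_points(scores, is_fraud, thresholds):
    """Return one (FPR, TPR) point per threshold."""
    points = []
    for threshold in thresholds:
        tpr, fpr = rates(*confusion_counts(scores, is_fraud, threshold))
        points.append((fpr, tpr))
    return points


# Invented toy data: five agents, three of them fraudulent.
scores = [0.9, 0.8, 0.4, 0.3, 0.2]
is_fraud = [True, True, False, True, False]
print(roc_points(scores, is_fraud, thresholds=[0.5, 0.25]))
```

Lowering the threshold from 0.5 to 0.25 catches the remaining fraudulent agent but also flags a legitimate one, which is the trade-off the ROC curve visualises.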

Accuracy

We examine the effect of choosing different score thresholds on the detection of three different shill agent types. Agents are identified as shills if they have a score that is above a defined threshold. Figure 4.5 shows the trade-off that exists: as we decrease the score threshold, both the number of shill agents we correctly identify (true positive), and the number of legitimate agents we incorrectly identify as shills (false positive) increase.

Late-start shills are consistently more difficult to detect, compared to the simple shill

Contents

1 Introduction

1.1 Problem definition

1.2 Proposed solution

1.3 Scope and objectives

1.4 Contributions

1.5 Thesis outline

2 Related work

2.1 Fraud types

2.2 Methods for predicting or detecting fraud

3 Auction simulation

3.1 Introduction

3.2 Background and related work

3.3 Auction Model

3.4 Auction simulation overview

3.5 Commercial dataset

3.6 Evaluation

3.7 Conclusion

4 Application of simulation

4.1 Introduction

4.2 Background

4.3 Fraud agents

4.4 Fraud detection algorithm

4.5 Detection accuracy for each agent type

4.6 Performance comparison of three fraud detection algorithms

4.7 Conclusion

5 Supervised fraud detection

5.1 Introduction

5.2 Improving detection performance using supervised learning methods

5.3 Synthetic data evaluation: methods and results

5.4 Applying models to commercial data

5.5 Conclusion

6 Unsupervised fraud detection

6.1 Introduction

6.2 Background

6.3 SPAN algorithm

6.4 Phase 1: Calculating anomaly scores

6.5 Phase 2: Propagating anomaly scores

6.6 Fraudulent behaviours

6.7 Evaluation results

6.8 Conclusion

7 Case study: TradeMe

7.1 Introduction

7.2 Results

7.3 Conclusion

8 Conclusion and future work

8.1 Summary

8.2 Contributions

8.3 Limitations

8.4 Future work

Fraud Detection in Online Auctions